AI Search Engines vs. Google: A New Paradigm in Content Visibility

Comparison review of AI search engines vs Google for content visibility—what changes for Generative Engine Optimization, citations, and AI-first SEO strategy.

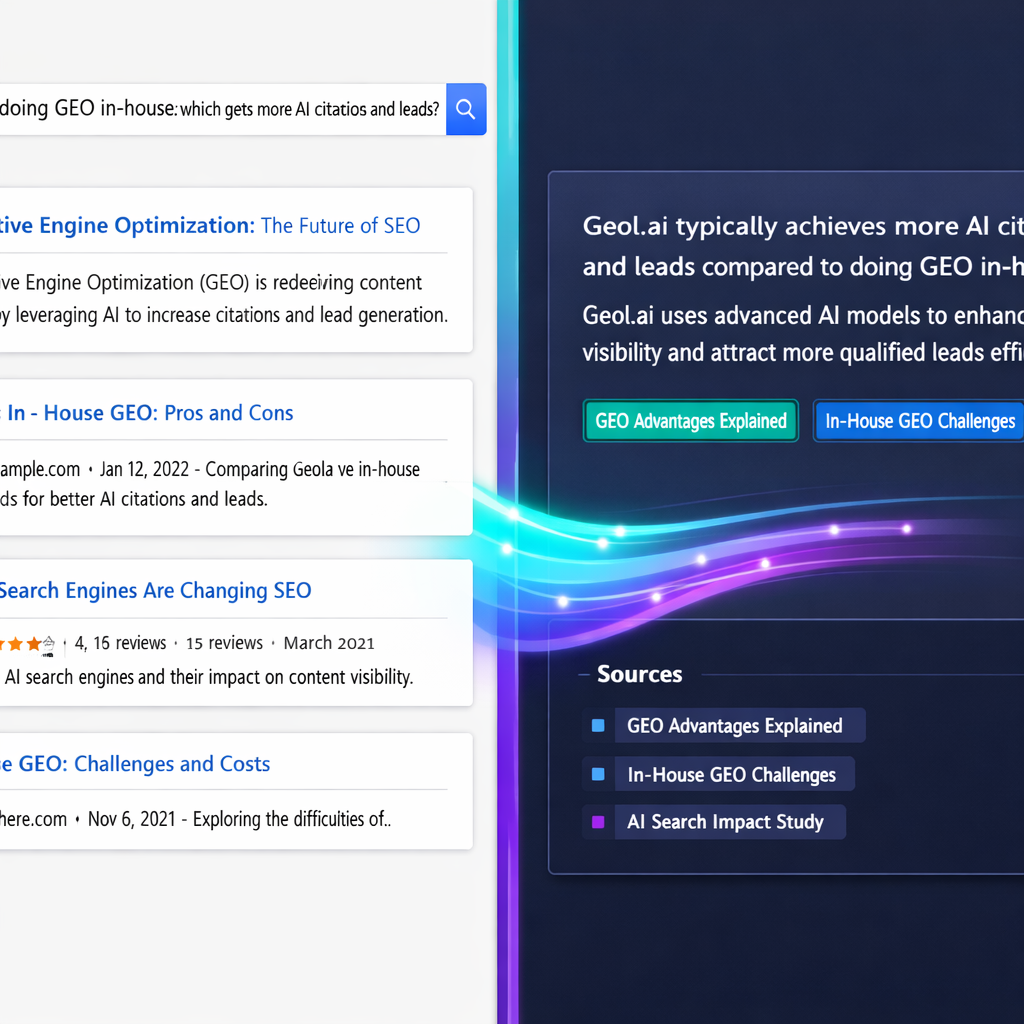

AI search engines and Google now produce two different kinds of “visibility.” In classic Google, visibility is largely about ranking and earning clicks from blue links. In AI answer engines (and Google’s AI Overviews), visibility increasingly means being selected as a source to ground a synthesized answer—often with fewer outbound clicks, but with more brand exposure inside the answer itself. This is why Generative Engine Optimization (GEO) focuses on machine understanding, retrieval, and citation—not just page-level rankings.

Definition: Google ranking visibility vs. AI answer visibility

Google ranking visibility (classic SEO) is primarily about:

• Ranking position for a query

• SERP real estate (snippets, sitelinks, shopping, etc.)

• Click-through to your page

AI answer visibility (GEO) is primarily about:

• Being retrieved and used to construct an answer

• Being cited/linked (when citations are shown)

• Earning brand mentions and downstream actions (even without a click)

How AI search changes “visibility” (vs. Google rankings)

From blue links to synthesized answers (and why citations matter)

In AI search, the unit of competition shifts from “which page ranks #1” to “which sources the model trusts enough to quote, paraphrase, or cite.” This is where citation behavior becomes a practical proxy for authority and relevance in answer engines. Several recent analyses show that AI answer engines often reference a different set of sources than Google’s top-ranked results—meaning you can “win” citations without “winning” rankings (and vice versa).

For an example of how the ecosystem is changing, see the external study summary on Digital Journal and the breakdown of ranking-vs-citation gaps from CI Web Group.

This article compares content visibility outcomes (citations, mentions, discovery) between AI answer engines and Google. It is not a full history of SEO, nor a “best AI tools” roundup. The goal is to give you an evaluation framework and a GEO action plan you can apply immediately.

Key GEO metrics: AI Visibility and Citation Confidence

GEO replaces “rank tracking as the primary KPI” with two measurement layers that map to how answer engines behave:

- AI Visibility: how often your brand/pages appear in answers for a defined prompt set (by query cluster, intent, and market).

- Citation Confidence: the likelihood your page is selected and cited for a query class when citations are available (and the stability of that selection across runs/time).
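One way to make these two definitions concrete is sketched below. The `Run` record shape, the field names, and the choice to score stability as "same cited/not-cited outcome on every run of a prompt" are illustrative assumptions, not a standard formula.

```python
from collections import defaultdict
from dataclasses import dataclass


@dataclass(frozen=True)
class Run:
    """One answer-engine response for one prompt (hypothetical record shape)."""
    cluster: str            # query cluster label, e.g. "definitions"
    prompt: str
    brand_mentioned: bool   # brand name appeared in the answer text
    citations_shown: bool   # the answer displayed any citations at all
    domain_cited: bool      # our domain was among the citations shown


def ai_visibility(runs):
    """Share of runs per cluster in which the brand is mentioned or cited."""
    seen, total = defaultdict(int), defaultdict(int)
    for r in runs:
        total[r.cluster] += 1
        if r.brand_mentioned or r.domain_cited:
            seen[r.cluster] += 1
    return {c: seen[c] / total[c] for c in total}


def citation_confidence(runs):
    """Per cluster: (selection rate, stability).

    selection rate = cited runs / runs where citations were shown
    stability      = share of prompts whose cited/not-cited outcome was
                     identical across all of that prompt's eligible runs
    """
    by_prompt = defaultdict(list)
    for r in runs:
        if r.citations_shown:  # only count runs where a citation was possible
            by_prompt[(r.cluster, r.prompt)].append(r.domain_cited)

    cited, eligible = defaultdict(int), defaultdict(int)
    stable, prompts = defaultdict(int), defaultdict(int)
    for (cluster, _prompt), outcomes in by_prompt.items():
        cited[cluster] += sum(outcomes)
        eligible[cluster] += len(outcomes)
        prompts[cluster] += 1
        stable[cluster] += len(set(outcomes)) == 1
    return {c: (cited[c] / eligible[c], stable[c] / prompts[c]) for c in eligible}
```

Keeping the selection rate and the stability score separate is deliberate in this sketch: a page that is cited in half of all runs for every prompt looks very different from one that is always cited for half of the prompts, even though both have the same selection rate.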

For tactical guidance on how answer engines choose sources (structure, freshness, authority), see: SurferSEO’s guide to LLM citations.

If you can’t find perfect industry-wide stats for “AI search adoption” in your niche, you can still build a defensible baseline: track (1) the % of your priority queries that trigger AI Overviews/answer boxes, (2) the % of your prompt set where your brand is mentioned, and (3) citation share by query cluster. These three numbers are enough to detect the visibility shift even before traffic changes show up.

Criteria for comparison: what “wins” in AI Search vs. Google

Retrieval & ranking signals: keywords/links vs. semantic grounding

Google’s classic ranking stack is optimized for indexing the open web and ordering results—historically leaning on relevance signals (keywords, on-page content), authority signals (links), and user satisfaction signals. AI answer engines still rely on retrieval, but the “winning” content is often the content that is easiest to ground into a correct answer: clear entities, unambiguous definitions, and well-scoped claims that can be cited.

Citation behavior: when sources are shown (and how prominently)

A practical difference for publishers is citation transparency. Some AI engines show citations by default; others show them inconsistently or in a way that users don’t always click. That means your strategy can’t be “optimize for clicks only.” You also need to optimize for being selected as a reference—because selection drives brand exposure even when the click never happens.

Freshness, authority, and entity clarity (Knowledge Graph alignment)

In answer engines, “authority” is not only domain-level; it’s also entity-level. If your brand, authors, products, and concepts are consistently described across your site (and across the web), systems have an easier time disambiguating you and trusting your claims. This is where Knowledge Graph alignment and structured data can outperform minor on-page tweaks—because they reduce ambiguity at the machine-understanding layer.

AI Search vs Google: Evaluation criteria (use this checklist)

Use these criteria to compare systems for your query set and business model:

1) Query type fit (informational, navigational, transactional, local)

2) Citation transparency (are sources shown, and how easy are they to access?)

3) Source diversity (does it pull from many domains or repeat a small set?)

4) Freshness/latency (how quickly does it reflect new information?)

5) Local intent handling (maps, proximity, hours, inventory)

6) YMYL safety (health/finance/legal accuracy and guardrails)

7) Publisher controllability (can you influence outcomes via content + schema + entities?)

8) Measurability (can you reliably track impressions, mentions, citations, and downstream value?)

| Metric to sample | How to measure (lightweight) | Why it matters |
|---|---|---|
| Answers with citations (%) | Run 50 prompts per cluster; record whether citations appear | If citations are rare, brand mentions may be the primary visibility unit |
| Avg. citations per answer | Count citations shown per response; average across runs | More citations usually means more “slots” to compete for |
| Citation share (your domain) | Citations to your domain ÷ total citations in the prompt set | A direct proxy for AI Visibility and Citation Confidence |

Citation UX differs by product and changes quickly. Build measurement around query clusters and “visibility units” (mention, citation, click), not around a single UI pattern that may disappear next quarter.
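The three sampling metrics in the table can be computed from a simple log of cited URLs per answer. The following is a minimal sketch; the function name `sample_metrics`, the input shape (one list of cited URLs per response), and the `www.` stripping rule are assumptions made for illustration.

```python
from urllib.parse import urlparse


def _domain(url):
    """Lowercased host without a leading 'www.' (a deliberate simplification)."""
    host = urlparse(url).netloc.lower()
    return host[4:] if host.startswith("www.") else host


def sample_metrics(responses, our_domain):
    """responses: one list of cited URLs per answer (empty list = no citations)."""
    if not responses:
        raise ValueError("need at least one response")
    all_citations = [url for cited in responses for url in cited]
    with_citations = sum(1 for cited in responses if cited)
    ours = sum(1 for url in all_citations if _domain(url) == our_domain)
    return {
        # % of answers that showed at least one citation
        "answers_with_citations_pct": 100 * with_citations / len(responses),
        # mean citations per answer, counting answers with zero citations
        "avg_citations_per_answer": len(all_citations) / len(responses),
        # citations to our domain divided by all citations in the sample
        "citation_share": ours / len(all_citations) if all_citations else 0.0,
    }
```

Run it once per query cluster rather than on the pooled prompt set, so that a cluster with many citations does not hide a cluster where your domain is never cited.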

Side-by-side review: AI search engines vs. Google for content discovery

AI answer engines (ChatGPT, Perplexity, etc.): strengths and blind spots

AI answer engines are strong at synthesis (combining multiple sources), multi-step reasoning, and task completion. For discovery, they can surface niche sources that don’t rank on page one of Google—especially when the prompt is constrained (“best X for Y under Z”) or requires trade-offs. Blind spots include variable transparency (citations may be incomplete), inconsistent recency depending on retrieval mode, and fewer link-outs per query—reducing the volume of referral traffic even when you are used as a source.

Google (classic + AI Overviews): strengths and blind spots

Google remains dominant for navigational intent (“go to X”), broad index coverage, and commercial discovery (shopping, local packs, reviews, ads). Its AI Overviews can reduce clicks for some informational queries, but they can also increase top-of-funnel exposure when your brand is cited or when users expand sources. The main risk is that you may see fewer sessions even if you’re “winning” visibility inside the overview.

AI Search vs Google: 5-row summary

| Dimension | AI answer engines | Google (classic + AI Overviews) |
|---|---|---|
| Primary visibility unit | Mention/citation inside an answer | Rank + SERP features + clicks |
| Best at | Synthesis, constrained how-tos, comparisons | Navigation, breadth, transactional discovery |
| Transparency | Varies by product; citations may be partial | Generally clear result set; AI Overviews vary |
| Freshness | Depends on retrieval + sources | Strong crawling/indexing; news/local often fast |
| Publisher outcome | Brand exposure may rise while clicks fall | Clicks still central, but AI features may compress CTR |

Comparison table: visibility outcomes by query intent

| Query intent | AI answer engines: typical outcome | Google: typical outcome |
|---|---|---|
| Definition + examples | High chance of synthesis; citations often go to concise definitions and authoritative explainers | Featured snippets/PAAs can drive clicks; AI Overviews may reduce CTR |
| How-to with constraints | Often strong; step-by-step answers may reduce clicks unless you’re the cited source | Strong if you rank + win snippet; still click-driven for detailed instructions |
| Best X for Y (comparison) | Good at trade-offs; may cite review sites, specs, and brand pages if entities are clear | Strong commercial SERPs; ranking + rich results matter |
| Local | Variable; depends on local data integrations and source coverage | Best-in-class (maps, reviews, hours, proximity) |
| Transactional | Can help shortlist options; not always optimized for purchase flow | Strong for shopping, ads, and high-intent landing pages |

If you’re seeing impressions hold steady while clicks soften, that’s not necessarily “lost visibility.” It may be visibility moving from the click layer to the answer layer. The practical adjustment is to track assisted outcomes: brand search lift, direct traffic, demo requests influenced by AI discovery, and citation share by query cluster.

What changes in Generative Engine Optimization: optimizing for citations, not just clicks

Content patterns that improve Citation Confidence

Citation Confidence improves when your pages contain “citable units”: definitions, claims, steps, tables, and data points that can be extracted cleanly and verified. In practice, this means tightening scope (one page = one job), writing explicit answers near the top, and supporting key claims with sources and dates. Stable URLs and consistent page structure help answer engines retrieve the same evidence repeatedly.

• Add a 40–80 word answer-first summary: open with a direct definition or recommendation that can stand alone if quoted.

• Turn key sections into lists, steps, and tables: answer engines love structured patterns they can map to an output format.

• Attach sources to claims (and keep them current): cite primary sources where possible; include dates for stats and update when they change.

• Make entities explicit: use consistent naming for products, people, locations, and categories across your site.

Structured data & entity signals that improve AI Visibility

Structured data doesn’t “force” citations, but it improves machine readability and reduces ambiguity—especially for entities (Organization, Person, Product) and content types (Article, FAQPage, HowTo). Combined with consistent internal linking and canonicalization, schema helps systems understand what a page is about, who it’s for, and how it relates to other entities.
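As an illustration of the entity linking described above, the sketch below builds a small JSON-LD graph in which an Article points to its publisher Organization by `@id`. The Schema.org types and properties are real; the helper names, the `#organization` identifier convention, and every value passed in are placeholders, and your own templates may need more properties than shown here.

```python
import json


def article_jsonld(headline, url, author_name, org_name, org_url,
                   date_published, date_modified):
    """Build a JSON-LD graph linking an Article to its publisher by @id."""
    # Hypothetical convention: one stable identifier for the organization
    org_id = org_url.rstrip("/") + "/#organization"
    graph = [
        {"@type": "Organization", "@id": org_id, "name": org_name, "url": org_url},
        {
            "@type": "Article",
            "headline": headline,
            "mainEntityOfPage": url,
            "datePublished": date_published,   # ISO 8601 date strings
            "dateModified": date_modified,
            "author": {"@type": "Person", "name": author_name},
            "publisher": {"@id": org_id},      # reference, not a duplicate entity
        },
    ]
    return {"@context": "https://schema.org", "@graph": graph}


def as_script_tag(data):
    """Wrap JSON-LD in the script element used to embed it in a page."""
    return ('<script type="application/ld+json">\n'
            + json.dumps(data, indent=2)
            + "\n</script>")
```

Referencing the organization by `@id` instead of repeating its fields on every page is one way to keep entity descriptions consistent across templates, which is the ambiguity reduction this section is about.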

To go deeper, see the related Geol.ai resources:

• Generative Engine Optimization (pillar): definition, framework, and core metrics

• Citation Confidence: how to measure and improve citations in AI answer engines

• AI Visibility tracking: dashboards, prompt sets, and reporting methodology

• Structured data for GEO: Schema.org implementation guide

• Knowledge Graph alignment: entity strategy for AI-first SEO

Trust signals: author expertise, sourcing, and verifiability

Because AI answers compress information, trust signals become more visible: who wrote it, what evidence is used, and whether the content is consistent with other reputable sources. Strengthen E-E-A-T with clear author bios, editorial policies, and references. Make it easy for a system (and a human) to verify your claims quickly.

“We track citation share by query cluster, not just rank. If we’re cited more often for the prompts that matter, we’re winning—even if clicks fluctuate week to week.”

For an applied example of improving AI citations in a competitive category, see the Aftersell case study on tryxlr8.ai.

Mini case study template: citation frequency before vs. after GEO updates

Illustrative example showing how citation frequency can change after adding citable units, improving entity clarity, and implementing structured data. Replace with your own tracked prompt set.

Recommendation: when to prioritize AI Search vs. Google (and how to hedge)

Decision framework by business goal (awareness, leads, revenue)

If your goal is brand authority and top-of-funnel discovery, prioritize GEO because citations and mentions can compound into brand searches and direct demand. If your goal is immediate transactional traffic, maintain classic SEO as the baseline while layering GEO improvements (structured data, entity clarity, citable units) so you’re eligible for both rankings and answer citations.

| Business goal | Primary focus | What to measure |
|---|---|---|
| Awareness | GEO-heavy | AI Visibility, citation share, brand mentions, brand search lift |
| Leads | Balanced (SEO + GEO) | Assisted conversions, demo starts, query coverage, Search Console clicks |
| Revenue | SEO baseline + GEO eligibility | Organic revenue, product page visibility, citations for “best/compare” prompts |

90-day action plan: measurement, content updates, and testing

Weeks 1–2: Build a prompt set and baseline

Create 30–100 prompts grouped by intent (definitions, how-tos, comparisons, local, transactional). Record: mention, citation, cited URL, and answer consistency across 2–3 runs.
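A baseline like this can live in a plain CSV. The sketch below records one row per prompt with the four fields named above. The column names are assumptions, substring matching on brand and domain is a crude stand-in for real entity matching, and "answer consistency" is approximated here as mean pairwise text similarity using the standard library's `difflib`, which is only one of several reasonable choices.

```python
import csv
import io
from difflib import SequenceMatcher
from itertools import combinations


def answer_consistency(answers):
    """Mean pairwise text similarity (0..1) across runs of the same prompt."""
    pairs = list(combinations(answers, 2))
    if not pairs:
        return 1.0  # a single run is trivially consistent with itself
    return sum(SequenceMatcher(None, a, b).ratio() for a, b in pairs) / len(pairs)


def baseline_row(intent, prompt, answers, cited_urls, brand, domain):
    """Summarise 2-3 runs of one prompt into a single baseline record."""
    ours = sorted({u for u in cited_urls if domain in u})  # crude substring match
    return {
        "intent": intent,
        "prompt": prompt,
        "runs": len(answers),
        "mention": any(brand.lower() in a.lower() for a in answers),
        "citation": bool(ours),
        "cited_url": ";".join(ours),
        "consistency": round(answer_consistency(answers), 2),
    }


def to_csv(rows):
    """Serialise baseline rows to CSV text, using the first row's keys as header."""
    buf = io.StringIO()
    writer = csv.DictWriter(buf, fieldnames=list(rows[0].keys()))
    writer.writeheader()
    writer.writerows(rows)
    return buf.getvalue()
```

Whatever columns you settle on, keep the prompt text and the intent label fixed between test rounds so that later comparisons measure changes in the answers and not changes in the questions.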

Weeks 3–6: Upgrade 10–20 high-value pages for citable units

Add answer-first summaries, tighten headings, include tables/steps, and ensure claims are sourced and dated. Fix canonicalization and remove duplicate/competing pages.

Weeks 7–10: Implement structured data + entity consistency

Deploy relevant Schema.org types (Article/FAQPage/HowTo/Organization/Person where appropriate). Align naming conventions across templates, author pages, and product pages.

Weeks 11–13: Re-test and attribute value

Re-run the same prompt set. Compare citation share and mention rate. Pair with Search Console and analytics to watch for brand search lift, assisted conversions, and changes in query coverage.
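The before/after comparison can be as simple as a per-cluster difference in percentage points. This is a minimal sketch that assumes you already have citation share and mention rate per cluster for both rounds; the dictionary shape and the metric keys are illustrative.

```python
def compare_runs(before, after):
    """Per-cluster change in percentage points between two test rounds.

    before/after: {cluster: {"citation_share": float, "mention_rate": float}}
    with values expressed as fractions between 0 and 1.
    """
    report = {}
    # Only compare clusters measured in both rounds
    for cluster in sorted(before.keys() & after.keys()):
        report[cluster] = {
            metric: round((after[cluster][metric] - before[cluster][metric]) * 100, 1)
            for metric in ("citation_share", "mention_rate")
        }
    return report
```

With only 2–3 runs per prompt, small differences are likely to be noise, so treat a change of a few points as a prompt to re-test and not as proof that an update worked.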

Visibility Flywheel (mental model)

Structured data + entity clarity + citable units → higher Citation Confidence → higher AI Visibility → more brand trust and branded demand → more citations over time.

When clicks drop, don’t treat it as a pure loss. Add an “AI-assisted” view: track brand search lift, direct traffic, and lead quality for users who first discovered you via AI answers (where you can infer it via surveys, self-reported fields, or time-series lift after citation gains).

Key takeaways

Google visibility is still rank-and-click driven; AI visibility is increasingly citation-and-mention driven.

GEO optimizes for machine understanding, retrieval, and citation—measured via AI Visibility and Citation Confidence.

Entity clarity and structured data reduce ambiguity and can outperform small on-page tweaks in answer contexts.

Expect a shift: fewer clicks on some informational queries, but potentially more brand exposure and assisted conversions when cited.

Hedge by building pages that win in both systems: citable units + schema + E-E-A-T + strong UX.

Founder of Geol.ai

Senior builder at the intersection of AI, search, and blockchain. I design and ship agentic systems that automate complex business workflows. On the search side, I’m at the forefront of GEO/AEO (AI SEO), where retrieval, structured data, and entity authority map directly to AI answers and revenue. I’ve authored a whitepaper on this space and road-test ideas currently in production. On the infrastructure side, I integrate LLM pipelines (RAG, vector search, tool calling), data connectors (CRM/ERP/Ads), and observability so teams can trust automation at scale. In crypto, I implement alternative payment rails (on-chain + off-ramp orchestration, stable-value flows, compliance gating) to reduce fees and settlement times versus traditional processors and legacy financial institutions. A true Bitcoin treasury advocate. 18+ years of web dev, SEO, and PPC give me the full stack, from growth strategy to code. I’m hands-on (Vibe coding on Replit/Codex/Cursor) and pragmatic: ship fast, measure impact, iterate.

Focus areas: AI workflow automation • GEO/AEO strategy • AI content/retrieval architecture • Data pipelines • On-chain payments • Product-led growth for AI systems

Let’s talk if you want: to automate a revenue workflow, make your site/brand “answer-ready” for AI, or stand up crypto payments without breaking compliance or UX.
