Google AI Mode Is Expanding From Feature to Default Search Behavior: How to Adapt Your AI Retrieval & Content Discovery Strategy

How to update AI Retrieval & Content Discovery for Google AI Mode becoming default: prerequisites, steps, KPIs, visuals, mistakes, and FAQs.

As Google AI Mode expands from an opt-in feature toward default search behavior, “winning search” stops being only about ranking a page and becomes about being selected, summarized, and cited inside AI responses, sometimes before a user ever sees a list of blue links. This guide focuses on one thing: how to adapt your AI Retrieval & Content Discovery strategy (measurement + content packaging) so your best pages are easy for AI systems to retrieve, trust, and cite. It’s not a full SEO overhaul; it’s a practical playbook to baseline, repackage, and measure for AI Mode-as-default.

Google’s own framing of AI Mode highlights a shift in the economics of visibility: you’re competing to be included in an AI-generated response, not just to rank a link. Treat AI Mode as a new distribution layer with its own eligibility rules and KPIs (citations, assisted clicks, query-mix shifts).

Primary reference: Google Search AI Mode announcement and updates.

Prerequisites: Confirm AI Mode Exposure and Set a Baseline (Before You Change Anything)

Define what “default AI Mode” changes in AI Retrieval & Content Discovery

When AI Mode becomes the default experience, discovery shifts from “query → ranked results → click” to “query → retrieval + synthesis → citations/links → optional click.” That changes what “good performance” looks like: you may see higher impressions on informational queries, lower CTR, and more value delivered through citations and assisted downstream actions.

To make this measurable, treat AI Mode visibility as a funnel: eligibility (your page can be retrieved), selection (your page is used/cited), and value (assisted clicks, conversions, recall).

Baseline checklist: queries, pages, and SERP features to track

- Pick a representative query set (start with 50–200) that is likely to trigger AI answers: how-to, comparisons, troubleshooting, definitions, and “best X for Y.”

- For each query, capture impressions, clicks, CTR, and average position (from GSC), plus two binary flags, “AI answer present?” and “are we cited?”, recorded manually or by script.

- Segment performance by page type (docs/blog/product/support) and intent so you can see where AI Mode changes discovery vs. conversion.
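The checklist above can be captured as one record per query. The following is a minimal Python sketch, assuming you have merged a Search Console export with your manually recorded flags into a single CSV. The column names (`query`, `impressions`, `clicks`, `position`, `ai_answer_present`, `cited`), the `BaselineRow` type, and the sample values are all hypothetical, not a Search Console format.

```python
import csv
import io
from dataclasses import dataclass


@dataclass
class BaselineRow:
    query: str
    impressions: int
    clicks: int
    position: float
    ai_answer_present: bool  # manual or scripted flag
    cited: bool              # manual or scripted flag

    @property
    def ctr(self) -> float:
        # Recompute CTR from raw counts so it stays consistent after merging.
        return self.clicks / self.impressions if self.impressions else 0.0


def load_baseline(csv_text: str) -> list:
    """Parse the merged baseline CSV (hypothetical column names) into rows."""
    reader = csv.DictReader(io.StringIO(csv_text))
    return [
        BaselineRow(
            query=r["query"],
            impressions=int(r["impressions"]),
            clicks=int(r["clicks"]),
            position=float(r["position"]),
            ai_answer_present=r["ai_answer_present"] == "1",
            cited=r["cited"] == "1",
        )
        for r in reader
    ]


# Illustrative data only; replace with your own export.
SAMPLE_CSV = """query,impressions,clicks,position,ai_answer_present,cited
what is ai retrieval,1000,50,3.2,1,1
how to set up x in y,400,10,5.0,1,0
x vs y for z,0,0,12.4,0,0
"""

if __name__ == "__main__":
    for row in load_baseline(SAMPLE_CSV):
        print(row.query, round(row.ctr, 4), row.ai_answer_present, row.cited)
```

Keeping the flags next to the GSC metrics in one record makes it easy to segment later by intent or page type.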

Tooling setup: GSC, analytics, rank tracking, and log files

- Google Search Console: export query/page data weekly; annotate major content packaging changes.

- Analytics: create segments for landing-page groups (docs vs. blog) and intent clusters; track assisted conversions and engagement.

- Server logs: confirm crawl patterns and whether priority pages are being fetched after updates (especially for fast-changing topics).
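For the server-log check, a rough sketch like the one below can count crawler fetches per priority page. It assumes access logs in the common "combined" format and matches on the `Googlebot` token in the user-agent string. The function name and paths are hypothetical, and user-agent matching alone does not prove a request really came from Google, so treat the counts as a first pass.

```python
import re

# Matches the request, status, and trailing quoted user agent of a
# combined-format access log line (an assumption about your log format).
LOG_LINE = re.compile(
    r'"(?:GET|HEAD) (?P<path>\S+) [^"]*" \d{3} .*"(?P<ua>[^"]*)"$'
)


def googlebot_fetches(lines, priority_paths):
    """Count log lines where a Googlebot user agent fetched a priority path."""
    counts = {path: 0 for path in priority_paths}
    for line in lines:
        match = LOG_LINE.search(line.strip())
        if match is None:
            continue  # skip lines that do not look like combined-format entries
        if "Googlebot" not in match.group("ua"):
            continue
        path = match.group("path").split("?")[0]  # ignore query strings
        if path in counts:
            counts[path] += 1
    return counts
```

Run it over the log window after a content update; a priority page that still shows zero fetches is a candidate for a crawl or internal-linking check.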

| Query class | Example query | Baseline metrics to capture | AI answer present? | Your domain cited? |
|---|---|---|---|---|
| Definition | “What is AI retrieval?” | Impressions, clicks, CTR, position + landing page | 0/1 | 0/1 + cited URL |
| How-to | “How to set up X in Y” | Impressions, clicks, CTR, position + device + country | 0/1 | 0/1 + cited snippet context |
| Comparison | “X vs Y for Z” | Impressions, clicks, CTR, position + landing page type | 0/1 | 0/1 + competitor cited |

Once you have a baseline, you can make changes with attribution instead of guessing. Next, you’ll map what you have to what AI Mode tends to retrieve and trust.

Step 1 — Map Your Content to AI Retrieval & Content Discovery Inputs (So AI Mode Can Find and Trust It)

Create an “AI discoverability map” of your key pages

Start with the pages that should be eligible for AI answers (pages that actually complete a task, not just “mention a keyword”). For each priority page, map:

- Primary query + intent (definition/how-to/comparison/troubleshooting).

- Supporting sub-questions you want the AI to answer using your page as grounding.

- The single best “answer block” on the page (or note that it’s missing).

Emerging GEO research suggests structural features—clear headings, sectioning, and document organization—can influence whether LLMs cite a page, not just the semantics of the text. Treat layout as part of your retrieval strategy, not a design afterthought.

Source: “The New GEO Evidence: Structure, Not Just Semantics, May Drive Citations” (arXiv).

Strengthen entity clarity with lightweight knowledge graph signals

AI Mode retrieval favors pages that are unambiguous about “what is what” and “how concepts relate.” You don’t need to build a full knowledge graph to benefit—just make your entities consistent and explicit:

- Use consistent names for products/features and define them once (then reuse the definition).

- State relationships directly (Product → Feature → Use case → Constraints).

- Add/verify structured data only where it matches on-page content (e.g., Article, FAQPage, HowTo, Organization).

Make freshness and provenance explicit (dates, authorship, sources)

If AI Mode is selecting sources to ground an answer, it needs machine-legible trust cues: clear last-updated dates, author credentials, citations to primary sources, and versioning for fast-changing topics. This also reduces “citation failures” where a page is skipped, misquoted, or used out of context.

Source: “Citation Failures in GEO: Why Pages Get Skipped, Misquoted, or Ignored” (arXiv).
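As one way to make those trust cues machine-legible, the sketch below builds an `Article` JSON-LD object with explicit publish and update dates and a named author. The property names are standard schema.org vocabulary; the function name and every sample value are hypothetical, and the markup should only be emitted when the same facts are visible on the page.

```python
import json
from datetime import date


def article_jsonld(headline, author_name, author_title, published, modified):
    """Build schema.org Article markup with explicit dates and authorship."""
    if modified < published:
        raise ValueError("dateModified cannot be earlier than datePublished")
    return {
        "@context": "https://schema.org",
        "@type": "Article",
        "headline": headline,
        "datePublished": published.isoformat(),
        "dateModified": modified.isoformat(),
        "author": {
            "@type": "Person",
            "name": author_name,
            "jobTitle": author_title,
        },
    }


if __name__ == "__main__":
    # Placeholder values for illustration only.
    markup = article_jsonld(
        headline="How to set up X in Y",
        author_name="Jane Example",
        author_title="Solutions Engineer",
        published=date(2025, 1, 15),
        modified=date(2025, 3, 1),
    )
    print('<script type="application/ld+json">')
    print(json.dumps(markup, indent=2))
    print("</script>")
```

Generating the markup from the same fields your CMS uses for the visible byline and "last updated" line helps keep the two from drifting apart.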

If you want a parallel perspective on how “source selection” becomes the new battleground, study agentic search patterns (multi-step workflows rather than single queries). It’s a preview of where AI Mode behavior tends to go.

Source: Perplexity product update (agentic search direction).

Content-to-Query Coverage (Intent Clusters vs. Page Readiness)

A proxy view of where you have many eligible pages versus where you have gaps or weak packaging for AI retrieval and citation. Use this to prioritize which clusters need dedicated answer blocks and task paths.

With your discoverability map in place, you can now repackage priority pages so AI Mode can extract correct, citable answers—without losing depth for humans.

Step 2 — Repackage Pages for AI Mode: Build “Citable Answer Blocks” and Task Paths

Write for citation: the 40–80 word answer block pattern

Citable answer block (copy/paste template)

Answer in 40–80 words: Start with a direct statement that stands alone. Include one key constraint (who it’s for, when it applies, what it excludes). Then add one sentence that names the “best next step” (what the user should do next).

Why it works: it reduces ambiguity during retrieval and grounding, and it gives AI systems a compact, accurate span to quote. Place it near the top of the page, after a short context line, before deep detail.
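The length rule in the template is easy to check automatically. This is a minimal sketch that counts whitespace-separated words and reports whether a draft answer block falls in the 40–80 word range. The function name is hypothetical, and it checks length only, not whether the statement stands alone or names a next step.

```python
def check_answer_block(text, min_words=40, max_words=80):
    """Report the word count of an answer block and whether it is in range."""
    word_count = len(text.split())  # whitespace-separated tokens
    return {
        "word_count": word_count,
        "in_range": min_words <= word_count <= max_words,
    }


if __name__ == "__main__":
    draft = "AI retrieval is the step where a system selects source pages."
    result = check_answer_block(draft)
    print(result["word_count"], result["in_range"])
```

A check like this fits in a content linter or pre-publish script, with a human still reviewing the constraint and next-step sentences.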

Add step-by-step task paths (How-To) that AI can summarize correctly

State prerequisites explicitly

Add a short “Requirements” list (accounts, permissions, versions, inputs). This prevents AI Mode from inventing missing steps.

Use numbered steps with one action per step

Keep steps atomic (one verb, one outcome). If a step has conditions, split it into 2–3 steps.

Add expected outputs and checkpoints

After key steps, add a checkpoint line (“You should now see…”) so summaries remain grounded in observable results.

Include a mini troubleshooting tree

Add 3–5 “If X, do Y” bullets for the most common failure modes. AI Mode tends to reward actionable resolution content.

Optimize for grounding: definitions, constraints, and edge cases

- Add a short “What this means” definition when you introduce a term (especially acronyms).

- State constraints that prevent misapplication (regions, plan limits, version compatibility, legal/safety boundaries).

- Add 2–3 edge cases (“This approach fails when…”) to reduce incorrect synthesis.

Snippet Readiness Scoring (Before vs. After Repackaging)

Track how many priority pages include the structural elements that improve AI citation likelihood: answer block, numbered steps, prerequisites, and troubleshooting.
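One simple way to score this, sketched below, is to record the four elements as booleans per page and compute both a per-page score and the share of pages that include each element. The field names and equal weighting are assumptions made for illustration; the source does not prescribe a scoring formula.

```python
# The four structural elements named above, as hypothetical field names.
ELEMENTS = ("answer_block", "numbered_steps", "prerequisites", "troubleshooting")


def readiness_score(page):
    """Fraction of the four elements present on one page (equal weights)."""
    return sum(bool(page.get(e)) for e in ELEMENTS) / len(ELEMENTS)


def element_coverage(pages):
    """Share of pages that include each element."""
    if not pages:
        return {e: 0.0 for e in ELEMENTS}
    return {
        e: sum(bool(p.get(e)) for p in pages) / len(pages)
        for e in ELEMENTS
    }


if __name__ == "__main__":
    audit = [
        {"url": "/docs/setup", "answer_block": True, "numbered_steps": True,
         "prerequisites": False, "troubleshooting": False},
        {"url": "/docs/install", "answer_block": True, "numbered_steps": True,
         "prerequisites": True, "troubleshooting": True},
    ]
    for page in audit:
        print(page["url"], readiness_score(page))
    print(element_coverage(audit))
```

Running the same audit before and after repackaging gives the before/after comparison this section describes.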

Now that your pages are easier to extract and cite, you need KPIs that reflect AI Mode outcomes—because CTR alone can become misleading as AI answers satisfy more intent in-SERP.

Step 3 — Measure the Shift: New KPIs for Default AI Mode (Citations, Assisted Clicks, and Query Mix)

Define KPIs that reflect AI Retrieval & Content Discovery outcomes

| Layer | KPI | How to measure weekly | Why it matters in AI Mode |
|---|---|---|---|
| Eligibility | Impressions on AI-triggering queries | GSC export for fixed query set; segment by intent | Shows whether you’re even in the retrieval candidate set |
| Visibility | Citation rate | Manual/SERP capture: % queries where your domain is cited | Direct proxy for “being chosen” inside AI answers |
| Value | Assisted clicks + assisted conversions | Analytics: multi-touch paths; landing-page cohorts; engagement | CTR may fall even as business impact holds or rises |

Build a weekly monitoring workflow (30 minutes)

- Export GSC data for the fixed query set; compare impressions, CTR, and landing pages week over week (WoW).

- Update a “citation log” for 20–50 head queries: which domains are cited and which page types win (docs/blog/forums).

- Review assisted value: conversions influenced by organic sessions that started on AI-eligible pages (or arrived later via brand/direct).
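The citation log in the workflow above reduces to a small calculation. The sketch below assumes each log entry records a query and the list of domains cited in the AI answer. The entry shape, function names, and sample domains are hypothetical, and the rate is computed over all tracked queries, matching the "% queries where your domain is cited" definition in the KPI table.

```python
def citation_rate(log, domain):
    """Share of tracked queries whose AI answer cites the given domain."""
    if not log:
        return 0.0
    cited = sum(1 for entry in log if domain in entry["cited_domains"])
    return cited / len(log)


def week_over_week(previous_rate, current_rate):
    """Absolute change in citation rate between two weekly snapshots."""
    return current_rate - previous_rate


if __name__ == "__main__":
    # Illustrative snapshots for the same fixed query set.
    last_week = [
        {"query": "what is ai retrieval", "cited_domains": ["example.com"]},
        {"query": "how to set up x in y", "cited_domains": ["competitor.test"]},
    ]
    this_week = [
        {"query": "what is ai retrieval", "cited_domains": ["example.com"]},
        {"query": "how to set up x in y", "cited_domains": ["example.com", "competitor.test"]},
    ]
    before = citation_rate(last_week, "example.com")
    after = citation_rate(this_week, "example.com")
    print(before, after, week_over_week(before, after))
```

Because the query set is fixed, changes in the rate reflect changes in selection rather than changes in what you happened to sample.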

Attribution: separating “AI answer exposure” from “traditional clicks”

Expect a period where impressions rise and clicks soften. That doesn’t automatically mean performance is worse; it can mean AI Mode is satisfying basic intent and sending fewer, higher-intent visits. The job is to prove whether your content is being used as grounding and whether downstream actions are holding.

Weekly AI Visibility vs. Traditional KPIs (Same Query Set)

Illustrative trendlines: citations can rise even when CTR declines as AI Mode satisfies more intent in-SERP. Segment this by intent cluster to see where AI Mode becomes default first.

Visibility is becoming measurable across AI surfaces, so extend your measurement beyond classic rank: track AI visibility as its own channel and align your reporting to citations and recall, not only sessions.

Common Mistakes + Troubleshooting: Fix Why AI Mode Skips or Misuses Your Content

Common mistakes that reduce AI Retrieval & Content Discovery eligibility

- Burying the answer (no extractable 40–80 word block).

- Unclear authorship/provenance (no credible byline, no update date, no sources).

- Thin steps (missing prerequisites, missing expected outputs, no troubleshooting).

- Missing constraints and edge cases (increases mis-citation risk).

- Over-marked or mismatched schema (markup that doesn’t reflect on-page content).

Troubleshooting checklist: when you’re not cited

Confirm crawl/index + canonical

Check indexing, canonicalization, and whether the correct URL is the one earning impressions.

Add an extractable answer block

Place the 40–80 word answer near the top; include constraints to reduce misapplication.

Improve entity clarity and internal linking

Align terminology across pages and link supporting pages to the canonical “best answer” page for each sub-question.

Strengthen provenance

Add author credentials, update date, and citations to primary sources; ensure claims are evidenced.
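The first check in this list, canonicalization, can be partly automated. The sketch below uses Python's standard `html.parser` to pull the `rel="canonical"` link from a page's HTML and compare it with the URL you expect to earn impressions. The function names and URLs are hypothetical, and fetching the HTML is left out; this only inspects markup you already have.

```python
from html.parser import HTMLParser


class CanonicalParser(HTMLParser):
    """Collects the href of the first <link rel="canonical"> tag."""

    def __init__(self):
        super().__init__()
        self.canonical = None

    def handle_starttag(self, tag, attrs):
        if tag != "link" or self.canonical is not None:
            return
        attributes = dict(attrs)
        rel_values = (attributes.get("rel") or "").lower().split()
        if "canonical" in rel_values:
            self.canonical = attributes.get("href")


def canonical_of(html):
    """Return the declared canonical URL, or None if the page has none."""
    parser = CanonicalParser()
    parser.feed(html)
    return parser.canonical


def canonical_matches(html, expected_url):
    """True when the declared canonical equals the expected URL."""
    declared = canonical_of(html)
    return declared is not None and declared.rstrip("/") == expected_url.rstrip("/")
```

A mismatch does not always mean a bug, but it is a quick signal that the URL you are optimizing may not be the one being indexed.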

Troubleshooting checklist: when you’re cited but clicks drop

If AI Mode uses your content as the “basic answer,” the click becomes a vote for depth. Add reasons to continue:

- Next-step hooks: templates, checklists, calculators, downloadable configs, or interactive tools.

- Deeper navigation: “Choose your path” links for beginner vs. advanced vs. troubleshooting.

- Re-align title/meta to post-AI intent (“examples,” “edge cases,” “benchmarks,” “implementation”).

Diagnostic Checkpoint Failures Across Priority Pages

Use a simple audit to quantify why pages are skipped or under-cited. Prioritize fixes by frequency * impact.
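The frequency * impact prioritization can be sketched as follows. Here frequency is the number of priority pages failing a check, and impact is a rating you assign yourself; the 1–5 scale, the class name, and the sample numbers are assumptions for illustration.

```python
from dataclasses import dataclass


@dataclass
class CheckFailure:
    name: str
    frequency: int  # number of priority pages failing this check
    impact: int     # hypothetical 1-5 rating assigned by your team

    @property
    def priority(self) -> int:
        return self.frequency * self.impact


def rank_failures(failures):
    """Order failed checks from highest to lowest frequency * impact."""
    return sorted(failures, key=lambda f: f.priority, reverse=True)


if __name__ == "__main__":
    # Illustrative audit results only.
    audit = [
        CheckFailure("no update date", frequency=20, impact=2),
        CheckFailure("missing answer block", frequency=12, impact=4),
        CheckFailure("schema mismatch", frequency=3, impact=5),
    ]
    for failure in rank_failures(audit):
        print(failure.name, failure.priority)
```

Multiplying the two keeps a rare but severe problem from being buried, while still favoring fixes that touch many pages.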

AI answer engines can reuse your content out of context. If your page is unclear about constraints, you increase the chance of being cited incorrectly—hurting trust and conversions. Prioritize correctness, provenance, and “safe-to-quote” phrasing.

Privacy and data-sharing norms also shape how AI systems learn from and attribute content. To reduce surprises and plan governance, review the tradeoffs in AI data-sharing behavior and align your content strategy with what you’re comfortable being extracted and summarized.

Expert Inputs + Implementation Checklist (Copy/Paste)

Where to add expert quotes for credibility and grounding

- Technical SEO / AI search specialist: explain how retrieval + grounding differs from classic ranking (1–2 quotes per major guide).

- Content strategist: define your internal standard for “citable answer blocks” and what “good structure” looks like.

- Analytics lead: clarify how you’ll report assisted value when CTR declines (what counts as success in AI Mode).

One-page implementation checklist for teams

- Baseline captured: fixed query set + weekly exports + SERP evidence (AI answer present/cited).

- Priority pages mapped: primary query, sub-questions, canonical URL, target answer block.

- Repackaging done: answer block + prerequisites + numbered steps + troubleshooting + edge cases.

- Schema validated: only where appropriate and consistent with visible content.

- Trust cues updated: author, last updated, sources, version notes for fast-changing topics.

- Internal linking strengthened: supporting pages point to the canonical “best answer” page.

- Monitoring cadence established: weekly citation log + query mix + assisted value reporting.

Custom visualization plan (what to build and where it goes)

AI Retrieval & Content Discovery Pipeline for Default AI Mode

Use this as a stakeholder diagram: Crawl/Index → Entity understanding → Retrieval/Grounding → Synthesis → Citation → Click/Assist. Pair each stage with the on-site signals you control.

Citable Answer Block Anatomy (What to Include on Priority Pages)

An annotated content wireframe translated into a measurable checklist: each component increases extractability, correctness, and usefulness after the click.

Key Takeaways

- Treat default AI Mode as a new distribution layer: optimize for eligibility → citations → assisted value, not only rank and CTR.

- Baseline first: fix a representative query set, capture AI answer presence/citations, and segment by intent + page type before making changes.

- Repackage priority pages with citable answer blocks, explicit prerequisites, numbered task paths, and troubleshooting to improve extractability and reduce mis-citation.

- Measure the shift weekly with a citation log and assisted outcomes; expect query-mix changes where impressions rise while CTR falls.

FAQ: Google AI Mode and AI Retrieval Strategy

Founder of Geol.ai

Senior builder at the intersection of AI, search, and blockchain. I design and ship agentic systems that automate complex business workflows. On the search side, I’m at the forefront of GEO/AEO (AI SEO), where retrieval, structured data, and entity authority map directly to AI answers and revenue. I’ve authored a whitepaper on this space and road-test ideas currently in production. On the infrastructure side, I integrate LLM pipelines (RAG, vector search, tool calling), data connectors (CRM/ERP/Ads), and observability so teams can trust automation at scale. In crypto, I implement alternative payment rails (on-chain + off-ramp orchestration, stable-value flows, compliance gating) to reduce fees and settlement times versus traditional processors and legacy financial institutions. A true Bitcoin treasury advocate. 18+ years of web dev, SEO, and PPC give me the full stack—from growth strategy to code. I’m hands-on (Vibe coding on Replit/Codex/Cursor) and pragmatic: ship fast, measure impact, iterate. Focus areas: AI workflow automation • GEO/AEO strategy • AI content/retrieval architecture • Data pipelines • On-chain payments • Product-led growth for AI systems Let’s talk if you want: to automate a revenue workflow, make your site/brand “answer-ready” for AI, or stand up crypto payments without breaking compliance or UX.

Related Articles

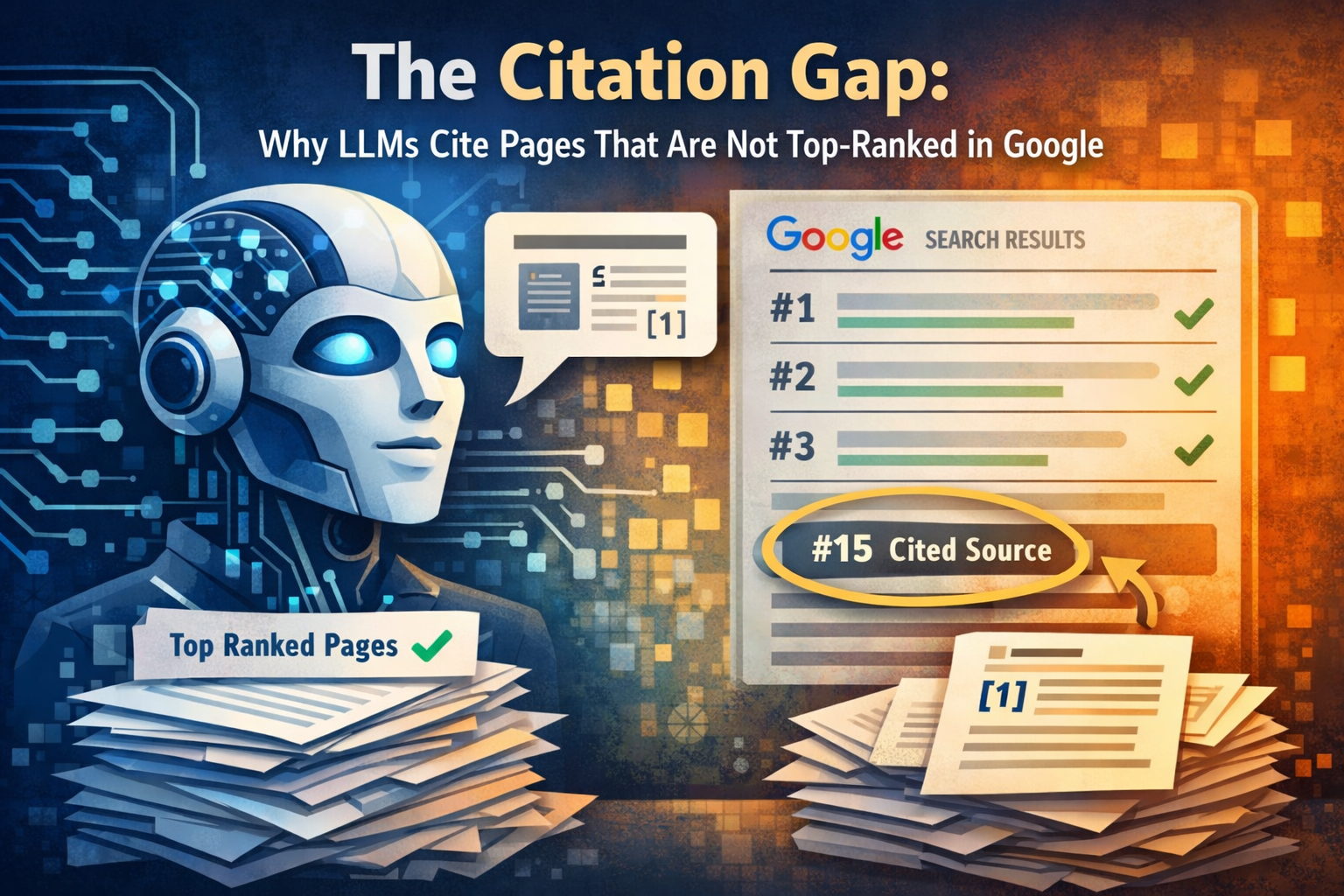

The citation gap: why LLMs cite pages that are not top-ranked in Google

Learn about Supporting article for OpenAI starts testing ads in ChatGPT — the monetiz cluster in this comprehensive guide.

Bing’s AI Performance Dashboard Is the First Real Citation Analytics Product for Publishers

A comparison review of Bing’s AI Performance dashboard vs legacy analytics, showing why citation metrics matter as ChatGPT tests ads and AI traffic shifts.