The citation gap: why LLMs cite pages that are not top-ranked in Google


Introduction

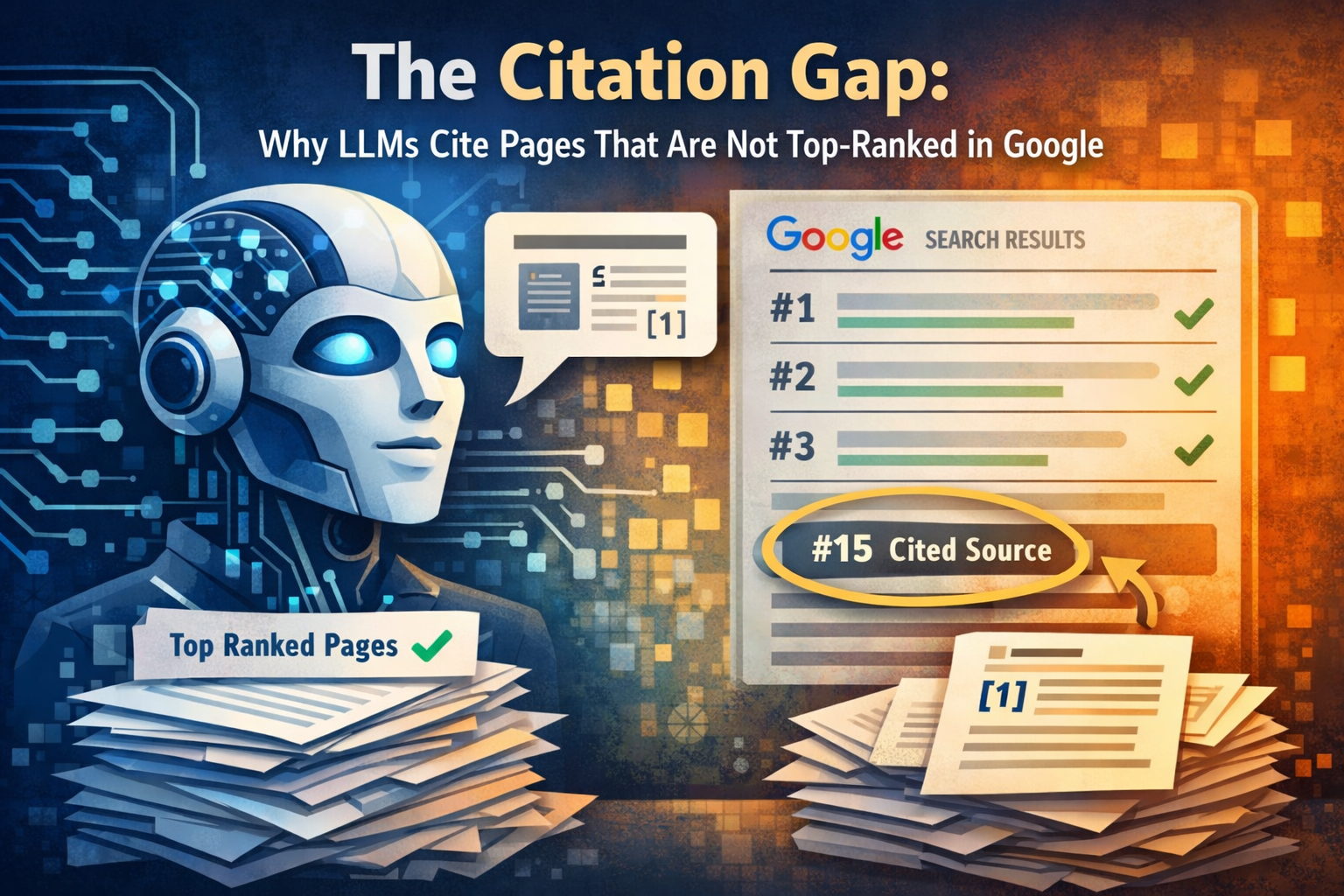

Google rank and AI citation are connected, but they are not the same thing. Large language models often cite pages that are not top-ranked in Google because answer engines are not trying to recreate a results page. They are trying to assemble the clearest, safest, and most extractable evidence for a response. A page can sit outside Google's top positions yet still contain the exact definition, product spec, transcript, policy line, or FAQ chunk a model wants to quote. That is why a GEO audit can surface high-value pages that barely stand out in a standard SEO dashboard.

That disconnect is the citation gap. It matters because AI interfaces increasingly answer before the click. When your brand is missing from cited sources, you can lose authority even if your traditional SEO program looks healthy. The competitive question is no longer only who ranks first, but who becomes the source the model trusts enough to name. For publishers and brands, this changes how success is defined: visibility is now partly about being included in the answer layer, not just appearing above the fold in search.

For teams adapting from classic SEO to AI visibility, our Generative Engine Optimization guide is a useful starting point for understanding why citations, source inclusion, and answer coverage deserve their own strategy and reporting layer.

Definition: the citation gap

The citation gap is the difference between where a page ranks in classic search and how often it is cited by LLM-powered answers. In practice, Google visibility and AI visibility overlap only partially.

This gap does not mean SEO stopped mattering. It means rankability and citeability are now separate optimization problems. Strong rankings still help discovery, crawling, and authority, but a citation is usually won by the page that makes a fact easiest to retrieve, verify, and attribute.
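One way to make the definition concrete is to place a page's search position next to its citation count. The following is a minimal sketch, not an established formula: the `PageStats` fields, the sample URLs, and the choice to express the gap as "search rank minus citation rank" are all illustrative assumptions.

```python
from dataclasses import dataclass

@dataclass
class PageStats:
    url: str
    google_rank: int   # hypothetical: position in classic search (1 = top)
    citations: int     # hypothetical: times cited across a set of test prompts

def citation_gap(pages: list[PageStats]) -> dict[str, int]:
    """Return search rank minus citation rank for each URL.

    Positive values mean the page is cited more prominently than it ranks.
    """
    # Order pages by citation count (most cited first); ties fall back to search rank.
    by_citations = sorted(pages, key=lambda p: (-p.citations, p.google_rank))
    citation_rank = {p.url: i + 1 for i, p in enumerate(by_citations)}
    return {p.url: p.google_rank - citation_rank[p.url] for p in pages}

# Made-up sample data for illustration only.
pages = [
    PageStats("/pricing", google_rank=1, citations=2),
    PageStats("/docs/limits", google_rank=8, citations=14),
    PageStats("/blog/overview", google_rank=3, citations=0),
]
gaps = citation_gap(pages)
```

In this made-up sample, `/docs/limits` ranks eighth in search but is the most cited page, so it shows the largest positive gap.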

Understanding the fundamentals

Google still ranks pages, but AI answer systems retrieve passages, compare source candidates, and synthesize responses. The winning unit is often a chunk, not an entire URL. Google describes AI Mode's fan-out behavior as expanding a query into related sub-questions before an answer is generated, which helps explain why topic completeness can beat narrow keyword targeting. For a model-level view, see our guide to LLM ranking factors. In this environment, headings, tables, Q&A blocks, transcripts, and definitions are not cosmetic formatting choices. They are retrieval assets.

- Ranking: where a document appears in a search engine results page.

- Retrieval: the process for pulling source candidates that may support an answer.

- Chunking: breaking a page, PDF, or transcript into smaller passages.

- Citation readiness: how easy it is for a model to extract, trust, and attribute a passage.

- Answer coverage: the share of relevant prompts for which your brand appears.
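To illustrate the chunking term above, here is a rough sketch of heading-based splitting. It assumes Markdown-style `#` headings and a hypothetical sample page. Real retrieval systems may chunk by tokens, sentences, or layout instead, so treat this as one possible approach, not a description of how any particular answer engine works.

```python
def chunk_by_heading(text: str) -> list[dict[str, str]]:
    """Split text into passages, one per Markdown-style heading."""
    chunks = []
    heading, lines = "", []
    for line in text.splitlines():
        if line.startswith("#"):
            # A new heading closes the previous passage, if it had any body text.
            body = " ".join(lines).strip()
            if body:
                chunks.append({"heading": heading, "text": body})
            heading, lines = line.lstrip("#").strip(), []
        elif line.strip():
            lines.append(line.strip())
    # Flush the final passage after the last heading.
    body = " ".join(lines).strip()
    if body:
        chunks.append({"heading": heading, "text": body})
    return chunks

# Hypothetical page content for illustration.
page = """# Refund policy
Refunds are issued within 14 days of purchase.

# Shipping
Orders ship in 2 business days.
Tracking is emailed at dispatch.
"""
chunks = chunk_by_heading(page)
```

Each resulting chunk carries its heading, which is one reason descriptive headings help: the passage stays interpretable after it is separated from the rest of the page.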

Research from Similarweb shows that LLMs frequently over-index on source types such as official documentation, Wikipedia, Reddit, YouTube, and large corporate domains. A mid-ranking help page or transcript can outperform a better-ranked marketing page if it reduces ambiguity, carries clear authorship, or matches the user's phrasing more directly.

That source-type bias explains why information architecture matters more than many teams expect. If your best facts are scattered across blog posts, buried in PDFs, or written differently in product, support, and PR content, the model has less consistent evidence to work with. When the same entity, feature, claim, and definition are reinforced across your site and adjacent channels, citation readiness improves.

If your best information lives behind vague headings, weak page structure, or promotional copy, an LLM may skip it and cite a simpler competitor page, forum thread, or documentation entry instead. Retrieval systems reward clarity before polish.

Key findings and insights

The key finding is that ranking and citation are related but distinct systems. As LSEO notes, that disconnect should change content strategy, link-building, and information architecture. Pages win citations when they are explicit, attributable, and easy to quote, even when they are not the strongest ranking URLs for a head term. In other words, LLM relevance is often about quote-worthiness, not just page-level prominence.

The second insight is transparency risk. Research on answer bubbles suggests that AI search can produce different source sets and different narratives for similar prompts. That means two users can ask nearly the same question and receive answers grounded in different evidence. For brands, this raises the stakes: if your source is absent from one model or prompt variation, another publisher's framing may define the conversation instead.

Why a lower-ranked page can still be cited first

| Factor | What ranking systems often reward | What LLMs often cite |
|---|---|---|
| Primary objective | Order documents by overall relevance and authority | Assemble safe, direct evidence for a generated answer |
| Winning unit | Whole page or URL | Passage, sentence, table, transcript snippet, or FAQ |
| Format preference | Strong landing pages can perform well | Structured docs, definitions, lists, help content, and transcripts |
| Content style | Persuasive or broad coverage can rank | Clear claims, explicit facts, and low ambiguity win citations |
| Optimization focus | Keywords, links, page authority, and UX | Topic completeness, entity clarity, extractable structure, and citation readiness |

This does not make authority irrelevant. It reframes where authority should live. Link-building, digital PR, creator collaborations, and branded research are most valuable when they strengthen pages that contain quotable facts and reusable evidence. If your strongest authority points to thin marketing pages while your richest answers sit underdeveloped in support or resource sections, the citation gap persists.

Strategic implementation

Closing the citation gap starts with measurement. As GEO practice matures, the useful metrics are no longer just rankings and traffic. Teams should track citation frequency, share of model, answer coverage, and source inclusion rate. Our briefing on measuring AI visibility offers a practical framework for turning GEO from a buzzword into an operating model.
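The four metrics can be computed from a simple log of test runs. The sketch below is hedged: only answer coverage follows the definition given earlier in this article (the share of relevant prompts for which the brand appears). The formulas for citation frequency, source inclusion rate, and share of model are working assumptions, and the record fields, model names, and domains are hypothetical.

```python
from collections import defaultdict

def visibility_metrics(runs: list[dict], brand: str) -> dict:
    """Summarize how often `brand` is cited across prompt/model test runs."""
    brand_runs = [r for r in runs if brand in r["cited_domains"]]
    prompts = {r["prompt"] for r in runs}
    covered_prompts = {r["prompt"] for r in brand_runs}

    # Tally all citations and brand citations separately for each model.
    all_by_model, brand_by_model = defaultdict(int), defaultdict(int)
    for r in runs:
        all_by_model[r["model"]] += len(r["cited_domains"])
        brand_by_model[r["model"]] += r["cited_domains"].count(brand)

    return {
        # Assumed definition: total citations pointing at the brand's domain.
        "citation_frequency": sum(brand_by_model.values()),
        # Assumed definition: share of answers citing the brand at least once.
        "source_inclusion_rate": len(brand_runs) / len(runs) if runs else 0.0,
        # Share of distinct prompts where the brand appears in any model.
        "answer_coverage": len(covered_prompts) / len(prompts) if prompts else 0.0,
        # Assumed definition: brand citations / all citations, per model.
        "share_of_model": {
            m: brand_by_model[m] / total
            for m, total in all_by_model.items() if total
        },
    }

# Made-up test runs for illustration only.
runs = [
    {"prompt": "best crm for startups", "model": "model-a",
     "cited_domains": ["example.com", "reddit.com"]},
    {"prompt": "best crm for startups", "model": "model-b",
     "cited_domains": ["wikipedia.org"]},
    {"prompt": "crm pricing comparison", "model": "model-a",
     "cited_domains": ["example.com", "example.com", "youtube.com"]},
    {"prompt": "crm pricing comparison", "model": "model-b",
     "cited_domains": []},
]
metrics = visibility_metrics(runs, "example.com")
```

In this sample the brand covers every prompt but only through one model, which is exactly the kind of pattern a single blended number would hide.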

Audit prompts, models, and cited URLs

Start with the questions that matter commercially: comparison queries, problem queries, product explainers, trust queries, and branded prompts. Test them across multiple models and record which domains and URLs are cited. This gives you a baseline that rankings alone cannot reveal.
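A baseline like this can be as simple as a per-model count of cited domains. The sketch below assumes the audit has already been collected by hand or by tooling into hypothetical `(prompt, model, cited_url)` rows. It does not call any model API, and the prompts, model names, and URLs are invented for illustration.

```python
from collections import Counter
from urllib.parse import urlsplit

def domain_baseline(audit_rows: list[tuple[str, str, str]]) -> dict[str, Counter]:
    """Count cited domains per model from (prompt, model, cited_url) rows."""
    baseline: dict[str, Counter] = {}
    for _prompt, model, url in audit_rows:
        host = urlsplit(url).hostname or ""
        # Treat www.example.com and example.com as the same source.
        if host.startswith("www."):
            host = host[4:]
        baseline.setdefault(model, Counter())[host] += 1
    return baseline

# Made-up audit rows for illustration only.
audit_rows = [
    ("what is geo", "model-a", "https://www.example.com/guide"),
    ("what is geo", "model-a", "https://en.wikipedia.org/wiki/Search_engine_optimization"),
    ("geo vs seo", "model-a", "https://example.com/blog/geo-vs-seo"),
    ("geo vs seo", "model-b", "https://www.reddit.com/r/SEO/"),
]
baseline = domain_baseline(audit_rows)
```

Keeping the full URL in the raw rows and collapsing to domains only at reporting time preserves the page-level detail needed for later audits.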

Map content by evidence type, not just keyword

Identify where your strongest definitions, methodologies, stats, FAQs, examples, and policy statements live. Many brands discover that the pages ranking best for a topic are not the pages containing the cleanest evidence. The fix is often structural, not purely editorial.

Rewrite for extractability

Use descriptive headings, short answer-first paragraphs, comparison tables, process steps, and FAQ blocks. Reduce vague promotional language and make core claims attributable. If a sentence would be useful as a standalone answer fragment, it is more likely to be retrieved and cited.

Strengthen entity consistency across channels

Keep product names, category terms, author signals, and company descriptions consistent across your site, docs, YouTube transcripts, and external profiles. Since LLMs often cite official docs and platform-native content, distribution strategy matters almost as much as on-page optimization.
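A consistency check of this kind can start as a plain text scan. The following is a minimal sketch, assuming a hypothetical product name ("Acme Insights") and hypothetical channel copy. It flags only differences in case, spacing, and hyphenation, and would miss abbreviations or renamed products.

```python
import re

def name_variants(canonical: str, channels: dict[str, str]) -> dict[str, set[str]]:
    """Find spellings of `canonical` that differ in case, spacing, or hyphens."""
    # Build a pattern that tolerates an optional space or hyphen between words.
    words = [re.escape(w) for w in re.split(r"[\s-]+", canonical)]
    pattern = re.compile(r"[\s-]?".join(words), re.IGNORECASE)
    report = {}
    for channel, text in channels.items():
        found = {m.group(0) for m in pattern.finditer(text)}
        off_brand = found - {canonical}
        if off_brand:
            report[channel] = off_brand
    return report

# Hypothetical copy from three channels.
channels = {
    "site": "Acme Insights helps teams track citations.",
    "docs": "ACME Insights can export weekly reports.",
    "youtube": "Welcome to AcmeInsights, our analytics tool.",
}
variants = name_variants("Acme Insights", channels)
```

Channels that already use the canonical spelling are left out of the report, so the output doubles as a short fix list.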

Monitor, compare, and iterate by model

A page that is frequently cited in one system may be ignored in another. Re-test prompt sets regularly, track citation changes after edits, and compare which formats win. Over time, you can build a reliable picture of what each answer engine prefers from your brand.
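Re-testing is easier to act on when each run is diffed against the previous one. This sketch assumes each test run has been reduced to a hypothetical mapping from model name to the set of your URLs it cited. The model names and paths are placeholders.

```python
def citation_changes(
    before: dict[str, set[str]], after: dict[str, set[str]]
) -> dict[str, dict[str, list[str]]]:
    """Compare two test runs and list URLs gained or lost per model."""
    changes = {}
    for model in sorted(set(before) | set(after)):
        old, new = before.get(model, set()), after.get(model, set())
        changes[model] = {
            "gained": sorted(new - old),  # cited now, not cited before
            "lost": sorted(old - new),    # cited before, not cited now
        }
    return changes

# Made-up runs from before and after a content edit.
before = {"model-a": {"/docs/limits", "/pricing"}, "model-b": {"/pricing"}}
after = {"model-a": {"/docs/limits", "/faq"}, "model-b": set()}
changes = citation_changes(before, after)
```

Logging the date and the edits shipped between runs alongside each diff makes it easier to tie a gained or lost citation to a specific change, though prompt-to-prompt variation means a single run is weak evidence on its own.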

To operationalize this, teams need a repeatable visibility layer. Our AI visibility monitoring page outlines how to track prompts, models, citations, and competitive share over time so improvements can be measured instead of guessed.

The fastest wins usually come from upgrading pages that already have some authority and topical relevance. Turning an adequate page into a citation-ready page is often more efficient than creating a new asset from scratch.

Common challenges and solutions

Most teams struggle with the citation gap because ownership is fragmented. SEO owns rankings, content owns editorial, product owns docs, support owns help content, and PR owns thought leadership. LLM citation performance cuts across all of them, so weak coordination creates weak evidence surfaces.

- Pitfall: relying on polished marketing copy. Solution: add direct answers, specs, examples, and FAQs that can stand on their own as evidence.

- Pitfall: thin topical coverage. Solution: build hub-and-spoke clusters so fan-out retrieval can find depth across adjacent subtopics.

- Pitfall: inconsistent naming of products, categories, and authors. Solution: align entity references across site sections and external channels.

- Pitfall: no measurement layer. Solution: separate ranking reports from model-specific citation tracking so you can see what changed and why.

Another common mistake is assuming homepage authority transfers automatically to every answer context. Models often select the deepest page with the cleanest supporting evidence. A mid-funnel explainer, changelog entry, benchmark page, or help article may deserve more investment than a glossy overview page because it answers the question with less ambiguity.

Future outlook

The citation gap will likely widen before it closes. Different models already prefer different source types, and answer-bubble research suggests users may continue to see different realities based on prompt wording, product defaults, and source access. As AI interfaces add more monetization and tighter answer layouts, organic citation slots could become even more competitive than organic rankings are today.

At the same time, Google's fan-out approach points toward a future where topic completeness, entity relationships, and structured information keep gaining importance. Brands that publish strong documentation, transcripts, first-party research, and coherent topic clusters will have more surfaces available for retrieval. The winners will not simply be the loudest publishers. They will be the ones that are easiest for models to understand and safest for models to cite.

There is no single universal AI search result. Brands should expect different citation patterns by model, interface, and prompt style, and should build monitoring processes that reflect that reality.

Conclusion and key takeaways

The citation gap is a strategic signal, not a temporary anomaly. LLMs cite pages that help them assemble trustworthy answers, and those pages are often not the same pages that win the top organic positions. Brands that respond by improving topic completeness, information architecture, extractable formatting, and model-level measurement will be better positioned to earn visibility in both search results and AI answers.

Key takeaways

- Google rankings and LLM citations overlap, but they are not the same visibility system.

- Lower-ranked pages can win citations when they contain clearer, more extractable evidence.

- Official docs, transcripts, FAQs, and structured help content are disproportionately valuable in AI search.

- Measurement should include citation frequency, answer coverage, share of model, and source inclusion rate.

- The best GEO programs align SEO, content, docs, PR, and product information into one citation-ready system.


Founder of Geol.ai

Senior builder at the intersection of AI, search, and blockchain. I design and ship agentic systems that automate complex business workflows. On the search side, I’m at the forefront of GEO/AEO (AI SEO), where retrieval, structured data, and entity authority map directly to AI answers and revenue. I’ve authored a whitepaper on this space and road-test ideas currently in production. On the infrastructure side, I integrate LLM pipelines (RAG, vector search, tool calling), data connectors (CRM/ERP/Ads), and observability so teams can trust automation at scale. In crypto, I implement alternative payment rails (on-chain + off-ramp orchestration, stable-value flows, compliance gating) to reduce fees and settlement times versus traditional processors and legacy financial institutions. A true Bitcoin treasury advocate. 18+ years of web dev, SEO, and PPC give me the full stack, from growth strategy to code. I’m hands-on (vibe coding on Replit/Codex/Cursor) and pragmatic: ship fast, measure impact, iterate.

Focus areas: AI workflow automation • GEO/AEO strategy • AI content/retrieval architecture • Data pipelines • On-chain payments • Product-led growth for AI systems

Let’s talk if you want to automate a revenue workflow, make your site/brand “answer-ready” for AI, or stand up crypto payments without breaking compliance or UX.
