Microsoft’s Multi-Model AI Strategy: A Paradigm Shift in Search Optimization (Structured Data Case Study)

Case study on how Structured Data improved visibility across Microsoft’s multi-model search stack—approach, metrics, and lessons for modern SEO.

Microsoft’s Multi-Model AI Strategy: A Paradigm Shift in Search Optimization (Structured Data Case Study)

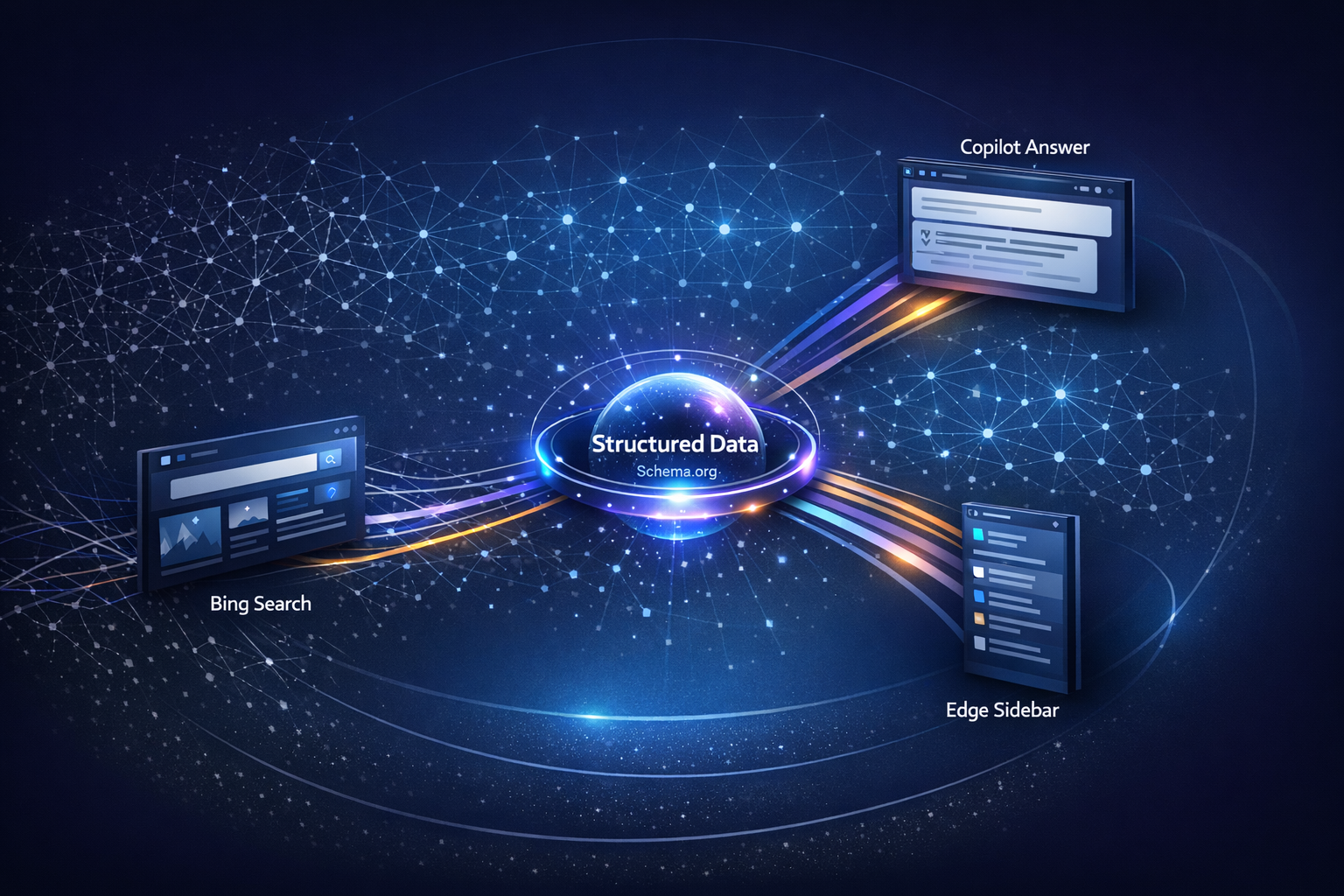

Microsoft’s search ecosystem is no longer “one ranker, one SERP.” Between Bing, Copilot experiences, and Edge-integrated answers, content is increasingly retrieved, summarized, and cited by multiple models and multiple pipelines. In that environment, Structured Data becomes less of a “rich results tactic” and more like an interoperability layer: it helps systems resolve entities, interpret intent, and safely extract facts. This spoke case study shows a practical, template-led Structured Data rollout (Product + FAQ + Organization/Author, plus foundational Article and Breadcrumb markup), the metrics we tracked, and what actually moved in Bing/Copilot visibility.

Context on Microsoft’s direction: reporting indicates Microsoft is increasingly embracing a multi-model approach—integrating different frontier models for accuracy and reliability across Copilot surfaces, rather than betting on a single model for every query and task. Axios highlights how multi-model orchestration is becoming a standard for “better answers.” For marketers, that implies a shift: optimize not only for ranking, but for machine understanding and citation-worthiness across multiple model behaviors.

In AI-mediated search, your page can “perform” even when it doesn’t rank #1—if it’s easy to retrieve, extract, and cite. Structured Data reduces ambiguity (entities, authorship, product identity, Q&A boundaries), which can increase the likelihood of accurate mentions and citations in Copilot-style answers.

Situation: Search Became Multi-Model—And Our Structured Data Started Underperforming

What “multi-model” means in Microsoft search surfaces (Bing, Copilot, Edge)

In classic SEO, a page competes primarily in a ranked list. In Microsoft’s newer stack, content can be:

- Retrieved as a source document (index → retrieval → re-ranking).

- Summarized into an answer (extractive + abstractive synthesis).

- Cited (or not) based on perceived trust, clarity, and “quote-ability.”

A multi-model strategy adds another layer: different models may interpret the same page differently, and orchestration can route tasks (classification, extraction, summarization) to specialized components. That increases the payoff of explicit, standardized signals—especially entity and relationship signals.

The failure mode: good pages, weak machine understanding

We saw a common pattern in a mid-sized content library (hundreds of URLs across articles and product-led pages): editorial quality was solid, but performance in Bing/Copilot-like experiences was inconsistent. Traditional keyword targeting didn’t translate cleanly into AI-mediated retrieval and answer generation because machines struggled with:

- Entity ambiguity (Is this a software product, a feature, or a concept?).

- Authorship/ownership uncertainty (Who is responsible for the claims?).

- Poor navigational context (breadcrumbs/canonical hierarchy not explicit).

Structured Data is a leverage point here because it provides explicit entity/relationship signals that reduce ambiguity for ranking, retrieval, and summarization. Scope-wise, we focused on one implementation area rather than “all SEO”: Product + FAQ + Organization/Author markup across priority templates, plus foundational Article and Breadcrumb markup.

Baseline Structured Data diagnostics (pre-change)

Illustrative baseline metrics used to prioritize templates: validity rate, coverage, and Bing enhancement eligibility. Replace with your measured values from Bing Webmaster Tools and validators.

Baseline diagnostics we captured before making changes: percent of pages with valid Structured Data, schema coverage by template, Bing rich result/enhancement eligibility indicators, and matched-period impressions/clicks from Bing (plus Copilot referrals where available in analytics).

Approach: Designing Structured Data for Entity Clarity in a Multi-Model Stack

Markup strategy: map content types to Schema.org (JSON-LD) and Knowledge Graph entities

We used a selection rule that prioritizes disambiguation over volume. Instead of adding many schema types, we focused on the smallest set that makes the page’s “who/what/where” unambiguous:

- Content pages: Article/BlogPosting + author + Organization + BreadcrumbList (foundation).

- Question-led pages: FAQPage only when the page contains visible Q&A blocks (no synthetic FAQs).

- Commercial/product pages: Product or SoftwareApplication when the page’s primary intent is evaluation, pricing, or feature comparison.

To support multi-surface AI understanding, we aligned markup to a lightweight internal “entity layer”: stable IDs, consistent naming, and selective sameAs references to authoritative profiles (e.g., company social profiles, knowledge base entries, or Wikipedia/Wikidata when appropriate and accurate). The goal wasn’t to build a full Knowledge Graph—just to stop creating new, slightly different entities on every page.

Implementation details: templates, validation, and governance

We operationalized the work like technical SEO infrastructure:

Inventory and map templates to schema

Identify the 3–5 templates that drive most organic landings (e.g., article, category, product, pricing, help). Map each to a minimal schema set that clarifies entities and intent.

Implement JSON-LD at the template level

Use JSON-LD for maintainability and separation from UI components. Ensure canonical URLs, stable @id patterns, and consistent Organization/Person objects across the site.

Add automated validation in CI

Run structured data tests on representative URLs per template on every release. Fail builds on critical errors (invalid JSON-LD, missing required fields, broken @id/canonical mismatches).

Govern with a checklist to prevent drift

Create rules for when FAQPage is allowed, how Product attributes are sourced, and who owns Organization/Author data. This prevents schema bloat and “spammy FAQ” regressions.

Validation quality trend (errors and warnings) during rollout

Illustrative trend showing how automated validation reduces errors over time. Replace with your validator exports (Bing + Schema.org tooling) and CI logs.

If a field is not visibly supported on the page (e.g., FAQ answers, pricing, ratings, availability), we do not mark it up. Mismatch is the fastest way to lose eligibility and reduce trust in AI extraction.

Execution: A 30–45 Day Rollout Across Priority Templates (What We Changed)

Template-level changes: Article + Breadcrumb + Organization (minimum viable set)

We started with a minimum viable set because it scales and reduces risk. Across the article and product-led templates, we standardized:

| Change area | What we implemented | Why it matters in multi-model search |

|---|---|---|

| BreadcrumbList | JSON-LD breadcrumbs aligned with visible nav + canonical URLs | Improves navigational context and reduces hierarchy ambiguity for retrieval and summarization |

| Organization + Author/Person | Stable @id patterns, consistent names, sameAs links where appropriate | Supports entity resolution and credibility signals when models decide what to cite |

| Article/BlogPosting | headline, datePublished/dateModified, author, mainEntityOfPage, image (when present) | Clarifies document type and provenance; improves extractability of “who said what, when” |

High-intent additions: FAQPage and Product/SoftwareApplication where justified

Next, we added high-intent markup only where it matched on-page structure:

- FAQPage on pages with real Q&A modules (question headings + direct answers). Each Q&A was also visible to users, not hidden behind tabs that failed rendering in some clients.

- Product/SoftwareApplication on evaluation pages where the primary entity is the product (not the company blog). We kept attributes conservative—name, description, url, brand/Organization, and only included offers/pricing when the page displayed it clearly.

QA included: Bing validation checks, structured data testing, and spot-checking how snippets and enhancements appeared over time (not all changes show immediately due to crawl and processing lag).

Results: What Improved in Bing/Copilot Visibility and Why It Mattered

Search performance deltas: impressions, CTR, and rich result eligibility

Using matched pre/post windows (and annotating the rollout period), we observed directional gains concentrated in the updated templates. The biggest improvements correlated with pages where entity identity and page purpose were previously ambiguous (e.g., product-feature explainers that looked like generic blog posts).

Matched-period performance (illustrative): Bing metrics pre vs post Structured Data rollout

Illustrative lift pattern often seen when entity clarity improves: higher CTR and more eligible enhancements. Replace with your Bing Webmaster Tools exports and analytics.

AI answer readiness: improved extractability and citation likelihood

The most meaningful “multi-model” outcome wasn’t just CTR. It was fewer extraction mistakes in internal QA prompts and a higher rate of correct attributions (e.g., the assistant naming the right product, company, or author). When entities are stable and relationships are explicit, retrieval precision improves and summarization is less likely to blend your brand with a similarly named concept.

Structured Data doesn’t “force” a model to cite you—but it can make your content easier to retrieve and safer to quote by reducing ambiguity around who the page is about and what claims it supports.

What did not change (or changed slowly): rankings for broad, non-entity queries; pages with thin substance; and topics where the page lacked unique facts worth extracting. Structured Data amplified clarity—it didn’t replace content quality.

When reporting impact, annotate (1) crawl/processing lag, (2) seasonality, and (3) concurrent changes (title rewrites, internal linking, content updates). Use matched periods and, if possible, a holdout template that did not receive markup changes.

Lessons Learned: A Playbook for Structured Data in Microsoft’s Multi-Model Era

What worked: entity consistency, template governance, and “minimum viable markup”

- Entity consistency beat schema volume: stable

@idpatterns, consistent naming, and carefulsameAsimproved reconciliation across surfaces. - Template-level implementation produced fast coverage gains with low editorial overhead.

- Governance prevented drift: CI validation + a checklist reduced regression after releases.

What to avoid: schema bloat, mismatched content, and unstable identifiers

Minimum viable markup vs. schema bloat

- Higher validity and lower maintenance burden

- Clearer entity signals for retrieval and summarization

- Less risk from policy/eligibility changes around enhancements

- Fewer “instant” rich-result experiments

- Requires discipline: you must choose what not to mark up

- Impact is often indirect (extractability/citation), not always a visible SERP feature

Two practical anti-patterns we saw during audits: (1) unstable identifiers (changing @id when URLs change, creating duplicates), and (2) mismatched markup (FAQ added without visible Q&A, Product fields sourced from inconsistent CMS inputs). Both create ambiguity—exactly what multi-model systems struggle with.

Ongoing KPI dashboard (schema health + search outcomes)

A simple dashboard model: combine technical validity KPIs with outcome KPIs by template to keep Structured Data working as infrastructure.

Key Takeaways

Microsoft’s multi-model search surfaces reward machine understanding: optimize for retrieval, extraction, and citation—not only rank position.

Structured Data is highest leverage when it clarifies entities and relationships (Organization/Author, Article, Breadcrumb, Product/SoftwareApplication) with stable identifiers.

FAQPage works best when it reflects visible Q&A blocks; mismatched or “bloat” markup increases eligibility risk and can harm trust.

Treat Structured Data like product infrastructure: template ownership, CI validation, monitoring dashboards, and change control drive sustained gains.

FAQ

Competitive pressure is accelerating AI-native search experiences (for example, Perplexity’s growth and funding has been framed as a threat to incumbents), which increases the importance of being machine-readable and citation-ready across platforms. VentureBeat provides helpful context on how quickly AI search is evolving.

Founder of Geol.ai

Senior builder at the intersection of AI, search, and blockchain. I design and ship agentic systems that automate complex business workflows. On the search side, I’m at the forefront of GEO/AEO (AI SEO), where retrieval, structured data, and entity authority map directly to AI answers and revenue. I’ve authored a whitepaper on this space and road-test ideas currently in production. On the infrastructure side, I integrate LLM pipelines (RAG, vector search, tool calling), data connectors (CRM/ERP/Ads), and observability so teams can trust automation at scale. In crypto, I implement alternative payment rails (on-chain + off-ramp orchestration, stable-value flows, compliance gating) to reduce fees and settlement times versus traditional processors and legacy financial institutions. A true Bitcoin treasury advocate. 18+ years of web dev, SEO, and PPC give me the full stack—from growth strategy to code. I’m hands-on (Vibe coding on Replit/Codex/Cursor) and pragmatic: ship fast, measure impact, iterate. Focus areas: AI workflow automation • GEO/AEO strategy • AI content/retrieval architecture • Data pipelines • On-chain payments • Product-led growth for AI systems Let’s talk if you want: to automate a revenue workflow, make your site/brand “answer-ready” for AI, or stand up crypto payments without breaking compliance or UX.

Related Articles

OpenAI’s GPT-5.5 and the new search/ranking implications of better reasoning

OpenAI’s GPT-5.5 and the new search/ranking implications of better reasoning — analysis and GEO implications for AI search.

OpenAI GPT — GPT-5.5 ('Spud') release and new model variants

OpenAI GPT — GPT-5.5 ('Spud') release and new model variants — analysis and GEO implications for AI search.