Model Context Protocol: Standardizing AI Integration Across Platforms

Why Model Context Protocol (MCP) is the missing standard for reliable AI integrations—and what it changes for Generative Engine Optimization teams.

Model Context Protocol (MCP) matters because AI products are rapidly shifting from “chat that answers” to “agents that do”—and that shift breaks when every tool, data source, and permission model is integrated differently. MCP is emerging as a practical standard for how models discover tools, exchange context, and return outputs with consistent provenance. For Generative Engine Optimization (GEO) teams, that consistency isn’t just engineering hygiene: it directly affects whether answer engines can reliably retrieve your content, attribute it correctly, and cite it with confidence across platforms.

When tool/context interfaces are standardized, you reduce retrieval failures and attribution breakage—two of the most common reasons brands lose AI citations. MCP can become part of the technical foundation for improving AI Visibility and Citation Confidence by making context, provenance, and permissions more reliable end-to-end.

Featured-snippet setup: What is Model Context Protocol (MCP) and why it matters now

Definition in one paragraph (snippet-ready)

Model Context Protocol (MCP) is an open protocol, introduced by Anthropic in late 2024, that standardizes how AI models and agents discover and use external tools, data sources, and actions across platforms. Instead of building one-off “connectors” for every app and model, teams expose tools through a repeatable contract (named tools with declared input schemas and structured results), which gives authentication, permissions, and provenance a consistent place to live. The result is tool access that is more predictable and auditable, improving reliability and governance as AI systems become more integrated with real workflows.

Reference background (high-level): Wikipedia’s MCP overview describes MCP adoption and its role in enabling integration and data sharing between AI systems and external tools.

The integration problem MCP is trying to solve

Most organizations now run dozens (often hundreds) of SaaS applications, each with its own API patterns, auth methods, data schemas, and rate limits. AI teams then replicate effort across models and channels: one integration path for a chatbot, another for an internal agent, another for a browser-like assistant, and another for analytics. The result is integration sprawl: brittle glue code, inconsistent permissions, inconsistent “source of truth” selection, and inconsistent citation/provenance behavior—exactly the conditions that make AI outputs unreliable and hard to govern at scale.

Opinionated thesis

MCP is becoming table stakes for scalable AI productization. Without a standard tool/context contract, integrations remain bespoke, fragile, and difficult to audit—especially as answer engines evolve into action engines.

This shift is visible in how major AI experiences are converging on “AI-native” browsing and action flows (e.g., AI browsers and assistants that can navigate, retrieve, and transact). Coverage of AI-first browsing and integrated actions underscores that the interface is no longer just text: it’s tool execution. See: OpenAI’s ChatGPT Atlas browser coverage and Perplexity’s Comet browser overview, plus action-oriented AI search experiences like Perplexity’s “Buy with Pro”.

| Integration sprawl: quick benchmark stats (use as planning inputs) | What it implies for MCP |
|---|---|
| Organizations use ~112 SaaS apps on average (Okta “Businesses at Work” report). | Even “just” 20 high-value tools can create dozens of model/channel-specific connectors without a standard contract. |
| Integration and maintenance costs often dominate lifecycle effort (engineering time shifts from building features to keeping connectors working). | MCP-style standardization is primarily a reliability and governance play: fewer bespoke patterns to test, secure, and audit. |
| Source: Okta Businesses at Work (SaaS app count). | Use this as a baseline for inventorying “tool surface area” before you design MCP endpoints. |

Okta reference: Businesses at Work.

The real bottleneck: Context fragmentation is killing reliability (and trust)

Teams often blame model quality when outputs go wrong. In practice, many production failures trace back to context fragmentation: the model can’t reliably discover the right tool, can’t access the right data under the right permissions, can’t interpret the schema consistently, or can’t preserve provenance so citations survive the generation pipeline.

Why “prompt + plugin” doesn’t scale

- Tool discovery is inconsistent: different models and runtimes expose different “capabilities,” naming conventions, and parameter contracts.

- Permissions drift: a tool that works in a dev sandbox fails in production due to auth scope, token lifecycle, or user impersonation differences.

- Schema drift: fields change, IDs change, “customer” means different things across CRM vs billing vs support systems.

- Provenance is bolted on: citations are added as an afterthought, not a first-class output of tool calls.

Failure modes: wrong tool, wrong data, wrong citation

Common AI integration failure modes (illustrative audit baseline)

Example distribution from a lightweight internal audit of 50 production answers. Use this as a template: categorize failures, then re-measure after standardizing tool/context contracts (e.g., MCP).

Sample 50 AI answers across your top query clusters. For each answer, score: (1) retrieval success (did it use the right source?), (2) citation accuracy (do links support the claim?), (3) provenance completeness (can you trace tool calls and documents used?), and (4) entity consistency (are key entities named and disambiguated consistently). This gives you a baseline to justify standardization work and to measure post-MCP impact.
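The scoring step above can be sketched in a few lines. This is a minimal illustration, assuming each criterion is scored pass/fail; the `AuditedAnswer` class, its field names, and the four sample rows are hypothetical placeholders, not data from a real audit.

```python
from dataclasses import dataclass


@dataclass
class AuditedAnswer:
    """One sampled AI answer, scored pass/fail on the four audit criteria."""
    query: str
    retrieval_ok: bool    # did it use the right source?
    citation_ok: bool     # do the links support the claim?
    provenance_ok: bool   # can tool calls and documents be traced?
    entities_ok: bool     # are key entities named consistently?


CRITERIA = ("retrieval_ok", "citation_ok", "provenance_ok", "entities_ok")


def baseline_rates(answers):
    """Return the pass rate (0.0 to 1.0) for each criterion."""
    if not answers:
        raise ValueError("audit sample is empty")
    return {
        name: sum(getattr(a, name) for a in answers) / len(answers)
        for name in CRITERIA
    }


# Placeholder rows only; a real audit would score about 50 answers.
sample = [
    AuditedAnswer("pricing tiers", True, True, False, True),
    AuditedAnswer("refund policy", True, False, False, True),
    AuditedAnswer("api rate limits", False, False, False, True),
    AuditedAnswer("sso setup", True, True, True, False),
]

rates = baseline_rates(sample)
for name, rate in rates.items():
    print(f"{name}: {rate:.0%}")
```

Keeping the scores in one flat structure makes the re-measurement after standardization a matter of running the same function on a new sample.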

How this maps to GEO outcomes:

- Lower AI Visibility: if retrieval fails or tool selection is wrong, your content never enters the model’s evidence set.

- Lower Citation Confidence: if provenance is incomplete, citations are missing, or sources don’t support claims, answer engines avoid citing (or cite competitors).

- Weaker Knowledge Graph alignment: inconsistent entity/relationship context leads to inconsistent naming, attributes, and linkages across channels.

Opinionated take: MCP is the “HTTP moment” for AI toolchains—especially for GEO workflows

The analogy is imperfect, but useful: HTTP didn’t make content good—it made content interoperable. MCP doesn’t make your data clean or your content strategy coherent. It makes the tool layer interoperable, so models can reliably call functions, retrieve evidence, and return outputs with traceable provenance across platforms.

Standardization as a force multiplier for Answer Engine Optimization

In GEO, “winning” often comes down to repeatability: can the system retrieve the same canonical facts, from the same authoritative sources, with the same entity framing, every time? Standardization helps you move from artisanal prompt-crafting to industrial workflows where retrieval and citations are engineered outputs—not happy accidents.

MCP-style standardization vs bespoke integrations (GEO lens)

Advantages of standardization:

- Reusable tool contracts across models/channels (less duplicated integration work)

- More consistent provenance and logging (better citation traceability)

- Clearer boundaries for permissions and governance (safer agentic actions)

- Easier to enforce entity schemas and structured outputs (better Knowledge Graph alignment)

Trade-offs:

- Upfront design work: tool schemas, auth patterns, provenance requirements

- Risk of “standardizing the wrong thing” if your information architecture is weak

- May not fit extreme performance or specialized compliance constraints without extensions

How MCP can improve retrieval, attribution, and governance

- Retrieval: standard tool discovery + schema-aligned responses reduce “wrong tool/wrong query” errors and make it easier to implement canonical retrieval paths.

- Attribution: if tools return evidence bundles (document IDs, URLs, excerpts, timestamps), citations become composable and verifiable instead of reconstructed post-hoc.

- Governance: consistent auth patterns, audit logs, and tool boundaries lower the risk of silent permission drift and make compliance reviews less painful.
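To make "composable" concrete, here is a rough sketch of citations built directly from evidence bundles rather than reconstructed afterwards. The `Evidence` fields, the `cite` helper, the example.com URLs, and the sample claims are all illustrative assumptions; MCP itself does not prescribe this shape.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Evidence:
    """One item in an evidence bundle (field names are illustrative)."""
    doc_id: str
    url: str
    excerpt: str
    retrieved_at: str  # ISO 8601 timestamp


def cite(claims):
    """Attach numbered citation markers to claims.

    `claims` is a list of (claim_text, Evidence) pairs. Each distinct
    doc_id is numbered once, in order of first use.
    Returns (annotated_claims, references).
    """
    numbering = {}   # doc_id -> citation number
    references = []
    annotated = []
    for text, ev in claims:
        if ev.doc_id not in numbering:
            numbering[ev.doc_id] = len(numbering) + 1
            references.append(
                f"[{numbering[ev.doc_id]}] {ev.url} (retrieved {ev.retrieved_at})"
            )
        annotated.append(f"{text} [{numbering[ev.doc_id]}]")
    return annotated, references


# Placeholder evidence and claims, for illustration only.
pricing = Evidence("doc-001", "https://example.com/pricing",
                   "Placeholder excerpt about plans.", "2025-01-01T00:00:00Z")
refunds = Evidence("doc-002", "https://example.com/refunds",
                   "Placeholder excerpt about refunds.", "2025-01-01T00:00:00Z")

annotated, references = cite([
    ("The Pro plan includes SSO.", pricing),
    ("Refunds follow the published policy.", refunds),
    ("The Pro plan is billed annually.", pricing),
])
print("\n".join(annotated + references))
```

Because every claim carries its own evidence object, a citation can only appear if a tool actually returned the source, which is the property that makes it verifiable.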

Benchmark template: time-to-integrate and incident rate before vs after standardization

Use this KPI pattern to quantify MCP impact. Plot integration cycle time (days) and change-failure incidents (per month) across two quarters.
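If you track these two series in a spreadsheet or log export, the before/after comparison is simple arithmetic. The sketch below assumes you summarize cycle time by its median and incidents by their monthly mean; the function names and every number in it are hypothetical placeholders, not measured results.

```python
from statistics import median


def kpi_summary(cycle_days, incidents_per_month):
    """Summarize one quarter: median cycle time and mean monthly incidents."""
    return {
        "median_cycle_days": median(cycle_days),
        "mean_incidents_per_month": sum(incidents_per_month) / len(incidents_per_month),
    }


def percent_change(before, after):
    """Relative change from before to after, in percent (negative = reduction)."""
    return (after - before) / before * 100


# Placeholder inputs: one value per integration (days) and per month (incidents).
before = kpi_summary(cycle_days=[12, 15, 9, 20], incidents_per_month=[4, 6, 5])
after = kpi_summary(cycle_days=[6, 8, 5, 7], incidents_per_month=[2, 3, 1])

for key in before:
    change = percent_change(before[key], after[key])
    print(f"{key}: {before[key]} -> {after[key]} ({change:+.1f}%)")
```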

If you need a concrete “why now,” look at how leading models are being positioned for search and tool use. For example, coverage of newer model generations emphasizes stronger reasoning and search-like behaviors (see Claude model overview: https://en.wikipedia.org/wiki/Claude_(language_model)). As capabilities rise, the limiting factor becomes integration reliability and governance—not raw model IQ.

What MCP changes in practice: A reference architecture for standardized AI integration

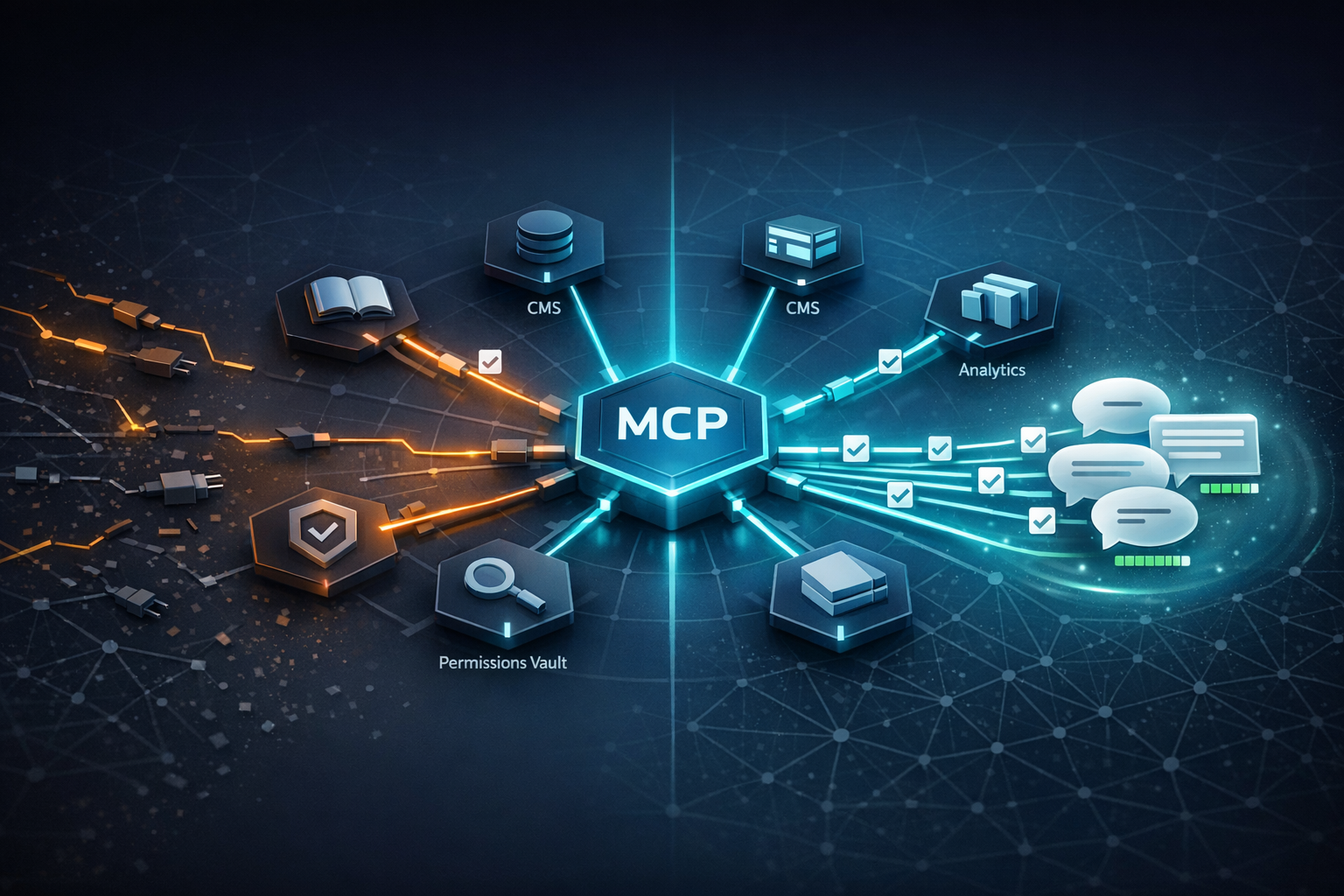

MCP in the stack (model ↔ context broker ↔ tools/data ↔ governance)

Reference architecture: MCP-enabled AI integration (conceptual)

Conceptual stack showing how models/agents interact with MCP servers to access tools and data with consistent auth, schemas, and provenance logging.

A practical way to think about MCP is as the contract layer between models and the messy real world. You expose a set of tools (e.g., “searchDocs,” “getProductSpec,” “fetchPricingPolicy,” “lookupEntity”) through MCP servers, and the AI application connects to them as an MCP client. A context broker (sometimes a separate service, sometimes embedded in the application; the term describes a role here, not a component the protocol itself defines) enforces authentication, policy, and routing. Crucially, tool responses should return not only data, but also provenance: where the data came from, when it was retrieved, and what identifiers/URLs support citations.
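As a rough illustration of that contract, the sketch below uses plain Python dictionaries rather than any SDK. The tool descriptor follows the general shape MCP uses for tools (a name, a description, and a JSON Schema `inputSchema`), and the result uses a `content` list with an `isError` flag. The `provenance` block, its field names, the handler name, and the example.com URL are assumptions added for this article's purposes, not part of the protocol.

```python
import json
from datetime import datetime, timezone

# Tool descriptor: what a server would advertise when tools are listed.
SEARCH_DOCS_TOOL = {
    "name": "searchDocs",
    "description": "Search the canonical documentation set.",
    "inputSchema": {
        "type": "object",
        "properties": {"query": {"type": "string"}},
        "required": ["query"],
    },
}


def call_search_docs(arguments):
    """Hypothetical handler: returns data and provenance in one payload."""
    query = arguments["query"]
    payload = {
        "results": [
            {"title": "Pricing policy", "excerpt": f"Placeholder match for: {query}"}
        ],
        # Assumed provenance fields; choose names that fit your own contract.
        "provenance": {
            "source_url": "https://example.com/docs/pricing-policy",
            "document_id": "doc-001",
            "retrieved_at": datetime.now(timezone.utc).isoformat(),
        },
    }
    # Tool result: a list of content items plus an error flag.
    return {
        "content": [{"type": "text", "text": json.dumps(payload)}],
        "isError": False,
    }


result = call_search_docs({"query": "enterprise pricing"})
decoded = json.loads(result["content"][0]["text"])
print(decoded["provenance"]["document_id"])
```

The design point is that provenance travels inside the same response as the data, so a downstream citation step never has to guess where a fact came from.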

Where structured data and Knowledge Graphs fit

MCP doesn’t replace structured data or a Knowledge Graph—it makes them easier to use consistently. The key design move for GEO teams is to ensure MCP tools return schema-aligned entities and relationships (even if the underlying system is unstructured). For example:

- A “getEntity” tool returns: canonical name, aliases, unique ID, type, attributes, and authoritative URLs.

- A “searchPolicyDocs” tool returns: ranked results plus evidence snippets and source metadata required for citations.

- A “comparePlans” tool returns: a normalized comparison table with explicit field definitions (preventing schema drift in generated answers).

If you want citations, require every MCP tool response to include citation-ready metadata (URL, title, publisher/owner, timestamp, and a stable document or entity ID). Don’t rely on the model to “remember” where something came from.
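One way to enforce that requirement is a small check that runs on every tool response before it reaches the model. This is a sketch under assumptions: the field names (`url`, `title`, `owner`, `timestamp`, `stable_id`), the `citation` key, and the sample “getEntity” payload are hypothetical and should be mapped to your own schema.

```python
REQUIRED_CITATION_FIELDS = ("url", "title", "owner", "timestamp", "stable_id")


def missing_citation_fields(response):
    """Return the required citation fields that are absent or empty."""
    meta = response.get("citation", {})
    return [field for field in REQUIRED_CITATION_FIELDS if not meta.get(field)]


# Placeholder "getEntity" response with the entity fields listed above.
entity_response = {
    "canonical_name": "Example Widget Pro",
    "aliases": ["Widget Pro"],
    "id": "ent-42",
    "type": "Product",
    "attributes": {"tier": "pro"},
    "citation": {
        "url": "https://example.com/products/widget-pro",
        "title": "Example Widget Pro",
        "owner": "Example Inc.",
        "timestamp": "2025-01-01T00:00:00Z",
        "stable_id": "ent-42",
    },
}

complete = missing_citation_fields(entity_response)

# Simulate a response where the timestamp was dropped upstream.
stale = dict(entity_response, citation=dict(entity_response["citation"], timestamp=""))
incomplete = missing_citation_fields(stale)

print(complete, incomplete)
```

Rejecting or flagging incomplete responses at this boundary is cheaper than discovering missing citations during an answer audit.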

Implementation checklist (90-day plan)

Days 1–15: Inventory tools and define “citation-critical” journeys

List the top 10–20 tools your AI experiences touch (CMS, docs, web search, analytics, CRM, support KB). Identify the top GEO query clusters and map which tools must be called to answer them with verifiable evidence.

Days 16–35: Define entity schema + provenance contract

Define the minimum entity fields your answers must preserve (IDs, canonical names, types, key attributes). Specify provenance fields required for citations (URL, excerpt, timestamp, owner). Align these to how your content is published and updated.

Days 36–60: Implement 2–3 high-value MCP endpoints

Start with endpoints that reduce the biggest failure modes: (1) canonical retrieval/search, (2) entity lookup, (3) policy/spec fetch. Ensure consistent error handling (auth failures, rate limits, partial data) so the model can degrade gracefully.
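Consistent error handling is easier to reason about with a single result envelope shared by every endpoint. The sketch below is one possible shape, not a prescribed one: `ToolErrorKind`, `ok_result`, `error_result`, `next_step`, and the step names are all hypothetical.

```python
from enum import Enum


class ToolErrorKind(str, Enum):
    AUTH_FAILED = "auth_failed"
    RATE_LIMITED = "rate_limited"
    PARTIAL_DATA = "partial_data"


def ok_result(data):
    """Envelope for a fully successful tool call."""
    return {"ok": True, "data": data, "error": None}


def error_result(kind, message, data=None, retry_after_s=None):
    """Envelope for a failed or degraded call; `data` may hold partial results."""
    return {
        "ok": False,
        "data": data,
        "error": {"kind": kind.value, "message": message, "retry_after_s": retry_after_s},
    }


def next_step(result):
    """Decide how the caller should degrade, based only on the envelope."""
    if result["ok"]:
        return "answer"
    kind = result["error"]["kind"]
    if kind == ToolErrorKind.RATE_LIMITED.value:
        return "retry_later"
    if kind == ToolErrorKind.PARTIAL_DATA.value and result["data"]:
        return "answer_with_caveat"
    # Auth failures and anything unrecognized: do not guess at an answer.
    return "decline_and_explain"


limited = error_result(ToolErrorKind.RATE_LIMITED, "quota exceeded", retry_after_s=30)
partial = error_result(ToolErrorKind.PARTIAL_DATA, "2 of 3 sources responded",
                       data=[{"doc_id": "doc-001"}])
denied = error_result(ToolErrorKind.AUTH_FAILED, "token expired")

print(next_step(limited), next_step(partial), next_step(denied))
```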

Days 61–75: Add logging, audits, and guardrails

Instrument tool-call success rate, latency, and “evidence attached” rate. Implement permission reviews, least-privilege scopes, and audit trails for sensitive tools. Treat tool execution as production software, not experimentation.
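The three metrics named above can be derived from a plain tool-call log. This sketch assumes each log record carries `ok`, `latency_ms`, and `evidence_ids`; those field names and the four sample records are hypothetical placeholders.

```python
from statistics import median


def tool_call_metrics(calls):
    """Compute success rate, median latency, and evidence-attached rate."""
    if not calls:
        raise ValueError("no tool calls logged")
    successes = [c for c in calls if c["ok"]]
    # Only successful calls can meaningfully carry evidence.
    with_evidence = [c for c in successes if c.get("evidence_ids")]
    return {
        "success_rate": len(successes) / len(calls),
        "median_latency_ms": median(c["latency_ms"] for c in calls),
        "evidence_attached_rate": (
            len(with_evidence) / len(successes) if successes else 0.0
        ),
    }


# Placeholder log records, for illustration only.
log = [
    {"tool": "searchDocs", "ok": True, "latency_ms": 120, "evidence_ids": ["doc-001"]},
    {"tool": "getEntity", "ok": True, "latency_ms": 90, "evidence_ids": ["ent-42"]},
    {"tool": "searchDocs", "ok": True, "latency_ms": 300, "evidence_ids": []},
    {"tool": "fetchPricingPolicy", "ok": False, "latency_ms": 210, "evidence_ids": []},
]

metrics = tool_call_metrics(log)
print(metrics)
```

Measuring the evidence-attached rate against successful calls only keeps outages from masking a provenance gap.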

Days 76–90: Measure GEO outcomes and expand

Re-run the 50-answer audit. Track citation accuracy, retrieval success, and answer consistency across platforms. Expand MCP coverage to the next set of tools only after you can show measurable improvement in reliability and provenance completeness.

Counterpoint: Standardization can backfire—here’s where MCP won’t save you

The risks: monoculture, leaky abstractions, and security theatre

Standardization is not a substitute for good data, good content, or good governance. If your underlying sources are contradictory, outdated, or poorly structured, MCP will help you retrieve the wrong thing more reliably. And if you standardize without real policy enforcement, you can create “security theatre”: logs exist, but no one reviews them; scopes exist, but are overly broad; approvals exist, but are rubber-stamped.

A standard interface can expand the impact of a mis-scoped permission. Design for least privilege, tool-level authorization, and continuous audits—especially for action tools (purchases, publishing, CRM updates).
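Tool-level authorization can start as a deny-by-default lookup that runs before any tool executes. The sketch below is a simplified illustration: the scope strings, the `TOOL_REQUIRED_SCOPES` table, and the “updateCrmRecord” and “publishPage” tool names are hypothetical, and a production system would also verify who issued the scopes.

```python
# Each tool declares the narrowest scope set it needs (hypothetical values).
TOOL_REQUIRED_SCOPES = {
    "searchDocs": {"docs:read"},
    "updateCrmRecord": {"crm:write"},
    "publishPage": {"cms:publish"},
}


class PermissionDenied(Exception):
    """Raised when a caller lacks the scopes a tool requires."""


def authorize(tool_name, granted_scopes):
    """Allow the call only if every required scope was granted."""
    required = TOOL_REQUIRED_SCOPES.get(tool_name)
    if required is None:
        # Deny by default: unregistered tools are never callable.
        raise PermissionDenied(f"unknown tool: {tool_name}")
    missing = required - set(granted_scopes)
    if missing:
        raise PermissionDenied(f"{tool_name} requires {sorted(missing)}")


def is_allowed(tool_name, granted_scopes):
    """Boolean wrapper, convenient for audits and tests."""
    try:
        authorize(tool_name, granted_scopes)
        return True
    except PermissionDenied:
        return False


read_only_agent = {"docs:read"}
print(is_allowed("searchDocs", read_only_agent))
print(is_allowed("publishPage", read_only_agent))
```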

When custom integrations are still justified

- Highly specialized workflows with unique domain logic that doesn’t generalize across teams or platforms.

- Extreme performance constraints (ultra-low latency) where a standard broker layer adds unacceptable overhead.

- Compliance requirements that demand bespoke controls, isolation boundaries, or specialized auditing beyond your MCP stack today.

Call to action for Generative Engine Optimization teams: Build for citations, not just completions

The MCP-first GEO playbook

- Identify “citation-critical” answers: the queries where being cited changes revenue, trust, or adoption.

- Expose canonical sources as tools: make the “right place to look” a first-class MCP tool, not a prompt instruction.

- Require evidence bundles: every tool response should carry citation-ready metadata and stable identifiers.

- Standardize entity outputs: enforce consistent entity naming, types, and attributes so answers align with your Knowledge Graph strategy.

- Measure what answer engines reward: citation rate, citation accuracy, retrieval success, and cross-platform answer consistency.

| GEO scorecard metric | Baseline (example) | Target for “ready to scale” |
|---|---|---|
| Retrieval success rate (tool returns correct canonical source) | 70% | ≥ 90% |
| Citation rate (answers that include at least one verifiable citation) | 55% | ≥ 80% |
| Citation accuracy (citations support the claim) | 60% | ≥ 90% |
| Provenance completeness (tool calls logged + evidence IDs stored) | 40% | ≥ 95% |
| Answer consistency (same query across platforms yields aligned facts/entities) | Mixed | Defined variance thresholds + monitoring |
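The quantitative rows of the scorecard lend themselves to an automated gate. The sketch below uses the example baseline and target values from the table; the metric keys and the `gaps_to_target` helper are hypothetical names, and answer consistency is left out because its threshold is defined per team.

```python
# Targets and example baseline taken from the scorecard rows above.
TARGETS = {
    "retrieval_success": 0.90,
    "citation_rate": 0.80,
    "citation_accuracy": 0.90,
    "provenance_completeness": 0.95,
}

BASELINE = {
    "retrieval_success": 0.70,
    "citation_rate": 0.55,
    "citation_accuracy": 0.60,
    "provenance_completeness": 0.40,
}


def gaps_to_target(measured, targets):
    """Return the shortfall for each metric that is below its target."""
    return {
        name: round(target - measured[name], 2)
        for name, target in targets.items()
        if measured[name] < target
    }


gaps = gaps_to_target(BASELINE, TARGETS)
ready_to_scale = not gaps

for name, gap in gaps.items():
    print(f"{name}: {gap:.0%} below target")
print("ready to scale:", ready_to_scale)
```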

Expert quote opportunities and what to ask

- Platform engineer: “What integration work disappeared after standardizing tool contracts? What new failure modes appeared?”

- Security leader: “How do you enforce least privilege for tools and audit agentic actions? What’s your incident response plan for tool misuse?”

- SEO/GEO lead: “Which query clusters saw the biggest lift in citation rate and consistency once provenance became mandatory?”

Key Takeaways

- MCP standardizes how models/agents discover and use tools, data, and actions, making context handling (auth, schemas, provenance) more consistent across platforms.

- Most AI reliability failures in production come from context fragmentation (wrong tool, wrong data, missing provenance), not just “model hallucinations.”

- For GEO, MCP is a leverage point: standardized retrieval and evidence bundles can improve AI Visibility and Citation Confidence by reducing attribution failures.

- Standardization isn’t magic: you still need clean sources, entity modeling, and real governance (least privilege + audits) to avoid scaling mistakes.

Founder of Geol.ai

Senior builder at the intersection of AI, search, and blockchain. I design and ship agentic systems that automate complex business workflows. On the search side, I’m at the forefront of GEO/AEO (AI SEO), where retrieval, structured data, and entity authority map directly to AI answers and revenue. I’ve authored a whitepaper on this space and road-test ideas currently in production. On the infrastructure side, I integrate LLM pipelines (RAG, vector search, tool calling), data connectors (CRM/ERP/Ads), and observability so teams can trust automation at scale. In crypto, I implement alternative payment rails (on-chain + off-ramp orchestration, stable-value flows, compliance gating) to reduce fees and settlement times versus traditional processors and legacy financial institutions. A true Bitcoin treasury advocate. 18+ years of web dev, SEO, and PPC give me the full stack—from growth strategy to code. I’m hands-on (Vibe coding on Replit/Codex/Cursor) and pragmatic: ship fast, measure impact, iterate. Focus areas: AI workflow automation • GEO/AEO strategy • AI content/retrieval architecture • Data pipelines • On-chain payments • Product-led growth for AI systems Let’s talk if you want: to automate a revenue workflow, make your site/brand “answer-ready” for AI, or stand up crypto payments without breaking compliance or UX.
