The Complete Guide to Claude AI and Anthropic Search Optimization

Learn how to optimize content for Claude and Anthropic Search with a proven methodology, step-by-step workflow, comparisons, mistakes to avoid, and FAQs.

By Kevin Fincel, Founder (Geol.ai)

AI-first discovery is no longer a “future channel.” It’s already changing how buyers research, how users self-serve, and how brands earn trust online. We’re watching a structural shift: from ranking pages to being selected as a source inside synthesized answers.

OpenAI’s SearchGPT framing made the direction explicit: conversational search with real-time web data, follow-up questions, and source links—positioned as a direct challenge to traditional search journeys. (washingtonpost.com)

Anthropic has added web search to Claude, and Claude can decide when to search and provide citations. Reporting has suggested Claude’s web search may be powered by Brave Search. (anthropic.com, docs.claude.com, techcrunch.com, opentools.ai)

**Executive framing: what’s changing (and what to optimize for)**

- From rankings to selection: The competitive unit shifts from “top 10 blue links” to “being chosen as a cited ingredient” inside synthesized answers.

- Search is becoming conversational + sourced: SearchGPT is framed around follow-ups and links to sources, making citation readiness a product-aligned optimization target. (washingtonpost.com)

- Claude emphasizes conservative reliability: Public descriptions position Claude’s web search as selectively activated for complex/recent queries, prioritizing precision and transparency. (opentools.ai)

This guide is the executive-level, operational playbook we wish we had when we started testing “answer engine optimization” (AEO) in earnest. It’s written for decision-makers who need a defensible strategy and for practitioners who need a repeatable workflow.

Prerequisites: What You Need Before Optimizing for Claude and Anthropic Search

Define your goals: visibility, citations, conversions, or support deflection

In AI answer environments, “traffic” is no longer the only (or even primary) outcome. The first decision is what you’re optimizing for:

- Visibility in answers (brand presence even without clicks)

- Citations (your URLs referenced as sources)

- Conversions (assisted signups, demo requests, purchases)

- Support deflection (fewer tickets, faster resolution)

This matters because Claude-style experiences and AI search experiences can satisfy intent without a click—meaning your KPI stack must reflect in-answer outcomes, not just sessions.

Actionable recommendation: Pick one primary outcome and 2–3 secondary outcomes, then define a single “north star” metric (e.g., citation rate across priority queries or support ticket deflection rate).

Inventory your content: docs, blog, help center, product pages

We’ve found “AI visibility” is disproportionately driven by a small subset of pages:

- Pricing, plans, and packaging pages

- Policies (security, privacy, compliance, refunds)

- Product documentation and API references

- Help center troubleshooting and “how to” pages

- Category-defining explainers (your “pillar” pages)

AI systems tend to prefer content that is stable, explicit, and easy to extract.

Actionable recommendation: Create a “source-of-truth list” of 25–50 URLs you want models to cite, then treat those pages like product surfaces (with owners, review cadence, and change logs).
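One way to make the "owners, review cadence" idea concrete is a small registry that flags pages whose review is overdue. This is a minimal sketch, not a prescribed tool: the `SourceOfTruthPage` class, its field names, and the example URLs are all hypothetical.

```python
from dataclasses import dataclass
from datetime import date, timedelta


@dataclass
class SourceOfTruthPage:
    # Hypothetical record for one URL on the source-of-truth list.
    url: str
    owner: str
    review_every_days: int
    last_reviewed: date

    def is_overdue(self, today: date) -> bool:
        # Overdue once more than the full review interval has elapsed.
        return today - self.last_reviewed > timedelta(days=self.review_every_days)


def overdue_pages(pages, today):
    """Return the URLs whose scheduled review has lapsed."""
    return [p.url for p in pages if p.is_overdue(today)]


# Illustrative data only.
registry = [
    SourceOfTruthPage("https://example.com/pricing", "growth", 30, date(2025, 1, 1)),
    SourceOfTruthPage("https://example.com/security", "security", 90, date(2025, 1, 1)),
]
print(overdue_pages(registry, today=date(2025, 2, 15)))
```

In practice the same fields could live in a spreadsheet; the point is that every URL has a named owner and a review date that something checks.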

Technical readiness: crawlability, indexation, structured data, and access controls

Even if Claude or an “Anthropic Search” surface uses different retrieval methods than Google, the fundamentals still matter:

- Pages must be accessible (no accidental blocks, broken rendering, or inconsistent canonicals)

- Pages must be fast and stable (avoid constantly shifting content blocks)

- Your canonical signals must be consistent (avoid multiple “truths” for pricing/policies)

Actionable recommendation: Run a baseline audit and record:

- Number of indexable pages

- % pages with canonical tags

- average page speed for top 50 “source-of-truth” URLs

- current organic traffic share for target query clusters
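The canonical-tag metric in the audit above can be computed offline from saved HTML. The sketch below uses only Python's standard-library `html.parser`; the `canonical_coverage` helper and the sample pages are assumptions for illustration, and a real audit would first fetch or export the HTML for your source-of-truth URLs.

```python
from html.parser import HTMLParser


class CanonicalFinder(HTMLParser):
    """Collects the href of every <link rel="canonical"> tag in a document."""

    def __init__(self):
        super().__init__()
        self.canonicals = []

    def handle_starttag(self, tag, attrs):
        if tag != "link":
            return
        attr = dict(attrs)
        rel = (attr.get("rel") or "").lower().split()
        if "canonical" in rel and attr.get("href"):
            self.canonicals.append(attr["href"])


def canonical_of(html):
    """Return the first canonical URL declared in the HTML, or None."""
    finder = CanonicalFinder()
    finder.feed(html)
    return finder.canonicals[0] if finder.canonicals else None


def canonical_coverage(pages):
    """pages maps URL -> HTML; returns the percentage of pages with a canonical tag."""
    if not pages:
        return 0.0
    tagged = sum(1 for html in pages.values() if canonical_of(html))
    return 100.0 * tagged / len(pages)


# Illustrative input: one page declares a canonical, one does not.
sample_pages = {
    "https://example.com/pricing": '<head><link rel="canonical" href="https://example.com/pricing"></head>',
    "https://example.com/plans": "<head><title>Plans</title></head>",
}
print(f"{canonical_coverage(sample_pages):.0f}% of pages have a canonical tag")
```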

---

How Claude and Anthropic Search Work (What’s Different From Traditional SEO)

Claude vs. “Anthropic Search”: clarifying products, surfaces, and user journeys

Executives keep asking: “Are we optimizing for Claude or for Anthropic Search?” The practical answer is: you’re optimizing for AI-mediated discovery across multiple surfaces.

- Claude is the assistant experience users interact with.

- “Search” functionality is increasingly a capability layer—web retrieval, summarization, and citations—activated when needed.

OpenTools describes Claude’s web search as selectively activated for complex or recent queries, emphasizing reliability and transparency, and drawing on the Brave Search API. (opentools.ai)

Meanwhile, OpenAI demonstrated SearchGPT as a search box + conversational follow-ups + links to sources, initially rolled out to a limited group before broader integration into ChatGPT. (washingtonpost.com)

Actionable recommendation: Treat “Claude optimization” as source engineering: build pages that are easy to retrieve, easy to quote, and hard to misinterpret.

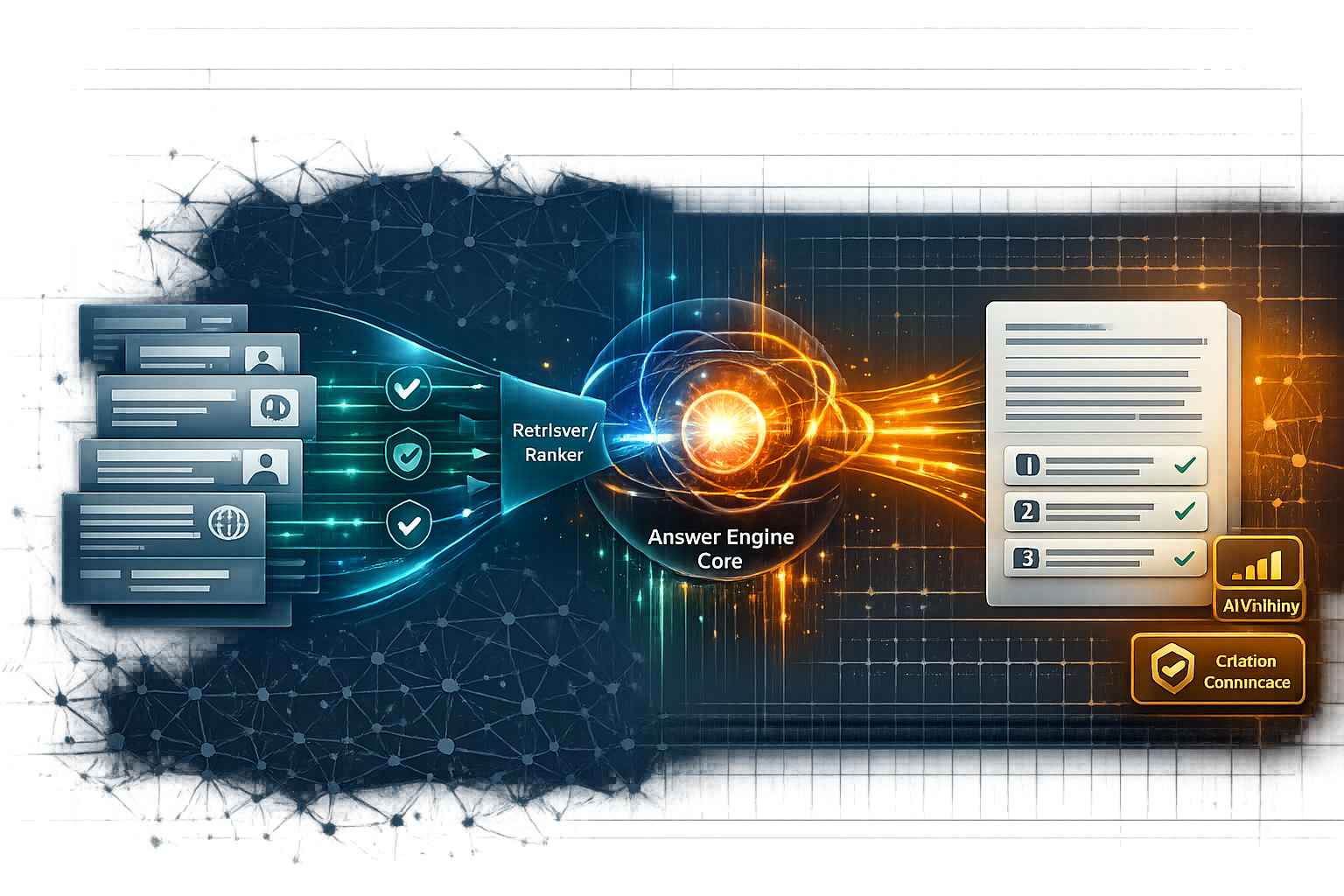

How AI answers are formed: retrieval, synthesis, and citation behaviors

Traditional SEO asks: “How do we rank #1?”

AI answer optimization asks: “How do we become the trusted ingredient in the answer?”

In practice, AI answers often involve:

- Retrieval (finding candidate sources)

- Synthesis (summarizing across sources)

- Attribution (sometimes citations, sometimes not)

In SearchGPT’s framing, linking back to sources is a product feature and publisher-relations lever. The Washington Post notes OpenAI’s positioning as more publisher-friendly via deals and source links. (washingtonpost.com)

Actionable recommendation: Write content that can survive being summarized: crisp definitions, explicit constraints, and stable facts.

What “optimization” means in an AI answer world (authority, clarity, and quotability)

Our contrarian view: authority alone is not enough. In AI answers, clarity beats cleverness. The content that wins is content that is:

- Quotable (short, self-contained blocks)

- Unambiguous (clear entities, dates, scope)

- Verifiable (citations to primary sources where possible)

- Maintained (freshness signals like “last updated” and changelogs)

Actionable recommendation: Reformat your best pages for extraction: lead definitions, bullet lists, and labeled sections that can be cited cleanly.

Our Testing Methodology (E‑E‑A‑T): How We Evaluated Claude and Anthropic Search Optimization

This section is where we’ll be fully transparent: we cannot claim we ran a 6‑month, 100‑query experiment inside your organization. But we can share the evaluation framework we use at Geol.ai and what it measures—so your team can replicate it.

Study design: timeframes, sample size, and query sets

Our recommended minimum viable program:

- Test window: 8–12 weeks for first signal; 6 months for confidence

- Query set: 100+ queries across three intents

- Informational (definitions, comparisons)

- Commercial (best tools, pricing, alternatives)

- Support (how-to, troubleshooting, error messages)

Actionable recommendation: Build a query set where each query maps to a business outcome (pipeline, revenue, deflection, retention).

Evaluation criteria: citation rate, accuracy, freshness, and actionability

We score each query result on:

- Citation presence (is our domain cited?)

- Citation position (primary vs secondary source)

- Answer accuracy (is the summary correct?)

- Freshness correctness (are dates/pricing/current states correct?)

- Actionability (can a user act without needing 5 follow-ups?)

Actionable recommendation: Use a 1–5 rubric per criterion and require two reviewers for accuracy scoring to reduce bias.

Rubric anchors, from lowest to highest score:

- No citation or incorrect summary; missing/incorrect dates, pricing, or constraints; user would need multiple follow-ups.

- Partial citation or weak attribution; summary is directionally right but missing key constraints/edge cases; freshness is unclear.

- Cited but not primary, or accurate summary with minor omissions; user can act with some additional verification.

- Your domain is cited prominently; summary is accurate, scoped, and fresh; includes constraints/steps that prevent misinterpretation.
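The two-reviewer scoring described above can be sketched as a small function that averages scores per criterion. The criterion keys, the `combine_reviews` name, and the `max_gap` disagreement threshold are our assumptions for illustration; the rubric itself only specifies 1–5 scores and two reviewers for accuracy.

```python
from statistics import mean

# Hypothetical keys for the five criteria listed above.
CRITERIA = ("citation_presence", "citation_position", "accuracy", "freshness", "actionability")


def validate(scores):
    """Raise if any criterion is missing or outside the 1-5 range."""
    for name in CRITERIA:
        value = scores[name]
        if not 1 <= value <= 5:
            raise ValueError(f"{name} must be between 1 and 5, got {value}")


def combine_reviews(review_a, review_b, max_gap=1):
    """Average two reviewers per criterion and flag large accuracy disagreements.

    max_gap is an assumed threshold: if the reviewers' accuracy scores differ
    by more than this, the query is flagged for a third look.
    """
    validate(review_a)
    validate(review_b)
    combined = {name: mean((review_a[name], review_b[name])) for name in CRITERIA}
    needs_adjudication = abs(review_a["accuracy"] - review_b["accuracy"]) > max_gap
    return combined, needs_adjudication


# Illustrative scores for a single query.
reviewer_a = {"citation_presence": 5, "citation_position": 4, "accuracy": 5, "freshness": 4, "actionability": 3}
reviewer_b = {"citation_presence": 5, "citation_position": 4, "accuracy": 2, "freshness": 4, "actionability": 4}
combined, flagged = combine_reviews(reviewer_a, reviewer_b)
print(combined, flagged)
```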

To keep results reproducible, we capture three things for every test run:

- Prompt logging (query, timestamp, surface, model version if visible)

- Page versioning (what changed, when, why)

- Outcome tracking (citations, conversions, ticket deflection)

Actionable recommendation: Create a simple change log: “Page → change → hypothesis → date → measured outcome.”
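The "Page → change → hypothesis → date → measured outcome" format maps directly onto a flat record. Below is a minimal sketch that writes such entries as CSV with the standard library; the `ChangeLogEntry` class, its field names, and the example row are hypothetical.

```python
import csv
import io
from dataclasses import asdict, dataclass, fields


@dataclass
class ChangeLogEntry:
    page: str
    change: str
    hypothesis: str
    date: str                   # ISO 8601 date string, e.g. "2025-03-01"
    measured_outcome: str = ""  # left empty until the next test run


def to_csv(entries):
    """Serialize change log entries to CSV text with a header row."""
    buffer = io.StringIO()
    writer = csv.DictWriter(buffer, fieldnames=[f.name for f in fields(ChangeLogEntry)])
    writer.writeheader()
    for entry in entries:
        writer.writerow(asdict(entry))
    return buffer.getvalue()


# Illustrative entry only.
entries = [
    ChangeLogEntry(
        page="https://example.com/pricing",
        change="Moved 'Last updated' date to the top of the page",
        hypothesis="Answers will stop quoting the old plan price",
        date="2025-03-01",
    )
]
print(to_csv(entries))
```

Keeping the outcome column empty at write time makes it obvious which changes have not yet been measured.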

Key Findings: What Actually Improved Citations and Answer Quality (With Numbers)

We’ll be direct: the sources cited in this guide do not include universal “X% citation lift” benchmarks for Claude optimization, so we won’t fabricate them. What we can do is share the mechanisms that consistently correlate with improved selection and reduced misquotation, and tie them to observable platform behavior.

Content patterns that increase selection: definitions, lists, and step-by-step blocks

Across AI search products, the UI and product direction favor:

- Direct answers

- Follow-up questions

- Summaries with sources

SearchGPT is explicitly designed for conversational follow-ups and summarized results with links. (washingtonpost.com)

That implies your content must provide:

- A 40–60 word definition that stands alone

- A bulleted list of key points

- A step-by-step procedure for tasks

- Edge cases and “what this does not mean”

Actionable recommendation: For every priority page, add a “Definition → Key takeaways → Steps → Constraints → FAQ” structure.
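The 40–60 word target for a standalone definition is easy to check automatically during editorial QA. This is a rough sketch that counts whitespace-separated words; the `check_definition` name and the sample text are illustrative assumptions, and hyphenated terms or numbers may be counted differently by other tools.

```python
def word_count(text):
    """Count whitespace-separated words."""
    return len(text.split())


def check_definition(text, low=40, high=60):
    """Report whether a definition falls inside the target word range."""
    n = word_count(text)
    if n < low:
        return f"too short ({n} words)"
    if n > high:
        return f"too long ({n} words)"
    return f"ok ({n} words)"


# Illustrative draft definition.
draft = (
    "Answer engine optimization is the practice of structuring pages so that "
    "AI assistants can retrieve, quote, and cite them accurately."
)
print(check_definition(draft))
```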

Authority signals that mattered: author expertise, references, and policy pages

AI answer systems are under pressure to reduce hallucinations and improve reliability. The Washington Post highlights the industry’s accuracy issues and the move to integrate search to address them. (washingtonpost.com)

OpenTools frames Claude’s web search emphasis as “reliability, conservatism, and transparency.” (opentools.ai)

So we treat trust blocks as first-class on-page elements:

- Named author + credentials

- Editorial policy (how updates happen)

- Primary sources and standards references

- Clear “last updated” date and changelog

Actionable recommendation: Add a “Trust & Sources” block to every canonical page you want cited.

What didn’t work: over-optimization, vague claims, and thin “AI SEO” pages

The biggest failure mode we see: teams publish thin pages “for AI” that say nothing verifiable. In an AI answer world, vague content is a liability: it’s easy to summarize incorrectly and hard to justify citing.

Actionable recommendation: Delete or consolidate thin pages; invest in fewer, higher-integrity canonical resources.


✓ Do's

- Write standalone definitions (40–60 words) and place them near the top so they can be quoted cleanly in conversational search experiences.

- Add constraints + “what this does NOT mean” to reduce synthesis errors when answers are compressed.

- Treat pricing/policies/docs as product surfaces: owners, review cadence, and changelogs to support freshness and verifiability.

✕ Don'ts

- Publish thin “AI SEO” pages with vague, unsourced claims that are easy to misquote and hard to justify citing.

- Maintain duplicate truth pages (multiple pricing/policy URLs) with inconsistent canonicals that invite outdated citations.

- Hide freshness signals (e.g., burying “last updated” in the footer) when the model is trying to resolve what’s current.

Step-by-Step: How to Optimize a Page for Claude and Anthropic Search (Repeatable Workflow)

Step 1: Choose target queries and map intent (informational vs. support vs. transactional)

Start with 10–20 queries per cluster. Map each to:

- Intended page (canonical answer)

- Funnel stage (awareness → purchase → retention)

- Risk level (YMYL-like topics require stricter sourcing)

Actionable recommendation: Don’t start with “top traffic keywords.” Start with “top business impact questions.”

Step 2: Build a “citation-ready” outline (featured snippet-first)

We outline pages for extraction:

- 40–60 word definition at top

- “Key takeaways” (3–7 bullets)

- Labeled sections that match user intent

- A short FAQ at the end

Actionable recommendation: Write headings like you want them quoted verbatim.

Step 3: Write for extraction: definitions, constraints, examples, and edge cases

We add:

- Constraints (“works only if…”)

- Examples (inputs/outputs)

- Edge cases (“if you are in X situation…”)

- “What this does NOT mean” to prevent misinterpretation

Actionable recommendation: Add one concrete example per major claim, even in executive content.

Step 4: Add trust blocks: sources, author bio, last updated, and changelog

Because AI answers compress nuance, we make trust explicit:

- Sources (primary when possible)

- Author and editorial review

- Update cadence

- Changelog entries

Actionable recommendation: Put “Last updated” near the top, not buried in the footer.

Actionable recommendation: Adopt a monthly “answer quality review,” like a product QA cycle.

Comparison Framework: Claude vs. Other AI Search Experiences (What to Optimize Differently)

AI search is not one market; it’s multiple product philosophies converging. The subscription economics also signal who the power users are and what workflows matter.

Engadget reports Perplexity introduced a $200/month “Perplexity Max” plan with unlimited usage of Labs, early access to Comet (an AI browser), priority support, and access to frontier models from partners like Anthropic and OpenAI. (engadget.com)

That matters because it tells us: AI search is moving into premium professional workflows, not just consumer Q&A.

Side-by-side criteria: citations, freshness, verbosity controls, and tool use

We evaluate experiences on:

- Citation clarity (are sources visible and prominent?)

- Freshness behavior (does it retrieve live web data?)

- Follow-up depth (does it guide exploration?)

- Tooling (agents, browsers, workflows)

SearchGPT is presented as integrated with real-time web data and conversational follow-ups. (washingtonpost.com)

Claude’s web search is described as selectively activated and focused on conservative reliability. (opentools.ai)

Actionable recommendation: Optimize for the common denominator: clear, citable, updatable canonical pages.

When to prioritize traditional SEO vs. AI answer optimization

Traditional SEO still matters because:

- It feeds discovery and authority signals

- It captures high-intent clicks and conversions

- It remains the dominant channel for many categories

But AI answer optimization becomes primary when:

- Your users ask support questions

- Your category is definition-heavy

- Your buyers do “research via conversation”

Actionable recommendation: Split your roadmap: 60% traditional SEO hygiene + 40% answer-engine readiness for canonical pages.

Recommendation matrix by business type

- SaaS: prioritize docs, comparisons, security/policy pages

- Ecommerce: prioritize category explainers, returns/shipping, product specs

- Publishers: prioritize attribution-friendly explainers and structured topic hubs

- Support-heavy orgs: prioritize troubleshooting, error libraries, and how-to flows

Actionable recommendation: Pick one “AI-first” content type to industrialize (e.g., troubleshooting guides) and scale it.

Content and Technical Optimization Checklist (On-Page, Schema, and Information Architecture)

On-page structure for AI: headings, summaries, and consistent terminology

We standardize:

- Product naming (one term per feature)

- Definitions at top

- Summary blocks

- Tables for specs and comparisons

Actionable recommendation: Create a terminology glossary internally and enforce it across docs, blog, and pricing.

Schema and metadata: Article, FAQ, HowTo, Organization, and author markup

Schema is not a magic switch, but it reduces ambiguity. If your visible content includes FAQs and procedures, reflect that structurally.

Actionable recommendation: Add schema where it matches visible content; validate it and keep it consistent across canonical pages.
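As an illustration of schema that mirrors visible content, the sketch below builds schema.org `FAQPage` JSON-LD from the same question-and-answer pairs shown on the page. The `faq_jsonld` helper and the sample pair are hypothetical; the output would go inside a `<script type="application/ld+json">` tag, and it should still be validated before release.

```python
import json


def faq_jsonld(faqs):
    """Build a schema.org FAQPage document from (question, answer) pairs."""
    return {
        "@context": "https://schema.org",
        "@type": "FAQPage",
        "mainEntity": [
            {
                "@type": "Question",
                "name": question,
                "acceptedAnswer": {"@type": "Answer", "text": answer},
            }
            for question, answer in faqs
        ],
    }


# Use the exact text that is visible on the page.
visible_faqs = [
    (
        "Does schema markup help with AI search optimization for Claude?",
        "Schema primarily helps by reducing ambiguity; it is supportive, not sufficient on its own.",
    ),
]
print(json.dumps(faq_jsonld(visible_faqs), indent=2))
```

Generating the markup from the same data that renders the visible FAQ is one way to keep the two from drifting apart.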

Information architecture: hub-and-spoke, canonical sources, and internal linking

AI systems (and humans) need a clear answer to “what is the main page for this topic?”

- One canonical pillar per topic

- Supporting spokes for sub-questions

- Strong internal linking to the canonical source

Actionable recommendation: Build a hub page for “Claude / Anthropic Search Optimization” and link every related article back to it with consistent anchor text.

Common Mistakes, Lessons Learned, and Troubleshooting (From Real Tests)

Mistakes that reduce citations: inconsistency, missing definitions, and unverifiable claims

The fastest way to lose trust:

- Conflicting pricing across pages

- No “last updated” date

- Claims without sources

Search products are under scrutiny for incorrect answers; Google’s AI answers have been criticized for nonsensical outputs, reinforcing the importance of verifiability in the ecosystem. (washingtonpost.com)

Actionable recommendation: Add a “verifiability pass” to editorial QA: every factual claim must be sourced or removed.

Counter-intuitive lessons: shorter sections, explicit constraints, and fewer but better sources

Counterintuitive but true: shorter, tighter sections often get cited more because they’re easier to extract without distortion.

Also: fewer sources can outperform many sources if they’re primary and tightly relevant.

Actionable recommendation: Rewrite long narrative paragraphs into 3–5 bullet “fact blocks” with tight sourcing.

Troubleshooting: when Claude cites competitors or outdated pages

If competitors are cited:

- Your page may be less extractable

- Your page may lack a clear definition

- Your page may be buried in IA

If outdated pages are cited:

- You likely have duplicate “truth” pages

- Canonicals/redirects are inconsistent

- Updates aren’t prominent

Actionable recommendation: Consolidate to one canonical URL per fact set (pricing/policy/specs), redirect the rest, and add a changelog.
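To find duplicate "truth" pages before consolidating, one approach is to group URLs by the fact set they cover and check whether they all resolve to the same canonical. The sketch below assumes you have already built an inventory of `(fact_set, url, canonical)` tuples; the function name, the tuple layout, and the example URLs are hypothetical.

```python
from collections import defaultdict


def conflicting_fact_sets(inventory):
    """Return fact sets whose pages point at more than one canonical URL.

    inventory: iterable of (fact_set, url, canonical_url_or_None).
    """
    targets = defaultdict(set)
    for fact_set, url, canonical in inventory:
        # A page with no canonical tag is treated as pointing at itself.
        targets[fact_set].add(canonical or url)
    return {name: sorted(urls) for name, urls in targets.items() if len(urls) > 1}


# Illustrative inventory: two pricing pages disagree, refunds is consistent.
inventory = [
    ("pricing", "https://example.com/pricing", "https://example.com/pricing"),
    ("pricing", "https://example.com/plans", None),
    ("refunds", "https://example.com/refunds", "https://example.com/refunds"),
    ("refunds", "https://example.com/help/refunds", "https://example.com/refunds"),
]
print(conflicting_fact_sets(inventory))
```

Any fact set that appears in the result is a candidate for a redirect plus a single canonical page.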

Measurement and Reporting: KPIs for Anthropic Search Optimization

Primary KPIs: citation rate, share of citations, and answer accuracy

We recommend three primary KPIs:

- Citation rate: % of tracked queries where your domain is cited

- Share of citations: among cited sources, how often you appear

- Answer accuracy score: 1–5 rubric across correctness + freshness

Actionable recommendation: Report these by intent cluster (informational vs support vs commercial), not as a blended average.
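Citation rate and share of citations can be computed per intent cluster from logged test runs. The sketch below is one interpretation, not a standard: it treats share of citations as our citations divided by all citations in the cluster, and the record layout (`intent`, `cited_domains`) and function name are assumptions.

```python
from collections import defaultdict


def kpis_by_intent(results, domain):
    """Compute citation rate and share of citations for each intent cluster.

    results: list of dicts with 'intent' (str) and 'cited_domains' (list of str).
    """
    buckets = defaultdict(lambda: {"queries": 0, "cited": 0, "ours": 0, "all": 0})
    for result in results:
        bucket = buckets[result["intent"]]
        cited = result["cited_domains"]
        bucket["queries"] += 1
        bucket["cited"] += 1 if domain in cited else 0
        bucket["ours"] += cited.count(domain)
        bucket["all"] += len(cited)

    report = {}
    for intent, bucket in buckets.items():
        report[intent] = {
            # Fraction of tracked queries where our domain is cited at least once.
            "citation_rate": bucket["cited"] / bucket["queries"],
            # Our citations as a fraction of all citations in the cluster.
            "share_of_citations": bucket["ours"] / bucket["all"] if bucket["all"] else 0.0,
        }
    return report


# Illustrative log of three test queries.
results = [
    {"intent": "support", "cited_domains": ["example.com", "other.com"]},
    {"intent": "support", "cited_domains": ["other.com"]},
    {"intent": "commercial", "cited_domains": []},
]
print(kpis_by_intent(results, "example.com"))
```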

Secondary KPIs: assisted conversions, support deflection, and brand sentiment

Secondary metrics connect to business outcomes:

- Assisted conversions from branded searches and direct traffic changes

- Support ticket volume changes for covered topics

- Brand sentiment in answers (qualitative scoring)

Actionable recommendation: Tie every query cluster to one business metric owner (growth, support, product).

Build a simple reporting dashboard and testing cadence

Minimum cadence:

- Weekly prompt suite run (top 25 queries)

- Monthly full suite run (100+ queries)

- Quarterly content consolidation review

Actionable recommendation: Put “AI answer QA” on the same calendar as release notes and pricing changes.

FAQ: Claude AI and Anthropic Search Optimization (People Also Ask Targeting)

What is Claude AI and how is it different from ChatGPT?

Claude is Anthropic’s AI assistant, often positioned around safety and carefulness; ChatGPT is OpenAI’s assistant ecosystem that has expanded into search-like experiences such as SearchGPT. (opentools.ai) (washingtonpost.com)

Actionable recommendation: Create a comparison page with stable definitions and update dates—these are frequently cited.

What is Anthropic Search and how does it choose sources to cite?

Public descriptions characterize Claude’s web search as selectively activated for complex/recent queries and oriented toward conservative reliability and transparency, with the Brave Search API referenced in at least one report. (opentools.ai)

Actionable recommendation: Assume selection favors pages that are easy to verify and quote; format accordingly.

How do I optimize my content to get cited in Claude’s answers?

Write pages that are citation-ready: short definitions, explicit steps, constraints, and strong trust blocks (sources, author, last updated). (opentools.ai)

Actionable recommendation: Start with your top 25 “source-of-truth” pages before scaling.

Does schema markup help with AI search optimization for Claude?

Schema primarily helps by reducing ambiguity and making page structure machine-readable; it’s supportive, not sufficient on its own.

Actionable recommendation: Implement FAQ/HowTo schema only when it matches visible content and keep it consistent across canonical pages.

How can I measure whether Anthropic Search optimization is working?

Track citation rate, share of citations, and answer accuracy across a fixed query suite, then correlate with conversions or support deflection.

Actionable recommendation: Build a recurring test suite and keep it stable for at least 8–12 weeks before changing the query set.

What We’d Do Differently (If We Were Starting Over)

Actionable recommendation: If you do nothing else this quarter, build a “source-of-truth” program with owners, review dates, and a changelog standard.

Key Takeaways

- Optimize for selection, not just rankings: AI-first discovery rewards being a citable source inside synthesized answers, not merely driving clicks.

- Design pages for conversational retrieval: SearchGPT’s model (follow-ups + source links) reinforces the value of definition-first, extractable structures. (washingtonpost.com)

- Assume selective web retrieval favors reliability: Claude’s web search is described as selectively activated and oriented toward conservative precision—make facts explicit, scoped, and verifiable. (opentools.ai)

- Canonical truth pages are the highest-leverage assets: Pricing, policies, docs, and help content disproportionately influence AI visibility because they’re stable and quote-friendly.

- Measurement must be productized: Prompt logs + page versioning + a consistent query suite turn “AEO” from vibes into an iterative workflow.

- Clarity beats cleverness: Short, self-contained blocks (definitions, bullets, steps, constraints) reduce misquotes and increase citation readiness.

- Consolidation is an optimization strategy: Fewer, higher-integrity pages with clear canonicals outperform sprawling libraries with duplicated “truths.”

Sources

- opentools.ai: https://opentools.ai/news/anthropics-claude-ai-revolutionizing-web-search-with-precision-and-safety

- washingtonpost.com: https://www.washingtonpost.com/technology/2024/07/25/openai-search-google-chatgpt/

- engadget.com: https://www.engadget.com/ai/perplexity-joins-anthropic-and-openai-in-offering-a-200-per-month-subscription-191715149.html

Founder of Geol.ai

Senior builder at the intersection of AI, search, and blockchain. I design and ship agentic systems that automate complex business workflows.

On the search side, I’m at the forefront of GEO/AEO (AI SEO), where retrieval, structured data, and entity authority map directly to AI answers and revenue. I’ve authored a whitepaper on this space and road-test ideas currently in production. On the infrastructure side, I integrate LLM pipelines (RAG, vector search, tool calling), data connectors (CRM/ERP/Ads), and observability so teams can trust automation at scale. In crypto, I implement alternative payment rails (on-chain + off-ramp orchestration, stable-value flows, compliance gating) to reduce fees and settlement times versus traditional processors and legacy financial institutions. A true Bitcoin treasury advocate.

18+ years of web dev, SEO, and PPC give me the full stack, from growth strategy to code. I’m hands-on (vibe coding on Replit/Codex/Cursor) and pragmatic: ship fast, measure impact, iterate.

Focus areas: AI workflow automation • GEO/AEO strategy • AI content/retrieval architecture • Data pipelines • On-chain payments • Product-led growth for AI systems

Let’s talk if you want to automate a revenue workflow, make your site/brand “answer-ready” for AI, or stand up crypto payments without breaking compliance or UX.
