Anthropic’s Claude Integrates Web Search: Implications for AI-Powered Information Retrieval

Deep dive on Claude’s web search integration: how retrieval changes answer quality, citations, and Generative Engine Optimization tactics for AI visibility.

Anthropic’s Claude Integrates Web Search: Implications for AI-Powered Information Retrieval

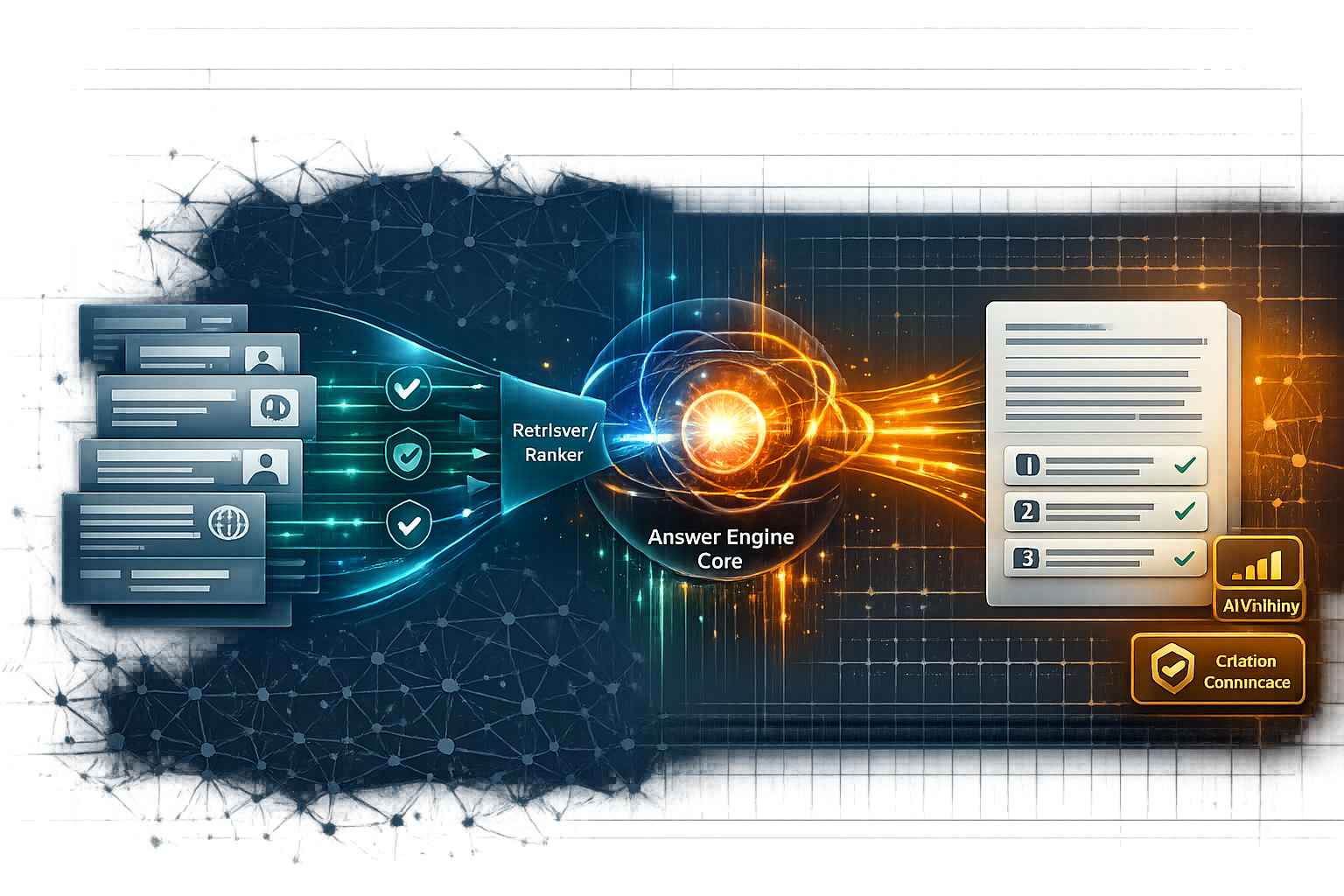

Claude’s web search integration matters because it shifts Claude from a mostly “closed-book” language model into an answer engine that can retrieve, select, and synthesize live web information. That changes what “good performance” looks like (freshness, provenance, verifiability) and it changes what publishers should optimize for: not only model comprehension, but retrieval eligibility and citation-worthiness—the two levers that increasingly determine AI Visibility and Citation Confidence in AI-powered discovery.

Once web search is in the loop, your content competes in a retrieval-and-ranking pipeline (query rewriting, SERP selection, passage extraction). In practice, that means “being the best explanation” is not enough—you need to be easy to retrieve and easy to verify at the passage level.

Executive Summary: What Claude’s Web Search Changes (and Why It Matters)

From closed-book LLM to answer engine: the retrieval shift

Without search, Claude answers primarily from its training distribution and whatever context you provide. With search, Claude can (a) interpret intent, (b) fetch candidate documents, (c) extract passages, and (d) generate a grounded response. This mirrors broader “AI search” dynamics—where ranking and re-ranking can be as decisive as generation. For evaluation implications, see Re-Rankers as Relevance Judges: A New Paradigm in AI Search Evaluation.

Immediate implications for AI Visibility and Citation Confidence

- Discovery shifts from “what the model remembers” to “what the system can retrieve and trust.”

- Citations become a competitive surface: pages that are extractable, specific, and well-evidenced are more likely to be referenced.

- Freshness becomes measurable: if the answer cites sources updated recently, stale pages lose retrieval share on time-sensitive queries.

Key takeaways for Generative Engine Optimization

This is where GEO diverges from traditional SEO: you’re optimizing for selection into an evidence set and for downstream citation behavior, not only for blue-link clicks. The gap between “ranking in Google” and “being cited by LLMs” is already documented; see LLM Citations vs. Google Rankings: Unveiling the Discrepancies.

| Metric (10–20 queries) | Claude (no web search) | Claude (with web search) | How to label |

|---|---|---|---|

| Hallucination / error rate | Baseline (manual) | Expected lower on factual queries | % answers with ≥1 unsupported claim (rubric) |

| Citation rate | Often none / limited | Higher when retrieval is used | % answers with citations + # unique domains |

| Freshness (days since update) | Not applicable (no sources) | Measurable via cited pages | Median days using page timestamps |

External context on the product direction and implications is discussed in reporting such as Yahoo Tech’s coverage of Claude’s AI search capabilities: https://tech.yahoo.com/ai/articles/anthropics-claude-launches-ai-search-200907052.html.

How Claude’s Web Search Likely Works: Retrieval, Ranking, and Grounding

Retrieval pipeline basics: query rewriting → SERP selection → passage extraction

Most search-augmented LLM systems follow a multi-stage retrieval pipeline. Even if implementation details vary, the behavior typically resembles:

- Query interpretation & rewriting: expands acronyms, adds constraints (time, geography), and generates sub-queries.

- SERP candidate selection: chooses documents/snippets from ranked results (often biased toward top positions).

- Passage extraction: pulls the most “answerable” spans (definitions, numbers, steps, policy language).

- Grounded generation: synthesizes an answer constrained by retrieved evidence, sometimes with citations.

Grounded generation and citation behavior: when sources appear (and when they don’t)

Citations tend to appear when the system can clearly map answer claims to specific passages. You’ll often see weaker citation behavior when:

- The query is subjective (e.g., “best”, “should I”).

- The retrieved set is redundant or thin (syndicated copies, scraped summaries).

- The model fuses multiple sources into one claim, but can’t attribute cleanly.

Failure modes: retrieval bias, source duplication, and “citation without support”

Search reduces some hallucinations, but introduces retrieval-specific risks. Three to watch in Claude-with-search evaluations:

Common failure modes to test

- Retrieval bias: over-weights top-ranked sources even when they’re not the most authoritative

- Source duplication: cites multiple URLs that are effectively the same content (syndication/press releases)

- Citation without support: provides a citation that does not actually substantiate the specific claim

- Harder to diagnose than pure hallucination (looks “grounded” on the surface)

- Can amplify a single error across many answers if one high-ranked source is wrong

- Can create false trust if users equate “has citations” with “is correct”

Source diversity diagnostic (example metrics to track across a query set)

Illustrative baseline targets for evaluating whether Claude-with-search over-relies on a small set of domains and duplicates syndicated content.

For broader industry movement toward multi-model retrieval and orchestration (which can influence how “search + synthesis” products evolve), see: https://techcrunch.com/2026/02/27/perplexitys-new-computer-is-another-bet-that-users-need-many-ai-models/ and Perplexity’s product notes on embedding/search improvements: https://www.perplexity.ai/changelog/what-we-shipped---february-27-2026.

Implications for AI-Powered Information Retrieval: Accuracy, Freshness, and Trust

Accuracy and verifiability: does search reduce hallucinations in practice?

In IR terms, web search can increase factuality by constraining generation to retrieved evidence—but only if the retrieval set is high quality and the model correctly binds claims to passages. Your evaluation should separate:

- Unsupported claims (no evidence in cited/retrieved text).

- Mis-citations (citation exists but doesn’t support the claim).

- Outdated claims (supported, but by stale sources).

Freshness: time-sensitive queries and update cadence

Freshness becomes a first-class metric because the system can cite pages updated days (or hours) ago. For publishers, this turns “update cadence” into a retrieval advantage—especially for product docs, policy pages, pricing, and fast-changing comparisons. For signals that still matter in classic search visibility (and indirectly affect retrieval), track performance and page experience; see Google Core Web Vitals Ranking Factors 2025: What’s Changed and What It Means for Knowledge Graph-Ready Content.

Mini-study design: source recency on time-sensitive queries

Illustrative trend lines showing how median cited-source recency could differ between search-enabled and non-search behavior across 15 time-sensitive queries.

Trust signals: author expertise, references, and transparent sourcing

When Claude can browse, users expect it to show its work. Trust increases when answers cite primary sources (standards bodies, original research, official docs) and when claims are easy to verify. Publisher-side trust signals that tend to help retrieval and citation include: clear authorship, editorial policy, stable URLs, and explicit references. (For web publishers, Google’s guidance on content quality and E-E-A-T is still a pragmatic proxy for “trustworthiness,” even when the surface is an answer engine.)

For context on agentic browsing experiences around Claude in the browser, see: https://indianexpress.com/article/technology/anthropic-unveils-claude-for-chrome-an-ai-agent-to-browse-and-multitask-smarter-10214592/lite/.

Generative Engine Optimization for Claude Search: Improving Retrieval Eligibility and Citation Confidence

Optimize for retrieval: crawlability, indexation, and snippet-ready formatting

Remove avoidable access friction

Avoid blocking critical explanatory content behind hard paywalls, aggressive interstitials, or bot blocks. If you must gate, provide a crawlable abstract/summary with key facts and references.

Make pages extractable

Place the answer early, use descriptive headings, keep definitions and numbers in plain text (not images), and add short “TL;DR” blocks that match common query wording.

Reduce ambiguity with clear scope

One page = one primary intent. If a page mixes multiple intents, extraction systems may pull the wrong passage, lowering citation quality.

Optimize for citation: claim structuring, evidence, and entity clarity

Citation Confidence improves when the model can tightly bind a claim to nearby evidence. Practical tactics:

- Write “claim → evidence → implication” blocks (especially for numbers, comparisons, and recommendations).

- Cite primary sources directly and keep reference links stable (avoid frequent URL changes).

- Disambiguate entities: full product names, version numbers, dates, and jurisdiction (for policy/legal topics).

Structured Data and Knowledge Graph alignment: making meaning machine-readable

Claude-with-search still depends on machine-readable cues to interpret entities and relationships. Structured data won’t guarantee citations, but it can reduce ambiguity and improve extraction reliability. For related structured-data-first thinking across assistants and AI search ecosystems, see Samsung's Bixby Reborn: A Perplexity-Powered AI Assistant and OpenAI GPT-5.3-Codex-Spark Deployment: Structured Data-First Rollout for Reliable AI Content Operations.

Before/after GEO tracking (example 30-day dashboard)

Illustrative relationship between AI Visibility, citation count, and passage-level extraction success after retrieval and citation optimizations.

If Claude’s retrieval over-selects a narrow set of domains, it can be tempting to “chase the SERP.” But for sustainable GEO, prioritize primary evidence, unique data, and clear methodology. Systems can change ranking partners, re-rankers, and citation policies quickly—your defensible edge is verifiability.

Measurement & Research Plan: What to Test Now (and How to Report Results)

A practical evaluation framework: query sets, rubrics, and reproducibility

To evaluate Claude-with-search like an IR system, build a stable, versioned query set and score outputs with a rubric. Include at least four buckets:

- Navigational: “brand + login”, “docs + feature”.

- Informational long-tail: definitions, how-tos, comparisons.

- YMYL-adjacent: finance/health/legal-adjacent informational queries (with caution).

- Time-sensitive: “current”, “2026”, “latest update”, “policy change”.

KPIs for Claude Search: AI Visibility, Citation Confidence, and retrieval share

Treat these as three separate KPIs (they move independently):

| KPI | Definition | How to measure |

|---|---|---|

| AI Visibility | Share of answers where your brand/page is mentioned or cited | Mentions/citations per query across a fixed query set |

| Citation Confidence | Likelihood that citations support the exact claims made | Supported-claim rate: % claims verifiable in cited passages |

| Retrieval share | How often your domain is selected among sources for relevant queries | Domain frequency in citations and/or retrieved set (if exposed) |

Scorecard template: weighted metrics you can report to stakeholders

Claude Search answer quality scorecard (example weights)

A reusable rubric for comparing domains or content clusters on supported-claim accuracy, citation quality, freshness, and source diversity.

Operationally, you’ll want faster anomaly detection for visibility drops (e.g., when retrieval partners or ranking behavior changes). Pair your AI visibility tracking with more frequent search diagnostics using tools like Search Console; see Google Search Console 2025 Enhancements: Hourly Data + 24-Hour Comparisons for Faster GEO/SEO Anomaly Detection and Google Search Console Social Channel Performance Tracking: Unifying SEO + Social Signals for Faster GEO/SEO Diagnosis.

A practical rule: if a claim can’t be verified by a human in under 30 seconds on your page, it’s unlikely to earn consistent, high-confidence citations in answer engines.

Key Takeaways

Claude’s web search turns generation into a retrieval pipeline—ranking, re-ranking, and passage extraction now shape answer quality as much as the model.

GEO for Claude Search expands the target: optimize for retrieval eligibility (access, extractability, scope) and for citation-worthiness (claim-evidence proximity, primary references, entity clarity).

Measure separately: AI Visibility (mentions/citations), Citation Confidence (supported-claim rate), and retrieval share (domain selection frequency).

Freshness and provenance become differentiators: maintain updated pages, stable URLs, and transparent sourcing to earn trust in grounded answers.

FAQ

Founder of Geol.ai

Senior builder at the intersection of AI, search, and blockchain. I design and ship agentic systems that automate complex business workflows. On the search side, I’m at the forefront of GEO/AEO (AI SEO), where retrieval, structured data, and entity authority map directly to AI answers and revenue. I’ve authored a whitepaper on this space and road-test ideas currently in production. On the infrastructure side, I integrate LLM pipelines (RAG, vector search, tool calling), data connectors (CRM/ERP/Ads), and observability so teams can trust automation at scale. In crypto, I implement alternative payment rails (on-chain + off-ramp orchestration, stable-value flows, compliance gating) to reduce fees and settlement times versus traditional processors and legacy financial institutions. A true Bitcoin treasury advocate. 18+ years of web dev, SEO, and PPC give me the full stack—from growth strategy to code. I’m hands-on (Vibe coding on Replit/Codex/Cursor) and pragmatic: ship fast, measure impact, iterate. Focus areas: AI workflow automation • GEO/AEO strategy • AI content/retrieval architecture • Data pipelines • On-chain payments • Product-led growth for AI systems Let’s talk if you want: to automate a revenue workflow, make your site/brand “answer-ready” for AI, or stand up crypto payments without breaking compliance or UX.

Related Articles

Google Search Console 2025 Enhancements: Hourly Data + 24-Hour Comparisons for Faster GEO/SEO Anomaly Detection

Google Search Console’s 2025 hourly data and 24-hour comparisons speed anomaly detection for SEO/GEO. Learn workflows, metrics, and impacts.

Perplexity's Shift to Subscription Model: A New Era in AI Search Monetization

Deep dive on Perplexity’s subscription shift and what it changes for AI search monetization, GEO strategy, citation confidence, and AI visibility.