The Rise of Listicles: Dominating AI Search Citations

Deep dive on why listicles earn disproportionate AI search citations—and how to structure them for Generative Engine Optimization and higher citation confidence.

Listicles are being cited disproportionately in AI search (ChatGPT browsing, Perplexity, and Google AI Overviews) because they break information into discrete, verifiable units: named items with short, scannable support. That structure maps cleanly to how answer engines retrieve passages (“chunks”), synthesize responses, and attach citations with minimal rewriting. This spoke explains the mechanism—and gives a GEO-first checklist to build listicles that increase citation confidence, not just clicks.

In Generative Engine Optimization (GEO), you’re optimizing for retrievability (can the model find the right passage?) and grounding (can it confidently cite it?). Listicles naturally provide both via consistent headings, item boundaries, and entity-rich labels—especially when paired with structured data and transparent criteria.

Key takeaways

- Listicles map to answer-engine retrieval: each item becomes a clean, citable passage (“chunk”).

- Entity-first item labels + consistent templates increase citation confidence by reducing ambiguity and improving corroboration.

- Criteria transparency (methodology, inclusion rules, update date) is a hidden trust lever for AI Overviews and answer engines.

- Structured data (e.g., ItemList, FAQPage) can reinforce list semantics and improve machine understanding of item membership.

Executive Summary: Why Listicles Are Over-Indexed in AI Citations

Featured-snippet-first structure maps cleanly to answer engines

Listicles often start with a tight definition, a short “top picks” preview, and then repeat a predictable item template. That mirrors the “snippet” patterns search systems already understand (paragraph + list + supporting passages). When AI Overviews or answer engines need to answer “best X” queries, they can lift item names and one-sentence summaries with low transformation cost—making citations easier to attach and justify.

Listicles boost citation confidence via scannability and entity coverage

Citations are a trust decision. Listicles tend to cover more entities (tools, tactics, frameworks) per page than narrative articles, and they present attributes in a consistent format that is easy to corroborate. That combination improves the model’s ability to: (1) match the prompt to a specific item, (2) verify the item’s claims via nearby context, and (3) cite a passage that looks “complete.” For related research on why structure impacts citations, see “Optimizing Content for AI: The Shift from SEO to GEO” and “AI Search and Content Structure: The Importance of Numbered Lists.”

Illustrative citation share by content format in AI answers

Example distribution showing why listicles often dominate citations for “best/top” queries. Use this as a template for your own 50–100 query audit.

Data opportunity: run a simple audit—sample 50–100 citations from AI Overview/Perplexity/ChatGPT browsing results for head terms like “best X” and “top tools for Y,” categorize the cited URLs by format, then report citation share and median citation position. This turns “listicles win” from a hunch into a trackable GEO KPI.
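The audit described above can be sketched in a few lines of Python. This is a minimal sketch, assuming you have already collected citations by hand and labeled each cited URL with a format; the rows in `SAMPLE_CITATIONS`, the format labels, and the function names are hypothetical placeholders, not real audit results.

```python
from collections import Counter
from statistics import median

# Hypothetical audit rows: (query, cited URL, hand-labeled format, citation position).
# Replace these placeholders with your own 50-100 sampled citations.
SAMPLE_CITATIONS = [
    ("best crm for smb", "https://example.com/best-crm", "listicle", 1),
    ("best crm for smb", "https://example.com/crm-guide", "narrative", 3),
    ("top tools for seo", "https://example.com/seo-tools", "listicle", 2),
    ("top tools for seo", "https://example.com/seo-docs", "documentation", 4),
    ("best crm for smb", "https://example.com/crm-roundup", "listicle", 2),
]


def citation_share(rows):
    """Return {format: fraction of all sampled citations with that format}."""
    counts = Counter(fmt for _, _, fmt, _ in rows)
    total = sum(counts.values())
    return {fmt: n / total for fmt, n in counts.items()}


def median_position(rows):
    """Return {format: median citation position} (1 = first citation shown)."""
    positions = {}
    for _, _, fmt, pos in rows:
        positions.setdefault(fmt, []).append(pos)
    return {fmt: median(values) for fmt, values in positions.items()}


if __name__ == "__main__":
    print(citation_share(SAMPLE_CITATIONS))
    print(median_position(SAMPLE_CITATIONS))
```

Re-running the same script on a fixed query set each month is one way to turn citation share into a trend line rather than a one-off snapshot.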

Mechanism: How Answer Engines Retrieve, Chunk, and Cite Lists

Retrieval + chunking: why items become “citable units”

Most modern systems retrieve passages, not whole pages. Listicles create predictable chunk boundaries (H2/H3 headings plus item blocks), which reduces ambiguity: each item looks like a self-contained answer. That improves passage retrieval and makes citations cleaner because the model can point to the exact item block that supports the claim. For a broader view on how LLM-era retrieval differs from classic SEO, see The Evolution of AI Search: From Traditional Engines to LLMs.
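To make the idea of predictable chunk boundaries concrete, here is a rough sketch of heading-based chunking. It assumes the page source is Markdown with `##`/`###` headings; how any particular answer engine actually chunks pages is not public, so treat `chunk_by_headings` as a hypothetical approximation rather than a description of a real system.

```python
import re

# Matches Markdown H2/H3 headings such as "## Title" or "### Item name".
HEADING = re.compile(r"^(#{2,3})\s+(.*)$")


def chunk_by_headings(markdown_text):
    """Split Markdown into chunks, starting a new chunk at each H2/H3 heading.

    Text that appears before the first heading is ignored in this sketch.
    """
    chunks = []
    current = None
    for line in markdown_text.splitlines():
        match = HEADING.match(line)
        if match:
            current = {"heading": match.group(2).strip(), "body": []}
            chunks.append(current)
        elif current is not None and line.strip():
            current["body"].append(line.strip())
    return [{"heading": c["heading"], "text": " ".join(c["body"])} for c in chunks]


if __name__ == "__main__":
    sample = (
        "Intro line\n"
        "## Best CRMs\n"
        "### HubSpot (CRM platform)\n"
        "Free tier.\n"
        "Best for small teams.\n"
        "### Pipedrive (sales CRM)\n"
        "Pipeline-first.\n"
    )
    for chunk in chunk_by_headings(sample):
        print(chunk)
```

Each item heading yields one chunk whose text stays attached to its entity label, which is the property that makes a list item look like a self-contained answer.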

Knowledge graph alignment: entities, attributes, and typed relationships

Entity-first headings (tool names, frameworks, tactics) map naturally to knowledge graph nodes. The short description under each item supplies attributes and relationships—use-cases, constraints, integrations, pricing model, “best for” qualifiers, and comparisons. When the model can bind a claim to a named entity plus a specific attribute, it has an easier time grounding the answer and choosing a citation.

Citation confidence signals: consistency, specificity, and corroboration

Answer engines prefer sources that are easy to corroborate. Listicles that use consistent item templates, clear criteria, and concrete qualifiers (e.g., “best for mid-market B2B SaaS with Salesforce”) look more reliable than pages full of vague superlatives. This is also why affiliate-only copy and thin descriptions often underperform in AI citations: they don’t provide enough verifiable attributes for the model to cite confidently.

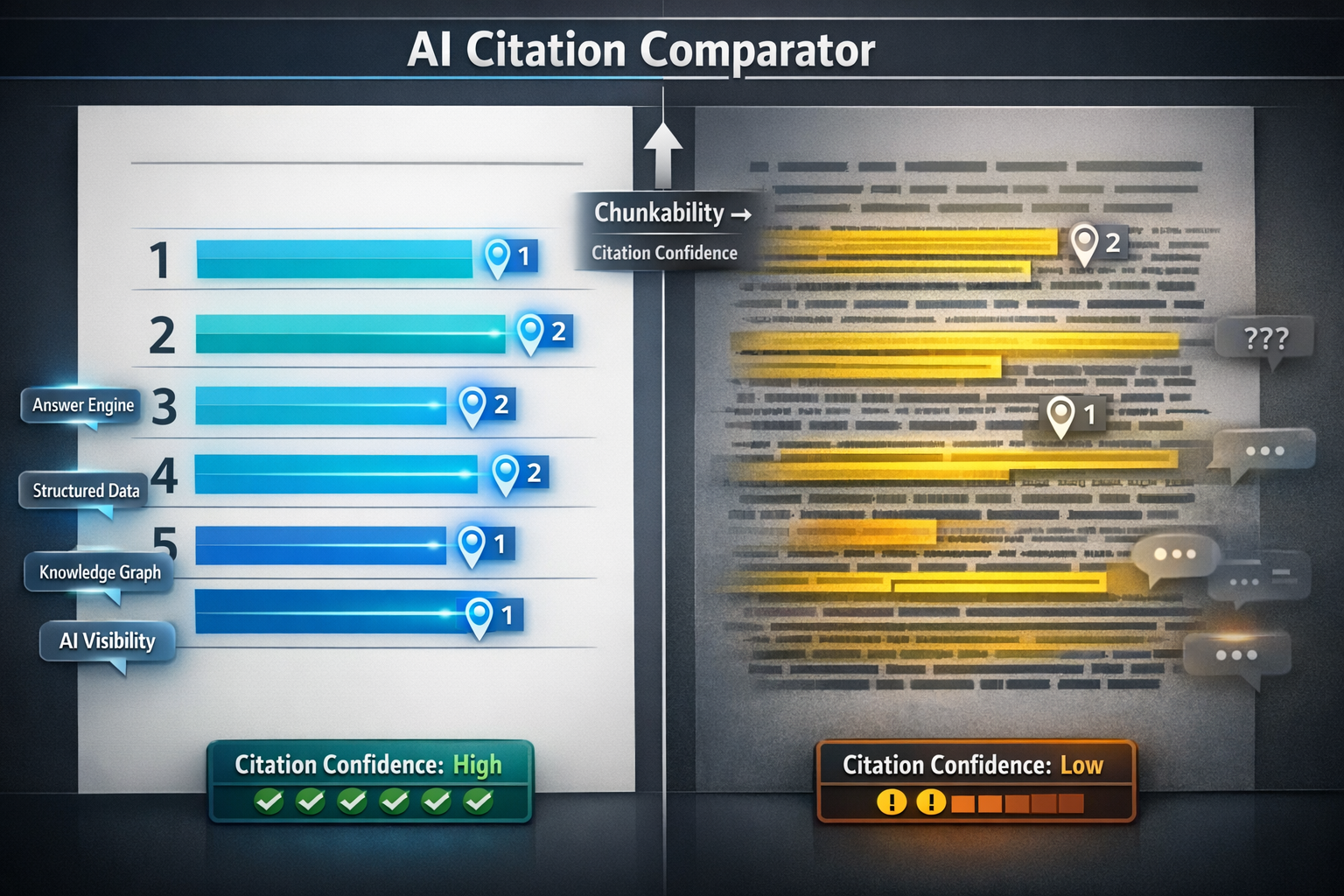

Why listicles tend to be more citable (conceptual model)

Use this as a scoring rubric when comparing listicles vs narrative guides in your own corpus.

Data opportunity: measure chunk retrievability by comparing average passage length, heading density, and unique entity count between listicles and narrative articles in your corpus; then correlate those features with citation frequency in AI answers.
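A possible starting point for that measurement is sketched below. It assumes Markdown sources with `##`/`###` headings and covers only two of the three features (average passage length and heading density); unique entity count would need a named-entity tool and is left out. The function names and the per-100-words scaling are illustrative choices, not an established metric.

```python
import re
from math import sqrt


def page_features(markdown_text):
    """Compute simple structure features for one page.

    Words before the first heading are counted toward the total, so
    avg_passage_words is an approximation.
    """
    heading_count = 0
    word_count = 0
    for line in markdown_text.splitlines():
        if re.match(r"^#{2,3}\s+\S", line):
            heading_count += 1
        else:
            word_count += len(line.split())
    passages = max(heading_count, 1)
    density = 100 * heading_count / word_count if word_count else 0.0
    return {"avg_passage_words": word_count / passages, "headings_per_100_words": density}


def pearson(xs, ys):
    """Pearson correlation of two equal-length lists.

    Raises ZeroDivisionError if either list is constant.
    """
    n = len(xs)
    mean_x, mean_y = sum(xs) / n, sum(ys) / n
    cov = sum((x - mean_x) * (y - mean_y) for x, y in zip(xs, ys))
    var_x = sum((x - mean_x) ** 2 for x in xs)
    var_y = sum((y - mean_y) ** 2 for y in ys)
    return cov / sqrt(var_x * var_y)


if __name__ == "__main__":
    print(page_features("## A\none two three four\n## B\nfive six"))
```

With one feature value and one citation count per page, `pearson(feature_values, citation_counts)` gives a first, rough read on whether the feature moves with citation frequency; a small corpus will not support strong conclusions.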

What Makes a Listicle “Citable” in Generative Engine Optimization (GEO)

The citation-ready item template (headline → claim → evidence → context)

A citable listicle is less about “10 things” and more about repeatable, extractable units. Use a consistent item pattern so the model can reliably parse what the item is, what you’re claiming, and why it’s true.

- Entity name (exact, unambiguous label).

- One-sentence value statement (the core claim).

- 2–3 bullets with concrete attributes (features, constraints, integrations, pricing model, performance).

- “Best for” qualifier (narrows scope; reduces overgeneralization).

- Limitations (trade-offs; increases trust and citation confidence).

Prefer “Ahrefs (SEO tool suite)” over “Ahrefs” and “RAG (retrieval-augmented generation)” over “RAG.” Self-contained labels reduce entity ambiguity in retrieval and improve citation accuracy.
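One way to enforce the item pattern is to store each entry as structured data and render it from a single template. The sketch below is illustrative only: `ListicleItem`, its field names, the Markdown layout, and the `ExampleCRM` entry are all hypothetical and would need adapting to your CMS.

```python
from dataclasses import dataclass


@dataclass
class ListicleItem:
    entity: str            # exact, unambiguous label, e.g. "RAG (retrieval-augmented generation)"
    claim: str             # one-sentence value statement
    attributes: list[str]  # 2-3 concrete attribute bullets
    best_for: str          # scope qualifier
    limitations: str       # trade-offs

    def validate(self):
        """Return a list of template violations (empty list means the item conforms)."""
        issues = []
        for name in ("entity", "claim", "best_for", "limitations"):
            if not getattr(self, name).strip():
                issues.append(f"{name} is empty")
        if not 2 <= len(self.attributes) <= 3:
            issues.append("expected 2-3 attribute bullets")
        return issues

    def render(self):
        """Render the item in a fixed order so every entry has the same shape."""
        lines = [f"### {self.entity}", "", self.claim, ""]
        lines += [f"- {attribute}" for attribute in self.attributes]
        lines += ["", f"Best for: {self.best_for}", "", f"Limitations: {self.limitations}"]
        return "\n".join(lines)


# Hypothetical entry; the product and its attributes are placeholders.
example = ListicleItem(
    entity="ExampleCRM (CRM platform)",
    claim="ExampleCRM is a placeholder product used to show the template.",
    attributes=["Placeholder attribute one", "Placeholder attribute two"],
    best_for="teams testing the item template",
    limitations="not a real product",
)

if __name__ == "__main__":
    assert example.validate() == []
    print(example.render())
```

Rendering from one template means a formatting change is made once and applied to every item, which keeps chunk boundaries consistent across the page.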

Schema and structured data that reinforce list semantics

Structured data won’t guarantee citations, but it can reduce machine uncertainty about what the page is and what the items are. For listicles, the most relevant patterns are typically ItemList (for membership), FAQPage (for common questions), and sometimes HowTo if the page includes a procedural section. For more on the GEO angle, see Structured Data for GEO.

Which schema patterns support listicle-style pages?

| Schema pattern | Best when | What it clarifies for answer engines |
|---|---|---|
| ItemList | You have a true list of entities/items | Item membership, ordering, and item names |
| FAQPage | You answer common questions related to the list | Canonical Q&A passages that are easy to cite |
| HowTo | You include a step-by-step process (not just recommendations) | Procedural steps and required materials |
| Product/SoftwareApplication | Items are products/tools with specs | Typed attributes (pricing, OS, category) |
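As an illustration of the ItemList pattern, the following sketch builds schema.org `ItemList` JSON-LD from an ordered list of items. The function name `item_list_jsonld` and the example URLs are hypothetical; the property names (`itemListElement`, `numberOfItems`, `ListItem`, `position`) are schema.org vocabulary. Validate the output with your preferred structured-data testing tool before publishing.

```python
import json


def item_list_jsonld(page_url, items):
    """Build ItemList JSON-LD. `items` is an ordered list of (name, url) pairs."""
    return {
        "@context": "https://schema.org",
        "@type": "ItemList",
        "url": page_url,
        "numberOfItems": len(items),
        "itemListElement": [
            {"@type": "ListItem", "position": position, "name": name, "url": url}
            for position, (name, url) in enumerate(items, start=1)
        ],
    }


if __name__ == "__main__":
    # Placeholder page and items; anchors assume each item has its own section id.
    data = item_list_jsonld(
        "https://example.com/best-crm",
        [
            ("ExampleCRM (CRM platform)", "https://example.com/best-crm#examplecrm"),
            ("SampleDesk (help desk)", "https://example.com/best-crm#sampledesk"),
        ],
    )
    print('<script type="application/ld+json">')
    print(json.dumps(data, indent=2))
    print("</script>")
```

Generating the markup from the same data that renders the visible list keeps the two in sync, which helps avoid the mismatched-schema problem.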

Criteria transparency: the hidden lever for trust

Listicles get cited when they look like they were produced with a method, not just opinions. Add a short methodology block near the top: what you evaluated, how you scored, inclusion/exclusion rules, and the last update date. This helps answer engines decide your page is safe to cite and reduces “hallucination risk” during synthesis. Tie this to your measurement program in Citation Confidence.

Before/after: listicle upgrades and AI citation lift (template)

Track citations, impressions, and referral clicks for 4–8 weeks after adding criteria + item templates + schema.
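Because the before and after windows may differ in length, it can help to compare weekly averages rather than totals. A minimal sketch, with hypothetical function names and placeholder numbers in the usage example:

```python
def weekly_mean(counts):
    """Average of a non-empty list of weekly counts."""
    return sum(counts) / len(counts)


def relative_lift(before_weekly, after_weekly):
    """Return (after mean - before mean) / before mean, or None if the baseline is zero."""
    baseline = weekly_mean(before_weekly)
    if baseline == 0:
        return None
    return (weekly_mean(after_weekly) - baseline) / baseline


if __name__ == "__main__":
    # Placeholder numbers: 4 baseline weeks, 6 weeks after the upgrade.
    lift = relative_lift([10, 10, 10, 10], [12, 14, 13, 13, 13, 13])
    print(f"Citation lift: {lift:.0%}")
```

The same function applies to impressions and referral clicks. A lift measured this way does not control for seasonality or algorithm changes, so read it as directional.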

Deep Dive: Designing Listicles for Featured Snippet + AI Overview Extraction

Snippet capture blueprint: definitions, numbered steps, and tight intros

Front-load a 40–60 word definition, then a short preview list (5–8 items) before expanding each item. This targets both paragraph and list featured snippets and gives answer engines an early “index” of entities. Keep item labels specific and avoid clever names that don’t match how people prompt (e.g., “Best CRM for SMB” is more prompt-aligned than “The SMB Closer”).

1. Write a one-paragraph definition (40–60 words): Define the category and include 1–2 constraints (scope, audience, timeframe) so the passage is citeable as a definition.

2. Add a 5–8 item preview list with entity-first labels: This creates early entity coverage and a clean list snippet candidate.

3. State your criteria + last updated date: Include evaluation dimensions, inclusion rules, and how often you refresh the list.

4. Use a consistent item template for every entry: Headline → claim → attributes → best for → limitations. Consistency improves passage retrieval and reduces synthesis errors.

5. Add corroboration hooks (evidence links, specs, screenshots): Where possible, link to primary docs, pricing pages, or official specs to increase grounding.

6. Include a comparison table (items × criteria): Tables make attributes extractable and reduce hallucinated comparisons.

7. Add internal anchors for each item: Anchors improve navigability for users and can help systems reference specific sections.

8. Reinforce semantics with structured data (when appropriate): Use ItemList and FAQPage where they match the content; avoid spammy or mismatched schema.
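The comparison table and internal anchors from the blueprint above can be generated together. This sketch assumes Markdown output and a simple slug rule for anchor ids; `slugify` and `comparison_table` are hypothetical helpers, the products are placeholders, and your CMS may generate heading ids differently, so check that the links resolve.

```python
import re


def slugify(label):
    """Lowercase the label, replace runs of non-alphanumerics with '-', trim dashes."""
    return re.sub(r"[^a-z0-9]+", "-", label.lower()).strip("-")


def comparison_table(criteria, rows):
    """Render an items x criteria Markdown table.

    `rows` maps item label -> {criterion: value}; missing values render as 'n/a'.
    Each item label links to its in-page anchor.
    """
    header = "| Item | " + " | ".join(criteria) + " |"
    divider = "|---|" + "---|" * len(criteria)
    lines = [header, divider]
    for label, values in rows.items():
        cells = [values.get(criterion, "n/a") for criterion in criteria]
        lines.append(f"| [{label}](#{slugify(label)}) | " + " | ".join(cells) + " |")
    return "\n".join(lines)


if __name__ == "__main__":
    # Placeholder products and values.
    print(
        comparison_table(
            ["Pricing model", "Best for"],
            {
                "ExampleCRM (CRM platform)": {"Pricing model": "per seat", "Best for": "SMB"},
                "SampleDesk (help desk)": {"Pricing model": "flat rate"},
            },
        )
    )
```

Rendering missing values as an explicit `n/a` avoids blank cells that a reader or a model might otherwise fill in with a guess.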

SERP-to-AI flywheel: why snippets often become AI citations

Featured snippets are already “pre-chunked” answers. When a URL wins a snippet, it’s a strong signal that the page contains a concise, extractable passage that matches intent. AI Overviews and answer engines often reuse the same kinds of passages—so snippet ownership can translate into higher citation probability, especially for head terms with stable intent.

Common anti-patterns that reduce AI Visibility

- Vague item names (no entity, no qualifier).

- Inconsistent formatting across items (hard to parse; weak chunk boundaries).

- Affiliate-only copy without concrete attributes or evidence.

- Missing dates and methodology (low trust).

- Ungrounded superlatives (“best ever”) without scope (“best for whom?”).

| SERP observation | How to record it | Why it matters for AI citations |
|---|---|---|
| Featured snippet present? | Yes/No + snippet type (paragraph/list/table) | Snippets indicate extractable passages that often become citation candidates. |
| AI Overview present? | Yes/No + number of citations shown | Lets you compute overlap rate between snippet owners and AI citations. |
| Does AI cite the snippet URL? | Yes/No + citation position | Quantifies the SERP-to-AI flywheel effect. |
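Once the observations in the table above are recorded, the overlap rate is a simple ratio. The sketch below assumes each observation is a dictionary with hypothetical keys `snippet_url` (or `None` when no snippet is present) and `ai_cited_urls` (empty when no AI Overview is shown), and that URLs are compared as exact strings; in practice you may want to normalize URLs first.

```python
def snippet_citation_overlap(observations):
    """Share of eligible queries where the AI answer cites the snippet URL.

    A query is eligible only if it has both a featured snippet and at least
    one AI citation. Returns None when no query is eligible.
    """
    eligible = [o for o in observations if o["snippet_url"] and o["ai_cited_urls"]]
    if not eligible:
        return None
    hits = sum(1 for o in eligible if o["snippet_url"] in o["ai_cited_urls"])
    return hits / len(eligible)


if __name__ == "__main__":
    # Placeholder observations for illustration only.
    observations = [
        {"query": "best x", "snippet_url": "https://example.com/a",
         "ai_cited_urls": ["https://example.com/a", "https://example.com/b"]},
        {"query": "top y", "snippet_url": "https://example.com/c",
         "ai_cited_urls": ["https://example.com/d"]},
        {"query": "best z", "snippet_url": None,
         "ai_cited_urls": ["https://example.com/e"]},
    ]
    print(snippet_citation_overlap(observations))
```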

Expert Perspectives + Practical Checklist (GEO-First Listicle Build)

Expert quote opportunities: what SEOs and IR researchers look for

If you want this spoke to earn citations itself, add expert perspectives that reinforce why structure matters. Three high-leverage quote angles: (1) a technical SEO on structured data and passage indexing, (2) an information retrieval/LLM researcher on chunking and citation behavior, and (3) an editorial lead on criteria transparency and update cadence. These quotes act as “corroboration” for your methodology and give answer engines additional, attributable claims to cite.

Implementation checklist: the 12-point “citable listicle” standard

Use this checklist to build or refactor listicles for AI Visibility and citation confidence. (Related: AI Visibility and Answer Engine Optimization.)

- Entity-first title and item labels (no ambiguous naming).

- 40–60 word definition near the top.

- Preview list of 5–8 items before the deep sections.

- Criteria + methodology block (what you evaluated and how).

- Last updated date (and refresh cadence if relevant).

- Consistent item template across all entries.

- Concrete attributes (2–3 bullets) per item; avoid fluff.

- “Best for” qualifier and “Limitations” for each item.

- Evidence links to primary sources (docs, specs, pricing) where possible.

- Comparison table (items × criteria) to make attributes extractable.

- Internal anchors for each item (improves navigability and referencing).

- Appropriate structured data (ItemList, FAQPage; HowTo only when truly procedural).
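A few of the checklist points are mechanical enough to check automatically before publishing. The sketch below covers only three of them (definition length, preview list size, and the presence of a "last updated" line); `lint_listicle` and its inputs are hypothetical, and the remaining points still need editorial review.

```python
import re


def lint_listicle(definition, preview_items, page_text):
    """Return a list of checklist violations for a draft listicle."""
    issues = []
    words = len(definition.split())
    if not 40 <= words <= 60:
        issues.append(f"definition has {words} words; target is 40-60")
    if not 5 <= len(preview_items) <= 8:
        issues.append(f"preview list has {len(preview_items)} items; target is 5-8")
    if not re.search(r"last updated", page_text, re.IGNORECASE):
        issues.append("no 'last updated' date found")
    return issues


if __name__ == "__main__":
    draft_definition = " ".join(["word"] * 45)  # stand-in for a 45-word definition
    draft_preview = ["Item A", "Item B", "Item C", "Item D", "Item E", "Item F"]
    print(lint_listicle(draft_definition, draft_preview, "Last updated: 2024-01-01"))
```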

Custom visualization plan

Custom visualization #1: “Citable Listicle Anatomy” diagram mapping page sections to answer-engine needs (retrieval, grounding, citation). Custom visualization #2 (optional): a comparison matrix template (items × criteria) that demonstrates how to make attributes extractable. Use these visuals to differentiate your content from generic “top 10” pages and increase the likelihood that models reuse your structure.

Answer engines are under scrutiny for how they use and attribute content. Keep citations and primary-source links clear, avoid copying competitor phrasing, and ensure your listicle adds original evaluation and context. For background on the broader debate, see the Wikipedia overview of Perplexity AI, which covers its legal and copyright disputes.
