Google Search Console 2025 Enhancements: Hourly Data + 24-Hour Comparisons for Faster GEO/SEO Anomaly Detection

Google Search Console’s 2025 hourly data and 24-hour comparisons speed anomaly detection for SEO/GEO. Learn workflows, metrics, and impacts.


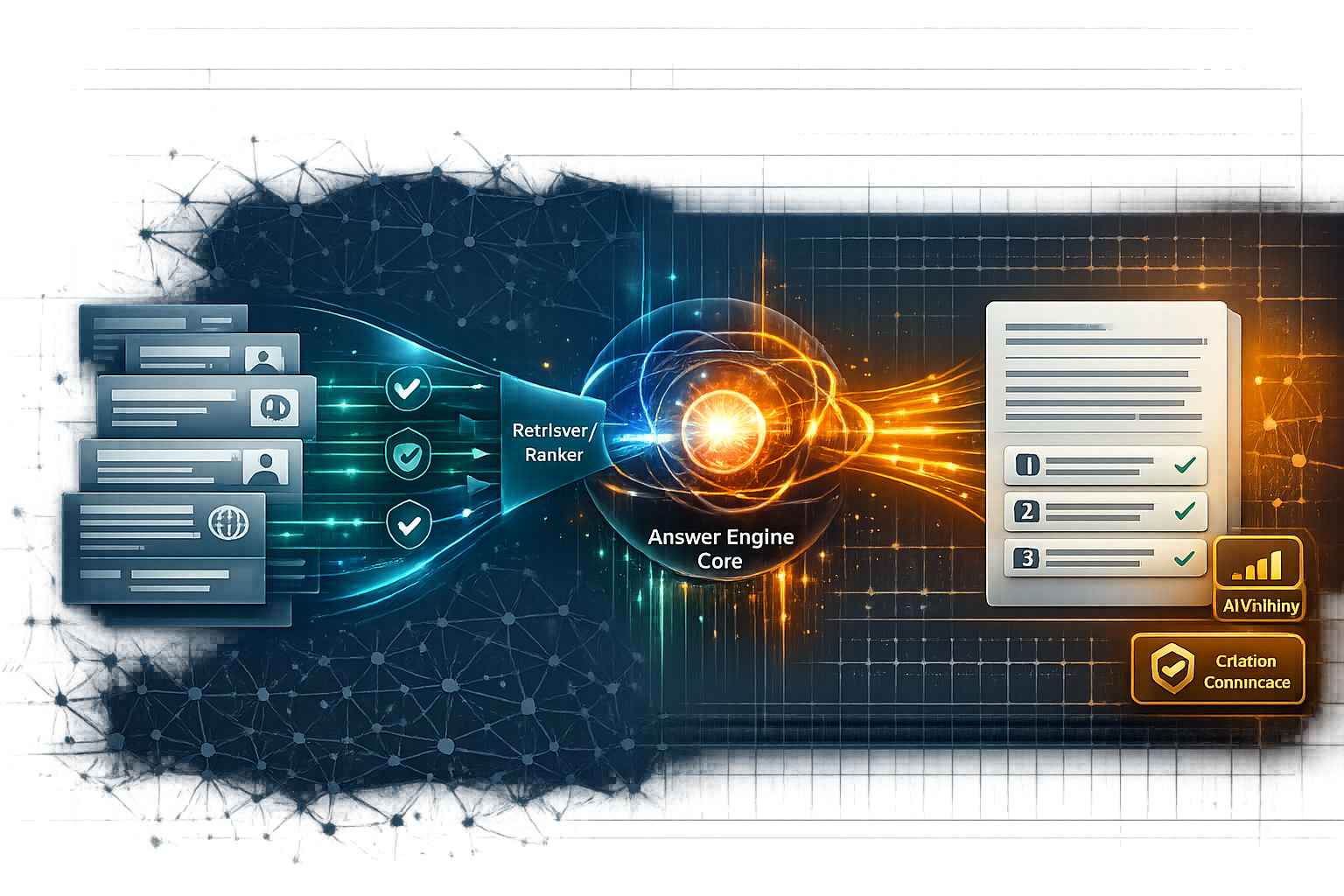

Google Search Console’s 2025 enhancements—hourly performance data (via the Search Analytics API) and 24-hour comparisons (in the Performance report)—reduce the time it takes to spot and validate SEO/GEO anomalies from “next day” to “same day.” In practice, that means you can detect indexing mistakes, rollout volatility, SERP feature changes, and entity/query mix shifts within hours, then correlate them to deployments, content launches, migrations, or algorithm turbulence before losses compound.

This matters beyond traditional SEO: as search experiences add more generative and multimodal surfaces, early signals often show up first as query mix and CTR behavior changes, not just rankings. Hourly monitoring makes those shifts observable quickly enough to respond with technical fixes, snippet/structured data adjustments, or entity clarity improvements.

Generative Engine Optimization (GEO) depends on fast feedback loops. If AI-driven SERP layouts, answer modules, or retrieval preferences change, the earliest public evidence is often CTR compression or a query-intent shift. Hourly Search Console data is one of the few widely available, first-party ways to spot it quickly.

What changed in Google Search Console in 2025—and why it matters now

The news hook: hourly performance data and 24-hour comparison views

Two changes are doing most of the operational work:

- A 24-hour comparison mode in the Performance report, designed to compare “last 24 hours” to “previous 24 hours” and reduce guesswork during short-term swings.

- Hourly data in the Search Analytics API (reported as up to ~10 days of hourly granularity), enabling monitoring, alerting, and post-incident forensics without waiting for daily rollups.

Industry coverage of these updates emphasizes anomaly detection and more granular reporting for teams running frequent releases and content updates (see the 2025 update summary on LinkedIn).

External source: Google Search Console 2025 enhancements overview.
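To make the API side concrete, here is a minimal sketch of a request body for hourly rows. It assumes the values Google described when it announced hourly data: `dataState: "HOURLY_ALL"` and an `HOUR` dimension. Treat those names, whether `HOUR` can be combined with other dimensions, and the 10-day cap as assumptions to check against the current `searchanalytics.query` reference. The helper `build_hourly_request` is hypothetical.

```python
from datetime import date, timedelta

# ASSUMPTION: "HOURLY_ALL" and "HOUR" follow Google's 2025 announcement of hourly
# Search Analytics data; confirm against the current searchanalytics.query reference.
MAX_HOURLY_DAYS = 10  # the article reports up to ~10 days of hourly granularity


def build_hourly_request(end: date, days: int = 2, extra_dimensions=None) -> dict:
    """Build a searchanalytics.query request body that asks for one row per hour."""
    if not 1 <= days <= MAX_HOURLY_DAYS:
        raise ValueError(f"days must be between 1 and {MAX_HOURLY_DAYS}")
    start = end - timedelta(days=days - 1)
    return {
        "startDate": start.isoformat(),
        "endDate": end.isoformat(),
        "dataState": "HOURLY_ALL",
        # HOUR first, then optional breakdowns such as "page" (combining them is an assumption).
        "dimensions": ["HOUR", *(extra_dimensions or [])],
        "rowLimit": 25000,
    }


body = build_hourly_request(date(2025, 4, 7), days=2)
print(body["startDate"], body["endDate"], body["dimensions"])  # 2025-04-06 2025-04-07 ['HOUR']
```

With google-api-python-client, a body like this would be sent through `service.searchanalytics().query(siteUrl=site_url, body=body).execute()`. Authentication and the property URL are left to your setup. A two-day window is the smallest one that covers both "last 24 hours" and "previous 24 hours".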

Why this is a GEO/SEO story (not just a reporting upgrade)

Historically, many teams treated Search Console as a lagging diagnostic tool: you learned about problems after daily aggregation and reporting delays. Hourly + 24-hour comparisons shift it closer to a monitoring surface—useful for incident response, release validation, and “search visibility observability.”

This timing matters because Google Search is increasingly shaped by generative and multimodal interactions (e.g., richer AI experiences, Lens-style discovery, and evolving SERP components), which can change click behavior faster than rankings alone explain.

External source: TechTarget on Google’s expanding generative AI search features.

Where Knowledge Graph signals intersect with faster detection

Knowledge Graph and entity understanding changes don’t always announce themselves as a simple rank drop. They often show up as abrupt changes in:

- Branded/entity query volume and composition (e.g., more disambiguation modifiers)

- Page group winners/losers (entity hubs vs. blog posts)

- Search appearance mix (rich result eligibility, snippet rewrites, SERP modules)

Hourly data helps you see those shifts quickly enough to connect them to a schema deployment, internal linking change, or content consolidation—before weekly reporting obscures causality. For deeper context on how algorithm volatility changes what gets surfaced and cited, explore Google Algorithm Update March 2025: What the Core Update Signals for AI Search Visibility, E-E-A-T, and Citation Confidence.

Figure: Detection latency, daily rollups vs hourly monitoring (illustrative medians). Illustrative median time-to-detect (TTD) and time-to-mitigate (TTM) for common SEO incidents; actual times vary by site size, alerting maturity, and release discipline.

How hourly + 24-hour comparisons change anomaly detection workflows

A practical “first 60 minutes” triage checklist

1. Confirm scope and timeframe. Verify the correct property (domain vs URL-prefix), then set the date range to Last 24 hours and enable Compare → Previous period (previous 24 hours).

2. Scan the four core metrics together. Check clicks, impressions, CTR, and average position deltas. Treat any single-metric change as a hypothesis until you see how the others move.

3. Segment quickly to localize the blast radius. Slice by query, page, country, device, and search appearance. The goal is to answer: “Is this sitewide, or isolated to a cluster?”

4. Correlate with change events. Overlay deployment times, CMS releases, CDN changes, robots/canonical edits, schema updates, and content launches. Hourly granularity is most valuable when you can attribute changes to a timestamped event.

5. Decide: monitor, mitigate, or escalate. Use sustained-duration rules (e.g., 3 consecutive hours) before rolling back, unless you see strong technical signatures (e.g., impressions collapsing across many URLs).
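The last two checklist steps can be sketched as two small functions. `candidate_causes` lists timestamped change events that landed shortly before an anomaly began. `decide` maps the sustained-duration rule to an action. The 6-hour lookback, the action labels, and both function names are hypothetical choices for illustration, not part of Search Console.

```python
from datetime import datetime, timedelta


def candidate_causes(anomaly_start, events, lookback=timedelta(hours=6)):
    """Return labels of change events in [anomaly_start - lookback, anomaly_start],
    most recent first. `events` is a list of (timestamp, label) pairs."""
    window_start = anomaly_start - lookback
    hits = [(ts, label) for ts, label in events if window_start <= ts <= anomaly_start]
    hits.sort(key=lambda e: e[0], reverse=True)
    return [label for _, label in hits]


def decide(breached_hours, broad_impression_collapse, sustained_hours=3):
    """Map the checklist's final step to an action (labels are hypothetical)."""
    if broad_impression_collapse:
        return "escalate"  # strong technical signature: don't wait out the sustained rule
    if breached_hours >= sustained_hours:
        return "mitigate"  # e.g. roll back the correlated release
    return "monitor"


start = datetime(2025, 5, 1, 12)
log = [
    (datetime(2025, 5, 1, 9), "CDN config change"),
    (datetime(2025, 5, 1, 11), "schema release"),
    (datetime(2025, 4, 30, 12), "blog launch"),
]
print(candidate_causes(start, log))  # ['schema release', 'CDN config change']
```

Listing the most recent event first reflects a common triage habit: the change closest to the onset is usually the cheapest one to check. It is not proof of causality.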

Use the 24-hour comparison to control for time-of-day effects. A one-hour dip at 3 a.m. local time is rarely actionable; a sustained deviation across multiple hours and segments usually is.
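Both windows span a full 24 hours, so comparing their totals already controls for time of day. Below is a minimal sketch of the four-metric comparison, assuming you have per-hour rows for each window. Average position is approximated as an impressions-weighted mean. That is an assumption about how to roll hourly averages up, not Search Console's exact aggregation. `HourRow` and the function names are hypothetical.

```python
from dataclasses import dataclass


@dataclass
class HourRow:
    clicks: int
    impressions: int
    position: float  # average position reported for that hour


def window_totals(rows):
    clicks = sum(r.clicks for r in rows)
    impressions = sum(r.impressions for r in rows)
    ctr = clicks / impressions if impressions else 0.0
    # Impressions-weighted position: a plain mean would overweight quiet hours.
    position = (sum(r.position * r.impressions for r in rows) / impressions
                if impressions else float("nan"))
    return {"clicks": clicks, "impressions": impressions, "ctr": ctr, "position": position}


def _pct(current, previous):
    return (current - previous) / previous * 100 if previous else float("nan")


def compare_windows(last_24, previous_24):
    """Percent change for clicks, impressions, and CTR; absolute change for position
    (positive = worse, because a larger position number means lower on the page)."""
    cur, prev = window_totals(last_24), window_totals(previous_24)
    return {
        "clicks_pct": _pct(cur["clicks"], prev["clicks"]),
        "impressions_pct": _pct(cur["impressions"], prev["impressions"]),
        "ctr_pct": _pct(cur["ctr"], prev["ctr"]),
        "position_delta": cur["position"] - prev["position"],
    }


previous = [HourRow(clicks=10, impressions=100, position=5.0)] * 24
last = [HourRow(clicks=8, impressions=100, position=6.0)] * 24
print(compare_windows(last, previous))
```

In this toy data, clicks and CTR fall about 20% while impressions are flat and position worsens by one. Read all four together, as the checklist suggests, rather than reacting to any one of them.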

Separating demand shifts from technical issues with comparison windows

The biggest practical benefit of “last 24 vs previous 24” is that it helps you distinguish:

- Demand shifts: impressions move first (up or down), position often stable.

- Technical disruptions: impressions collapse across many pages/queries, sometimes with position turning noisy or unavailable for affected URLs.

- SERP/layout changes: CTR shifts disproportionately while impressions and position look “normal.”
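One way to put a number on "across many pages" is to measure the share of meaningful pages whose impressions dropped sharply. A high share fits the technical-disruption signature. A low share points to a localized cluster or a demand change. Demand shifts can also be broad (seasonality, for example), which is why position stability still has to be checked alongside. The 50% drop and the 50-impression floor below are hypothetical thresholds.

```python
def blast_radius(prev_by_page: dict[str, int], cur_by_page: dict[str, int],
                 drop_threshold: float = 0.5, min_prev_impressions: int = 50) -> float:
    """Share of pages (with enough baseline impressions) whose impressions fell by at
    least `drop_threshold`. Pages missing from the current window count as zero."""
    eligible = [p for p, n in prev_by_page.items() if n >= min_prev_impressions]
    if not eligible:
        return 0.0
    dropped = sum(
        1 for p in eligible
        if cur_by_page.get(p, 0) <= prev_by_page[p] * (1 - drop_threshold)
    )
    return dropped / len(eligible)


prev = {"/a": 100, "/b": 100, "/c": 100, "/d": 10}
cur = {"/a": 20, "/b": 90}
print(round(blast_radius(prev, cur), 2))  # 0.67
```

The minimum-impressions floor keeps low-traffic URLs from dominating the share, since their hourly counts swing widely on their own.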

Entity/query segmentation: using Knowledge Graph-aligned slices

To make hourly monitoring useful for GEO (not just SEO), segment in ways that map to entity understanding and retrieval behavior:

- Branded/entity queries vs non-branded (include common disambiguation modifiers).

- Entity hub pages (category/product/service) vs supporting content (blog, docs, FAQs).

- Pages with structured data vs pages without (to spot eligibility regressions).

- Search appearance types (rich results, video/image, etc.) when available for your property.
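The branded/entity split is usually a query-text rule. Below is a minimal sketch using whole-word, case-insensitive matching. The brand terms and modifiers are hypothetical placeholders; substitute your own entity names and the disambiguation modifiers you actually see. `modifier_share` tracks the "more modifiers" signal as a simple count of queries, not weighted by impressions.

```python
import re

# HYPOTHETICAL brand terms and disambiguation modifiers: replace with your own.
BRAND_TERMS = ["acme", "acme analytics"]
MODIFIERS = ["company", "software", "login", "reviews", "vs"]


def _word_pattern(terms):
    # Whole-word, case-insensitive alternation; longest terms first.
    ordered = sorted(terms, key=len, reverse=True)
    return re.compile(r"\b(?:" + "|".join(map(re.escape, ordered)) + r")\b", re.IGNORECASE)


BRAND_RE = _word_pattern(BRAND_TERMS)
MODIFIER_RE = _word_pattern(MODIFIERS)


def query_segment(query: str) -> str:
    """Label a query as non-branded, branded, or branded with a disambiguation modifier."""
    if not BRAND_RE.search(query):
        return "non-branded"
    return "branded+modifier" if MODIFIER_RE.search(query) else "branded"


def modifier_share(queries):
    """Share of branded queries that carry a modifier (counts queries, not impressions)."""
    labels = [query_segment(q) for q in queries]
    branded = [lab for lab in labels if lab != "non-branded"]
    if not branded:
        return 0.0
    return sum(lab == "branded+modifier" for lab in branded) / len(branded)


print(query_segment("Acme analytics login"))  # branded+modifier
```

A rising `modifier_share` for branded queries, hour over hour, is one rough proxy for the disambiguation pressure described earlier.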

| Segment | Metric to watch hourly | Alert threshold (example) | Likely interpretation |
|---|---|---|---|
| Top 20 pages (commercial) | Clicks delta vs previous 24h, by hour | ≤ -20% for 3 consecutive hours | Potential indexing/ranking/CTR issue; isolate by query + appearance |
| Branded/entity queries | Impressions share + CTR | Impressions ≤ -15% and CTR ≤ -10%, sustained | Possible entity understanding shift, SERP module change, or reputation/intent change |
| Rich results appearance | Impressions by appearance type | ≤ -25% after a schema release | Eligibility regression or SERP feature volatility; validate in Rich Results Test + logs |

If you want to formalize anomaly detection, you can compute a simple hourly anomaly score (e.g., percent delta vs previous 24 hours for the same hour, with a sustained-duration rule). This is especially useful for GEO teams tracking citation/visibility proxies that may fluctuate quickly (see discussion of citation visibility metrics in: The Rise of LLM Citation Visibility).
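A minimal version of that score compares each hour in the current window with the same hour one day earlier, then requires a run of breaches before alerting. The -20% threshold and 3-hour run mirror the examples in the table above. Treating hours with a zero baseline as neutral is a simplifying assumption.

```python
def hourly_anomaly_scores(current: list[float], previous: list[float]) -> list[float]:
    """Percent delta per hour: current[h] vs previous[h] (the same hour, 24h earlier).
    Hours with a zero baseline are treated as neutral (0.0), a simplifying assumption."""
    if len(current) != len(previous):
        raise ValueError("windows must be aligned hour by hour")
    return [((c - p) / p * 100) if p else 0.0 for c, p in zip(current, previous)]


def sustained_breach(scores: list[float], threshold: float = -20.0, hours: int = 3) -> bool:
    """True if the score stays at or below `threshold` for `hours` consecutive hours."""
    run = 0
    for s in scores:
        run = run + 1 if s <= threshold else 0
        if run >= hours:
            return True
    return False


prev_clicks = [100, 100, 100, 100, 100, 100]
cur_clicks = [100, 75, 70, 80, 100, 100]
scores = hourly_anomaly_scores(cur_clicks, prev_clicks)
print(scores)                    # [0.0, -25.0, -30.0, -20.0, 0.0, 0.0]
print(sustained_breach(scores))  # True
```

Resetting the run counter on any non-breaching hour is what keeps a single 3 a.m. dip from paging anyone.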

What to watch: the 6 anomaly patterns hourly data exposes earlier

Hourly monitoring is most actionable when you recognize “metric signatures”—combinations of clicks, impressions, CTR, and position that point to likely root causes.

| Pattern | Hourly signature in GSC | Most likely causes to check first |
|---|---|---|
| 1) Indexing/crawling disruption | Impressions drop sharply across many pages/queries; clicks follow; position may become erratic | Robots/noindex, canonicals, server 5xx, sitemap changes, rendering issues |
| 2) Ranking volatility (algorithmic/competitive) | Average position worsens; impressions may stay flat; clicks decline gradually | Competitor movement, core update effects, intent reclassification, internal linking shifts |
| 3) CTR shift (SERP layout/AI modules) | Clicks drop; impressions stable; position stable; CTR down disproportionately | Snippet rewrites, new SERP features, AI Overview crowding, rich result loss |
| 4) Geo/device spike | Anomaly isolated to a country or device; sitewide looks normal | Hreflang, localization routing, CDN/regional outage, mobile UX/performance regression |
| 5) Structured data eligibility change | Search appearance impressions shift after schema change; CTR may follow | Schema regression, invalid markup, template rollout, missing required properties |
| 6) Entity visibility shift (Knowledge Graph/disambiguation) | Branded queries change composition; more modifiers; hub pages lose/gain share quickly | Entity ambiguity, competing entities, inconsistent naming, weak corroboration signals |

A useful rule: if impressions collapse, suspect discoverability (indexing/crawling). If position worsens, suspect ranking/competition. If CTR collapses with stable impressions and position, suspect SERP layout or snippet dynamics.
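That rule of thumb can be written as an ordered check over the window deltas: impressions first, then position, then CTR. Because the checks run in that order, the CTR branch only fires when impressions have not collapsed and position has not worsened. All three thresholds are hypothetical placeholders to tune against your own baseline noise.

```python
def classify_signature(impr_pct: float, pos_delta: float, ctr_pct: float,
                       impr_collapse: float = -30.0, pos_worse: float = 1.0,
                       ctr_collapse: float = -20.0) -> str:
    """Ordered rule of thumb. Inputs are last-24h vs previous-24h deltas:
    percent change for impressions and CTR, absolute change for position
    (positive = worse). Thresholds are illustrative, not recommended values."""
    if impr_pct <= impr_collapse:
        return "discoverability (indexing/crawling)"
    if pos_delta >= pos_worse:
        return "ranking/competition"
    if ctr_pct <= ctr_collapse:
        return "SERP layout or snippet dynamics"
    return "no clear signature; keep monitoring"


print(classify_signature(impr_pct=-2.0, pos_delta=0.1, ctr_pct=-35.0))
# SERP layout or snippet dynamics
```

The output is a starting hypothesis for which row of the pattern table to investigate first, not a diagnosis.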

Implications for GEO: faster feedback loops for AI retrieval and citations

Why GEO teams should care about hourly Search Console signals

In GEO, “visibility” is increasingly multi-surface: classic blue links, rich results, and AI-influenced layouts. When AI answer systems accelerate response generation and change how they ground outputs, the downstream effect can be faster shifts in click behavior and query intent distribution.

External source: coverage of AI search speed and model integrations highlights how quickly answer experiences can change user behavior and traffic patterns (e.g., Perplexity’s Gemini-related acceleration commentary): PromptInjection AI roundup.

Knowledge Graph, structured data, and entity clarity as leading indicators

If your entity representation is strong (consistent naming, clear entity-page relationships, corroborating references, and clean structured data), you tend to see:

- More stable branded/entity query performance during volatility

- Cleaner query-to-page mapping (fewer “wrong page ranking” hours after releases)

- Faster detection when something breaks (because the baseline is less noisy)

Figure: Operational impact of hourly monitoring (example outcomes). Illustrative improvements teams often target after adding hourly monitoring and 24h comparisons: faster detection, faster recovery, and better attribution to releases.

Predictions: how teams will operationalize this in 2025

- Always-on monitoring becomes standard for mid-market and enterprise sites (not only news and e-commerce).

- Release-to-observation cycles tighten (schema/template releases validated within hours, not days).

- GEO teams track “early warning” segments: branded/entity queries, rich-result appearances, and top conversion pages.

What to do next: a lightweight monitoring setup (without overengineering)

Minimum viable alerting: thresholds, segments, and cadence

A minimal setup that works for most teams is to monitor five segments hourly (or every 2–3 hours) using last-24 vs previous-24 deltas:

- Sitewide (all queries/pages)

- Top 20 pages by conversions/revenue (or leads)

- Top queries (branded + highest intent non-branded)

- Top country/market

- Top device (usually mobile)

| Segment | Metric | Threshold (example) | Sustained rule | Primary owner |
|---|---|---|---|---|
| Sitewide | Impressions | ≤ -25% vs previous 24h | 3 consecutive hours | Technical SEO / SRE |
| Top pages | Clicks | ≤ -20% vs previous 24h | 3 consecutive hours | SEO lead + Product owner |
| Top queries | Avg position | ≥ +1.5 (worse) vs previous 24h | 2–4 hours sustained | SEO / Content |
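The matrix above is small enough to keep as data next to the code that evaluates it. Below is a minimal sketch under that assumption. The segment keys are hypothetical, and the 2-hour rule for top queries takes the lower bound of the table's "2–4 hours". Position is handled as an absolute increase, because a higher number is worse. The other metrics are percent drops.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class AlertRule:
    segment: str
    metric: str
    threshold: float      # percent change, or absolute change for "position"
    sustained_hours: int
    owner: str


# Illustrative rules mirroring the example table; segment keys are hypothetical.
RULES = [
    AlertRule("sitewide", "impressions", -25.0, 3, "Technical SEO / SRE"),
    AlertRule("top_pages", "clicks", -20.0, 3, "SEO lead + Product owner"),
    AlertRule("top_queries", "position", 1.5, 2, "SEO / Content"),
]


def breached(rule: AlertRule, value: float) -> bool:
    # Position worsens as the number rises; other metrics breach on a large drop.
    return value >= rule.threshold if rule.metric == "position" else value <= rule.threshold


def owners_to_notify(hourly_values: dict[tuple[str, str], list[float]]) -> list[str]:
    """hourly_values maps (segment, metric) to hourly deltas vs previous 24h, oldest first."""
    owners = []
    for rule in RULES:
        recent = hourly_values.get((rule.segment, rule.metric), [])[-rule.sustained_hours:]
        if len(recent) == rule.sustained_hours and all(breached(rule, v) for v in recent):
            owners.append(rule.owner)
    return owners


values = {
    ("sitewide", "impressions"): [-5.0, -30.0, -26.0, -27.0],
    ("top_pages", "clicks"): [-25.0, -10.0, -30.0],
    ("top_queries", "position"): [1.6, 2.0],
}
print(owners_to_notify(values))  # ['Technical SEO / SRE', 'SEO / Content']
```

Keeping the owner on each rule means an alert always carries a named first responder, which matches the table's intent.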

Expert quote opportunities and what to ask

- SEO lead: “What incidents do you now catch within hours that used to take a day or more?”

- Technical SEO: “What metric signature most reliably indicates a crawling/indexing break?”

- GEO/AI search specialist: “Which Search Console changes correlate best with citation volatility or AI-driven SERP module changes?”

- Structured data/Knowledge Graph expert: “Which entity clarity signals reduce disambiguation and stabilize branded query performance?”

Limitations and caveats (noise, latency, timezone)

- Hourly data can be noisier—use rolling windows and sustained rules to avoid overreacting.

- Expect reporting latency; “hourly” does not mean instantaneous.

- Timezone alignment matters for comparisons; standardize on a single operational timezone for alerts and annotations.
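A simple way to standardize is to convert every hourly key to UTC before comparing it with deployment logs or annotations. The sketch below assumes hourly rows are keyed by ISO 8601 timestamps that carry an explicit UTC offset (for example a Pacific Time hour such as `2025-04-07T15:00:00-07:00`). Verify the actual key format in your API responses. Keys without an offset are rejected rather than guessed.

```python
from datetime import datetime, timezone


def to_utc_hour(hour_key: str) -> datetime:
    """Parse an ISO 8601 hour key with a UTC offset and normalize it to UTC.
    ASSUMPTION: keys include an explicit offset; naive keys raise ValueError."""
    ts = datetime.fromisoformat(hour_key)
    if ts.tzinfo is None:
        raise ValueError(f"hour key {hour_key!r} has no UTC offset; refusing to guess")
    return ts.astimezone(timezone.utc)


print(to_utc_hour("2025-04-07T15:00:00-07:00").isoformat())  # 2025-04-07T22:00:00+00:00
```

Normalizing to aware UTC datetimes also sidesteps daylight-saving transitions, where the same local hour can map to different offsets across the year.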

Key Takeaways

Hourly Search Analytics API data + 24-hour comparisons reduce anomaly detection from “next day” to “same day,” improving incident response and release validation.

Use metric signatures (impressions vs position vs CTR) to distinguish demand shifts, technical issues, ranking volatility, and SERP/AI layout changes.

For GEO, prioritize entity-aligned segmentation (branded/entity queries, hub pages, structured-data pages, search appearance types) to detect retrieval/citation-adjacent shifts earlier.

A lightweight monitoring matrix (5 segments, simple thresholds, sustained-duration rules) delivers most of the value without overengineering.


For deeper coverage of how core updates affect AI search visibility and “citation confidence,” read Google Algorithm Update March 2025: What the Core Update Signals for AI Search Visibility, E-E-A-T, and Citation Confidence, then align your hourly monitoring segments to the pages and queries most sensitive to volatility.

Founder of Geol.ai

Senior builder at the intersection of AI, search, and blockchain. I design and ship agentic systems that automate complex business workflows. On the search side, I’m at the forefront of GEO/AEO (AI SEO), where retrieval, structured data, and entity authority map directly to AI answers and revenue. I’ve authored a whitepaper on this space and road-test ideas currently in production. On the infrastructure side, I integrate LLM pipelines (RAG, vector search, tool calling), data connectors (CRM/ERP/Ads), and observability so teams can trust automation at scale. In crypto, I implement alternative payment rails (on-chain + off-ramp orchestration, stable-value flows, compliance gating) to reduce fees and settlement times versus traditional processors and legacy financial institutions. A true Bitcoin treasury advocate. 18+ years of web dev, SEO, and PPC give me the full stack—from growth strategy to code. I’m hands-on (Vibe coding on Replit/Codex/Cursor) and pragmatic: ship fast, measure impact, iterate. Focus areas: AI workflow automation • GEO/AEO strategy • AI content/retrieval architecture • Data pipelines • On-chain payments • Product-led growth for AI systems Let’s talk if you want: to automate a revenue workflow, make your site/brand “answer-ready” for AI, or stand up crypto payments without breaking compliance or UX.
