Perplexity AI’s $400 Million Snapchat Deal: A Case Study in AI Retrieval & Content Discovery at Social Scale

Case study on Perplexity AI’s reported $400M Snapchat integration and what it signals for AI Retrieval & Content Discovery, product UX, and publisher traffic.


Perplexity AI’s reported $400M integration with Snapchat is a clear signal that “search” is shifting from a destination (a search box in a browser) to a capability embedded inside social and messaging surfaces. The strategic bet: if users already ask questions in chat, then an in-chat answer engine that can retrieve, rank, ground, and cite sources can become the default discovery layer—at Snapchat scale—without requiring users to leave the app.

This spoke article treats the deal as a case study in AI Retrieval & Content Discovery: what changes when retrieval pipelines and citation UX must work under mobile latency budgets, safety constraints, and social behavior loops (share, follow-up, abandon). We’ll focus on what the integration likely requires, what success metrics look like, and what publishers/brands can do to increase eligibility for retrieval and citation.

Social apps have historically optimized discovery for in-app objects (accounts, lenses, creators, Stories). An answer engine adds a second discovery mode: open-web and knowledge discovery with citations. That changes product UX, publisher traffic patterns, and how content must be structured to be retrieved.

What the $400M Snapchat Integration Signals for AI Retrieval & Content Discovery

What is the Perplexity–Snapchat deal?

The Perplexity–Snapchat deal (as reported) is an agreement to integrate Perplexity’s AI search/answer experience directly into Snapchat, positioning Perplexity as a native in-app discovery and question-answering layer. In practice, that implies Snapchat users can ask natural-language questions inside chat-like surfaces and receive concise, grounded answers with source attribution—without switching to a browser.

For reporting on the partnership and its strategic implications, see Engadget’s coverage.

Why this matters: From social feed to answer engine inside chat

Snapchat’s incentive is straightforward: reduce friction between curiosity and resolution. If users can satisfy intent (definitions, comparisons, “what should I buy,” “what does this mean,” “what’s happening”) inside chat, Snapchat increases session depth and retention. Perplexity’s incentive is equally clear: access to social-scale query volume and behavioral feedback loops that improve retrieval and ranking over time.

Zooming out, this move fits a broader industry pattern: major model providers are adding web retrieval to improve freshness and reduce hallucinations. See how web search is being integrated into assistants in reports like InfoQ’s coverage of Claude web search and WIRED’s reporting on ChatGPT’s real-time web integration.

For deeper coverage on how different products approach retrieval, grounding, and browsing versus RAG-style citation patterns, explore: Yahoo's 'Scout' Chatbot: A New Contender in the AI Search Arena.

Situation: Snapchat’s Discovery Problem and Perplexity’s Retrieval Advantage

User intent shift: Navigational search vs conversational discovery in chat

Snapchat’s native search historically excels at navigational intent: “find this creator,” “open this Lens,” “search this Snap Star.” But chat surfaces increasingly capture informational and commercial intent: users ask friends (and now apps) to explain concepts, summarize events, compare products, or recommend what to do next. That’s not an “account lookup” problem—it’s an AI Retrieval & Content Discovery problem: interpret intent, retrieve relevant sources, and synthesize a short answer that’s credible enough to trust.

Constraints: latency, safety, and citations on mobile-first surfaces

An in-chat answer engine has less room for error than a browser search page. Users expect near-instant responses, minimal scrolling, and high confidence. That means retrieval has to be fast, generation must be constrained, and citations must be compact but meaningful (e.g., 2–4 sources, clear publisher names, and stable URLs). On top of that, Snapchat must enforce strict safety policies for minors and sensitive topics, which makes source selection and answer phrasing as important as raw relevance.

Competitive landscape: Google/Apple search defaults vs in-app answer engines

Social platforms have always competed with default search pathways (mobile browser, OS search, Google app). What changes with embedded answer engines is that the “search moment” can be captured before a user ever leaves chat. If this works, Snapchat doesn’t need to replace Google globally; it just needs to win the micro-moments that start inside a conversation.

Mobile Answer Engine Performance Targets (Illustrative Benchmarks)

Illustrative p50/p95 latency and quality guardrails teams often use for mobile, in-chat answer experiences. Values are directional targets, not Snapchat- or Perplexity-specific measurements.

At social scale, small error rates become large absolute numbers. If an answer engine is fast but frequently uncited, stale, or overconfident, trust collapses quickly. Product teams should treat citation coverage and unsupported-claim rate as first-class reliability metrics, not “nice-to-haves.”

Approach: How an In-Chat Answer Engine Would Implement AI Retrieval & Content Discovery

Retrieval pipeline design: indexing, freshness, and ranking signals

A plausible Snapchat-tailored retrieval system starts with query understanding (intent classification, entity extraction, locale/language detection), then retrieves candidates from one or more indexes (web, news, knowledge base, creator/content graph), reranks them with context (recency, authority, user location, conversation context), and only then generates a short response. The key is that generation should be downstream of ranking, not a substitute for it.
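To make the ordering concrete, here is a minimal Python sketch of the four stages, assuming a toy in-memory index. Every function name (`understand`, `retrieve`, `rerank`, `generate`) and the per-URL `authority` prior are hypothetical. Term overlap and a rule-based intent check stand in for the trained models and the real web, news, and knowledge-base indexes a production system would use. What the sketch shows is structural: generation only ever sees passages that survived ranking.

```python
import re
from dataclasses import dataclass

# Hypothetical names throughout: toy heuristics stand in for trained models
# and real indexes. The point is the stage order: understand -> retrieve ->
# rerank -> generate, with generation consuming only ranked passages.


@dataclass
class Candidate:
    url: str
    passage: str
    score: float = 0.0


def tokens(text: str) -> set[str]:
    return set(re.findall(r"[a-z0-9']+", text.lower()))


def understand(text: str) -> dict:
    # Query understanding: a toy intent rule in place of a classifier.
    # A real system would also extract entities and detect locale.
    intent = "definition" if text.lower().startswith(("what is", "what's")) else "open"
    return {"text": text, "intent": intent, "terms": tokens(text)}


def retrieve(query: dict, index: dict[str, str]) -> list[Candidate]:
    # Candidate generation: term overlap stands in for web/news/KB retrieval.
    hits = []
    for url, passage in index.items():
        overlap = len(query["terms"] & tokens(passage))
        if overlap:
            hits.append(Candidate(url, passage, float(overlap)))
    return hits


def rerank(candidates: list[Candidate], authority: dict[str, float]) -> list[Candidate]:
    # Reranking: scale each retrieval score by an assumed per-URL authority prior.
    for c in candidates:
        c.score *= authority.get(c.url, 1.0)
    return sorted(candidates, key=lambda c: c.score, reverse=True)


def generate(ranked: list[Candidate], k: int = 3) -> dict:
    # Generation is downstream: it only sees the top-k ranked passages.
    # Here it just echoes the best passage; an LLM call would go here.
    top = ranked[:k]
    if not top:
        return {"answer": None, "citations": []}
    return {"answer": top[0].passage, "citations": [c.url for c in top]}


def answer(text: str, index: dict[str, str], authority: dict[str, float]) -> dict:
    query = understand(text)  # intent could route to different indexes; unused here
    return generate(rerank(retrieve(query, index), authority))


index = {
    "https://example.com/lens": "A Lens is an augmented reality effect in Snapchat.",
    "https://example.org/stories": "Stories are posts that disappear after 24 hours.",
}
result = answer("What is a Lens?", index, {"https://example.com/lens": 1.5})
```

Swapping any stage for a real model keeps the same contract: if retrieval and reranking return nothing, the sketch returns no answer rather than letting generation improvise.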

Grounding & citations: RAG-style synthesis with source selection

To reduce hallucinations, the answer should be grounded in retrieved passages (RAG-style). Source selection becomes a product decision: do you prefer fewer, higher-trust sources; or broader diversity to reduce single-source bias? In social chat, citations must be scannable (publisher + title + date) and tappable, with stable canonical URLs. Systems may also run redundancy checks (multiple sources supporting the same claim) before allowing an answer to be stated confidently.
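The redundancy check and the compact citation list can be sketched as follows, assuming an upstream step has already mapped each claim in a draft answer to the URLs whose passages support it. `MIN_SUPPORT`, `MAX_CITATIONS`, and the `trust` scores are illustrative assumptions, not values used by either company. A claim backed by fewer than two distinct publisher domains is labeled `hedge`, which the answer could render with softer phrasing.

```python
from urllib.parse import urlparse

# Assumes an upstream step has already mapped each claim in the draft answer
# to the retrieved URLs whose passages support it. Thresholds are illustrative.
MIN_SUPPORT = 2     # distinct publisher domains needed to state a claim confidently
MAX_CITATIONS = 4   # compact citation list for a mobile chat surface


def domain(url: str) -> str:
    return urlparse(url).netloc.lower().removeprefix("www.")


def check_claims(support: dict[str, list[str]]) -> dict[str, str]:
    """Label each claim 'confident' or 'hedge' by counting distinct supporting domains."""
    return {
        claim: "confident" if len({domain(u) for u in urls}) >= MIN_SUPPORT else "hedge"
        for claim, urls in support.items()
    }


def select_citations(support: dict[str, list[str]], trust: dict[str, float]) -> list[str]:
    """Pick up to MAX_CITATIONS URLs: most claims backed first, then domain trust."""
    coverage: dict[str, int] = {}
    for urls in support.values():
        for u in dict.fromkeys(urls):  # dedupe while keeping order
            coverage[u] = coverage.get(u, 0) + 1
    ranked = sorted(coverage, key=lambda u: (coverage[u], trust.get(domain(u), 0.0)), reverse=True)
    picked, seen = [], set()
    for u in ranked:
        if domain(u) in seen:
            continue  # one citation per publisher keeps the list diverse
        picked.append(u)
        seen.add(domain(u))
        if len(picked) == MAX_CITATIONS:
            break
    return picked


support = {
    "claim A": ["https://www.example.com/a", "https://news.example.org/b"],
    "claim B": ["https://www.example.com/a"],
}
labels = check_claims(support)
citations = select_citations(support, {"example.com": 0.9, "news.example.org": 0.7})
```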

Feedback loops: implicit signals from chat behavior to improve retrieval

Snapchat has a unique advantage: chat-native feedback. Follow-up questions can indicate incomplete retrieval; citation clicks can validate relevance; quick abandonment suggests mismatch or low trust; and shares/saves can act as “answer usefulness” signals. Over time, these signals can tune retrieval and reranking so the system learns which sources and formats resolve intent fastest for different cohorts and locales.
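One simple way to turn chat-native signals into ranking input is an exponential moving average per (cohort, source) pair. The sketch below assumes hypothetical event names and reward weights. Positive signals such as citation clicks and shares pull a source’s score up, and abandonment pulls it down. A reranker could then read these scores as one feature among many.

```python
# Hypothetical signal names and reward weights, chosen to mirror the signals
# in the text; they are not tuned values from any production system.
SIGNAL_REWARD = {
    "citation_click": 1.0,  # validates relevance
    "share": 1.0,           # usefulness signal
    "save": 0.8,
    "follow_up": -0.3,      # may indicate incomplete retrieval
    "abandon": -1.0,        # mismatch or low trust
}
ALPHA = 0.1  # learning rate of the exponential moving average


def update_source_scores(
    scores: dict[tuple[str, str], float],
    events: list[tuple[str, str, str]],
) -> dict[tuple[str, str], float]:
    """Fold (cohort, source_domain, signal) events into per-cohort source scores.

    Returns a new dict; unknown signals are ignored.
    """
    updated = dict(scores)
    for cohort, source, signal in events:
        reward = SIGNAL_REWARD.get(signal)
        if reward is None:
            continue
        key = (cohort, source)
        updated[key] = (1 - ALPHA) * updated.get(key, 0.0) + ALPHA * reward
    return updated


scores = update_source_scores({}, [
    ("en-US", "example.com", "citation_click"),
    ("en-US", "example.com", "abandon"),
    ("fr-FR", "example.fr", "share"),
])
```

Keying by cohort (here a locale string) lets the same source earn different scores in different markets, which matches the goal of learning what resolves intent for each cohort and locale.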

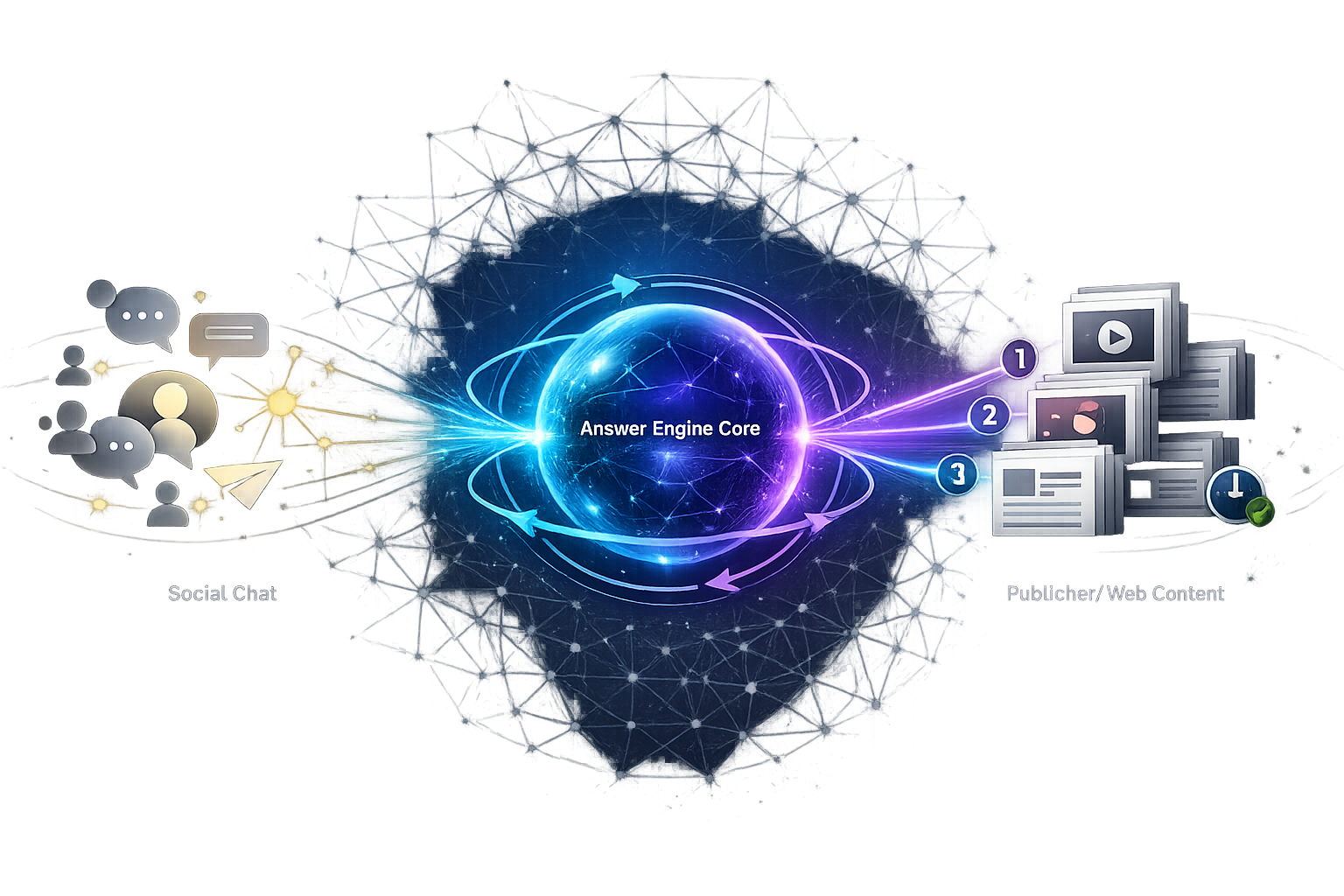

In-Chat AI Retrieval & Content Discovery Pipeline (Conceptual)

A conceptual stage-by-stage view of how an in-chat answer engine can move from a user question to a cited, safe response under mobile latency constraints.

Define “answerable” intents and a gold set

Create a labeled set of common Snapchat-style questions (short, slangy, context-dependent) and define what “good” looks like: must cite sources, must be recent, must be safe, must be concise.
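A gold-set rubric can be encoded directly, so every model or index change is graded against the same four bars. The `GoldItem` and `Answer` types, the 30-day freshness window, and the 60-word limit below are hypothetical; `policy_flagged` assumes a separate safety classifier has already run.

```python
from dataclasses import dataclass, field
from datetime import date

# Hypothetical gold-set schema: each item records the question and the bars a
# "good" answer must clear. The default thresholds are illustrative.


@dataclass
class GoldItem:
    question: str
    max_source_age_days: int = 30
    max_words: int = 60


@dataclass
class Answer:
    text: str
    citation_dates: list[date] = field(default_factory=list)
    policy_flagged: bool = False  # set by a separate safety classifier


def grade(item: GoldItem, ans: Answer, today: date) -> dict[str, bool]:
    newest = max(ans.citation_dates, default=None)
    return {
        "cited": newest is not None,
        "recent": newest is not None and (today - newest).days <= item.max_source_age_days,
        "safe": not ans.policy_flagged,
        "concise": len(ans.text.split()) <= item.max_words,
    }


def pass_rate(graded: list[dict[str, bool]]) -> float:
    """Share of gold items whose answer clears every bar."""
    return sum(all(g.values()) for g in graded) / len(graded) if graded else 0.0


item = GoldItem("whats a lens")
today = date(2025, 1, 20)
good = grade(item, Answer("A Lens is an AR effect.", [date(2025, 1, 10)]), today)
stale = grade(item, Answer("A Lens is an AR effect.", [date(2024, 1, 10)]), today)
```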

Track stage-level latency (p50/p95) and failure modes

Measure retrieval time, rerank time, generation time, and safety-filter time separately. Also log “no answer,” “no citation,” and “policy block” events to avoid hiding problems behind a single average latency metric.
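Computing percentiles per stage, rather than one end-to-end average, is straightforward with Python’s standard `statistics.quantiles`. The log shape (an `ms` dict of stage timings plus an optional `outcome` label) and the failure labels are assumptions for illustration.

```python
import statistics
from collections import Counter

# Assumes hypothetical request logs: one dict per request with per-stage
# timings in milliseconds ("ms") and an optional failure "outcome" label.
STAGES = ("retrieval", "rerank", "generation", "safety")
FAILURES = ("no_answer", "no_citation", "policy_block")


def percentile(values: list[float], p: int) -> float:
    # quantiles(n=100) returns 99 cut points; index p - 1 is the p-th percentile.
    return statistics.quantiles(values, n=100, method="inclusive")[p - 1]


def stage_report(logs: list[dict]) -> dict:
    report = {}
    for stage in STAGES:
        samples = [log["ms"][stage] for log in logs if stage in log["ms"]]
        if len(samples) >= 2:  # quantiles() needs two points before Python 3.13
            report[stage] = {"p50": percentile(samples, 50), "p95": percentile(samples, 95)}
    outcomes = Counter(log.get("outcome") for log in logs)
    report["failures"] = {f: outcomes[f] for f in FAILURES}
    return report


logs = [{"ms": {"retrieval": v, "generation": 4 * v}} for v in (10, 20, 30, 40, 50)]
logs[0]["outcome"] = "no_citation"
report = stage_report(logs)
```

Stages with no samples simply drop out of the report, and failure counts are always present (zero or not), so a drop in answers cannot hide behind a healthy latency number.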

Use proxy metrics for precision/recall

In production, you rarely have true recall. Use proxies: citation CTR, long-click (dwell) on citations, follow-up rate, and “answer reformulation” rate (user re-asks in different words) to infer relevance and completeness.
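The proxies above can be computed from per-answer records. In this sketch, the record fields, the 30-second long-click threshold, and the word-overlap (Jaccard) test for reformulation are all illustrative assumptions; lexical overlap is a crude stand-in for a semantic similarity model.

```python
import re

# Assumes hypothetical per-answer records: the question, how many citations
# were shown, dwell time (seconds) for each citation click, and the user's
# next message if any. Both thresholds are illustrative assumptions.
LONG_CLICK_SECONDS = 30
REFORMULATION_JACCARD = 0.5


def jaccard(a: str, b: str) -> float:
    ta, tb = set(re.findall(r"\w+", a.lower())), set(re.findall(r"\w+", b.lower()))
    union = ta | tb
    return len(ta & tb) / len(union) if union else 0.0


def is_reformulation(prev_query: str, next_query: str) -> bool:
    # Crude heuristic: heavy word overlap without an exact repeat suggests the
    # user re-asked the same question in different words.
    return REFORMULATION_JACCARD <= jaccard(prev_query, next_query) < 1.0


def proxy_metrics(answers: list[dict]) -> dict[str, float]:
    n = len(answers)
    shown = sum(a["citations_shown"] for a in answers)
    dwell = [s for a in answers for s in a["click_dwell_seconds"]]
    follow_ups = [a for a in answers if a["follow_up"] is not None]
    return {
        "citation_ctr": len(dwell) / shown if shown else 0.0,
        "long_click_rate": sum(s >= LONG_CLICK_SECONDS for s in dwell) / len(dwell) if dwell else 0.0,
        "follow_up_rate": len(follow_ups) / n if n else 0.0,
        "reformulation_rate": sum(is_reformulation(a["query"], a["follow_up"]) for a in follow_ups) / n if n else 0.0,
    }


answers = [
    {"query": "what is a lens", "citations_shown": 3, "click_dwell_seconds": [45], "follow_up": None},
    {"query": "best budget phone", "citations_shown": 2, "click_dwell_seconds": [5], "follow_up": "best cheap phone"},
]
metrics = proxy_metrics(answers)
```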

Audit citations for diversity and redundancy

Track concentration (top domains cited), diversity by publisher type, and redundancy (multiple sources supporting key claims). This is both a quality and ecosystem health check.
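A basic citation audit needs only the list of cited domains. The sketch reports the share taken by the top-k domains, the Herfindahl-Hirschman index (HHI, the sum of squared shares) as a single concentration number, and the mix by publisher type. The `publisher_type` lookup is a hypothetical input a team would maintain itself; per-claim redundancy needs claim-level data and is not covered here.

```python
from collections import Counter

# Assumes a log of the domain behind every citation shown, plus a hypothetical
# domain -> publisher-type lookup. HHI (Herfindahl-Hirschman index) is the sum
# of squared shares: 1.0 means every citation went to a single domain.


def citation_audit(cited: list[str], publisher_type: dict[str, str], top_k: int = 3) -> dict:
    total = len(cited)
    if total == 0:
        return {"top_k_share": 0.0, "hhi": 0.0, "type_mix": {}}
    counts = Counter(cited)
    types = Counter(publisher_type.get(d, "unknown") for d in cited)
    return {
        "top_k_share": sum(c for _, c in counts.most_common(top_k)) / total,
        "hhi": sum((c / total) ** 2 for c in counts.values()),
        "type_mix": {t: c / total for t, c in types.items()},
    }


audit = citation_audit(
    ["a.example", "a.example", "b.example", "c.example"],
    {"a.example": "news", "b.example": "reference"},
    top_k=1,
)
```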

Results to Watch: What Success Looks Like (and How to Measure It)

Product KPIs: engagement, retention, and query frequency

The simplest “did it work?” read is behavioral: do users ask more questions over time, and do they come back more often? For Snapchat, success likely looks like higher queries per DAU, deeper sessions for cohorts who use AI answers, and reduced churn for users who adopt the feature early.
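Both behavioral reads can be computed from a daily activity log. The sketch assumes one row per user per active day with the number of AI questions asked (zero allowed), and it defines adopters, hypothetically, as anyone who asked at least one question; real cohort definitions would be fixed before the rollout.

```python
from collections import defaultdict

# Assumes a hypothetical daily activity log with one row per user per active
# day: (ISO date, user_id, AI questions asked that day, possibly 0).


def queries_per_dau(rows: list[tuple[str, str, int]]) -> dict[str, float]:
    users, queries = defaultdict(set), defaultdict(int)
    for day, user, asked in rows:
        users[day].add(user)
        queries[day] += asked
    return {day: queries[day] / len(users[day]) for day in sorted(users)}


def retention(rows: list[tuple[str, str, int]], cohort: set[str], start: str, later: str) -> float:
    """Share of cohort users active on `start` who are active again on `later`."""
    active = defaultdict(set)
    for day, user, _ in rows:
        active[day].add(user)
    base = active[start] & cohort
    return len(base & active[later]) / len(base) if base else 0.0


rows = [
    ("2025-01-01", "u1", 3), ("2025-01-01", "u2", 0),
    ("2025-01-08", "u1", 2),
]
adopters = {user for _, user, asked in rows if asked > 0}
```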

Retrieval KPIs: citation quality, coverage, and freshness

Perplexity’s brand is tightly tied to citations and grounded answers. So the integration should be evaluated on: (1) how often answers include citations, (2) whether citations are relevant and recent, (3) whether the system can cover long-tail questions without fabricating, and (4) how quickly it updates for breaking topics.

Ecosystem KPIs: outbound traffic to publishers and creator discovery

Citations create an explicit bridge to the open web, but the net effect on publishers depends on UX. If answers fully satisfy intent, clicks can drop; if citations are compelling and positioned as “read more / verify,” clicks can rise. The right evaluation is incremental: measure net-new referral traffic driven by citations versus traffic cannibalized from existing search/social referrals.
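The incremental framing reduces to simple arithmetic on a holdout experiment. The sketch assumes the treatment arm sees cited AI answers and the control arm does not; the `Arm` fields and the example numbers are made up for illustration.

```python
from dataclasses import dataclass

# Assumes a holdout experiment: the treatment arm sees AI answers with
# citations, the control arm does not. Inputs are per-arm totals for clicks
# into publisher sites; the example numbers are made up.


@dataclass
class Arm:
    users: int
    citation_referrals: int  # publisher clicks from AI-answer citations
    other_referrals: int     # publisher clicks via existing search/social paths


def referral_impact(treatment: Arm, control: Arm) -> dict[str, float]:
    # Per-user rates so arms of different sizes are comparable.
    gained = treatment.citation_referrals / treatment.users - control.citation_referrals / control.users
    cannibalized = control.other_referrals / control.users - treatment.other_referrals / treatment.users
    return {"gained": gained, "cannibalized": cannibalized, "net": gained - cannibalized}


impact = referral_impact(Arm(1000, 120, 300), Arm(1000, 0, 380))
```

With these inputs, citations add 0.12 publisher clicks per user but displace 0.08 per user from other paths, so the net lift is about 0.04 per user. Reporting only the 0.12 would overstate the benefit to publishers threefold.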

A/B Test Dashboard View (Illustrative): Engagement vs Citation CTR Over Time

Illustrative trend lines showing how teams might track adoption (queries/DAU) alongside ecosystem impact (citation CTR) during a staged rollout.

Lessons Learned for Entity Optimization: Designing Content for AI Retrieval & Content Discovery in Social Answer Surfaces

What content gets retrieved: entity clarity, topical authority, and structured signals

Answer engines retrieve what they can confidently interpret. That favors pages with clear entity definitions (who/what is this?), unambiguous naming, and consistent terminology across the site. It also favors content that demonstrates topical authority: clusters of related pages, strong internal linking, and “hub-and-spoke” coverage that helps retrieval systems understand relationships among entities and subtopics.

How citations get chosen: trust, freshness, and redundancy checks

Citation selection is often a blend of relevance and trust signals: publisher reputation, transparent authorship, dates and update history, and consistency across multiple sources. In fast-moving topics, freshness can outrank depth. In regulated topics (health, finance), trust and policy constraints often dominate, and models may refuse to answer or cite only a narrow set of sources.
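A blended citation score makes the trade-off explicit. In this sketch, the weights, the topic classes, and the half-lives are illustrative assumptions. Freshness decays exponentially with page age, and an optional `allowlist` models regulated topics where only vetted domains are eligible.

```python
from datetime import date
from typing import Optional

# Illustrative weights and half-lives only. Freshness decays exponentially
# with page age; the half-life depends on how fast the topic moves.
HALF_LIFE_DAYS = {"breaking": 2, "evergreen": 365}
WEIGHTS = {"relevance": 0.5, "trust": 0.3, "freshness": 0.2}


def freshness(updated: date, today: date, topic: str) -> float:
    age_days = max((today - updated).days, 0)
    return 0.5 ** (age_days / HALF_LIFE_DAYS[topic])


def citation_score(c: dict, today: date, topic: str) -> float:
    return (
        WEIGHTS["relevance"] * c["relevance"]
        + WEIGHTS["trust"] * c["trust"]
        + WEIGHTS["freshness"] * freshness(c["updated"], today, topic)
    )


def rank_citations(cands: list[dict], today: date, topic: str,
                   allowlist: Optional[set[str]] = None) -> list[dict]:
    # Regulated topics: only vetted domains are eligible at all.
    if allowlist is not None:
        cands = [c for c in cands if c["domain"] in allowlist]
    return sorted(cands, key=lambda c: citation_score(c, today, topic), reverse=True)


today = date(2025, 6, 30)
deep = {"domain": "deep.example", "relevance": 0.9, "trust": 0.9, "updated": date(2025, 5, 31)}
fresh = {"domain": "fresh.example", "relevance": 0.8, "trust": 0.7, "updated": today}
```

With these numbers, the fresher but shallower page ranks first for a breaking topic, while the older, more authoritative page ranks first for an evergreen one.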

Practical playbook: optimizing for answer engines without sacrificing humans

- Write a quotable definition near the top: 1–2 sentences that precisely define the primary entity/topic (helps snippet-style answers).

- Make authorship and dates explicit: show author name, credentials (where relevant), publish date, and “last updated” date.

- Use structured data where it fits (e.g., Article, Organization, Product, FAQ): improve machine readability and disambiguation.

- Maintain canonical URLs and reduce duplication: answer engines can fragment signals when the same entity lives on multiple near-identical pages.

- Build internal links to entity hubs: make it easy for crawlers (and retrieval systems) to traverse from broad topics to specific entities and back.

- Update strategically: for “fresh” topics, add visible update notes and keep key facts current—stale pages lose retrieval eligibility when recency is a ranking feature.
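For the structured-data and authorship items, a small generator can keep JSON-LD consistent with what the page visibly shows. The property names (`headline`, `author`, `datePublished`, `dateModified`, `publisher`, `mainEntityOfPage`, `about`) are standard schema.org vocabulary; the function name and every value passed in are placeholders.

```python
import json
from typing import Optional

# Emits schema.org Article markup as JSON-LD. The property names are standard
# schema.org vocabulary; every value passed in below is a placeholder.


def article_jsonld(
    headline: str,
    author: str,
    published: str,
    modified: str,
    publisher: str,
    canonical_url: str,
    about: Optional[str] = None,
) -> str:
    data = {
        "@context": "https://schema.org",
        "@type": "Article",
        "headline": headline,
        "author": {"@type": "Person", "name": author},
        "datePublished": published,         # ISO 8601 date
        "dateModified": modified,           # keep in sync with the visible "last updated" date
        "publisher": {"@type": "Organization", "name": publisher},
        "mainEntityOfPage": canonical_url,  # the canonical URL, not a duplicate
    }
    if about:
        data["about"] = {"@type": "Thing", "name": about}  # the primary entity
    return '<script type="application/ld+json">\n' + json.dumps(data, indent=2) + "\n</script>"


snippet = article_jsonld(
    headline="What Is a Snapchat Lens?",
    author="Jane Doe",
    published="2025-01-10",
    modified="2025-03-02",
    publisher="Example Media",
    canonical_url="https://www.example.com/snapchat-lens",
    about="Snapchat Lens",
)
```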

Assume the user will see only the first 2–4 lines of your content via a citation preview. Put the definitional sentence, the key number/date, and the disambiguating context early—so the citation is self-evidently useful.

Expert Perspectives and Next Steps for Teams Building on AI Retrieval & Content Discovery

Expert quote opportunities: product, safety, and publisher economics

If you’re building or integrating an answer engine at social scale, the most useful “expert perspectives” to capture internally (or via interviews) tend to cluster into three roles: (1) a search/AI product manager on chat UX and latency budgets, (2) a trust & safety lead on grounding, citation policy, and sensitive-topic handling, and (3) a publisher growth/SEO strategist on attribution, referral quality, and monetization impacts of citation-driven traffic.

Implementation roadmap: pilot → measurement → iteration

- Start with bounded intents: definitions, “what is,” local info, and non-sensitive explainers where citations are easy to validate.

- Gate expansion on retrieval quality: require minimum citation coverage, low unsupported-claim rates, and stable latency at p95 before widening topic coverage.

- Add personalization carefully: use conversation context and locale, but avoid over-personalizing sources in ways that reduce diversity or increase bias risk.

- Iterate on citation UX: test whether “verify” framing increases trust and clicks, and whether compact citations outperform long lists on mobile.
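The gating rule in this roadmap can be expressed as a check over recent daily metrics. The `Gate` thresholds below are hypothetical placeholders rather than published targets. The point is that expansion requires every day in the window to clear every bar, which is one way to define “stable” p95 latency.

```python
from dataclasses import dataclass

# A rollout gate. The threshold values are hypothetical placeholders a team
# would set for itself, not published Snapchat or Perplexity targets.


@dataclass(frozen=True)
class Gate:
    min_citation_coverage: float = 0.95
    max_unsupported_claim_rate: float = 0.02
    max_p95_ms: float = 2500.0
    stable_days: int = 7


def ready_to_expand(daily: list[dict], gate: Gate = Gate()) -> tuple[bool, list[str]]:
    """daily: one dict per day with citation_coverage, unsupported_claim_rate, p95_ms."""
    window = daily[-gate.stable_days:]
    reasons = []
    if len(window) < gate.stable_days:
        reasons.append(f"need {gate.stable_days} days of data, have {len(window)}")
    checks = {
        "citation coverage": lambda d: d["citation_coverage"] >= gate.min_citation_coverage,
        "unsupported-claim rate": lambda d: d["unsupported_claim_rate"] <= gate.max_unsupported_claim_rate,
        "p95 latency": lambda d: d["p95_ms"] <= gate.max_p95_ms,
    }
    for name, ok in checks.items():
        if not all(ok(d) for d in window):
            reasons.append(f"{name} missed the bar on at least one recent day")
    return (not reasons, reasons)


healthy = {"citation_coverage": 0.97, "unsupported_claim_rate": 0.01, "p95_ms": 2100}
```

Returning the reasons alongside the boolean keeps the gate auditable: a blocked expansion says which metric held it back.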

Finally, Perplexity’s broader ambitions in the search ecosystem, covered in reporting such as Al Jazeera’s piece on its Chrome bid, underscore that distribution and defaults matter as much as model quality. See: Perplexity AI’s unsolicited bid to acquire Google Chrome.

And Perplexity’s fundraising trajectory provides context for why partnerships that deliver query volume and product distribution are strategically valuable: Tech Funding News on Perplexity’s funding round and valuation.

Key Takeaways

Embedding an answer engine in Snapchat turns “chat curiosity” into a first-party discovery channel—capturing intent before users leave for browser search.

At social scale, AI Retrieval & Content Discovery quality is defined by latency, grounding, and citations—not just fluent generation.

Success measurement should combine product KPIs (queries/DAU, retention) with retrieval KPIs (citation coverage, freshness) and ecosystem KPIs (incremental publisher referral impact).

Publishers and brands improve retrieval eligibility by optimizing entities: clear definitions, structured data, transparent dates/authors, canonical URLs, and strong internal linking to entity hubs.


Founder of Geol.ai

Senior builder at the intersection of AI, search, and blockchain. I design and ship agentic systems that automate complex business workflows. On the search side, I’m at the forefront of GEO/AEO (AI SEO), where retrieval, structured data, and entity authority map directly to AI answers and revenue. I’ve authored a whitepaper on this space and road-test ideas currently in production. On the infrastructure side, I integrate LLM pipelines (RAG, vector search, tool calling), data connectors (CRM/ERP/Ads), and observability so teams can trust automation at scale. In crypto, I implement alternative payment rails (on-chain + off-ramp orchestration, stable-value flows, compliance gating) to reduce fees and settlement times versus traditional processors and legacy financial institutions. A true Bitcoin treasury advocate. 18+ years of web dev, SEO, and PPC give me the full stack—from growth strategy to code. I’m hands-on (Vibe coding on Replit/Codex/Cursor) and pragmatic: ship fast, measure impact, iterate. Focus areas: AI workflow automation • GEO/AEO strategy • AI content/retrieval architecture • Data pipelines • On-chain payments • Product-led growth for AI systems Let’s talk if you want: to automate a revenue workflow, make your site/brand “answer-ready” for AI, or stand up crypto payments without breaking compliance or UX.
