Perplexity AI's 'Incognito Mode' Under Legal Scrutiny: Privacy Concerns in AI Search (and What It Means for Citation Confidence)

Perplexity AI’s Incognito Mode faces legal scrutiny. Analyze privacy claims, logging risks, and how trust signals affect Citation Confidence in AI search.

If an AI search product markets an “Incognito Mode,” users often interpret that as “my prompts won’t be stored or used for tracking.” Legal scrutiny around Perplexity AI’s Incognito Mode highlights a core tension in AI search: even when local browsing artifacts are minimized, server-side logging, telemetry, and third-party tooling can still exist. For publishers and marketers, that trust gap matters for two reasons. Privacy perceptions can change user behavior (what people search, how often, and how sensitive their queries are), and they can change how platforms tune retrieval and citation. Both influence Citation Confidence: the likelihood that your content is selected, cited, and attributed consistently in AI answers.

This spoke article focuses narrowly on (1) what “incognito” can realistically mean in an AI-search stack, (2) where legal risk tends to concentrate when privacy claims are marketed, and (3) the downstream impact on citation patterns—plus a practical monitoring and content playbook that doesn’t depend on user tracking.

“Incognito” is not a standardized technical guarantee. In most products it means reduced local storage (history/cookies) rather than zero server-side collection. Treat it as a feature name that must be validated against the platform’s data flows and disclosures.

Executive summary: why Incognito Mode scrutiny matters for Citation Confidence

What’s being questioned: privacy promises vs. technical reality

Reporting indicates that Perplexity AI faces a lawsuit alleging it shared user data with third parties (Meta and Google, according to the Tom’s Guide report) despite its Incognito Mode messaging. That raises questions about what users were led to believe versus what data flows may have occurred in practice. See the overview and allegations summarized by Tom’s Guide: https://www.tomsguide.com/ai/perplexity-is-being-sued-for-allegedly-sharing-user-data-with-meta-and-google-heres-what-we-know-so-far.

Even without adjudicating the claims, the scrutiny itself is the signal: “private mode” marketing is a high-liability surface because users reliably over-infer protections that may not exist (e.g., no logging, no analytics, no retention, no vendor access).

Thesis: privacy trust signals can indirectly shift Citation Confidence

Citation Confidence is not only a content-quality problem; it’s also a distribution and behavior problem. If users trust an AI search experience less, they may:

- Reduce usage overall (fewer AI-search sessions → fewer citation opportunities).

- Avoid sensitive or high-intent queries (changing which topics are asked and which sources are retrieved).

- Shift to alternative platforms with different citation behavior and source preferences (changing where attribution accrues).

That’s the analytic frame for the rest of this article: product claims → data flows → legal scrutiny → user behavior → downstream citation patterns.

What Incognito Mode likely does (and doesn’t): a data-flow reality check

Threat model: what users assume vs. what services can still see

In consumer software, “incognito/private mode” is commonly understood as preventing local traces (history, cache, cookies) from being stored on a device. But an AI search service still receives the request on its servers. Unless explicitly prevented by architecture and policy, the service can still observe and potentially store:

- Prompt text and attachments (the most sensitive artifact in AI search).

- Network identifiers (IP address, coarse location inference, ASN), plus timestamps.

- Device/app metadata (user agent, OS/app version, language, screen size).

- Interaction signals (clicked citations, dwell time, copy events, thumbs up/down, follow-up prompts).

Where data can persist: prompts, metadata, IP/device signals, and third-party services

A realistic AI-search request path often includes multiple layers beyond the visible chat UI. Conceptually, it looks like:

- User prompt in web/app UI

- Edge/CDN/WAF (rate limiting, abuse detection, caching, bot filtering)

- Application layer (routing, session handling, feature flags, A/B tests)

- Model provider/inference layer (LLM call; sometimes multiple calls)

- Retrieval + citation layer (web fetch, index lookup, reranking, snippet extraction, citation formatting)

- Telemetry/monitoring (logs, traces, error reporting, analytics, fraud detection)

“Incognito” may change how the application associates these events (e.g., not tying to an account, using shorter retention, disabling personalization), but it doesn’t automatically remove the operational need for security logs, abuse prevention, or performance monitoring.

| Data type | Typical operational purpose | Privacy risk level | What “incognito” might change |
|---|---|---|---|
| Prompt text | Answer generation, safety filtering, debugging | High | Shorter retention, no training use, reduced association to identity (if promised and implemented) |
| IP address + timestamp | Security, rate limiting, fraud/abuse prevention, regional routing | Medium–High | Often unchanged; may be truncated/hashed or retained for less time (implementation-specific) |
| Device/app metadata (user agent, OS, locale) | Compatibility, debugging, analytics, anti-bot heuristics | Medium | May reduce persistent identifiers; may still be collected for ops/security |
| Click logs (citations clicked, dwell time) | Ranking/citation evaluation, UX improvements, spam detection | Medium | Could be aggregated/de-identified; could be disabled or minimized (varies by product) |
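To make the “what incognito might change” column concrete, here is a minimal sketch of a request logger that stores less when a session is flagged incognito. It is an illustration under stated assumptions, not a description of how Perplexity or any other product logs requests: the function names, the record fields, and the /24 and /48 truncation prefixes are all hypothetical choices.

```python
import ipaddress
from datetime import datetime, timezone
from typing import Optional


def truncate_ip(ip: str) -> str:
    """Coarsen an IP address to its /24 (IPv4) or /48 (IPv6) network address."""
    addr = ipaddress.ip_address(ip)
    prefix = 24 if addr.version == 4 else 48  # hypothetical prefix lengths
    network = ipaddress.ip_network(f"{ip}/{prefix}", strict=False)
    return str(network.network_address)


def build_log_record(prompt: str, ip: str, user_agent: str,
                     account_id: Optional[str], incognito: bool) -> dict:
    """Build one request log entry under a hypothetical incognito policy."""
    record = {
        "ts": datetime.now(timezone.utc).isoformat(),
        "user_agent": user_agent,  # kept in both modes in this sketch
        "incognito": incognito,
    }
    if incognito:
        record["ip"] = truncate_ip(ip)        # store only the coarsened address
        record["prompt_chars"] = len(prompt)  # store the size, not the text
    else:
        record["ip"] = ip
        record["prompt"] = prompt
        record["account_id"] = account_id
    return record
```

Even in this reduced form, the incognito record still contains a timestamp, a user agent, and a coarse network address, which is the point of the table: “incognito” narrows what is stored without making the request invisible to the service.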

Once you see how many layers can touch a “private” query, it becomes clearer why regulators and plaintiffs focus on marketing language and disclosure completeness.

Legal scrutiny and compliance pressure points for AI search privacy claims

Advertising/consumer protection: deceptive or misleading privacy representations

“Incognito” claims tend to be evaluated through a simple lens: what would a reasonable user believe, and were material limitations clearly disclosed? Common legal theories in privacy marketing disputes include:

- Misleading representation: the product implies no collection/sharing when collection/sharing still occurs.

- Omission: key caveats (server logs, vendor processing, analytics) are buried or absent.

- Inconsistent disclosures: UI says one thing, privacy policy/terms say another, or implementation contradicts both.

Data protection regimes: consent, purpose limitation, retention, and access rights

Across major regimes (e.g., GDPR/UK GDPR, CPRA/CCPA, sectoral rules), “private mode” scrutiny often collapses into operational controls. Can the company prove it has:

- Data minimization: collecting only what’s necessary for security and functionality.

- Purpose limitation: not repurposing “incognito” queries for advertising/measurement beyond what was disclosed.

- Retention schedules: defined windows for prompts, logs, and identifiers—plus deletion workflows.

- DSAR readiness: the ability to locate, export, and delete user data when required (even if “incognito” is supposed to reduce linkability).

- Vendor management: contracts and technical controls for model providers, analytics SDKs, CDNs, and monitoring tools.
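A retention schedule is easier to audit when it exists as data rather than only as policy text. The sketch below shows one way that could look. The retention windows, record fields, and function names are hypothetical; real values would come from legal review and the platform's own disclosures.

```python
from datetime import datetime, timedelta, timezone

# Hypothetical retention windows in days, keyed by (data type, incognito flag).
RETENTION_DAYS = {
    ("prompt", True): 0,         # incognito prompts: eligible for deletion immediately
    ("prompt", False): 30,
    ("security_log", True): 14,
    ("security_log", False): 90,
}


def is_expired(data_type, incognito, created_at, now=None):
    """Return True once a record has reached the end of its retention window."""
    now = now or datetime.now(timezone.utc)
    window = timedelta(days=RETENTION_DAYS[(data_type, incognito)])
    return now - created_at >= window


def records_to_delete(records, now=None):
    """Select records (dicts with 'type', 'incognito', 'created_at') due for deletion."""
    return [
        r for r in records
        if is_expired(r["type"], r["incognito"], r["created_at"], now)
    ]
```

A scheduled job could run `records_to_delete` against each data store and log what it removed, which gives the company evidence that the stated windows are actually enforced.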

Chart (conceptual): Where “Incognito Mode” privacy claims tend to face the most scrutiny. Illustrative distribution of scrutiny areas based on common enforcement and litigation themes; not a measurement of the Perplexity case.

Why this matters to publishers: trust shocks can change the “query mix.” If fewer users run sensitive searches in AI tools—or they migrate to competitors—your citation footprint can shift even if your content quality stays constant. Competitive context in AI search (source transparency, personalization, action connectivity) is evolving quickly; see a comparative overview here: https://www.trensee.com/en/blog/comparison-chatgpt-search-ai-mode-perplexity-2026-04-04.

How privacy trust impacts Citation Confidence in AI search results

Behavioral pathway: trust → usage → query breadth → citation surfaces

Privacy controversy changes behavior before it changes algorithms. When users are unsure whether an AI search tool is “safe,” they tend to:

- Self-censor: fewer medical, legal, financial, workplace, and relationship queries (topics where citations often demand higher evidentiary quality).

- Simplify: shorter prompts and fewer follow-ups (reducing opportunities for multi-source citation chains).

- Switch tools: moving to alternatives perceived as more private or more “enterprise-safe,” which can have different citation policies and retrieval stacks.

Net effect: the set of queries that produce citations (and the domains eligible to be cited) can change. If the remaining usage concentrates on generic, non-sensitive informational queries, citation surfaces may tilt toward broad reference domains and away from specialist publishers.

Product pathway: telemetry limits → ranking/citation tuning constraints

There’s also a quieter mechanism: if platforms reduce telemetry to meet privacy expectations (or legal requirements), they may lose optimization signals used to tune retrieval and citation selection. With less granular interaction data, systems can become more conservative—leaning on sources that are:

- Widely recognized and consistently crawlable

- Structurally easy to extract and quote

- Low-risk from a safety/compliance perspective (clear authorship, citations, and stable claims)

Practical inference for publishers: privacy controversy can indirectly increase citation concentration among a smaller set of “safe” domains. Niche publishers can still win, but they must be exceptionally attribution-ready and unambiguous.

Chart (illustrative): Citation diversity vs. concentration around a privacy news event. Example of what to measure: unique domains cited per 100 answers (diversity) and top-10 domain share (concentration) before and after a major privacy controversy.

A privacy trust drop can reduce sessions, but it can also change the types of queries asked. Track citation performance by topic sensitivity (e.g., health, finance, workplace) rather than only overall counts—because the mix shift is often the real driver.
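The two measures named above, unique domains cited per 100 answers and top-10 domain share, can be computed from a simple sample of observed answers. This is a minimal sketch that assumes you have already collected, for each AI answer, the list of domains it cited; the function name and input shape are illustrative.

```python
from collections import Counter


def citation_mix(answers, top_k=10):
    """Compute diversity and concentration for a sample of AI answers.

    answers: list of lists, one inner list of cited domains per answer.
    """
    counts = Counter(domain for cited in answers for domain in cited)
    total = sum(counts.values())
    if not answers or total == 0:
        return {"unique_per_100_answers": 0.0, "top_k_share": 0.0}
    top = sum(n for _, n in counts.most_common(top_k))
    return {
        # Diversity: distinct domains, normalized to a 100-answer sample.
        "unique_per_100_answers": 100 * len(counts) / len(answers),
        # Concentration: share of all citations going to the top_k domains.
        "top_k_share": top / total,
    }
```

Run it separately on the "before" and "after" samples, and ideally once per topic-sensitivity bucket. Because the count of distinct domains does not grow linearly with sample size, compare samples of similar size.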

What to monitor and how to respond (publisher playbook focused on Citation Confidence)

Monitoring: citation volatility, topic sensitivity, and attribution patterns

Start by establishing a baseline you can defend over time. For each priority topic cluster, capture:

- Citation frequency: how often your domain is cited per 100 eligible queries.

- Citation position: whether you appear as a primary citation vs. a “supporting” citation.

- Snippet alignment: whether the cited passage accurately reflects your claim (misalignment can reduce future selection).

- Topic sensitivity tags: label queries as sensitive/non-sensitive to detect mix shifts after privacy news.
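As a rough sketch, the baseline above can be kept as a small script over a log of test queries. The version below assumes each observation records a sensitivity tag and the ordered list of domains the answer cited, and it treats the first citation as the "primary" one; that is a simplifying assumption, since platforms do not necessarily order citations by importance. Snippet alignment is left out because it needs human review.

```python
from collections import defaultdict


def baseline_by_sensitivity(observations, domain):
    """Summarize citation frequency and position for one domain, per sensitivity tag.

    observations: dicts with 'sensitive' (bool) and 'citations' (ordered list of domains).
    """
    stats = defaultdict(lambda: {"queries": 0, "cited": 0, "primary": 0})
    for obs in observations:
        bucket = "sensitive" if obs["sensitive"] else "non_sensitive"
        s = stats[bucket]
        s["queries"] += 1
        if domain in obs["citations"]:
            s["cited"] += 1
            if obs["citations"][0] == domain:  # assumption: first citation is primary
                s["primary"] += 1
    return {
        bucket: {
            "cited_per_100": 100 * s["cited"] / s["queries"],
            "primary_share": s["primary"] / s["cited"] if s["cited"] else 0.0,
        }
        for bucket, s in stats.items()
    }
```

Re-running the same query set on a fixed schedule gives you a time series per bucket, which makes a mix shift after privacy news visible instead of hidden inside an overall average.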

Response: strengthen attribution readiness without relying on user tracking

If AI platforms collect less telemetry—or if users reduce engagement—publishers need to win on content properties that retrieval systems can evaluate without personalization. Prioritize:

Make claims quotable and bounded

Use descriptive headings, short definitional paragraphs, and clearly scoped statements (who/what/when). Avoid burying the key answer behind long narrative intros.

Attach evidence and provenance

Cite primary sources, link to standards/regulators, and include publication/updated dates and author credentials. In high-stakes topics (health/medicine), bibliometric work shows how citation networks influence perceived authority; rigorous sourcing helps your page become the “safe” citation. Example related research: https://journals.sagepub.com/doi/10.1177/20552076251365059.

Reduce extraction friction

Keep stable URLs, avoid intrusive interstitials, ensure fast rendering, and structure pages so a retrieval system can reliably extract the key passage. Use schema where appropriate (Organization, Person, Article, FAQ) to reinforce attribution.
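For the schema point, a small helper can generate Article markup with author and date fields so it stays consistent across pages. This is a sketch using schema.org's Article, Person, and Organization types; the function name and the sample values are placeholders, and whether a given AI platform reads this markup is not something the sketch can guarantee.

```python
import json


def article_jsonld(headline, url, author_name, publisher_name, published, modified):
    """Return schema.org Article markup as a JSON-LD string."""
    data = {
        "@context": "https://schema.org",
        "@type": "Article",
        "headline": headline,
        "url": url,
        "datePublished": published,  # ISO 8601 date, e.g. "2026-01-05"
        "dateModified": modified,
        "author": {"@type": "Person", "name": author_name},
        "publisher": {"@type": "Organization", "name": publisher_name},
    }
    return json.dumps(data, indent=2)


# Placeholder values for illustration only.
print(article_jsonld(
    "What incognito mode means in AI search",
    "https://example.com/incognito-ai-search",
    "Jane Doe",
    "Example Media",
    "2026-01-05",
    "2026-02-01",
))
```

The output is meant to be embedded in the page inside a `<script type="application/ld+json">` element.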

Write for verifiability, not virality

AI citation systems reward clarity and cross-checkability. “Citation-worthy” patterns—definitions, stepwise methods, constraints, and explicit source links—improve selection odds. Practical guidance: https://gracker.ai/data-and-research-reports/building-citation-worthy-content.

Chart (illustrative): Sample publisher dashboard, Citation Confidence vs. concentration risk. How to visualize whether you’re gaining citations while the ecosystem becomes more concentrated. Use your own measured data.

How to interpret the metrics

If Citation Confidence falls while concentration rises after a privacy controversy, you’re likely losing out to “default safe” domains. Your best lever is not user tracking—it’s improving extractability, evidence quality, and unambiguous attribution signals so retrieval systems can justify citing you even with weaker telemetry.
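The reading above can be written down as a simple rule so that dashboards flag it consistently. In this sketch, Citation Confidence is approximated by your citation rate (the fraction of eligible queries that cite you), and the 0.02 tolerance is an arbitrary placeholder; both are assumptions to tune against your own data.

```python
def interpret_shift(before, after, tolerance=0.02):
    """Label a before/after change in citation rate and concentration.

    before/after: dicts with 'citation_rate' and 'top_k_share' as fractions (0 to 1).
    """
    rate_delta = after["citation_rate"] - before["citation_rate"]
    share_delta = after["top_k_share"] - before["top_k_share"]
    if rate_delta < -tolerance and share_delta > tolerance:
        return "losing ground to concentrated 'default safe' domains"
    if rate_delta < -tolerance:
        return "citation rate falling without a concentration increase"
    if share_delta > tolerance:
        return "ecosystem concentrating; your citation rate is holding"
    return "no material shift"
```

For example, a drop in citation rate from 0.20 to 0.12 alongside a rise in top-10 share from 0.50 to 0.62 would be labeled as losing ground to concentrated domains.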

Key takeaways

- “Incognito Mode” usually reduces local traces; it does not automatically eliminate server-side logging, vendor processing, or analytics.

- Legal scrutiny often targets the gap between user expectations and disclosed limitations (especially around sharing, retention, and third-party tooling).

- Privacy trust impacts Citation Confidence indirectly by shifting query behavior and, potentially, by reducing telemetry that platforms use to tune retrieval and citation selection.

- Publishers can stay resilient by monitoring citation volatility by topic sensitivity and investing in attribution-ready, evidence-backed, easily extractable content.

Founder of Geol.ai

Related Articles

Google's Gemini 3.1 Pro: Redefining AI Search with 1M Token Context Windows (How to Adapt Your Knowledge Graph Strategy)

Learn how to adapt Knowledge Graph and structured data for Gemini 3.1 Pro’s 1M-token context—improve grounding, retrieval, and AI search visibility.

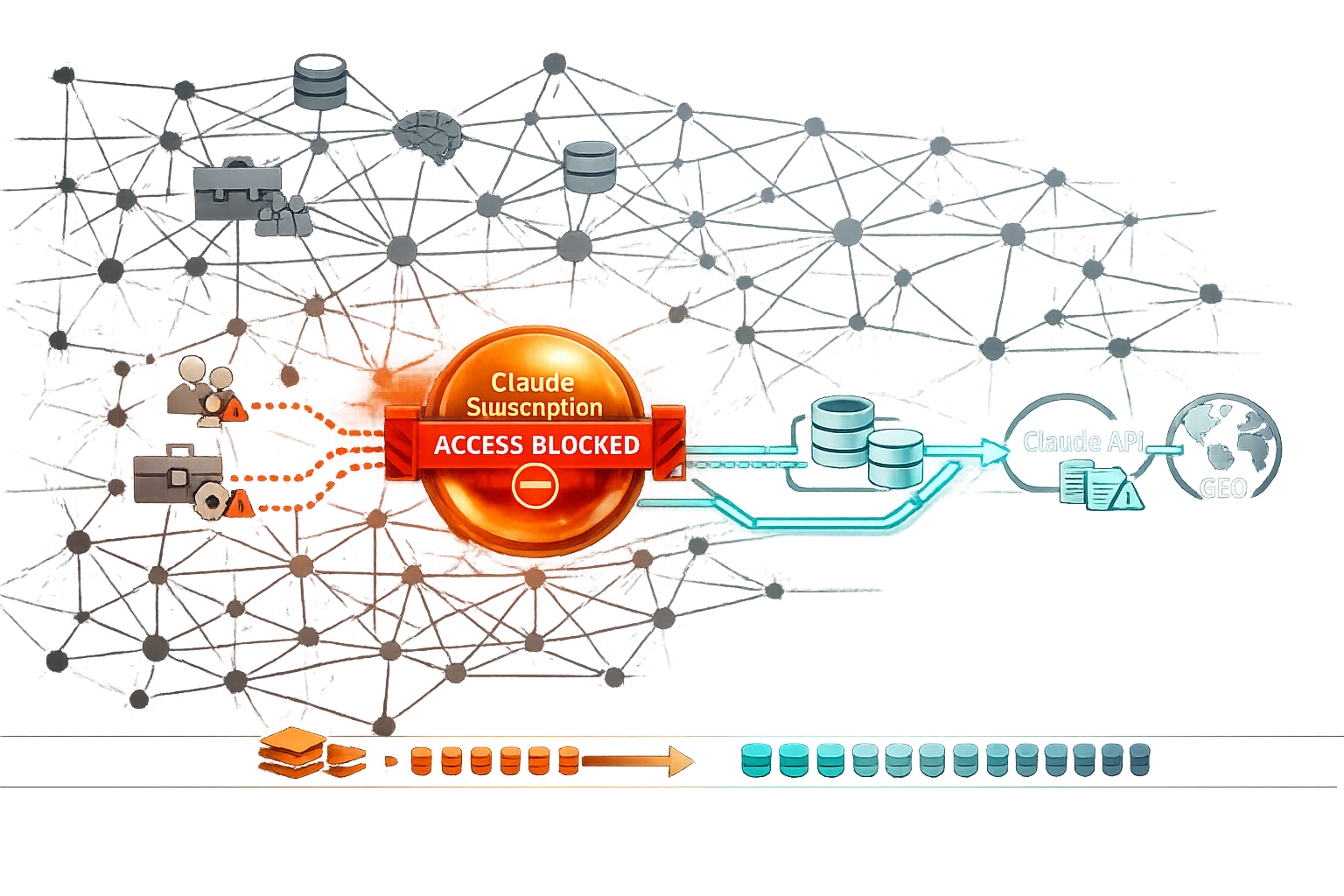

Anthropic Blocks Third‑Party Agent Harnesses for Claude Subscriptions (Apr 4, 2026): What It Changes for Agentic Workflows, Cost Models, and GEO

Deep dive on Anthropic’s Apr 4, 2026 block of third‑party agent harnesses for Claude subscriptions—workflow impact, cost models, compliance, and GEO.