Structural Feature Engineering for GEO: A Comparison Review of Techniques That Improve AI Visibility

Compare structural feature engineering techniques for GEO that boost AI Visibility—schemas, entity markup, chunking, and citations—with data ideas and a decision guide.

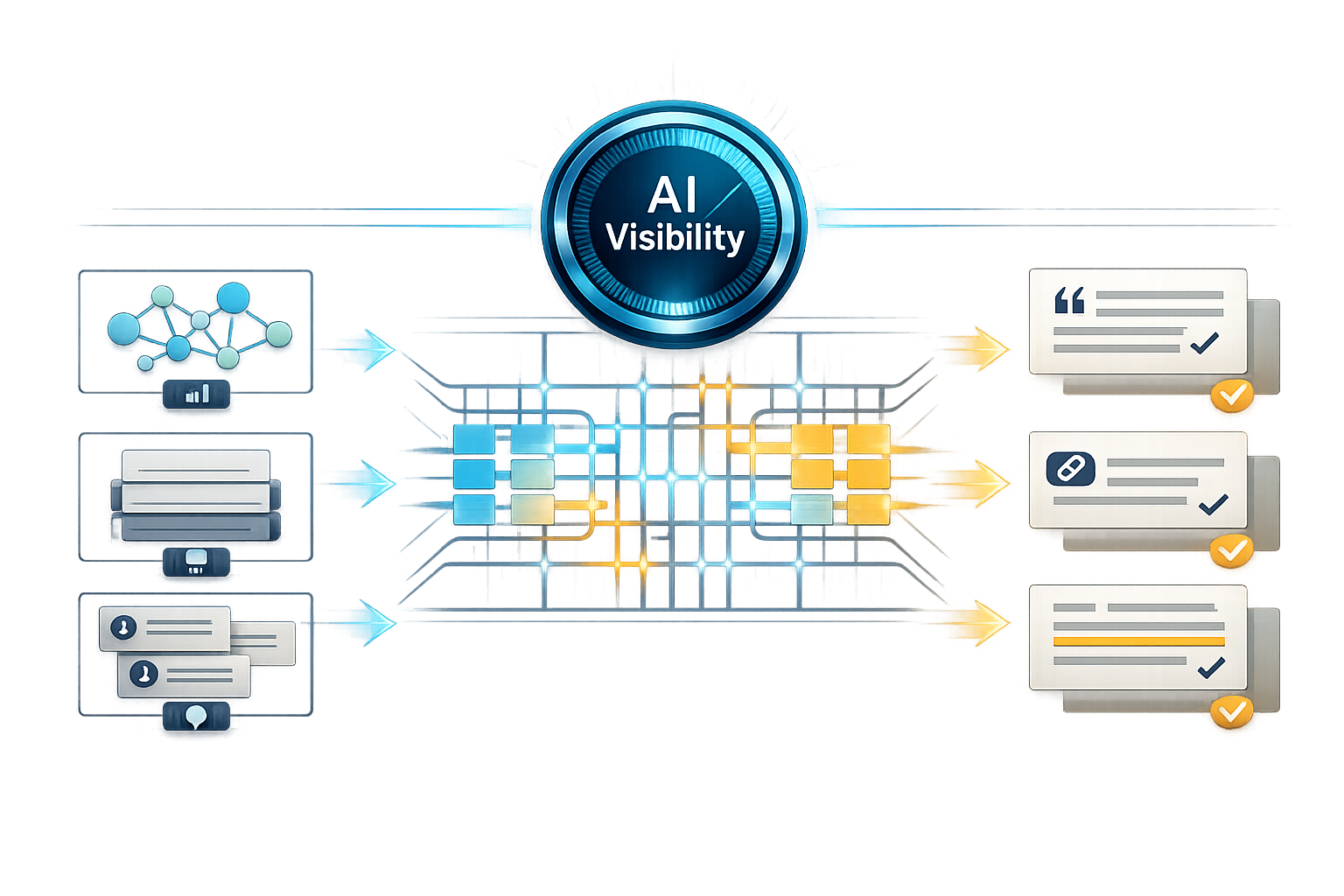

Structural feature engineering for GEO is the practice of changing how a page is built (not just what it says) so AI answer engines can more reliably retrieve, quote, and cite it. In this spoke review, we compare three high-leverage structural techniques—entity-first structuring, chunk-first structuring, and evidence-first structuring—using a clear decision framework (impact, effort, risk, measurability) and a practical 90-day rollout plan.

If you’re calibrating your strategy to how LLM systems prioritize and select sources, pair this with our deeper explainer on LLM Ranking Factors: Decoding How AI Models Prioritize Content.

Before making changes, record a baseline for AI Visibility %, citations by engine, and quote accuracy, then track the deltas at 14, 30, 60, and 90 days.

What “structural feature engineering” means in GEO (and how it impacts AI Visibility)

Featured-snippet-ready definition: structural features vs. content quality

Definition (GEO)

Structural feature engineering is the deliberate design of document structure—markup, headings, chunk boundaries, entity disambiguation, and evidence formatting—to increase retrievability and citability in LLM-based answer engines.

This differs from “content quality” work (better writing, deeper expertise, stronger POV). Quality helps humans and can help rankings, but structure determines whether a system can (a) extract the right passage, (b) attribute it correctly, and (c) feel safe citing it. In GEO, success often looks like being selected, quoted, or cited—sometimes even when you’re not the #1 blue-link result.

How answer engines retrieve & cite: chunks, entities, and evidence

Most answer engines behave like a pipeline: retrieval identifies candidate passages, ranking selects the most useful ones, and generation composes an answer (sometimes with direct quotes and links). Structural features influence each stage:

- Chunks: clearly bounded, query-aligned passages are easier to lift accurately (and less likely to be paraphrased incorrectly).

- Entities: explicit “who/what/where” reduces ambiguity, improving matching for “What is X?”, “X vs Y”, and brand/entity queries.

- Evidence: citations, dates, and methods increase the likelihood an engine can justify attribution—especially for statistics and contested claims.
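To make the retrieval, ranking, and generation flow concrete, here is a toy Python sketch. It is not how any particular engine works: scoring is plain token overlap (Jaccard similarity), whereas production systems typically rely on embeddings and learned rerankers, and the `Passage` type and example URLs are hypothetical. It does illustrate the chunk point above: a short, on-topic passage outscores a long one that mentions the same terms in passing, and because the answer quotes a whole chunk, that chunk's URL travels with it as the citation.

```python
from __future__ import annotations

import re
from dataclasses import dataclass


@dataclass
class Passage:
    url: str   # hypothetical source URL, used for attribution
    text: str  # one chunk of a page


def tokenize(text: str) -> set[str]:
    # Lowercase word tokens; a crude stand-in for a real tokenizer or embedding model.
    return set(re.findall(r"[a-z0-9]+", text.lower()))


def retrieve(query: str, passages: list[Passage]) -> list[Passage]:
    # Retrieval: keep any passage that shares at least one token with the query.
    q = tokenize(query)
    return [p for p in passages if q & tokenize(p.text)]


def rank(query: str, candidates: list[Passage]) -> list[Passage]:
    # Ranking: Jaccard overlap favors focused chunks over long, diluted ones.
    q = tokenize(query)

    def score(p: Passage) -> float:
        t = tokenize(p.text)
        return len(q & t) / len(q | t)

    return sorted(candidates, key=score, reverse=True)


def answer(query: str, passages: list[Passage]) -> tuple[str, str] | None:
    # "Generation": quote the top passage verbatim and attach its URL as the citation.
    ranked = rank(query, retrieve(query, passages))
    if not ranked:
        return None
    return ranked[0].text, ranked[0].url
```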

This is increasingly important as search experiences shift toward multi-model systems and AI-mediated answers, where selection and attribution can vary by engine and model routing. Axios’ reporting on multi-model AI strategies is a useful lens for why “structure” matters across systems: Microsoft’s Multi-Model AI Strategy (Axios).

Comparison criteria for this review (impact, effort, risk, measurability)

- Retrieval lift: does the structure make the right passage easier to retrieve?

- Citation likelihood: does it increase the chance an engine attributes and links to you?

- Implementation effort: templates, editorial time, and engineering work required.

- Risk of misinterpretation: chance of wrong extraction, wrong entity association, or misleading citations.

- Measurability: how cleanly you can test before/after impact (and isolate confounds).

For a broader foundation, see our pillar pages on Generative Engine Optimization (GEO) and AI Visibility.

Technique #1: Entity-first structuring (schema + entity markup + disambiguation)

What to implement: Organization/Person/Product/Article schema + sameAs + entity glossary

Entity-first structuring makes “who/what/where” explicit. The goal is to reduce ambiguity so retrieval systems can match your page to entity-led prompts (definitions, comparisons, pricing, leadership, locations) and attribute the right brand or product.

- Use schema.org types that match reality (Organization, Person, Product, SoftwareApplication, Article) and keep them consistent across templates.

- Add sameAs links to authoritative profiles (e.g., Wikidata, Crunchbase, GitHub, LinkedIn, app stores) where appropriate.

- Create canonical entity pages (stable URLs) for products, categories, people, and core concepts; link to them consistently.

- Add an on-site entity glossary: short definitions near first mention, plus a dedicated glossary/hub for disambiguation.

- Standardize author bios (credentials, role, profile URL) to reduce author/entity confusion and improve attribution.
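As a minimal sketch of the schema and `sameAs` items above, the Python function below emits a schema.org `Organization` object as JSON-LD. The organization name and profile URLs in the example are hypothetical placeholders, and which properties you add beyond `name`, `url`, `sameAs`, and `logo` should follow the schema.org definitions for the types you actually use.

```python
from __future__ import annotations

import json


def organization_jsonld(name: str, url: str, same_as: list[str], logo: str | None = None) -> str:
    """Build a schema.org Organization object as a JSON-LD string."""
    data = {
        "@context": "https://schema.org",
        "@type": "Organization",
        "name": name,  # use the same canonical name everywhere on the site
        "url": url,
        # sameAs links to authoritative profiles; dedupe but keep the order stable
        "sameAs": list(dict.fromkeys(same_as)),
    }
    if logo:
        data["logo"] = logo
    return json.dumps(data, indent=2, ensure_ascii=False)


def script_tag(jsonld: str) -> str:
    # Wrap the JSON-LD for embedding in the page template.
    return f'<script type="application/ld+json">\n{jsonld}\n</script>'


if __name__ == "__main__":
    # Hypothetical organization and profile URLs, for illustration only.
    print(script_tag(organization_jsonld(
        "Example Co",
        "https://www.example.com",
        ["https://www.linkedin.com/company/example-co",
         "https://github.com/example-co",
         "https://github.com/example-co"],  # the duplicate is dropped
    )))
```

Generating the markup from one function per entity type, rather than hand-editing it per page, is one way to keep names and `sameAs` lists consistent across templates.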

When it works best (and when it fails)

Entity-first work tends to perform best when your brand, product, or people are frequently confused with similar names, when you operate in a crowded category, or when you need consistent attribution across many pages. It can underperform when schema is added inconsistently, when entity names drift across pages, or when the underlying content doesn’t clearly define the entity’s scope.

Entity-first structuring: pros and cons

Pros:

- Reduces ambiguity for brand/entity queries

- Improves consistency of attribution across a site

- Scales well via templates once patterns are set

Cons:

- Schema errors can silently negate benefits

- Over-markup or mismatched types can confuse parsers

- Entity drift when content updates aren’t reflected in markup

Effort, risks, and measurement

Effort is usually moderate: initial template work plus an entity cleanup pass. The biggest risks are inconsistency (different names for the same thing), invalid schema, and “entity drift” after updates. Measure impact by tracking citation frequency for entity-led queries (e.g., “What is X?”, “X vs Y”, “X pricing”) and checking whether engines quote the correct entity definition.
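One way to catch the naming inconsistency and entity drift described above is a periodic script that groups entity names by a normalized key and reports any key that appears under more than one spelling. This is a sketch: it assumes you already extract entity names per URL (for example from schema `name` fields or your glossary), and the normalization rule (case, punctuation, and whitespace folded away) is a simple heuristic that will not catch true synonyms or abbreviations. The page paths and "Acme" names are hypothetical.

```python
from __future__ import annotations

import re
from collections import defaultdict


def normalize(name: str) -> str:
    # Fold case, punctuation, and whitespace so "Acme-Cloud" and "acme cloud" collide.
    return re.sub(r"[^a-z0-9]", "", name.casefold())


def find_entity_drift(pages: dict[str, list[str]]) -> dict[str, dict[str, list[str]]]:
    """Return {normalized key: {spelling: [page URLs]}} for keys with 2+ spellings.

    `pages` maps a URL to the entity names extracted from it (for example, schema
    `name` fields or glossary terms); extraction itself is out of scope here.
    """
    spellings: dict[str, dict[str, list[str]]] = defaultdict(lambda: defaultdict(list))
    for url, names in pages.items():
        for name in names:
            spellings[normalize(name)][name].append(url)
    # Keep only entities that appear under more than one surface form.
    return {key: dict(forms) for key, forms in spellings.items() if len(forms) > 1}
```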

[Chart: Entity-first pilot, example metrics to track. Illustrative dataset idea for a 20-page pilot; replace with your internal numbers.]

Internal link: Schema markup strategy for entity disambiguation.

Technique #2: Chunk-first structuring (semantic chunking, headings, and answer blocks)

Chunk design patterns: 40–120 word answer blocks, scannable H2/H3, and TL;DR sections

Chunk-first structuring optimizes for passage retrieval. You design self-contained, query-aligned blocks so systems can lift the exact span that answers a prompt. Done well, it improves AI Visibility and reduces the odds your content is paraphrased into something inaccurate.

- Start each section with a direct answer block: write a 40–120 word answer that can stand alone, then expand below it.

- Use scannable H2/H3 headings that match prompt language: prefer “What is…”, “How to…”, “Best for…”, “Limitations…”, “X vs Y…” over clever headings.

- Add constraints and assumptions: explicitly state scope, prerequisites, and edge cases to prevent mis-citation.

- Include a page-level TL;DR: summarize the 3–5 key points so short-context retrieval still preserves intent.
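These patterns can be checked mechanically once pages exist as Markdown or a similar source format. Below is a minimal Python sketch, assuming H2/H3 headings are written as `##`/`###` lines and paragraphs are separated by blank lines: it splits a page into heading-bounded chunks and treats the first paragraph under each heading as that chunk's answer block. The `Chunk` type is hypothetical, and any text above the first H2/H3 is ignored.

```python
from __future__ import annotations

import re
from dataclasses import dataclass, field

HEADING = re.compile(r"^#{2,3}\s+(.*)$")  # "## Title" or "### Title" only


@dataclass
class Chunk:
    heading: str
    answer: str  # first paragraph under the heading
    support: list[str] = field(default_factory=list)  # remaining paragraphs


def chunk_by_headings(markdown: str) -> list[Chunk]:
    """Split a page at H2/H3 headings; the first paragraph becomes the answer block."""
    chunks: list[Chunk] = []
    current: Chunk | None = None
    paragraph: list[str] = []

    def flush_paragraph() -> None:
        # Attach the buffered paragraph to the current chunk (dropped if no heading yet).
        if current is not None and paragraph:
            text = " ".join(paragraph)
            if not current.answer:
                current.answer = text
            else:
                current.support.append(text)
        paragraph.clear()

    for line in markdown.splitlines():
        match = HEADING.match(line)
        if match:
            flush_paragraph()
            current = Chunk(heading=match.group(1).strip(), answer="")
            chunks.append(current)
        elif not line.strip():
            flush_paragraph()
        else:
            paragraph.append(line.strip())
    flush_paragraph()
    return chunks
```

Splitting at headings keeps each chunk aligned with one prompt-shaped question, which is the property the patterns above aim for.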

Comparison: “narrative pages” vs. “retrieval-optimized pages”

Narrative pages can be excellent for humans but often bury the answer. Retrieval-optimized pages keep narrative, but they surface extractable units: definitions, step lists, and “best for / not for” blocks that map to common prompts.

How to avoid over-fragmentation and loss of context

The tradeoff is real: too-long chunks reduce retrievability; too-short chunks lose context and raise mis-citation risk. Use a consistent rule (e.g., answer blocks 40–120 words; supporting blocks 120–200 words) and keep a page-level summary to anchor meaning.
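A length rule like this is easy to enforce in an editorial lint step. The sketch below assumes each block is already labeled as an answer or supporting block (for example by a heading-based splitter) and uses the 40–120 and 120–200 word ranges suggested above; treat the ranges as starting points to tune, not validated thresholds, and note that whitespace splitting only approximates word count.

```python
from __future__ import annotations

# Word-count ranges from the rule of thumb above; tune them against your own pilot data.
LIMITS = {"answer": (40, 120), "support": (120, 200)}


def lint_blocks(blocks: list[tuple[str, str]]) -> list[str]:
    """Warn about blocks whose word count falls outside the range for their kind.

    Each block is a (kind, text) pair where kind is "answer" or "support".
    """
    warnings = []
    for i, (kind, text) in enumerate(blocks):
        low, high = LIMITS[kind]
        words = len(text.split())  # whitespace split: a rough word count
        if words < low:
            warnings.append(f"block {i} ({kind}): {words} words, under {low}; may lose context")
        elif words > high:
            warnings.append(f"block {i} ({kind}): {words} words, over {high}; may be hard to retrieve")
    return warnings
```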

[Chart: Chunking audit, example pre/post signals. Illustrative trend showing how chunk length and quote accuracy can move after restructuring.]

Internal link: Content chunking templates for retrieval-optimized pages.

Technique #3: Evidence-first structuring (citations, sources, and verifiability scaffolding)

Evidence patterns: inline citations, source tables, and methodology notes

Evidence-first structuring makes claims easy to verify. You pair assertions with sources, dates, and methods so engines (and humans) can justify attribution. This tends to matter most for statistic-driven queries, contested topics, and “best X” comparisons where engines prefer citable, attributable passages.

- Inline citations for key claims (especially numbers, rankings, and definitions).

- A “Sources & methodology” section (what you measured, when, and how).

- Numbered references and stable source links; avoid link rot where possible.

- Freshness signals: “Last reviewed” / “Updated” with a short change log.
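Two of these patterns lend themselves to automated checks: numeric claims without an inline citation, and stale "Last reviewed" dates. The Python sketch below assumes citations are written as bracketed numbers such as `[3]` and that sentences end in `.`, `!`, or `?` followed by whitespace. Both the sentence splitter and the 180-day freshness default are arbitrary heuristics, so expect false positives (abbreviations, version numbers) for an editor to review.

```python
from __future__ import annotations

import re
from datetime import date

SENTENCE_SPLIT = re.compile(r"(?<=[.!?])\s+")
HAS_NUMBER = re.compile(r"\d")
HAS_CITATION = re.compile(r"\[\d+\]")  # assumed inline marker style, e.g. "[3]"


def uncited_numeric_claims(text: str) -> list[str]:
    """Return sentences that contain a digit but no [n] citation marker."""
    return [
        sentence
        for sentence in SENTENCE_SPLIT.split(text.strip())
        if HAS_NUMBER.search(sentence) and not HAS_CITATION.search(sentence)
    ]


def is_stale(last_reviewed: date, today: date, max_age_days: int = 180) -> bool:
    # Flag pages whose "Last reviewed" date is older than the chosen window.
    return (today - last_reviewed).days > max_age_days
```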

Freshness and traceability show up repeatedly in practitioner research on how LLMs find and prefer citations; see Passionfruit’s analysis of what LLMs look for in sources: How LLMs search for citations.

Comparison: “claim-heavy” vs. “evidence-heavy” pages for AI citation likelihood

Evidence scaffolding: what changes on-page

| Page pattern | What it looks like | Likely outcome in AI answers |
|---|---|---|
| Claim-heavy | Many assertions, few sources, no dates/methods | Higher risk of uncited paraphrase or non-attribution |
| Evidence-heavy | Key claims cited, sources table, updated date, methods note | Higher chance of direct citation and safer quoting |

Editorial governance: freshness, attribution, and audit trails

Evidence-first work fails when it becomes “citation spam” (lots of low-quality sources), when links break, or when claims don’t match what the source actually says. Use a minimum source-quality rubric (primary data > reputable secondary analysis > opinion) and add an audit trail so updates don’t invalidate citations.
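The source-quality rubric can be encoded as tiers so an audit can flag claims whose best source is weak. In this sketch the tier labels and numeric scores (3/2/1) are assumptions that simply mirror the ordering primary data > reputable secondary analysis > opinion; deciding which tier a given source belongs to remains an editorial judgment.

```python
from __future__ import annotations

# Assumed scores mirroring the rubric order: primary > secondary > opinion.
TIER_SCORE = {"primary": 3, "secondary": 2, "opinion": 1}


def weak_claims(claims: dict[str, list[str]], min_score: int = 2) -> list[str]:
    """Return IDs of claims whose strongest cited source scores below `min_score`.

    `claims` maps a claim ID to the tiers of its sources, e.g.
    {"stat-1": ["primary", "opinion"]}. A claim with no sources scores 0.
    """
    flagged = []
    for claim_id, tiers in claims.items():
        best = max((TIER_SCORE[tier] for tier in tiers), default=0)
        if best < min_score:
            flagged.append(claim_id)
    return flagged
```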

For a benchmark view of what domains get cited most often in AI experiences (and why community validation can matter), see Semrush’s analysis: Most cited domains in AI.

Side-by-side comparison: Which structural feature engineering method should you prioritize?

Comparison matrix (impact vs. effort vs. risk)

| Technique | Retrieval lift | Citation likelihood | Effort | Risk | Measurability |
|---|---|---|---|---|---|
| Entity-first | Medium | Medium–High | Medium | Medium (schema errors) | Medium |
| Chunk-first | High | Medium | Low–Medium | Low | High |
| Evidence-first | Medium | High | Medium–High | Medium (source quality) | Medium–High |
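If you want the matrix to produce an explicit ordering, one option is to map the labels to numbers and subtract costs from benefits. The encoding below (Low = 1 up to High = 3, with ranges at the midpoint) and the equal default weights are assumptions for illustration, not part of this review's method. With them, the matrix ranks chunk-first first, then evidence-first, then entity-first; reweighting (for example, up-weighting citation likelihood for a publisher) can change the order.

```python
from __future__ import annotations

# Assumed ordinal encoding of the matrix labels; ranges take the midpoint.
LEVEL = {"Low": 1.0, "Low–Medium": 1.5, "Medium": 2.0, "Medium–High": 2.5, "High": 3.0}

BENEFITS = ("retrieval_lift", "citation_likelihood", "measurability")  # higher is better
COSTS = ("effort", "risk")  # higher is worse

MATRIX = {
    "Entity-first": {"retrieval_lift": "Medium", "citation_likelihood": "Medium–High",
                     "effort": "Medium", "risk": "Medium", "measurability": "Medium"},
    "Chunk-first": {"retrieval_lift": "High", "citation_likelihood": "Medium",
                    "effort": "Low–Medium", "risk": "Low", "measurability": "High"},
    "Evidence-first": {"retrieval_lift": "Medium", "citation_likelihood": "High",
                       "effort": "Medium–High", "risk": "Medium", "measurability": "Medium–High"},
}


def priority_score(row: dict[str, str], weights: dict[str, float] | None = None) -> float:
    # Weighted benefits minus weighted costs; unspecified criteria get weight 1.
    weights = weights or {}
    benefit = sum(weights.get(c, 1.0) * LEVEL[row[c]] for c in BENEFITS)
    cost = sum(weights.get(c, 1.0) * LEVEL[row[c]] for c in COSTS)
    return benefit - cost


def rank_techniques(matrix: dict[str, dict[str, str]],
                    weights: dict[str, float] | None = None) -> list[tuple[str, float]]:
    # Highest net score first.
    scored = [(name, priority_score(row, weights)) for name, row in matrix.items()]
    return sorted(scored, key=lambda item: item[1], reverse=True)
```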

Recommendations by site type (SaaS, publisher, ecommerce, local)

- SaaS: start chunk-first on feature/solution pages, then entity-first for product + integrations, then evidence-first for benchmarks and comparisons.

- Publishers: chunk-first for explainers, evidence-first for stats and investigations, entity-first for author/topic hubs.

- Ecommerce: entity-first for products/brands, chunk-first for buying guides and FAQs, evidence-first for claims (materials, tests, guarantees).

- Local: entity-first for NAP (name, address, phone) consistency and location entities, chunk-first for service pages, evidence-first for licensing, pricing ranges, and policies.

90-day implementation roadmap (minimum viable GEO structure)

Weeks 1–2: Templates + baseline

Pick 15–30 high-intent pages. Capture baseline citations/mentions/quote accuracy. Add chunking and heading templates first for fastest retrieval wins.

Weeks 3–6: Roll out chunk-first to the pilot set

Add answer blocks, TL;DRs, and consistent H2/H3 patterns. Re-test the same prompt set weekly to detect early movement.

Weeks 7–10: Add entity-first disambiguation

Fix naming consistency, add canonical entity pages, and validate schema. Focus on pages that compete on “What is X?” and “X vs Y”.

Weeks 11–12: Evidence scaffolding on competitive pages

Add sources/methodology and freshness signals to pages where citations decide the winner (stats, comparisons, claims). Measure citation uplift and uncited paraphrase reduction.

“In AI-mediated search, structure is a ranking feature and an attribution feature. If a system can’t confidently extract and verify a passage, it will often choose a different source—or use yours without naming you.”

[Chart: Technique scoring (example). Example relative scores (1–5) across the review criteria; replace with your pilot benchmarks.]

Measurement & QA: Proving AI Visibility gains from structural changes

Define AI Visibility metrics: discoverability, retrievability, citability

- Discoverability: your pages appear as candidates (mentions, surfaced links, or suggested sources).

- Retrievability: engines extract the correct passage for the prompt (passage match rate).

- Citability: engines attribute your content with a link/mention and quote it accurately.
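The three metrics can be computed from a log of prompt runs. The sketch below assumes each run is recorded with four yes/no judgments (surfaced, passage match, cited, quote accurate) made by a reviewer or a script. The `Observation` fields and the choice of denominators, such as computing retrievability only over runs where your page was surfaced, are illustrative choices rather than a standard.

```python
from __future__ import annotations

from dataclasses import dataclass


@dataclass
class Observation:
    prompt: str
    engine: str
    surfaced: bool        # our page appeared as a candidate, mention, or suggested source
    passage_match: bool   # the engine used the passage we expected for this prompt
    cited: bool           # the answer linked to or named us
    quote_accurate: bool  # any quoted span matches our text


def visibility_metrics(obs: list[Observation]) -> dict[str, float]:
    """Discoverability, retrievability, and citability rates over logged prompt runs."""
    if not obs:
        return {"discoverability": 0.0, "retrievability": 0.0, "citability": 0.0}
    surfaced = [o for o in obs if o.surfaced]
    return {
        "discoverability": len(surfaced) / len(obs),
        # Passage match rate among runs where the page was surfaced at all.
        "retrievability": sum(o.passage_match for o in surfaced) / len(surfaced) if surfaced else 0.0,
        # Cited AND quoted accurately, over all runs.
        "citability": sum(o.cited and o.quote_accurate for o in obs) / len(obs),
    }
```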

Test design: prompt sets, query clusters, and before/after controls

Use a fixed prompt set per topic (20–50 prompts), grouped by intent (definition, comparison, pricing, how-to, troubleshooting). Track citations/mentions, extract quoted spans, and log engine + date to control for model drift. If possible, keep a control group of similar pages untouched for 30–60 days to isolate lift from broader engine updates.
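To separate lift from engine-wide drift, compare the change on treated pages with the change on the untouched control group at each checkpoint (a difference-in-differences estimate). This sketch assumes you have already computed a citation rate per group per checkpoint, with day 0 as the pre-change baseline; with 20–50 prompts per topic these rates are noisy, so treat small differences with caution.

```python
from __future__ import annotations

CHECKPOINTS = (14, 30, 60, 90)  # days after the change, matching the tracking cadence


def citation_rate(cited_runs: int, total_runs: int) -> float:
    # Share of prompt runs in which the engine cited or mentioned the page.
    return cited_runs / total_runs if total_runs else 0.0


def lift_by_checkpoint(series: dict[int, tuple[float, float]]) -> dict[int, float]:
    """Difference-in-differences lift versus day 0 at each checkpoint.

    `series` maps day -> (treated_rate, control_rate); day 0 is the baseline.
    Subtracting the control group's change nets out shifts that hit every page,
    such as model or ranking updates.
    """
    treated_0, control_0 = series[0]
    return {
        day: (series[day][0] - treated_0) - (series[day][1] - control_0)
        for day in CHECKPOINTS
        if day in series
    }
```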

QA checklist: schema validation, chunk integrity, and citation accuracy

Validate schema, lint headings/chunks, check broken links, and verify each key claim matches its cited source.
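For the schema part of this checklist, a quick pre-publish check can catch silent failures such as invalid JSON or a missing `@type`. The Python sketch below is deliberately basic and is not a substitute for a full validator such as the Schema Markup Validator: it pulls `application/ld+json` script blocks out of HTML with a regular expression (an HTML parser would be more robust), does not expand `@graph`, and checks only that each object parses, declares a schema.org `@context` and a `@type`, and uses https URLs in `sameAs` (a house-style rule assumed here).

```python
from __future__ import annotations

import json
import re

# Matches <script type="application/ld+json"> blocks; a real HTML parser is more robust.
LD_JSON = re.compile(
    r'<script[^>]*type=["\']application/ld\+json["\'][^>]*>(.*?)</script>',
    re.IGNORECASE | re.DOTALL,
)


def check_jsonld(html: str) -> list[str]:
    """Return problems found in a page's JSON-LD (an empty list means none were found)."""
    blocks = LD_JSON.findall(html)
    if not blocks:
        return ["no JSON-LD block found"]
    problems = []
    for i, raw in enumerate(blocks):
        try:
            data = json.loads(raw)
        except json.JSONDecodeError as exc:
            problems.append(f"block {i}: invalid JSON ({exc.msg})")
            continue
        # A block may hold one object or a list of objects; @graph is not expanded here.
        for item in data if isinstance(data, list) else [data]:
            if not isinstance(item, dict):
                problems.append(f"block {i}: expected an object, got {type(item).__name__}")
                continue
            if "schema.org" not in str(item.get("@context", "")):
                problems.append(f"block {i}: missing or non-schema.org @context")
            if "@type" not in item:
                problems.append(f"block {i}: missing @type")
            same_as = item.get("sameAs", [])
            for link in [same_as] if isinstance(same_as, str) else same_as:
                if not str(link).startswith("https://"):
                    problems.append(f"block {i}: sameAs is not an https URL: {link}")
    return problems
```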

Internal link: Measuring citations and mentions across AI answer engines.

Key takeaways

Structural feature engineering improves AI Visibility by making pages easier to retrieve, extract, and cite—separate from “writing better.”

Chunk-first usually delivers the fastest retrieval wins; entity-first reduces ambiguity and strengthens attribution; evidence-first increases citation likelihood on competitive queries.

Measure with a fixed prompt set and track citations/mentions, quoted spans, and quote accuracy over time (14/30/60/90 days).

Governance matters: schema validation, consistent entity naming, and claim-to-source checks prevent “structure” from becoming a new failure mode.

Founder of Geol.ai

Senior builder at the intersection of AI, search, and blockchain. I design and ship agentic systems that automate complex business workflows. On the search side, I’m at the forefront of GEO/AEO (AI SEO), where retrieval, structured data, and entity authority map directly to AI answers and revenue. I’ve authored a whitepaper on this space and road-test ideas currently in production. On the infrastructure side, I integrate LLM pipelines (RAG, vector search, tool calling), data connectors (CRM/ERP/Ads), and observability so teams can trust automation at scale. In crypto, I implement alternative payment rails (on-chain + off-ramp orchestration, stable-value flows, compliance gating) to reduce fees and settlement times versus traditional processors and legacy financial institutions. A true Bitcoin treasury advocate. 18+ years of web dev, SEO, and PPC give me the full stack—from growth strategy to code. I’m hands-on (Vibe coding on Replit/Codex/Cursor) and pragmatic: ship fast, measure impact, iterate. Focus areas: AI workflow automation • GEO/AEO strategy • AI content/retrieval architecture • Data pipelines • On-chain payments • Product-led growth for AI systems Let’s talk if you want: to automate a revenue workflow, make your site/brand “answer-ready” for AI, or stand up crypto payments without breaking compliance or UX.
