Generative Engine Optimization (GEO) — citation diagnostics & repair

Learn how to diagnose and repair missing or wrong citations in Google AI Overviews using Structured Data, entity signals, and a repeatable audit workflow.


If your content is accurate but Google AI Overviews doesn’t cite it (or cites the wrong URL), you’re usually dealing with a citation failure: the system can’t reliably retrieve, understand, or trust your page as the best evidence for a specific answer. The fastest way to diagnose and repair that failure is to treat citations as an observable output, then systematically debug the inputs—technical retrieval signals, entity clarity, and Structured Data that aligns your page to the Knowledge Graph.

This spoke is a practical playbook for auditing citation gaps, tagging root causes, and implementing repairs that increase citation incidence and citation accuracy without “markup theater.” For adjacent research on why structure matters for LLM citations, see The Impact of Content Structure on LLM Citations: Insights from Recent Studies.

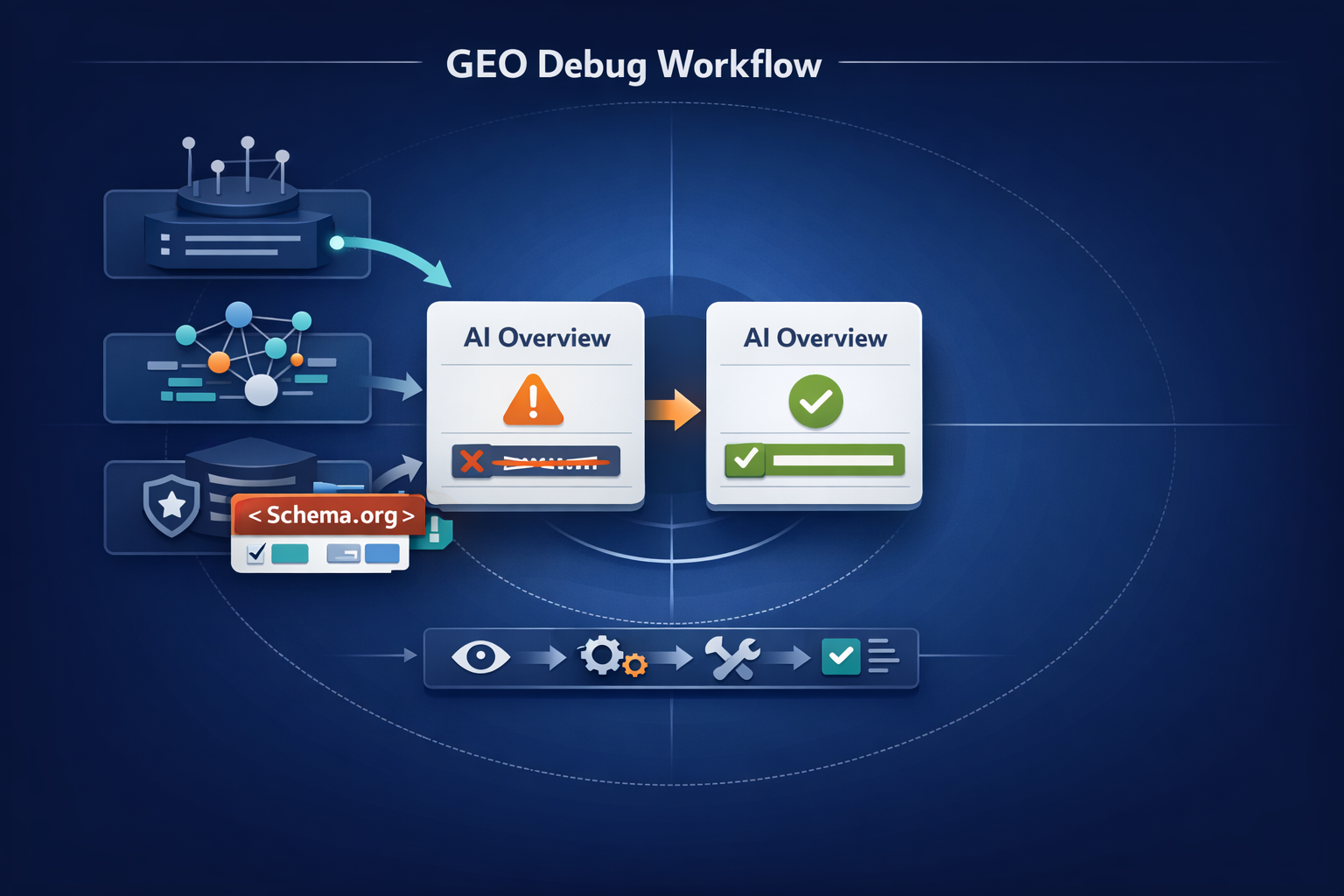

Citation diagnostics & repair is a repeatable GEO workflow to (1) identify why a page isn’t cited (or is mis-cited) for a set of AI Overview intents, and (2) fix the underlying retrieval/understanding/trust issues—most often through canonical hygiene, entity disambiguation, and Structured Data that matches what the page actually says.

What “citation failure” really is in AI Overviews (and why Structured Data is the lever)

Featured snippet-style definition: citation diagnostics & repair

In GEO, citation diagnostics & repair means building a tracked query set, observing which URLs AI Overviews cite (and whether they’re correct), then debugging the three layers that drive citations: retrieval (can Google fetch/index the right page?), understanding (does the system resolve your entities and claims correctly?), and trust (is your page corroborated and attributable enough to be used as evidence?).

My thesis: most GEO “wins” are debugging, not copywriting

Teams often assume “better writing” is the lever. In practice, many AI Overview citation gaps come from boring, fixable issues: the wrong canonical, inconsistent entity naming across templates, missing Organization/Person provenance, or weak machine-readable relationships. That’s why GEO programs that treat visibility as an engineering problem (instrument → diagnose → repair → retest) tend to compound faster. For a Knowledge Graph–led example of this mindset, explore The Rise of Generative Engine Optimization (GEO): Navigating AI-Driven Search Landscapes (Case Study: Knowledge Graph–Led Entity Optimization).

How AI Overviews pick sources: entity confidence + retrieval signals + trust

While the exact ranking and generation pipeline isn’t fully transparent, citations generally emerge when the system can: (a) retrieve candidate documents reliably, (b) extract and align entities/relationships to the query intent, and (c) corroborate claims with trustworthy sources. Structured Data is the lever because it’s one of the most testable ways to reduce ambiguity about what the page is about, which entity it represents, and how it relates to other entities—the same primitives Knowledge Graphs use.

This focus on citation reliability is also showing up in research on LLM citation practices (accuracy, validity, and failure patterns). See: LLMs' Citation Practices Under Scrutiny: Ensuring Accuracy in AI-Generated Content and the 2026 diagnostic framing in Generative Engine Optimization (GEO) — citation diagnostics & repair (AgentGEO).

A practical diagnostic: 7 citation failure modes you can actually test

A useful way to stop guessing is to bucket every citation problem into a failure mode with a measurable symptom. Here are seven that show up repeatedly in audits.

Fix technical retrieval + canonical consistency first, then entity clarity, then Structured Data enrichment. An answer engine can’t cite a page it can’t reliably fetch, and it can’t confidently cite a page whose entities it can’t disambiguate.

- Failure mode 1 — Entity ambiguity: your brand/product/person name collides with another entity. Symptom: AI Overviews cite competitors for “your” branded facts or cite Wikipedia/aggregators that disambiguate better than you do.

- Failure mode 2 — Weak topical alignment: the page ranks, but the answer engine doesn’t see it as the best evidence for the specific question. Symptom: you get classic organic visibility, but citations go to narrower, more directly aligned pages (often competitor FAQs or docs).

- Failure mode 3 — Thin corroboration: your claims are “true,” but not supported with sources, definitions, or consistent entity context. Symptom: AI Overviews prefer third-party citations (standards bodies, research, government, major publishers).

- Failure mode 4 — Crawl/index/render issues: the content you think is “on the page” isn’t consistently accessible to Googlebot. Symptom: the fetched HTML differs from the rendered page, critical resources are blocked, rendering is delayed, or the page is only partially indexed.

- Failure mode 5 — Canonical/duplication traps: multiple URLs compete for the same entity/topic, and the wrong one becomes the “citation target.” Symptom: AI Overview cites a tag page, parameter URL, or category page instead of the canonical guide (or cites an old version).

- Failure mode 6 — Structured Data gaps: Schema.org exists, but it doesn’t declare the primary entity, publisher, or page type clearly. Symptom: brand facts are repeatedly misattributed; authorship/provenance is unclear; the page isn’t eligible for certain rich interpretations.

- Failure mode 7 — Broken entity relationships: your page doesn’t connect the dots between entities (product ↔ organization, person ↔ role, feature ↔ category). Symptom: the engine cites “explainers” elsewhere because they provide cleaner relationship framing and disambiguation.
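To keep tagging consistent across reviewers, you can encode the seven modes as a small lookup that also carries the fix order. The sketch below is a minimal Python illustration, not a standard taxonomy: the mode identifiers are made-up labels, and the layer and stage assigned to Structured Data gaps, broken relationships, and thin corroboration are assumptions. Stages follow the recommended order: retrieval and canonical issues, then entity clarity, then Structured Data enrichment.

```python
from enum import Enum


class Layer(Enum):
    RETRIEVAL = "retrieval"
    UNDERSTANDING = "understanding"
    TRUST = "trust"


# Failure mode -> (diagnostic layer, repair stage).
# Stage 1 = retrieval/canonical fixes, 2 = entity clarity, 3 = Structured Data
# enrichment and provenance. Layer/stage for the last three entries is an assumption.
FAILURE_MODES = {
    "crawl_index_render": (Layer.RETRIEVAL, 1),
    "canonical_duplication": (Layer.RETRIEVAL, 1),
    "entity_ambiguity": (Layer.UNDERSTANDING, 2),
    "weak_topical_alignment": (Layer.UNDERSTANDING, 2),
    "structured_data_gaps": (Layer.UNDERSTANDING, 3),
    "broken_relationships": (Layer.UNDERSTANDING, 3),
    "thin_corroboration": (Layer.TRUST, 3),
}


def repair_order(observed_modes):
    """Return the distinct observed modes sorted by repair stage.

    Duplicates are dropped; modes in the same stage keep their first-seen order
    because Python's sort is stable.
    """
    unique = list(dict.fromkeys(observed_modes))
    return sorted(unique, key=lambda mode: FAILURE_MODES[mode][1])


print(repair_order(["structured_data_gaps", "entity_ambiguity",
                    "canonical_duplication", "entity_ambiguity"]))
# ['canonical_duplication', 'entity_ambiguity', 'structured_data_gaps']
```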

Figure: Example audit distribution of citation failure modes (n=100 pages). Illustrative; replicate it in your own audit by tagging each missed or mis-cited page with a dominant failure mode, using your own crawl + SERP evidence in place of the sample values.
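If you keep tags in a simple mapping, producing your own distribution takes a few lines. A minimal sketch; the URLs and tag labels are hypothetical, and each page is assumed to carry exactly one dominant mode.

```python
from collections import Counter


def failure_mode_distribution(tags):
    """Percentage of tagged pages per dominant failure mode.

    `tags` maps each missed or mis-cited page URL to exactly one failure-mode label.
    Returns {mode: percent} ordered from most to least common, rounded to 0.1.
    """
    counts = Counter(tags.values())
    total = sum(counts.values())
    if total == 0:
        return {}
    return {mode: round(100 * n / total, 1) for mode, n in counts.most_common()}


sample = {  # hypothetical audit tags
    "/guide": "canonical_duplication",
    "/guide?ref=nav": "canonical_duplication",
    "/pricing": "entity_ambiguity",
    "/docs/setup": "thin_corroboration",
}
print(failure_mode_distribution(sample))
# {'canonical_duplication': 50.0, 'entity_ambiguity': 25.0, 'thin_corroboration': 25.0}
```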

To scale this beyond a one-off audit, you need a repeatable workflow and a change log. If you’re operationalizing Knowledge Graph updates and monitoring AI visibility, the automation angle in Case Study: Using Marketing Automation Platform Features to Orchestrate Knowledge Graph Updates for AI Visibility Monitoring is a useful companion.

Citation diagnostics workflow (repeatable): from query set to root cause

Build a citation query set that mirrors AI Overview intents

Output: a spreadsheet of 30–200 queries grouped by intent (definition, comparison, “how to,” troubleshooting, pricing, compliance). Include branded + non-branded variants, and map each query to the page you believe should be cited.
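A typed loader catches intent typos and missing target URLs before they pollute the audit. A minimal sketch, assuming the spreadsheet is exported as CSV with hypothetical columns `query`, `intent`, `branded`, and `target_url`; the `how_to` label is an assumed spelling of the “how to” intent.

```python
import csv
import io
from dataclasses import dataclass

# Intent buckets from the workflow; "how_to" is an assumed label for "how to".
INTENTS = {"definition", "comparison", "how_to", "troubleshooting", "pricing", "compliance"}


@dataclass(frozen=True)
class TrackedQuery:
    query: str
    intent: str
    branded: bool
    target_url: str  # the page you believe should be cited


def load_query_set(csv_text):
    """Parse a CSV with hypothetical columns: query, intent, branded, target_url."""
    rows = []
    for record in csv.DictReader(io.StringIO(csv_text)):
        intent = record["intent"].strip().lower()
        if intent not in INTENTS:
            raise ValueError(f"unknown intent {intent!r} for query {record['query']!r}")
        rows.append(TrackedQuery(
            query=record["query"].strip(),
            intent=intent,
            branded=record["branded"].strip().lower() in {"1", "true", "yes"},
            target_url=record["target_url"].strip(),
        ))
    return rows


sheet = """query,intent,branded,target_url
what is generative engine optimization,definition,no,https://www.example.com/geo
example co geo audit pricing,pricing,yes,https://www.example.com/pricing
"""
queries = load_query_set(sheet)
print(len(queries), queries[1].branded)  # 2 True
```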

Capture evidence: SERP snapshots + cited URLs + what’s being claimed

Output: a citation map per query: (a) whether an AI Overview appeared, (b) which domains/URLs were cited, (c) which claim(s) each citation appears to support, and (d) whether your intended canonical URL was cited. Store before/after snapshots so you can prove deltas.
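One way to keep the citation map consistent is a typed record per query and snapshot. A minimal sketch, assuming you record cited URLs by hand or from your own SERP capture process; the field names and example URLs are hypothetical. The comparison key deliberately keeps the query string, so a cited parameter URL is not counted as a citation of the clean canonical.

```python
from dataclasses import dataclass, field
from urllib.parse import urlsplit


def normalize(url):
    """Comparison key: lower-case host without "www.", no scheme/fragment/trailing slash.

    The query string is kept on purpose, so "/guide?ref=nav" != "/guide".
    """
    parts = urlsplit(url)
    host = parts.netloc.lower().removeprefix("www.")
    path = parts.path.rstrip("/") or "/"
    query = f"?{parts.query}" if parts.query else ""
    return f"{host}{path}{query}"


@dataclass
class CitationObservation:
    query: str
    overview_shown: bool
    cited_urls: list[str] = field(default_factory=list)
    claims: dict[str, str] = field(default_factory=dict)  # cited URL -> claim it appears to support
    captured_on: str = ""  # ISO date of the SERP snapshot

    def cites_domain(self, domain):
        domain = domain.lower().removeprefix("www.")
        return any(urlsplit(u).netloc.lower().removeprefix("www.") == domain
                   for u in self.cited_urls)

    def cites_canonical(self, canonical_url):
        target = normalize(canonical_url)
        return any(normalize(u) == target for u in self.cited_urls)


obs = CitationObservation(
    "what is geo", True,
    ["https://www.example.com/geo/?ref=nav", "https://other.org/geo"],
    captured_on="2025-01-06",  # hypothetical snapshot date
)
print(obs.cites_domain("example.com"), obs.cites_canonical("https://www.example.com/geo"))
# True False  (cited, but via a parameter URL rather than the canonical)
```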

Isolate the cause: retrieval vs understanding vs trust

Output: root-cause tags. For each missed or wrong citation, decide the dominant layer: retrieval (indexing/canonical/render), understanding (entity ambiguity, topical mismatch), or trust (lack of provenance/corroboration). This is where Structured Data becomes a controlled input rather than a “best practice.”
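Checking layers in a fixed order keeps tags comparable between reviewers, because a page that can’t be retrieved reliably will also look like it has understanding or trust problems. A minimal sketch; the check names are hypothetical pass/fail judgments you fill in per page (for example, the Google-selected canonical is shown in Search Console’s URL Inspection report).

```python
from dataclasses import dataclass
from typing import Optional


@dataclass
class PageChecks:
    """Pass/fail checks for one intended citation target (hypothetical fields)."""
    indexed: bool
    canonical_matches: bool     # Google-selected canonical == the URL you intend to be cited
    key_content_rendered: bool  # the claims you want credited appear in the rendered HTML
    entity_unambiguous: bool
    topically_aligned: bool
    has_provenance: bool        # visible publisher/author/dates, mirrored in markup
    corroborated: bool          # claims backed by sources or consistent entity context


def dominant_layer(checks: PageChecks) -> Optional[str]:
    """Return the first failing layer, checked in retrieval -> understanding -> trust order."""
    if not (checks.indexed and checks.canonical_matches and checks.key_content_rendered):
        return "retrieval"
    if not (checks.entity_unambiguous and checks.topically_aligned):
        return "understanding"
    if not (checks.has_provenance and checks.corroborated):
        return "trust"
    return None  # no defect found on your side; look at competitors or SERP changes
```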

Prioritize repairs by expected citation lift

Output: a fix backlog ranked by (a) number of affected queries, (b) severity (wrong entity/wrong URL vs missing), (c) ease of implementation (template vs one-off), and (d) time-to-retest (crawl frequency, indexation speed).
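The four criteria can be folded into one sortable score. This is a minimal sketch, not a standard formula: multiplying reach, severity, and ease and dividing by time-to-retest is one reasonable choice, and the weights and backlog items are assumptions to tune against your own data.

```python
from dataclasses import dataclass

# Assumed weights; tune them to your own backlog.
SEVERITY = {"wrong_entity": 3, "wrong_url": 2, "missing": 1}
EASE = {"template": 2, "one_off": 1}  # a template fix repairs many pages at once


@dataclass
class Fix:
    name: str
    affected_queries: int
    severity: str     # key in SEVERITY
    scope: str        # key in EASE
    retest_days: int  # expected days until recrawl/reindex lets you retest


def priority(fix: Fix) -> float:
    """Reach x severity x ease, discounted by how long you must wait to retest."""
    return fix.affected_queries * SEVERITY[fix.severity] * EASE[fix.scope] / max(fix.retest_days, 1)


backlog = [  # hypothetical backlog items
    Fix("add sameAs to Organization", 10, "wrong_entity", "one_off", 14),
    Fix("canonicalize guide parameter URLs", 20, "wrong_url", "template", 7),
]
for fix in sorted(backlog, key=priority, reverse=True):
    print(f"{priority(fix):6.2f}  {fix.name}")
```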

Counterpoint: “You can’t reverse-engineer AI Overviews.” Not fully, that’s true. But you can still run controlled diagnostics: treat citations as an observable output and iterate on measurable inputs (canonical hygiene, entity clarity, Structured Data parity, corroboration). Over repeated cycles, that is enough to improve outcomes.

Figure: KPI framework example, citation rate and canonical accuracy over a 30-day repair sprint. Illustrative trend lines showing how teams often measure progress: more tracked queries where you are cited, and fewer instances where the wrong URL is cited.

If you want a broader “why now” view on GEO adoption and visibility, use this workflow as the execution layer beneath your strategy. For research tying Knowledge Graph readiness to AI-search visibility, see Generative Engine Optimization (GEO) Adoption Research: How Knowledge Graph Readiness Predicts AI-Search Visibility.

Repair playbook: Structured Data fixes that move citations (without spam)

Structured Data doesn’t “force” citations. It reduces ambiguity and improves machine interpretability—especially around entities, provenance, and canonical targets. The goal is parity: your markup should precisely reflect what a human sees on the page.

Repair 1: align the page to one primary entity (and declare it)

Pick a single primary entity per page (the thing the page is “about”), then make it consistent across: title/H1, intro definition, internal anchor text, and Schema.org. For example: a product page should clearly describe the product entity; a company profile should clearly describe the Organization entity; a thought-leadership piece should clearly identify the publisher and author entities.
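A quick parity check makes the “one primary entity” rule testable across templates. A minimal sketch; “Acme Widget” and the surface labels are hypothetical, extracting the text from your pages is left to your crawler, and the case-insensitive substring match will not recognize aliases or abbreviations.

```python
def primary_entity_gaps(entity_name, surfaces):
    """Return the page surfaces that never mention the page's primary entity.

    `surfaces` maps a label (e.g. "title", "h1", "intro", "schema_name", or an
    internal anchor text) to the text you extracted for it.
    """
    needle = entity_name.casefold()
    return [label for label, text in surfaces.items() if needle not in (text or "").casefold()]


print(primary_entity_gaps("Acme Widget", {
    "title": "Acme Widget Pricing and Plans",
    "h1": "Acme Widget plans",
    "intro": "The Acme Widget is a hosted analytics tool...",
    "schema_name": "Acme Gadget",  # mismatch in the Schema.org markup
    "anchor:/pricing": "see Acme Widget pricing",
}))
# ['schema_name']
```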

Repair 2: strengthen relationships (sameAs, about, mentions) to the Knowledge Graph

Relationship markup is where many citation “repairs” actually happen. Patterns that tend to reduce mis-citation include:

- Use `sameAs` to link your Organization/Person to authoritative profiles (e.g., official social profiles, Wikidata, Crunchbase where appropriate, standards memberships where applicable).

- Use `about` for the primary entity/topic and `mentions` for secondary entities that are materially discussed (not keyword-stuffed).

- Ensure Organization ↔ Product ↔ Article connections are explicit (publisher, brand, manufacturer, author, reviewedBy where appropriate and truthful).
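As an illustration of these patterns, here is a minimal Python sketch that emits Schema.org JSON-LD for an Article whose publisher is an Organization with `sameAs` links, plus `about` and `mentions`. Every name, URL, and the Wikidata ID are placeholders; only mark up entities and people that actually appear on the page. The printed JSON would go inside a `script` tag of type `application/ld+json`.

```python
import json

# Placeholder entities: swap in your real names and verifiable profile URLs.
ORG_ID = "https://www.example.com/#organization"


def build_jsonld():
    organization = {
        "@type": "Organization",
        "@id": ORG_ID,
        "name": "Example Co",
        "url": "https://www.example.com/",
        "sameAs": [
            "https://www.wikidata.org/wiki/Q00000000",  # hypothetical Wikidata item
            "https://www.linkedin.com/company/example-co",
        ],
    }
    article = {
        "@type": "Article",
        "headline": "Citation diagnostics & repair",
        "mainEntityOfPage": "https://www.example.com/guides/citation-repair",
        "publisher": {"@id": ORG_ID},  # references the Organization node by @id
        "author": {"@type": "Person", "name": "Jane Doe",
                   "url": "https://www.example.com/authors/jane-doe"},
        "about": {"@type": "Thing", "name": "Generative Engine Optimization"},
        "mentions": [{"@type": "Thing", "name": "Google AI Overviews"}],
    }
    # A single @graph keeps both nodes in one JSON-LD document,
    # so the publisher's @id reference can be resolved locally.
    return {"@context": "https://schema.org", "@graph": [organization, article]}


print(json.dumps(build_jsonld(), indent=2))
```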

This is also where transparency matters: as AI search evolves, pressure is increasing for explainable, trustworthy source selection. For the ethics and transparency angle, see Industry Debates: The Ethics and Future of AI in Search—Why Knowledge Graph Transparency Must Be Non‑Negotiable. Related fairness considerations in ranking and retrieval are discussed in Fairness in AI Ranking: Do LLMs Exhibit Bias?.

Repair 3: validate authorship and provenance (E-E-A-T signals via markup + page UX)

AI Overviews often prefer sources that are attributable: clear publisher, clear author (where relevant), and clear update history. Practical repairs include: consistent author pages, Organization markup with verifiable identifiers, and Article markup that matches visible bylines and dates. The point isn’t to “game E‑E‑A‑T,” it’s to remove uncertainty about who is speaking and why they’re credible.
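Byline and date parity is easy to check mechanically once you have extracted both sides. A minimal sketch, assuming you have already pulled the author name and ISO 8601 dates out of the Article markup and out of the visible page; the dict keys mirror Schema.org property names, and the example values are hypothetical.

```python
from datetime import date

FIELDS = ("author", "datePublished", "dateModified")


def provenance_mismatches(markup, visible):
    """Return the fields where Article markup and the visible page disagree.

    Both dicts use the keys in FIELDS; dates are ISO 8601 strings and only the
    calendar date (first 10 characters) is compared. Missing values count as mismatches.
    """
    problems = []
    for key in FIELDS:
        m, v = markup.get(key), visible.get(key)
        if not m or not v:
            problems.append(key)
        elif key == "author":
            if m.strip().casefold() != v.strip().casefold():
                problems.append(key)
        elif date.fromisoformat(m[:10]) != date.fromisoformat(v[:10]):
            problems.append(key)
    return problems


print(provenance_mismatches(
    {"author": "Jane Doe", "datePublished": "2025-01-10T09:00:00+00:00", "dateModified": "2025-03-01"},
    {"author": "jane doe", "datePublished": "2025-01-10", "dateModified": "2025-02-20"},
))
# ['dateModified']
```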

Repair 4: fix canonical targets so the model cites the right URL

Mis-citations often trace back to URL chaos: parameters, faceted navigation, print views, localization variants, or legacy paths. Repairs that tend to improve citation accuracy include: one canonical per intent, consistent internal linking to the canonical, and eliminating near-duplicate pages that compete for the same entity/topic. If you’re using crawl data to surface these traps, the approach in Screaming Frog SEO Spider Review 2026 (Case Study): Using Crawl Data to Improve Generative Engine Optimization is directly relevant.
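Before you can consolidate, you need to see which crawled URLs are variants of the same page. A minimal sketch, assuming a flat list of crawled URLs (for example, exported from your crawler); the set of parameters treated as ignorable is an assumption you should verify per site. Each resulting cluster should end up with one canonical, with internal links pointing to it.

```python
from collections import defaultdict
from urllib.parse import parse_qsl, urlencode, urlsplit, urlunsplit

# Assumption: on this site these parameters never change the main content.
IGNORABLE_PARAMS = {"utm_source", "utm_medium", "utm_campaign", "ref", "print"}


def content_key(url):
    """Collapse tracking/print variants and trailing slashes into one comparison key."""
    parts = urlsplit(url)
    kept = sorted((k, v) for k, v in parse_qsl(parts.query) if k not in IGNORABLE_PARAMS)
    path = parts.path.rstrip("/") or "/"
    return urlunsplit(("https", parts.netloc.lower(), path, urlencode(kept), ""))


def duplicate_clusters(urls):
    """Group crawled URLs that share a content key; only groups of two or more are returned."""
    groups = defaultdict(list)
    for url in urls:
        groups[content_key(url)].append(url)
    return {key: members for key, members in groups.items() if len(members) > 1}


crawl = [  # hypothetical crawl export
    "https://example.com/guide",
    "https://example.com/guide/?utm_source=newsletter",
    "https://example.com/guide?print=1",
    "https://example.com/pricing",
]
for key, members in duplicate_clusters(crawl).items():
    print(key, "<-", members)
```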

Adding irrelevant Schema.org types or stuffing entities into about/mentions can increase ambiguity and reduce trust. Only mark up what is clearly present on the page, keep identifiers consistent across templates, and validate regularly.
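A simple guardrail against entity stuffing is to flag any `about` or `mentions` entity whose name never appears in the visible text. A minimal sketch; exact-name matching is a deliberate simplification that will miss legitimate aliases, so treat hits as items to review rather than automatic failures. The markup and page text are hypothetical.

```python
import re


def _norm(text):
    """Collapse whitespace and case so line breaks don't hide a match."""
    return re.sub(r"\s+", " ", text).strip().casefold()


def unsupported_entities(jsonld, visible_text):
    """Return about/mentions names that never appear in the page's visible text."""
    page = _norm(visible_text)
    names = []
    for prop in ("about", "mentions"):
        value = jsonld.get(prop, [])
        items = value if isinstance(value, list) else [value]
        names += [item.get("name", "") for item in items if isinstance(item, dict)]
    return [name for name in names if name and _norm(name) not in page]


markup = {
    "about": {"@type": "Thing", "name": "Generative Engine Optimization"},
    "mentions": [{"@type": "Thing", "name": "Google AI Overviews"},
                 {"@type": "Thing", "name": "Blockchain"}],
}
page_text = "Generative Engine Optimization helps pages earn citations in Google AI\n  Overviews."
print(unsupported_entities(markup, page_text))  # ['Blockchain']
```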

Finally, remember the broader ecosystem constraints: answer engines and aggregators face legal and policy pressure around content usage and attribution. Even if this doesn’t change your technical checklist, it strengthens the case for clean provenance and accurate citations. For background, see Perplexity AI (legal battles overview); for how to update a GEO playbook in response, see Anthropic's $1.5 Billion Settlement: A Turning Point for AI and Copyright Law (How to Update Your Generative Engine Optimization Playbook).

What to measure, what to ignore, and how to prove impact

The metrics that matter: citation incidence, citation accuracy, and entity coverage

- Citation incidence rate: cited queries / total tracked queries (for your domain).

- Citation accuracy rate: correct canonical citations / total citations to your domain.

- Entity match rate: % of tracked queries where the AI Overview correctly associates your primary entity with the claim you want credited (use manual review + consistent tagging).
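The three ratios are straightforward to compute once each tracked query has a reviewed record. A minimal sketch with hypothetical record keys; entity match rate uses all tracked queries as the denominator, per its definition, while accuracy uses only citations to your domain.

```python
def citation_kpis(records):
    """Compute the three KPIs from one snapshot of the tracked query set.

    Each record is a dict with hypothetical keys:
      cited (bool): your domain is cited in the AI Overview for this query
      citations_to_domain (int): number of citations pointing at your domain
      correct_canonical (int): how many of those point at the intended canonical URL
      entity_match (bool): a reviewer judged your primary entity correctly credited
    """
    total = len(records)
    to_domain = sum(r["citations_to_domain"] for r in records)
    return {
        "citation_incidence_rate": sum(r["cited"] for r in records) / total if total else 0.0,
        "citation_accuracy_rate": (sum(r["correct_canonical"] for r in records) / to_domain
                                   if to_domain else 0.0),
        "entity_match_rate": sum(r["entity_match"] for r in records) / total if total else 0.0,
    }


snapshot = [  # hypothetical reviewed records
    {"cited": True, "citations_to_domain": 2, "correct_canonical": 1, "entity_match": True},
    {"cited": False, "citations_to_domain": 0, "correct_canonical": 0, "entity_match": False},
    {"cited": True, "citations_to_domain": 1, "correct_canonical": 1, "entity_match": True},
    {"cited": False, "citations_to_domain": 0, "correct_canonical": 0, "entity_match": False},
]
print(citation_kpis(snapshot))
```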

Attribution reality check: correlation vs causation in AI Overview visibility

You won’t get perfect causality because AI Overviews change, queries drift, and competitors ship improvements. But you can still produce credible evidence by running staged rollouts: implement one repair class at a time (canonical fixes → entity clarity → relationship markup), keep a change log, and compare pre/post snapshots on the same query set. This is especially important as model behavior evolves (reasoning, safety, grounding). For how model changes can shift grounding expectations, see GPT-5.4 Thinking vs GPT-5.4 Pro: What the Release Signals for Knowledge Graph Grounding in Google AI Overviews, and for safety/reasoning shifts in AI search, see Anthropic's Claude 4: Redefining AI Search with Enhanced Reasoning and Safety.
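To keep query drift from masquerading as lift, compare snapshots only on queries tracked in both. A minimal sketch; the snapshot format (query mapped to whether your domain was cited) is an assumption, and you would run it once per repair class recorded in your change log.

```python
def pre_post_delta(before, after):
    """Compare citation incidence on the queries present in both snapshots.

    `before` and `after` map query -> bool (your domain cited). Queries added or
    dropped between snapshots are excluded.
    Returns (shared_query_count, before_rate, after_rate, delta_in_percentage_points).
    """
    shared = before.keys() & after.keys()
    if not shared:
        return 0, 0.0, 0.0, 0.0
    before_rate = sum(before[q] for q in shared) / len(shared)
    after_rate = sum(after[q] for q in shared) / len(shared)
    return len(shared), before_rate, after_rate, round(100 * (after_rate - before_rate), 1)


# Hypothetical snapshots around a canonical-fix release.
before = {"what is geo": False, "geo audit": True, "fix ai overview citation": False, "geo pricing": False}
after = {"what is geo": True, "geo audit": True, "fix ai overview citation": False, "geo vs seo": True}
print(pre_post_delta(before, after))
```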

| Metric | Baseline (Day 0) | Post-fix (Day 30) | Notes / confidence |
|---|---|---|---|
| Citation incidence rate | 12% | 26% | Compare same tracked queries; note major SERP changes. |
| Citation accuracy rate (canonical) | 68% | 90% | Usually improves fastest after canonical + internal link fixes. |
| Entity match rate | 41% | 58% | Improves with consistent naming + sameAs/about/mentions parity. |

Call to action: run a 30-day citation repair sprint

- Week 1: build the query set + citation map; tag failures by mode.

- Week 2: ship canonical/internal linking fixes and retest.

- Week 3: ship entity clarity + primary entity markup updates (Organization/Person/Product/Article).

- Week 4: enrich relationships (sameAs/about/mentions) and provenance; publish an internal postmortem and roll what worked into templates.

If you’re aligning this sprint with broader platform shifts in AI search experiences, it’s worth tracking how Google positions AI as a “thought partner” and what that implies for grounding and citations: Google's Gemini 3: Transforming Search into a 'Thought Partner'—What It Means for Generative Engine Optimization.

Key takeaways

Treat AI Overview citations as a debuggable output: instrument a query set, capture citations, tag root causes, ship fixes, and retest.

Most GEO gains come from removing ambiguity (entities, canonicals, provenance), not from rewriting copy.

Prioritize retrieval and canonical consistency first; then improve entity clarity; then enrich Structured Data relationships with strict content-to-markup parity.

Prove impact with three KPIs: citation incidence, canonical accuracy, and entity match rate—tracked on the same queries with before/after evidence.


Founder of Geol.ai

Senior builder at the intersection of AI, search, and blockchain. I design and ship agentic systems that automate complex business workflows. On the search side, I’m at the forefront of GEO/AEO (AI SEO), where retrieval, structured data, and entity authority map directly to AI answers and revenue. I’ve authored a whitepaper on this space and road-test ideas currently in production. On the infrastructure side, I integrate LLM pipelines (RAG, vector search, tool calling), data connectors (CRM/ERP/Ads), and observability so teams can trust automation at scale. In crypto, I implement alternative payment rails (on-chain + off-ramp orchestration, stable-value flows, compliance gating) to reduce fees and settlement times versus traditional processors and legacy financial institutions. A true Bitcoin treasury advocate. 18+ years of web dev, SEO, and PPC give me the full stack—from growth strategy to code. I’m hands-on (Vibe coding on Replit/Codex/Cursor) and pragmatic: ship fast, measure impact, iterate. Focus areas: AI workflow automation • GEO/AEO strategy • AI content/retrieval architecture • Data pipelines • On-chain payments • Product-led growth for AI systems Let’s talk if you want: to automate a revenue workflow, make your site/brand “answer-ready” for AI, or stand up crypto payments without breaking compliance or UX.
