Structured Data in 2026: What Recent AI Search Changes Mean for Schema Markup Strategy

News analysis on how AI Overviews and answer engines are changing Structured Data priorities in 2026—what to update, measure, and expect next.


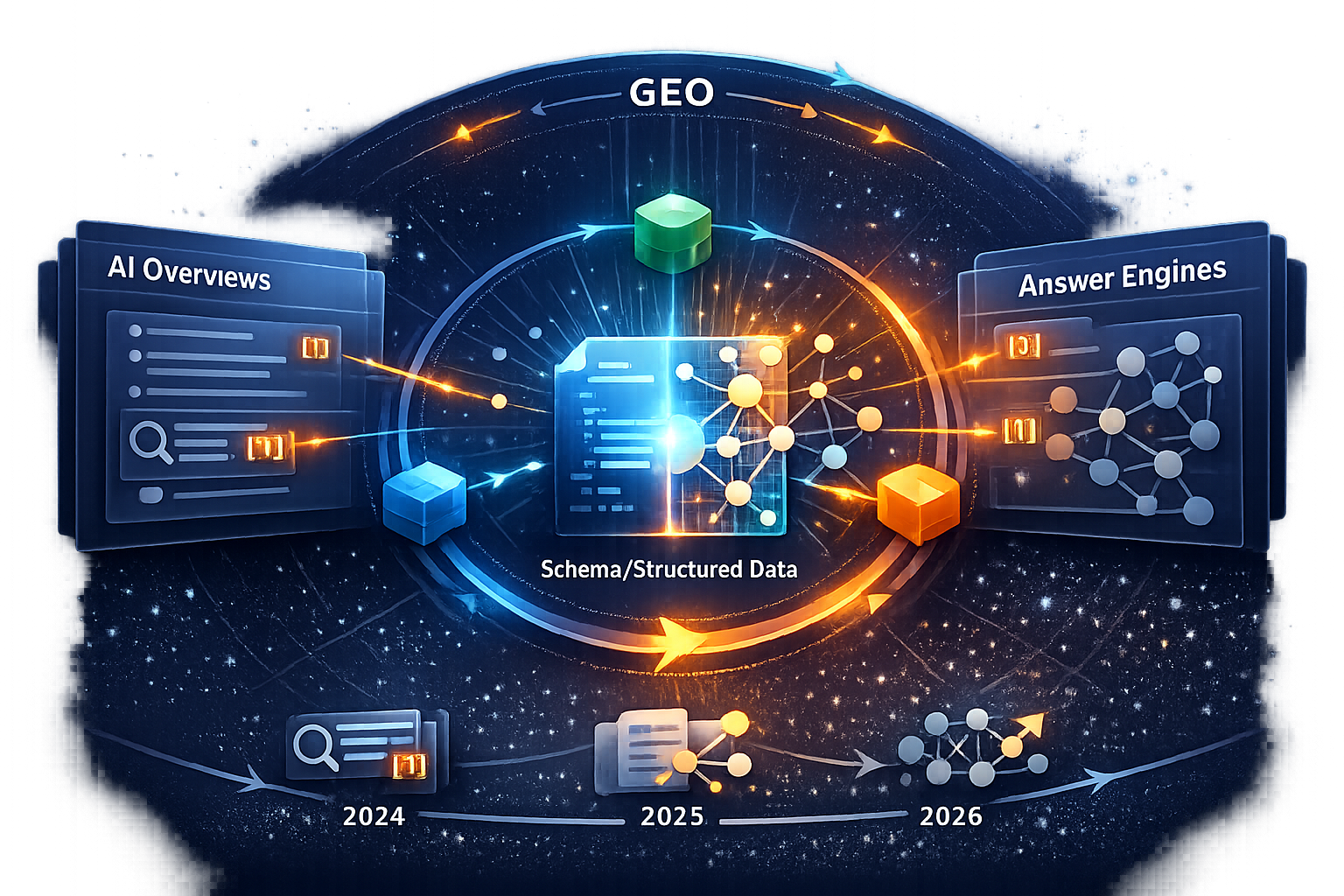

In 2026, Structured Data still matters, but the reason has changed. As Google’s AI Overviews and answer engines like ChatGPT and Perplexity reshape discovery, schema markup is evaluated less as a “rich result unlock” and more as a machine comprehension layer that helps systems resolve entities, verify provenance, and connect relationships. This article breaks down what changed (2024–2026), what to update now, and how to measure whether your markup is improving AI visibility and citations rather than merely passing validation.

AI answers compress the funnel: users may never reach a SERP list of links. Your schema strategy should prioritize being correctly understood and safely citable (entity clarity + trust signals) rather than only chasing SERP embellishments.

What changed: AI Overviews and answer engines are reshaping how Structured Data gets used

The news hook is simple: AI-generated answers expanded quickly, and “visibility” now includes inclusion in summaries, citations, and conversational recommendations. Google’s ongoing core updates in early 2026 reinforce a broader re-evaluation of content quality and usefulness—conditions where clear entity definitions and consistent provenance become more important for systems that summarize and attribute information.

Recent updates and industry signals worth tracking include Google’s confirmed March 2026 broad core update (The SMB Hub) and the February 2026 Discover Core Update coverage (Lumar), both of which emphasize rewarding genuine value and reducing low-quality tactics—an environment where misleading or inconsistent markup is more likely to be discounted.

Timeline of recent shifts (2024–2026) that altered SERP and citation behavior

Below is a mini-dataset of notable shifts and the associated impact on SERP real estate. Treat the “SERP impact” values as directional (high/medium/low) rather than exact percentages—because third-party studies vary by market, query class, and measurement method.

AI answer expansion signals (2024–2026) and estimated SERP real estate impact

Directional view of major AI-answer shifts and how strongly they can displace traditional organic click opportunities (higher = more SERP real estate captured by AI answer modules).

Why Structured Data is moving from “rich results” to “machine comprehension”

Historically, many schema projects were justified by eligibility: stars, breadcrumbs, FAQs, and other enhancements. In 2026, the more durable value is semantic disambiguation: helping AI systems confidently answer “who/what is this?”, “how is it related?”, and “can I trust it enough to cite?”

This is especially relevant as answer engines grapple with citation integrity. Research such as GhostCite highlights how LLMs can fabricate or mishandle citations and proposes strategies to improve citation reliability (arXiv). While schema markup can’t “force” correct citations, it can reduce ambiguity around entities and sources, making it easier for systems to attribute correctly.

Scope note: this article focuses on prioritizing schema types/properties for AI comprehension and citation. It does not re-teach JSON-LD basics or every Schema.org type.

The new priority: entity clarity and relationship mapping (not just eligibility for rich results)

If your schema markup describes pages but not entities, it’s increasingly underpowered. AI systems rely on entity resolution: matching mentions to a stable identity, then traversing relationships (creator → organization → product/service → topic) to build answers.

Definition (for featured snippet / AI extraction)

Structured Data is machine-readable annotation (often Schema.org in JSON-LD) that defines entities (people, organizations, products, concepts) and relationships between them so search engines and AI systems can interpret content consistently.

From keywords to entities: how Structured Data supports Knowledge Graph alignment

Entity-first markup reduces ambiguity by using consistent identifiers and explicit relationships. In practice, that means you should treat schema as a graph, not isolated blobs:

- Use a persistent @id for each real-world entity (your Organization, each author, each product line), reused across pages.

- Add sameAs links to authoritative profiles (e.g., Wikipedia/Wikidata, official social profiles) where appropriate and accurate.

- Connect pages to entities using mainEntityOfPage and consistent canonical URLs.
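As a rough illustration of the graph idea above, the following Python sketch assembles a small JSON-LD `@graph` in which an Article points at a Person and an Organization by `@id` instead of repeating them inline. The domain, the function name `build_article_graph`, the organization name, and the sameAs URL in the usage lines are all placeholders invented for this example, not values from any real site.

```python
import json

BASE = "https://example.com"  # assumption: placeholder domain


def build_article_graph(article_url, headline, author_name, author_slug, org_same_as=()):
    """Return a JSON-LD document whose nodes reference each other by @id."""
    org_id = f"{BASE}/#organization"
    person_id = f"{BASE}/#person-{author_slug}"
    return {
        "@context": "https://schema.org",
        "@graph": [
            {
                "@type": "Organization",
                "@id": org_id,
                "name": "Example Co",  # hypothetical organization name
                "url": f"{BASE}/",
                "sameAs": list(org_same_as),
            },
            {
                "@type": "Person",
                "@id": person_id,
                "name": author_name,
                "worksFor": {"@id": org_id},  # reference, not a second copy
            },
            {
                "@type": "Article",
                "@id": f"{article_url}#article",
                "headline": headline,
                "author": {"@id": person_id},
                "publisher": {"@id": org_id},
                "mainEntityOfPage": {"@type": "WebPage", "@id": article_url},
            },
        ],
    }


graph = build_article_graph(
    "https://example.com/guides/schema-2026",
    "Schema in 2026",
    "Jane Doe",
    "jane-doe",
    org_same_as=["https://www.linkedin.com/company/example"],  # placeholder profile
)
print(json.dumps(graph, indent=2))
```

Because every page template would call the same builder, the Organization and Person nodes keep the same `@id` wherever they appear, which is the property the list above is asking for.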

For teams thinking about how structured knowledge supports assistants and answer engines, see our related briefing Samsung's Bixby Reborn: A Perplexity-Powered AI Assistant, which is useful when comparing how assistants consume and operationalize structured information across devices and contexts.

Which relationships matter most: creator, organization, product/service, and topical hierarchy

A practical way to prioritize is to maximize “relationship density” around the entities that drive trust and conversion. Start with these relationship classes:

- Provenance: Organization ↔ Person (authors/editors) ↔ Article (publisher, author, reviewedBy, dateModified).

- Commercial clarity: Product/Service ↔ Offer/pricing ↔ aggregateRating/review (only if visible and policy-compliant).

- Topical hierarchy: WebPage ↔ about ↔ (Thing/Topic) and isPartOf ↔ CollectionPage/Blog to show content architecture.

Over-marking up weak or missing on-page claims can backfire. If the page doesn’t visibly support an attribute (e.g., ratings, awards, reviewers), don’t encode it. In a trust-tightening environment, misleading markup is more likely to be ignored—or become a quality signal against you.

What to update now: a 2026-ready Structured Data checklist for AI comprehension

Think of this as a migration from “page-level snippets” to a maintainable entity graph. The goal is that any crawler (search or AI) can reliably answer: who published this, who created it, what entity is it about, and how does it connect to your offerings and expertise.

High-impact schema patterns: @id strategy, sameAs, mainEntityOfPage, and author/organization graphs

Create a persistent @id namespace

Define stable URIs for entities (e.g., https://example.com/#organization, https://example.com/#person-jane-doe). Reuse them across templates so the same entity is never “re-invented” per page.
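One way to keep such identifiers stable is to derive them from a single helper rather than typing them by hand in each template. The sketch below is a minimal version of that idea; `SITE_ROOT`, `slugify`, and `entity_id` are hypothetical names chosen for this example, and the slug rules are an assumption you would adapt to your own naming policy.

```python
import re
import unicodedata

SITE_ROOT = "https://example.com"  # assumption: placeholder domain


def slugify(name: str) -> str:
    """Lowercase, strip accents, and collapse non-alphanumerics to hyphens."""
    ascii_name = (
        unicodedata.normalize("NFKD", name).encode("ascii", "ignore").decode("ascii")
    )
    return re.sub(r"[^a-z0-9]+", "-", ascii_name.lower()).strip("-")


def entity_id(kind: str, name: str = "") -> str:
    """Build a deterministic fragment identifier for an entity."""
    suffix = f"{kind}-{slugify(name)}" if name else kind
    return f"{SITE_ROOT}/#{suffix}"


print(entity_id("organization"))        # https://example.com/#organization
print(entity_id("person", "Jane Doe"))  # https://example.com/#person-jane-doe
```

The same input always yields the same `@id`, so two templates that mention the same author cannot drift apart through typos or casing differences.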

Connect Article ↔ Person ↔ Organization

On articles and guides, ensure author and publisher are explicit, and that the Person and Organization nodes have their own @id, URLs, and sameAs (when available).

Use mainEntityOfPage and about deliberately

Make the primary entity unambiguous: the page should declare what it is mainly about (a product, a concept, a person, a service) and tie that to the canonical URL via mainEntityOfPage.

Normalize types and remove conflicts

Avoid contradictory typing (e.g., the same node switching between Organization and LocalBusiness across pages unless it truly is both and modeled correctly). One canonical entity per real-world thing; one dominant interpretation per page.
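A conflict check of this kind can be automated once the JSON-LD nodes have been extracted from each page. The sketch below assumes a hypothetical input shape (a dict mapping page URL to a list of already-parsed nodes) and reports any `@id` that is declared with more than one distinct `@type` set across the site.

```python
from collections import defaultdict


def find_type_conflicts(pages):
    """Return {@id: [type tuples]} for ids declared with differing @type values.

    pages: dict of page URL -> list of JSON-LD node dicts (assumed input shape).
    """
    types_by_id = defaultdict(set)
    for nodes in pages.values():
        for node in nodes:
            node_id = node.get("@id")
            node_type = node.get("@type")
            if node_id is None or node_type is None:
                continue  # bare references like {"@id": ...} declare no type
            # @type may be a single string or a list of strings
            declared = [node_type] if isinstance(node_type, str) else node_type
            types_by_id[node_id].add(tuple(sorted(declared)))
    return {nid: sorted(t) for nid, t in types_by_id.items() if len(t) > 1}


pages = {
    "/about": [{"@id": "https://example.com/#organization", "@type": "Organization"}],
    "/contact": [{"@id": "https://example.com/#organization", "@type": "LocalBusiness"}],
}
print(find_type_conflicts(pages))
```

A node that is legitimately both types would declare the same list on every page and therefore would not be flagged; only inconsistent declarations are reported.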

Validate, then align with visible content

After syntax validation, do a content-to-markup alignment review. If a claim isn’t visible and supported, remove or revise the property.
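Part of that alignment review can be approximated in code. The sketch below is deliberately crude: it assumes you already have the page's visible text as a string, and it only checks whether the literal value of a few flat properties appears in that text. The function name, the default property list, and the sample product are illustrative assumptions, and a substring match is no substitute for human review.

```python
def unsupported_claims(node, visible_text,
                       checked_properties=("name", "headline", "ratingValue", "award")):
    """List properties whose values do not appear in the page's visible text."""
    text = " ".join(visible_text.lower().split())  # normalize case and whitespace
    missing = []
    for prop in checked_properties:
        value = node.get(prop)
        if value is None:
            continue  # property not declared, nothing to verify
        needle = " ".join(str(value).lower().split())
        if needle not in text:
            missing.append(prop)
    return missing


node = {"@type": "Product", "name": "Acme Widget", "award": "Best Widget 2025"}
visible = "Acme Widget is our flagship product.\nFree shipping."
print(unsupported_claims(node, visible))  # ['award']
```

Anything the function returns is a candidate for removal or for adding the supporting copy to the page.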

Content-type specifics: Article, Organization, Product/Service, FAQ (and when to avoid over-markup)

| Content type | Must-have entities/properties (2026) | Common mistakes to fix |
|---|---|---|
| Article / Guide | Person (author) with @id; Organization (publisher) with @id; datePublished/dateModified; mainEntityOfPage; about | Missing author identity; “generic” Person nodes per page; inconsistent publisher naming; markup claims not visible on-page |
| Organization | Stable @id; url; logo; sameAs; contactPoint (if applicable); knowsAbout (only if defensible) | Multiple competing Organization entities; weak/incorrect sameAs; inconsistent address/contact details across templates |
| Product / Service | Product/Service entity with @id; brand (Organization); offers (where accurate); review/aggregateRating only when policy-compliant and visible | Marking up reviews that aren’t shown; mismatched pricing; orphaned products without brand/offer relationships |
| FAQ | FAQPage only when the Q&A is fully visible and helpful; keep answers precise and consistent with page copy | Over-markup across many pages; duplicated FAQs; answers that contradict main content or change frequently without updates |
| Local / Publisher signals (cross-cutting) | Consistent NAP where relevant; clear publisher country/locale; editorial policy pages linked (as WebPage/AboutPage) | Inconsistent location metadata; missing About/Editorial pages; unclear ownership between brands/sub-brands |

If you’re optimizing for assistant ecosystems and answer-first UX, monitor how AI search accessibility expands via device integrations. Commentary around Perplexity’s expansion and “deep research” positioning is one example of why entity clarity and provenance become more valuable upstream of the answer layer (Medium).

Common schema audit issues to prioritize (example distribution)

Illustrative audit-style breakdown of frequent issues that reduce entity clarity and trust. Use as a template for your own reporting, not as universal benchmarks.

Measurement: how to tell if Structured Data is improving AI visibility (and not just passing validation)

Validation is table stakes. The measurement shift for 2026 is from “did we implement schema?” to “did AI systems understand and reuse it?” That requires outcome-oriented KPIs tied to citations, inclusion, and entity consistency.

KPIs that map to AI outcomes: citations, inclusion, and entity consistency

- Entity consistency score: the number of duplicate entities eliminated (e.g., how many distinct Organization nodes remain across the site after normalization).

- Schema coverage by template: % of key templates emitting the correct graph (Organization/Person/Article/Product) with stable @id reuse.

- Rich result impressions (where applicable): still useful as a leading indicator, but not the end goal.

- AI visibility proxies: tracked brand/entity mentions and citations in repeatable prompt sets (ChatGPT/Perplexity), plus any measurable AI referral patterns.
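The first two KPIs above lend themselves to a simple calculation over extracted markup. The sketch below assumes hypothetical input shapes (page URL mapped to parsed JSON-LD nodes, and template name mapped to a list of pages) and string-valued `@type`; the function names are illustrative, not part of any tool.

```python
def distinct_entities(pages, entity_type):
    """Count distinct identities of one type; nodes without @id each count separately."""
    identities = set()
    for url, nodes in pages.items():
        for index, node in enumerate(nodes):
            if node.get("@type") != entity_type:
                continue
            identities.add(node.get("@id") or f"anonymous:{url}:{index}")
    return len(identities)


def template_coverage(pages_by_template, required_types):
    """Percent of pages per template that emit every required type."""
    coverage = {}
    for template, pages in pages_by_template.items():
        ok = sum(
            1
            for nodes in pages
            if required_types
            <= {n["@type"] for n in nodes if isinstance(n.get("@type"), str)}
        )
        coverage[template] = round(100 * ok / len(pages), 1) if pages else 0.0
    return coverage


site = {
    "/a": [{"@type": "Organization", "@id": "https://example.com/#organization"}],
    "/b": [{"@type": "Organization", "@id": "https://example.com/#organization"}],
    "/c": [{"@type": "Organization", "name": "Example Co"}],  # no @id: counts as a duplicate
}
print(distinct_entities(site, "Organization"))  # 2
```

A site with a fully normalized graph should report one distinct Organization; anything higher is the duplicate count to drive down.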

Instrumentation: Search Console, log files, schema testing, and LLM mention tracking

A practical approach is to combine four streams:

- Google Search Console: enhancement reports, rich result performance, and query/page changes after template rollouts.

- Server logs: crawl frequency shifts by template (especially for entity hub pages like authors and organization/about).

- Schema testing + automated linting: errors/warnings trends and “graph integrity” checks (e.g., @id reuse, sameAs presence).

- LLM mention tracking: a controlled prompt set run weekly (same prompts, same geography if possible), capturing whether your brand/entities are mentioned and whether citations point to your canonical pages.
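For the LLM mention tracking stream, the scoring step can be kept separate from however the answers are collected. The sketch below assumes each weekly run has already been captured as a list of records with `prompt`, `answer`, and `citations` fields; that record shape, the function name, and the sample data are assumptions for illustration, and no particular answer-engine API is implied.

```python
from urllib.parse import urlparse


def score_run(responses, brand_terms, canonical_host):
    """Tally brand mentions and citations to the canonical host for one prompt run."""
    mentioned = 0
    cited = 0
    for record in responses:
        answer = record["answer"].lower()
        if any(term.lower() in answer for term in brand_terms):
            mentioned += 1
        # exact host match: "www.example.com" would not match "example.com"
        if any(urlparse(u).hostname == canonical_host for u in record.get("citations", [])):
            cited += 1
    return {"prompts": len(responses), "mentioned": mentioned, "cited": cited}


run = [
    {"prompt": "p1", "answer": "Example Co publishes a guide.",
     "citations": ["https://example.com/guide"]},
    {"prompt": "p2", "answer": "No relevant brand here.",
     "citations": ["https://other.test/x"]},
    {"prompt": "p3", "answer": "Try example co.", "citations": []},
]
print(score_run(run, ["Example Co"], "example.com"))
```

Storing one such tally per week, with the prompt set held constant, gives a comparable series rather than a one-off impression.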

| Metric | Baseline | 30 days | 60 days | 90 days |
|---|---|---|---|---|
| Structured data errors (count) | 120 | 70 | 45 | 30 |
| Rich result impressions (where eligible) | 8,500 | 9,200 | 10,100 | 10,600 |
| Tracked AI mentions/citations (count) | 14 | 18 | 23 | 28 |
| Crawl hits to entity hubs (authors/org) | Low | Medium | Medium | High |
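To make the illustrative figures in the table easier to compare, the change from baseline can be expressed as a percentage. The sketch below only restates the numeric rows of the table above (the qualitative crawl row is omitted); the helper name `pct_change` is an assumption for this example.

```python
def pct_change(baseline, current):
    """Percentage change from baseline, rounded to one decimal place."""
    return round(100 * (current - baseline) / baseline, 1)


# Baseline and 90-day values copied from the illustrative table
rows = {
    "Structured data errors": (120, 30),
    "Rich result impressions": (8500, 10600),
    "Tracked AI mentions/citations": (14, 28),
}

for metric, (baseline, day_90) in rows.items():
    print(f"{metric}: {pct_change(baseline, day_90):+.1f}%")
# Structured data errors: -75.0%
# Rich result impressions: +24.7%
# Tracked AI mentions/citations: +100.0%
```

Reporting relative change alongside raw counts makes it clearer that the three metrics move on very different scales.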

Example 90-day trend: errors down, AI mentions up (illustrative)

Illustrative time series showing how schema cleanup and entity graph normalization can correlate with improved visibility proxies over a 90-day rollout.

One more measurement caveat: LLM-driven ranking and summarization can introduce biases that aren’t visible in classic SEO tooling. Studies on fairness and bias in LLM ranking systems underscore why you should measure across multiple prompts, query intents, and sources rather than relying on a single “AI visibility” number (ACL Anthology / NAACL 2024).

What happens next: predictions for Structured Data as AI search matures

The direction of travel is toward stricter trust, clearer provenance, and more durable entity graphs. As AI systems become more comfortable answering directly, they also become more conservative about which sources they rely on—especially in categories where accuracy and accountability matter.

Likely near-term changes: stricter trust signals and provenance expectations

- More weight on publisher identity: consistent Organization markup, clear ownership, and stable author identities.

- Tighter tolerance for misleading markup: validation won’t be enough if markup diverges from visible reality.

- Greater emphasis on cross-source consistency: sameAs and identifiers that match widely recognized references will matter more for disambiguation.

Implications for Entity Optimization for AI: building durable entity graphs across the site

The most future-proof move is governance: embed entity graph rules into CMS templates so every new page strengthens the same graph. That means defined @id conventions, a controlled vocabulary for types, and routine audits for drift.
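Governance rules of this kind can be enforced with a small lint step in the publishing pipeline. The sketch below assumes one possible `@id` convention (a URL on a placeholder domain ending in a lowercase fragment) and a hypothetical controlled vocabulary; both `ID_PATTERN` and `ALLOWED_TYPES` are examples to replace with your own rules.

```python
import re

# Assumption: ids live on example.com and end in a lowercase, hyphenated fragment
ID_PATTERN = re.compile(r"^https://example\.com/[a-z0-9\-/]*#[a-z0-9\-]+$")

# Assumption: the types this site has agreed to emit
ALLOWED_TYPES = {"Organization", "Person", "Article", "Product", "Service",
                 "WebPage", "FAQPage"}


def lint_node(node):
    """Return a list of human-readable problems for one JSON-LD node."""
    problems = []
    node_id = node.get("@id")
    if node_id is None:
        problems.append("missing @id")
    elif not ID_PATTERN.match(node_id):
        problems.append(f"@id does not follow convention: {node_id}")
    node_type = node.get("@type")
    types = [node_type] if isinstance(node_type, str) else list(node_type or [])
    for type_name in types:
        if type_name not in ALLOWED_TYPES:
            problems.append(f"type not in controlled vocabulary: {type_name}")
    return problems


print(lint_node({"@id": "https://example.com/#organization", "@type": "Organization"}))
print(lint_node({"@id": "org-1", "@type": "Corporation"}))
```

Running the lint on every template render, and failing the build on a non-empty result, is one way to catch drift before it reaches production.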

Scenario model: effort vs expected impact for structured data maturity (illustrative)

Illustrative planning model comparing three maturity levels by estimated hours, templates covered, and expected error reduction. Customize with your own baseline.

Prioritize a maintainable entity graph strategy over one-off schema snippets. If you can only do one thing in 2026: standardize @id reuse and connect Organization → Person → content across your site.

Key takeaways

- Structured Data in 2026 is less about rich snippets and more about entity resolution, provenance, and safe citation in AI answers.

- Build a graph: stable @id + sameAs + mainEntityOfPage, and connect Organization ↔ Person ↔ Article/Product/Service consistently.

- Measure outcomes, not just validation: track entity consistency, template coverage, crawl behavior, and repeatable AI mention/citation tests.

- Expect stricter trust expectations: misleading or conflicting markup is more likely to be ignored or treated as a quality risk.


Founder of Geol.ai

Senior builder at the intersection of AI, search, and blockchain. I design and ship agentic systems that automate complex business workflows. On the search side, I’m at the forefront of GEO/AEO (AI SEO), where retrieval, structured data, and entity authority map directly to AI answers and revenue. I’ve authored a whitepaper on this space and road-test ideas currently in production. On the infrastructure side, I integrate LLM pipelines (RAG, vector search, tool calling), data connectors (CRM/ERP/Ads), and observability so teams can trust automation at scale. In crypto, I implement alternative payment rails (on-chain + off-ramp orchestration, stable-value flows, compliance gating) to reduce fees and settlement times versus traditional processors and legacy financial institutions. A true Bitcoin treasury advocate. 18+ years of web dev, SEO, and PPC give me the full stack, from growth strategy to code. I’m hands-on (Vibe coding on Replit/Codex/Cursor) and pragmatic: ship fast, measure impact, iterate.

Focus areas: AI workflow automation • GEO/AEO strategy • AI content/retrieval architecture • Data pipelines • On-chain payments • Product-led growth for AI systems

Let’s talk if you want: to automate a revenue workflow, make your site/brand “answer-ready” for AI, or stand up crypto payments without breaking compliance or UX.
