Perplexity AI on the Samsung Galaxy S26: Why On-Device Citations Will Redefine Generative Engine Optimization

Perplexity AI’s rumored Galaxy S26 integration could mainstream cited answers on mobile—raising the bar for Generative Engine Optimization and AI visibility.


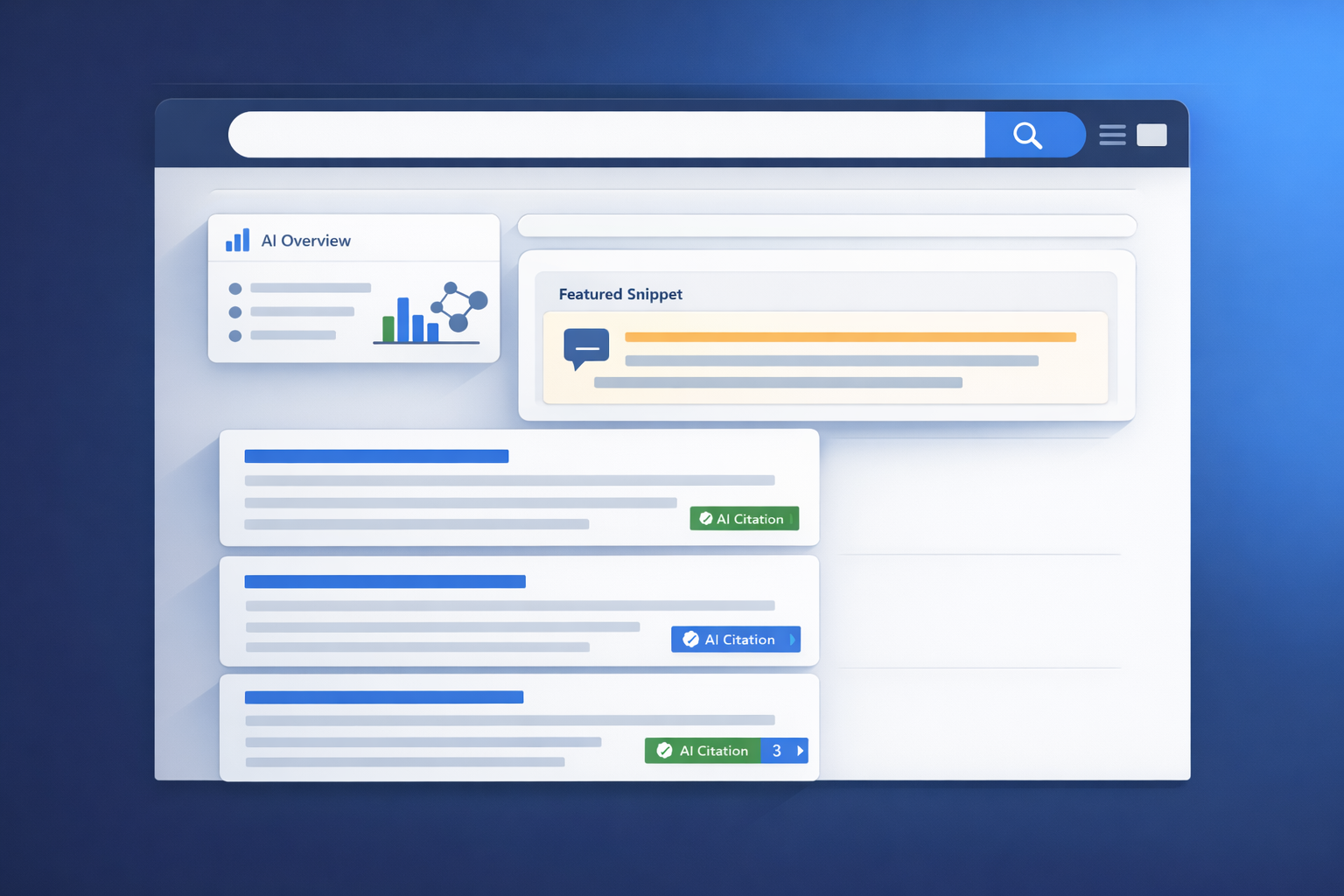

If Perplexity becomes a deeply integrated assistant on the Samsung Galaxy S26—invoked by voice, a wake-button long press, and embedded into everyday apps—then cited answers stop being a “power user” behavior and become the default mobile UX. That distribution shift matters more than incremental model quality: it forces brands to compete for AI citations (being selected as a source) rather than only rankings (being listed as a result). In practice, this is where Generative Engine Optimization (GEO) becomes non-optional: you’ll need content that is easy to retrieve, easy to verify, and easy to cite—at mobile scale.

This article unpacks what changes when Perplexity is embedded in the OS, why citations become the trust interface, and how to build a citation-first GEO playbook, while acknowledging the concentration and fairness risks that come with default answer engines.

The thesis: Galaxy S26 + Perplexity makes citations a default UX (and GEO becomes non-optional)

From “search app” to “system-level answer engine”

What has been reported and teased so far is not “Perplexity as another app,” but Perplexity as an assistant that can be summoned like a core OS feature (by wake word or the wake button) and connected to first-party surfaces such as Calendar and Gallery. That’s a distribution shock. Historically, defaults change behavior because they remove friction and make “micro-queries” socially and ergonomically normal: quick checks, comparisons, definitions, and fact validation.

Why citations are the new trust interface on mobile

On a phone, the primary constraint is attention. A fully rendered answer is the end state; the user doesn’t want ten tabs. Citations become the compact trust interface: a way to sanity-check a claim without leaving the assistant, and a way to “open the source” only when needed. If the assistant is default, then citations become the new top-of-funnel real estate.

GEO inflection points happen when answer engines gain default surfaces (wake button, lock screen, voice, camera, share sheet), not only when models improve. When distribution changes, the citation economy changes—fast.

Why defaults matter: adoption lift from “optional app” to “system-level placement” (illustrative)

Illustrative model showing how default placement can increase assistant usage frequency by reducing activation friction. Replace with your internal telemetry where available.

For teams building AI visibility strategies, this is the same pattern we’ve already seen in answer-engine competition dynamics—where citation selection and “confidence” can outweigh classic rank positions. For a deeper lens on how systems judge relevance beyond traditional ranking, see Re-Rankers as Relevance Judges: A New Paradigm in AI Search Evaluation.

What changes when Perplexity is embedded: the citation supply chain moves closer to the user

Citations as a product feature: how “citation confidence” becomes visible

In an embedded assistant, citations are not a footnote—they’re UI. When a user can tap a source card instantly, the assistant’s product success depends on the perceived reliability of its sources. That shifts optimization from “how do I rank?” to “how do I become the most cite-worthy source for this claim?”

Answer surfaces on mobile: lock screen, sidebar, voice, camera, and share sheet

The “supply chain” of a citation—user intent → retrieval → synthesis → source selection → tap-through—compresses when the assistant is always one gesture away. Expect more:

- Micro-queries (quick validation, specs, pricing checks, definitions).

- Multimodal queries (camera-based “what is this?” and “is this safe?”), which often demand citations for credibility.

- In-the-moment comparisons (best X for Y) where the assistant must justify tradeoffs with sources.

Behavioral shift model: more “answer-only” sessions, fewer blue-link clicks (illustrative)

Illustrative funnel showing how embedded assistants can increase sessions that end inside the assistant while still creating a smaller—but higher-intent—citation tap-through segment.

The implication is uncomfortable but actionable: being “the click” matters less; being “the cited proof” matters more. That’s why discrepancies between classic rankings and LLM citations are becoming a core diagnostic problem—covered in LLM Citations vs. Google Rankings: Unveiling the Discrepancies.

The GEO implications: how to earn Perplexity citations in a Galaxy S26 world

A citation-first playbook is not generic SEO with a new label. It’s about making your content retrievable, disambiguated, and verifiable—so an answer engine can confidently attach your URL to a specific claim.

Optimize for entity clarity and knowledge graph consistency

On-device usage increases volume, but it also increases ambiguity: users speak messier queries, refer to “that thing,” and ask follow-ups. Your content needs strong entity hygiene so retrieval and re-ranking don’t second-guess what your page is about.

- Use canonical names and synonyms early (H1 + first paragraph), and keep them consistent across pages.

- Create explicit “definition blocks” (1–2 sentences) that can be safely quoted.

- Reinforce relationships (Product ↔ Manufacturer ↔ Model ↔ Compatible accessories ↔ Standards) with clear internal linking and consistent terminology.

This is also where “knowledge graph-ready content” stops being a technical nice-to-have and becomes a growth lever, especially as performance and UX constraints still matter. See Google Core Web Vitals Ranking Factors 2025: What’s Changed and What It Means for Knowledge Graph-Ready Content.

Structured data that actually helps answer engines

Schema is not a magic “get cited” switch, but it reduces ambiguity and improves machine readability—especially for entities and attribution. Prioritize structured data that clarifies:

- Who is speaking: Organization, Person, author/editor profiles, and credentials.

- What the thing is: Product (with identifiers), SoftwareApplication, MedicalEntity (where applicable), and clear naming.

- When it’s valid: datePublished, dateModified, and versioning notes for fast-changing topics.
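As a rough illustration of the properties listed above, here is a minimal sketch that emits schema.org JSON-LD for an article. The function name `build_article_jsonld` and all sample values (headline, author, publisher, dates) are hypothetical placeholders, not taken from any real page; the schema.org type and property names are the only fixed parts.

```python
import json


def build_article_jsonld(headline, author_name, publisher_name,
                         date_published, date_modified):
    """Return a schema.org Article description as a JSON-LD string."""
    data = {
        "@context": "https://schema.org",
        "@type": "Article",
        "headline": headline,
        # Who is speaking
        "author": {"@type": "Person", "name": author_name},
        "publisher": {"@type": "Organization", "name": publisher_name},
        # When it's valid (ISO 8601 dates)
        "datePublished": date_published,
        "dateModified": date_modified,
    }
    return json.dumps(data, indent=2)


# Hypothetical sample values, for illustration only.
example = build_article_jsonld(
    headline="What is Generative Engine Optimization?",
    author_name="Jane Example",
    publisher_name="Example Co",
    date_published="2025-01-15",
    date_modified="2025-03-02",
)
print(example)
```

The resulting string would typically be placed in a script tag of type application/ld+json in the page head. Whether a given answer engine reads it is not something this sketch can guarantee.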

This is especially relevant if Samsung positions Perplexity as one of several assistants: structured data improves portability across answer engines and assistant ecosystems. For a related perspective on assistant strategy and integrations, see Samsung's Bixby Reborn: A Perplexity-Powered AI Assistant.

Citation-ready writing: claim density, sourcing, and update signals

Answer engines cite pages that make it easy to extract a defensible claim. A practical pattern is: short claim → immediate evidence → scope/limitations → link to primary sources.

| Page element | What to do | Why it helps citations |
|---|---|---|
| Definition block | Add a 1–2 sentence “What it is” near the top; keep it stable across updates. | Creates a quote-safe snippet with low ambiguity. |
| Evidence block | Use bullets for key facts; link to primary sources; include methodology when you publish data. | Improves verification and “citation confidence” for specific claims. |
| Update notes | Add “What changed” + date; avoid meaningless timestamp churn. | Signals freshness without undermining trust. |

Before publishing or updating: (1) Is the primary entity unambiguous in the first 100 words? (2) Are top claims supported by a primary source link? (3) Are dates and scope stated? (4) Would a citation to this page justify one specific sentence in an answer? If not, rewrite until the page earns a clean, quotable claim.
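The first three checks in that list are mechanical enough to automate. The sketch below is one possible way to do it, assuming a page is represented by the hypothetical `Page` and `Claim` structures defined here; the fourth check (whether a citation would justify one specific sentence) remains an editorial judgment and is not covered.

```python
from dataclasses import dataclass, field


@dataclass
class Claim:
    text: str
    source_url: str = ""  # primary source link; empty if missing


@dataclass
class Page:
    entity: str              # the primary entity the page is about
    body: str                # plain-text body copy
    date_modified: str = ""  # stated date; empty if missing
    claims: list = field(default_factory=list)


def prepublish_issues(page):
    """Return a list of human-readable problems found on the page."""
    issues = []
    # (1) Is the primary entity named in the first 100 words?
    first_100 = " ".join(page.body.split()[:100]).lower()
    if page.entity.lower() not in first_100:
        issues.append("primary entity missing from first 100 words")
    # (2) Does every top claim carry a primary source link?
    unsourced = [c.text for c in page.claims if not c.source_url]
    if unsourced:
        issues.append(f"{len(unsourced)} claim(s) lack a primary source link")
    # (3) Is a date stated?
    if not page.date_modified:
        issues.append("no date stated")
    return issues


# Hypothetical draft page, for illustration only.
draft = Page(
    entity="Widget X",
    body="Widget X is a hypothetical product used for this example.",
    claims=[Claim("Widget X weighs 200 g")],
)
issues = prepublish_issues(draft)
print(issues)
```

An empty list means the page passes the automated checks; anything else is a prompt to revise before publishing.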

Measurement framework for Perplexity citation performance (example KPI set)

Example metrics you can track weekly for a target query set to quantify GEO progress and diagnose citation volatility.
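The specific metrics are not spelled out here, so the following is only a sketch of what a weekly KPI calculation could look like. The three metric names (`citation_rate`, `first_citation_rate`, `share_of_voice`) and the input format are assumptions chosen for illustration, and the sample data is invented.

```python
def citation_kpis(results, domain):
    """Compute simple citation KPIs for one domain.

    results: list of dicts like
        {"query": str, "cited_domains": [str, ...]}  # in citation order
    """
    total = len(results)
    cited = [r for r in results if domain in r["cited_domains"]]
    # Every entry in `cited` has at least one domain, so index 0 is safe.
    first = [r for r in cited if r["cited_domains"][0] == domain]
    all_citations = sum(len(r["cited_domains"]) for r in results)
    own_citations = sum(r["cited_domains"].count(domain) for r in results)
    return {
        # Share of queries where the domain is cited at all.
        "citation_rate": len(cited) / total if total else 0.0,
        # Share of queries where the domain is the first citation.
        "first_citation_rate": len(first) / total if total else 0.0,
        # The domain's share of all citations across the query set.
        "share_of_voice": own_citations / all_citations if all_citations else 0.0,
    }


# Invented sample log for one week, for illustration only.
week = [
    {"query": "q1", "cited_domains": ["example.com", "other.org"]},
    {"query": "q2", "cited_domains": ["other.org"]},
    {"query": "q3", "cited_domains": ["news.net", "example.com", "other.org"]},
    {"query": "q4", "cited_domains": []},
]
kpis = citation_kpis(week, "example.com")
print(kpis)
```

Running the same function on each week's log for a fixed query set gives a time series that makes citation volatility visible.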

To operationalize this, you’ll also want faster anomaly detection loops—especially if citations fluctuate after updates. That’s where the newer monitoring workflows discussed in Google Search Console 2025 Enhancements: Hourly Data + 24-Hour Comparisons for Faster GEO/SEO Anomaly Detection become relevant—even if your north-star KPI shifts from clicks to citations.

Counterpoint: integration doesn’t guarantee fair citations (and could concentrate visibility)

The winner-take-most risk for publishers and niche brands

Default answer engines can unintentionally concentrate attention on a small set of domains that are easiest to parse, frequently updated, and already well-linked. If Perplexity becomes a default assistant, the “citation graph” could become more winner-take-most—especially for head queries.

Bias toward ‘clean’ sources: why some expertise gets filtered out

Citation concentration risk (how to measure it)

A simple way to monitor whether citations are concentrating: track the top-10 domains’ share of citations across a fixed query set over time (illustrative trend).
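The top-N share described above can be computed directly from a flat list of cited domains. This is a minimal sketch; the function name `top_n_share` and the sample data are hypothetical, and the example uses n=2 only to keep the numbers small.

```python
from collections import Counter


def top_n_share(cited_domains, n=10):
    """Fraction of all citations that go to the n most-cited domains."""
    counts = Counter(cited_domains)
    total = sum(counts.values())
    if total == 0:
        return 0.0
    top = sum(count for _, count in counts.most_common(n))
    return top / total


# Invented sample: 10 citations spread over 4 domains.
sample = ["a.com"] * 5 + ["b.com"] * 3 + ["c.com", "d.com"]
share = top_n_share(sample, n=2)
print(share)
```

Tracking this value for the same query set at regular intervals shows whether citations are concentrating: a rising share means fewer domains are capturing more of the citations.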

In citation-first ecosystems, visibility can be gated by machine legibility. Highly expert content can lose to cleaner, better-structured pages. Treat GEO as the adaptation layer: make your expertise extractable, attributable, and versioned.

If you’re concerned about fairness and bias in AI-driven ranking and citation selection, it’s worth adopting evaluation checks that include knowledge graph validation and bias testing. See LLMs and Fairness: How to Evaluate Bias in AI-Driven Search Rankings (with Knowledge Graph Checks).

What to do in the next 90 days: a GEO action plan for the Perplexity-on-S26 scenario

Treat Perplexity citations like a distribution channel. Build content the way you’d build for an API: structured, testable, and maintained with change logs. Here’s an opinionated 90-day plan.

Audit (Weeks 1–2): map where you’re already cited vs invisible

Pick 30–50 target queries (mix of branded + non-branded). Test them in Perplexity. Log: which domains get cited, which URLs repeat, and which claim types you lose (definitions, comparisons, pricing, “how to”). Create a gap list by entity and intent.
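One way to turn the audit log into a gap list is sketched below. It assumes each logged row records the query, the entity, the claim type, and the domains cited; these field names and the sample rows are hypothetical, and the log itself would come from manual testing rather than any particular API.

```python
from collections import defaultdict


def build_gap_list(audit_rows, own_domain):
    """Group queries where own_domain was not cited, by (entity, claim type)."""
    gaps = defaultdict(list)
    for row in audit_rows:
        if own_domain not in row["cited_domains"]:
            gaps[(row["entity"], row["claim_type"])].append(row["query"])
    return dict(gaps)


# Invented audit rows, for illustration only.
rows = [
    {"query": "what is widget x", "entity": "Widget X",
     "claim_type": "definition", "cited_domains": ["other.org"]},
    {"query": "widget x vs widget y", "entity": "Widget X",
     "claim_type": "comparison", "cited_domains": ["example.com", "other.org"]},
    {"query": "widget x price", "entity": "Widget X",
     "claim_type": "pricing", "cited_domains": []},
]
gaps = build_gap_list(rows, "example.com")
for (entity, claim_type), queries in gaps.items():
    print(entity, claim_type, queries)
```

Each key in the result is a candidate for a new or improved page; the comparison query is absent because the domain was already cited there.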

Build (Weeks 3–8): create a “citation moat” with proprietary data + methodology

Publish 3–5 definitive pages where you can be the primary source (benchmarks, pricing indices, checklists, original research). Include methodology, limitations, and downloadable data where feasible. Add structured data, author/editor attribution, and internal links that reinforce entity relationships.

Harden (Weeks 6–10): improve machine legibility and performance

Fix pages that are hard to cite: those that bury the answer, lack dates, leave entities unclear, use slow templates, or present tables in inaccessible ways. Ensure key facts render server-side and are readable without heavy interaction.
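A quick way to test the server-side rendering point is to check whether key facts appear in the visible text of the raw HTML, before any JavaScript runs. The sketch below uses only Python's standard `html.parser`; the class and function names and the sample markup are hypothetical, and fetching the HTML is left out so the example stays self-contained.

```python
from html.parser import HTMLParser


class VisibleText(HTMLParser):
    """Collect text nodes, skipping the contents of script and style tags."""

    def __init__(self):
        super().__init__()
        self._skip = 0
        self.parts = []

    def handle_starttag(self, tag, attrs):
        if tag in ("script", "style"):
            self._skip += 1

    def handle_endtag(self, tag):
        if tag in ("script", "style") and self._skip:
            self._skip -= 1

    def handle_data(self, data):
        if not self._skip:
            self.parts.append(data)


def missing_facts(raw_html, facts):
    """Return the facts that do not appear in the HTML's visible text."""
    parser = VisibleText()
    parser.feed(raw_html)
    parser.close()
    # Normalize whitespace and case before matching.
    text = " ".join(" ".join(parser.parts).split()).lower()
    return [fact for fact in facts if fact.lower() not in text]


# Invented markup: the price only exists inside a script payload.
html_doc = (
    "<html><body><h1>Widget X</h1><p>Weight: 200 g</p>"
    '<script>render({"price": "49 USD"})</script></body></html>'
)
missing = missing_facts(html_doc, ["Widget X", "200 g", "49 USD"])
print(missing)
```

Any fact that comes back as missing is only available after client-side rendering, which makes it a candidate for moving into the server-rendered markup.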

Advocate (Weeks 8–12): align product, SMEs, PR, and legal around “cite-worthy” claims

Create a “source of truth” hub: stable URLs, versioned statements, and attributable claims. Make it easy for journalists, partners, and answer engines to cite the same canonical page. If you publish comparisons, document criteria and avoid unverifiable superlatives.

Finally, instrument reporting. If you’re already using Search Console for SEO diagnostics, extend the practice to GEO monitoring and cross-channel signals—see Google Search Console Social Channel Performance Tracking: Unifying SEO + Social Signals for Faster GEO/SEO Diagnosis.

Key Takeaways

If Perplexity becomes a system-level assistant on Galaxy S26, citations become default mobile UX—making GEO a core growth function, not an experiment.

On-device surfaces drive more micro-queries and “answer-first” sessions; being cited is the new top-of-funnel position even when clicks decline.

Winning citations requires entity clarity, knowledge-graph consistency, structured data for disambiguation, and citation-ready writing (claim → evidence → scope → sources).

Default answer engines can concentrate visibility; the practical defense is to publish machine-legible primary data and measure citation share-of-voice over time.


One final strategic note: as answer engines proliferate (and assistants become multi-provider), portability matters. Standards for integrations and consistent tooling will shape how quickly teams can adapt—see Model Context Protocol: Standardizing Answer Engine Integrations Across Platforms (How-To).

Founder of Geol.ai

