Perplexity AI's Comet Browser: Redefining Search with Integrated AI Assistants

Deep dive into Perplexity’s Comet browser and what AI-native browsing means for Generative Engine Optimization, citations, and AI visibility.


Perplexity’s Comet browser matters because it turns “search” into an assistant-led browsing workflow: the AI sits inside the browser, synthesizes pages as you navigate, and routes attention toward a small set of sources it can confidently ground. For Generative Engine Optimization (GEO), that shifts the goal from “rank for a query” to “become the most citable, verifiable source node” for an entity and its claims—so you earn mentions, links, and downstream actions inside answer engines.

This spoke focuses on how Comet-style integrated assistants change discovery, evaluation, and citation behavior—and what to do (structure, entities, and structured data) to improve Citation Confidence and AI Visibility.

In assistant-led browsing, the interface becomes the gatekeeper: the assistant summarizes, compares, and quotes. If your content isn’t easy to extract and verify (clear claims, stable sections, provenance, and schema), you may lose visibility even if you still rank in classic search.

Executive Summary: Why Comet Matters for Generative Engine Optimization

What Comet is (and what it is not): AI-native browser vs. chatbot

Comet is best understood as an AI-native browsing layer: it embeds an assistant into the act of navigating the web, not just answering a single prompt. Unlike a standalone chatbot, the assistant can (a) read the page you’re on, (b) follow links, (c) reconcile multiple sources, and (d) keep context across tabs and tasks—making “browsing” feel like guided research and execution.

For product context, see the public overview of Comet and Perplexity’s broader platform direction: Comet (browser) and Perplexity AI.

The GEO takeaway: citations, AI visibility, and answer-engine behavior shift

Comet-style experiences reduce the user’s need to scan “10 blue links.” Instead, the assistant pre-selects sources, extracts the parts that support an answer, and often keeps the user inside a synthesis view. That raises the stakes on being (1) retrievable, (2) disambiguated as the right entity, and (3) quotable with minimal risk of misinterpretation.

This aligns with what we see across answer engines: content structure and machine-readable cues influence whether models cite you at all. For supporting evidence, read The Impact of Content Structure on LLM Citations: Insights from Recent Studies.

Market context: adoption signals for AI answer engines (directional)

A directional snapshot (not a single-source census) showing how quickly AI answer experiences are entering the search journey via tools like ChatGPT, Perplexity, and AI Overviews. Use as a planning baseline; validate with your own analytics.

If you’re building a GEO program, Comet is a preview of where interfaces are going: assistant-first, citation-mediated, and entity-grounded. For broader framing, explore The Rise of Generative Engine Optimization (GEO): Navigating AI-Driven Search Landscapes (Case Study: Knowledge Graph–Led Entity Optimization).

How Comet’s Integrated Assistant Changes the Search Journey (Mechanics That Impact Citations)

From query to action: in-browser synthesis, follow-ups, and source grounding

In classic search, users bounce between a SERP and multiple tabs. In Comet-style browsing, the assistant becomes the primary interface: it answers, proposes follow-ups, and can “carry” the task forward (compare options, extract requirements, draft an email, summarize a PDF). The result is fewer pageviews distributed across fewer domains—meaning the assistant’s source selection logic becomes your distribution channel.

Where citations happen: UI placement, link pathways, and ‘trust’ signals

Citations in assistant-led browsing tend to appear as inline footnotes, expandable source drawers, or “used sources” lists. Practically, that means your content must work when decontextualized: a quoted sentence should still be accurate without the surrounding narrative, and the page should clearly communicate authorship, date, and what exactly is being claimed.

In AI answer interfaces, the citation is the new click. If the citation looks risky (unclear author, unclear date, unclear claim), the model has incentives to choose a safer source.

Implications for Knowledge Graph understanding and entity resolution

Integrated assistants behave like entity resolvers: they try to map pages to “things” (companies, products, people, standards) and relationships (compares-to, depends-on, caused-by, located-in). If your brand, product names, and definitions vary across pages—or you mix synonyms without clarifying equivalence—you increase ambiguity and reduce the chance the assistant treats your page as an authoritative node.

This is where Knowledge Graph readiness becomes operational. For a practical example of orchestrating entity updates, see Case Study: Using Marketing Automation Platform Features to Orchestrate Knowledge Graph Updates for AI Visibility Monitoring.

What Comet Prioritizes When Choosing Sources: A ‘Citation Confidence’ Model

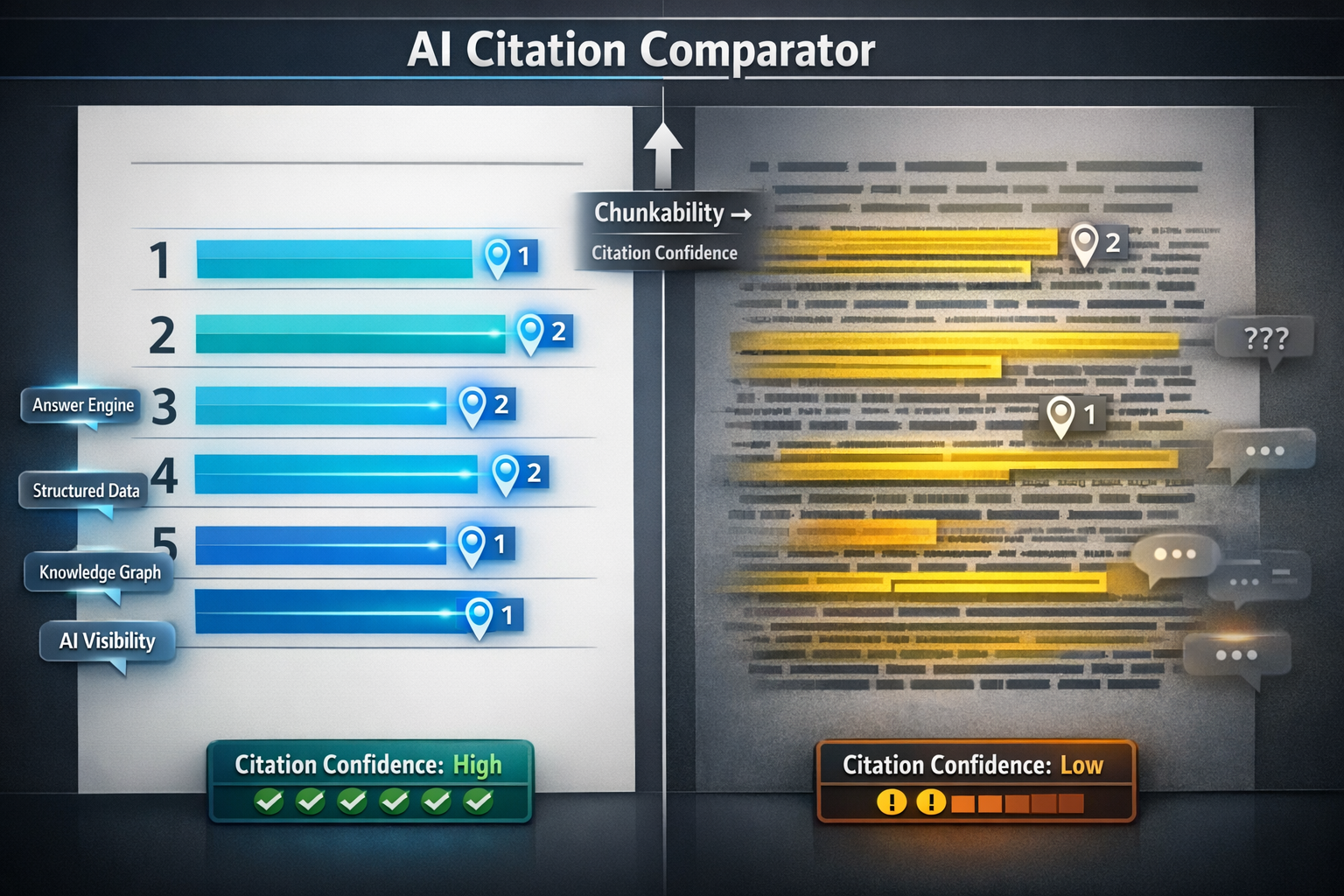

While Perplexity/Comet’s exact ranking and citation logic isn’t fully public, you can reverse-engineer a practical model that matches observed answer-engine behavior: the assistant prefers sources that minimize the risk of misquoting, outdated claims, or entity confusion. Think in terms of Citation Confidence—your probability of being selected and safely quoted.

Signals likely to increase Citation Confidence (clarity, corroboration, provenance)

- Claim specificity: precise definitions, scoped statements (who/what/when), and quantified claims where possible.

- Provenance: author identity, editorial policy, citations to primary sources, and visible “last updated” dates.

- Entity disambiguation: consistent naming, clear “what it is / what it isn’t,” and unambiguous references to standards, products, and organizations.

- Corroboration: alignment with other reputable sources (when the assistant can triangulate a claim across sources, citing you carries less risk).

- Extractability: labeled tables, concise summaries, and stable section headings that can be referenced.

Signals that reduce citation likelihood (thin content, ambiguous claims, missing authorship)

- Thin or purely promotional copy with no verifiable claims, methodology, or references.

- Ambiguous statements (“best,” “leading,” “most trusted”) without evidence or definitions.

- Missing author/editor information, no update date, or unclear organization ownership.

- Unstable URLs, aggressive gating, or content that requires heavy client-side rendering to access core facts.
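One way to audit for the "ambiguous statements" signal in the list above is to flag sentences that contain unsupported superlatives. The sketch below is a minimal illustration: the term list comes from the examples in the bullet, while `VAGUE_TERMS`, `find_vague_claims`, and the naive sentence splitter are hypothetical choices, not part of any Perplexity or Comet tooling.

```python
import re

# Terms taken from the bullet above; extend for your own niche (assumption).
VAGUE_TERMS = ("best", "leading", "most trusted")


def find_vague_claims(text, terms=VAGUE_TERMS):
    """Return (sentence, matched_terms) pairs for sentences containing vague terms."""
    # Naive split on sentence-ending punctuation followed by whitespace.
    sentences = re.split(r"(?<=[.!?])\s+", text.strip())
    flagged = []
    for sentence in sentences:
        hits = [
            term for term in terms
            if re.search(r"\b" + re.escape(term) + r"\b", sentence, re.IGNORECASE)
        ]
        if hits:
            flagged.append((sentence, hits))
    return flagged


sample = "We are the leading provider of widgets. The company was founded in 2010."
for sentence, hits in find_vague_claims(sample):
    print(hits, "->", sentence)
```

A flagged sentence is only a prompt for review: the checker cannot tell whether evidence or a definition follows the superlative, so a human still decides whether to rewrite it.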

Structured Data as a dependency: Schema.org, authors, dates, and entities

Structured data doesn’t “force” citations, but it reduces ambiguity and improves machine readability—especially around authorship, dates, and entity identity. This is increasingly important as models add structured data capabilities and grounding behaviors. For monitoring implications, see OpenAI GPT-5.4 Launch (2026): What the New Structured Data Capabilities Mean for AI Visibility Monitoring.

At a minimum, mark up your key pages with Schema.org types such as Organization, Person, Article/BlogPosting, and, where appropriate, FAQPage/HowTo, so they can be parsed unambiguously. Also use canonical URLs and consistent entity naming across templates.
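As a rough illustration of the markup described above, the following sketch builds a Schema.org `Article` object with author, publisher, and date properties and serializes it as JSON-LD. The helper name `build_article_jsonld` and all example values (URL, names, dates) are placeholders assumed for the example.

```python
import json


def build_article_jsonld(headline, url, author_name, org_name, published, modified):
    """Return a Schema.org Article description as a JSON-LD string."""
    data = {
        "@context": "https://schema.org",
        "@type": "Article",
        "headline": headline,
        "mainEntityOfPage": url,
        "author": {"@type": "Person", "name": author_name},
        "publisher": {"@type": "Organization", "name": org_name},
        "datePublished": published,  # ISO 8601 date
        "dateModified": modified,    # keep in sync with the visible "last updated" line
    }
    return json.dumps(data, indent=2)


# Placeholder values for illustration only.
example = build_article_jsonld(
    headline="What is Generative Engine Optimization (GEO)?",
    url="https://example.com/geo",
    author_name="Jane Example",
    org_name="Example Co",
    published="2025-01-15",
    modified="2025-06-01",
)
print(example)
```

The resulting string would typically be embedded in the page inside a `<script type="application/ld+json">` element.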

Citation Confidence model: which page features tend to raise “safe-to-cite” likelihood

A practical weighting model you can use in audits. Customize weights by niche (YMYL topics should weight provenance and corroboration higher).
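A weighting model of this kind can be sketched as a weighted average over the five signals listed earlier. The weights below are illustrative assumptions, not values published by Perplexity or measured in any study; the function name `citation_confidence` and the 0 to 1 rating scale are likewise hypothetical.

```python
# Hypothetical default weights for the five signals; adjust per niche.
WEIGHTS = {
    "claim_specificity": 0.25,
    "provenance": 0.25,
    "entity_disambiguation": 0.20,
    "corroboration": 0.15,
    "extractability": 0.15,
}


def citation_confidence(ratings, weights=WEIGHTS):
    """Combine per-signal ratings (each 0.0 to 1.0) into one weighted score."""
    missing = sorted(set(weights) - set(ratings))
    if missing:
        raise ValueError(f"missing ratings for: {missing}")
    for name in weights:
        if not 0.0 <= ratings[name] <= 1.0:
            raise ValueError(f"rating for {name} must be between 0 and 1")
    total_weight = sum(weights.values())
    # Normalize so custom weights do not need to sum to exactly 1.
    return sum(weights[name] * ratings[name] for name in weights) / total_weight


page_ratings = {
    "claim_specificity": 0.8,
    "provenance": 0.5,
    "entity_disambiguation": 1.0,
    "corroboration": 0.5,
    "extractability": 0.6,
}
print(round(citation_confidence(page_ratings), 2))
```

For a YMYL topic, you could pass a custom `weights` dictionary that raises `provenance` and `corroboration`; because the score is normalized by the total weight, the weights do not have to be rebalanced by hand.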

Pick one target query and ask: can an assistant quote a 1–2 sentence answer from this page without rewriting? If not, add a labeled definition block, cite a primary source, and clarify the entity (“X is…”, “X is not…”). That single change can improve both citation accuracy and selection probability.

Deep-Dive: Optimization Playbook for Comet-Style Browsing (Focused GEO Tactics)

Designing ‘quotable’ sections for assistant extraction (definitions, steps, constraints)

Assistant-led browsing rewards “quotable” content: compact, explicit, and correctly scoped. Build sections that can be lifted verbatim with minimal risk:

- 40–60 word definition blocks under an H2/H3 (“What is X?”) with one supporting citation.

- Constraint blocks (“Works best when…”, “Doesn’t apply if…”) to prevent misapplication.

- Labeled comparisons (tables or bullet lists) with consistent criteria names.

- Step-by-step procedures with explicit inputs/outputs (ideal for HowTo-style extraction).
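The 40–60 word guideline for definition blocks is easy to check mechanically. The sketch below assumes you have already extracted the definition text from the page; the function names are hypothetical, and whitespace-based word counting is a simplification.

```python
def definition_word_count(text):
    """Count whitespace-separated words in a definition block."""
    return len(text.split())


def is_quotable_definition(text, min_words=40, max_words=60):
    """True when the block length falls inside the suggested 40 to 60 word range."""
    return min_words <= definition_word_count(text) <= max_words


draft = (
    "Generative Engine Optimization (GEO) is the practice of structuring content "
    "so that AI answer engines can retrieve, verify, and cite it."
)
print(definition_word_count(draft), is_quotable_definition(draft))
```

Length is only one part of being quotable: the block still needs a supporting citation and a clearly scoped claim, which this check does not evaluate.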

If you want a data-backed view of which formats tend to be mentioned, see Content Types That Earn Mentions in LLMs: A Data-Driven Approach.

Entity-first information architecture for AI visibility

Treat each important page as an entity dossier, not a keyword container. That means: consistent canonical terminology (e.g., always use “Generative Engine Optimization (GEO)” on first mention), explicit relationships (“Structured Data influences AI Visibility”), and a clean H2/H3 hierarchy so assistants can retrieve the right passage quickly.
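The first-mention rule described above can be approximated with a small check: the first time the short form appears, it should be inside the canonical long form. This is a minimal sketch; `first_mention_is_canonical` is a hypothetical helper, and it assumes case-sensitive matching on plain page text with the short form contained in the canonical string.

```python
import re

CANONICAL = "Generative Engine Optimization (GEO)"
SHORT = "GEO"


def first_mention_is_canonical(text, canonical=CANONICAL, short=SHORT):
    """True if the first use of the short form sits inside the canonical long form."""
    first_short = re.search(r"\b" + re.escape(short) + r"\b", text)
    if first_short is None:
        return True  # the short form is never used, so there is nothing to check
    canonical_start = text.find(canonical)
    if canonical_start == -1:
        return False  # short form used, long form never introduced
    # Position where the short form appears inside the first canonical mention.
    expected = canonical_start + canonical.find(short)
    return first_short.start() == expected


good = "Generative Engine Optimization (GEO) is changing search. GEO rewards clarity."
bad = "GEO is changing search. Generative Engine Optimization (GEO) rewards clarity."
print(first_mention_is_canonical(good), first_mention_is_canonical(bad))
```

Running the same check across every template in a site can surface pages where an acronym is used before it is defined, which is one source of the entity ambiguity discussed earlier.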

If your citations are inconsistent or missing, use a diagnostics workflow like Generative Engine Optimization (GEO) — citation diagnostics & repair.

Verification-ready content: primary sources, methodology notes, and transparent updates

Comet-like assistants are optimized to reduce user effort, but they also increase the risk of misattribution or stale summaries. You can mitigate this by making verification easy: cite primary sources, add a short methodology note for original claims, and maintain a visible changelog or “last updated” line. This also supports trust and transparency, an increasingly central debate in AI search ecosystems.

For the governance angle, read Industry Debates: The Ethics and Future of AI in Search—Why Knowledge Graph Transparency Must Be Non‑Negotiable.

If your page only implies key claims (instead of stating them precisely), assistants may paraphrase incorrectly. Add explicit definitions, constraints, and citations near the top of the page—then keep them updated—to reduce hallucinated or outdated summaries.

Measurement & Experimentation: Proving Comet’s Impact on AI Visibility

Instrumentation: what to track (AI referrals, on-page engagement, citation mentions)

You can measure Comet/assistant-led impact without perfect tooling by combining analytics segmentation with a repeatable citation sampling routine. Track: (1) sessions from AI referrers (Perplexity, ChatGPT, Copilot, etc.), (2) engagement on pages that are frequently cited (scroll depth, time on page, outbound clicks), and (3) citation mentions for a fixed query set (manual or automated collection).
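Segmenting sessions by AI referrer can start with a simple hostname lookup. The sketch below is illustrative: the host-to-label mapping is an assumption that you should validate against the referrers in your own analytics, and `classify_referrer` and `count_ai_sessions` are hypothetical helper names.

```python
from collections import Counter
from urllib.parse import urlparse

# Assumed referrer hosts; confirm against your own analytics before relying on them.
AI_REFERRER_HOSTS = {
    "perplexity.ai": "Perplexity",
    "chatgpt.com": "ChatGPT",
    "copilot.microsoft.com": "Copilot",
}


def classify_referrer(referrer_url):
    """Return an AI source label for a referrer URL, or None if it is not recognized."""
    host = (urlparse(referrer_url).hostname or "").lower()
    for domain, label in AI_REFERRER_HOSTS.items():
        # Match the domain itself and any subdomain such as www.
        if host == domain or host.endswith("." + domain):
            return label
    return None


def count_ai_sessions(referrers):
    """Count sessions per AI source, ignoring unrecognized referrers."""
    labels = (classify_referrer(url) for url in referrers)
    return Counter(label for label in labels if label)


sessions = [
    "https://www.perplexity.ai/search?q=geo",
    "https://chatgpt.com/",
    "https://www.google.com/",
    "",
]
print(count_ai_sessions(sessions))
```

Matching on the parsed hostname rather than on a substring of the URL avoids counting unrelated domains that merely contain a similar string.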

Experiment design: query sets, control pages, and evaluation rubric

Define a fixed query set

Pick 20–50 informational queries mapped to your core entities (brand, product category, problem). Keep them stable for 4–6 weeks.

Create control vs. treatment pages

Select 5–10 pages to improve (treatment) and keep 5–10 similar pages unchanged (control).

Apply “quotable + provenance + schema” changes

Add definition blocks, labeled tables, author/date, primary citations, and relevant Schema.org markup. Keep URLs stable.

Score outcomes weekly

For each query, record whether you’re cited, where the citation appears, and whether the quoted claim is accurate. Tie this to referral sessions and on-site engagement.
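The weekly scoring step can be captured with a small record type and an aggregation function. This is a sketch under assumptions: the `Observation` fields and the `weekly_summary` helper are hypothetical, and the accuracy value uses a 0 to 2 manual review scale (0 wrong, 1 partially right, 2 accurate).

```python
from dataclasses import dataclass
from typing import Optional


@dataclass
class Observation:
    query: str
    cited: bool
    accuracy: Optional[int] = None  # 0, 1, or 2 from manual review; None if not cited


def weekly_summary(observations):
    """Return citation rate and mean accuracy for one week of sampled queries."""
    total = len(observations)
    if total == 0:
        return {"citation_rate": 0.0, "mean_accuracy": None}
    cited = [obs for obs in observations if obs.cited]
    scores = [obs.accuracy for obs in cited if obs.accuracy is not None]
    return {
        "citation_rate": len(cited) / total,
        "mean_accuracy": sum(scores) / len(scores) if scores else None,
    }


week = [
    Observation("what is geo", cited=True, accuracy=2),
    Observation("geo vs seo", cited=True, accuracy=1),
    Observation("geo tools", cited=False),
    Observation("geo schema markup", cited=False),
]
print(weekly_summary(week))
```

Keeping one record per query per week makes it straightforward to join the results to referral sessions and on-site engagement later.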

Risks and constraints: hallucinations, misattribution, and brand safety

Expect imperfections: assistants may cite the wrong page, attribute your claim to another domain, or summarize an outdated section. Mitigate with canonical URLs, clear on-page provenance, and frequent updates to high-risk pages. Also consider fairness and bias dynamics in AI rankings—especially if you compete with aggregators.

For a comparative view of bias and ranking implications, see LLMs and Fairness: Addressing Bias in AI-Driven Rankings (Comparison Review for AI Visibility).
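For the canonical URL mitigation mentioned above, a quick audit can confirm that each page declares exactly one canonical link. The sketch below uses only the Python standard library; `CanonicalFinder` and `find_canonicals` are hypothetical names, and the HTML is assumed to be already fetched.

```python
from html.parser import HTMLParser


class CanonicalFinder(HTMLParser):
    """Collect href values from <link rel="canonical"> tags."""

    def __init__(self):
        super().__init__()
        self.canonicals = []

    def handle_starttag(self, tag, attrs):
        if tag != "link":
            return
        attributes = dict(attrs)
        rel_values = (attributes.get("rel") or "").lower().split()
        if "canonical" in rel_values and attributes.get("href"):
            self.canonicals.append(attributes["href"])


def find_canonicals(html):
    """Return all canonical URLs declared in an HTML document."""
    finder = CanonicalFinder()
    finder.feed(html)
    return finder.canonicals


page = '<html><head><link rel="canonical" href="https://example.com/geo"></head></html>'
print(find_canonicals(page))
```

A page that returns zero or more than one canonical URL is worth reviewing, since either case leaves it unclear which URL should receive the citation.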

| Metric | How to measure | Target (example) |
|---|---|---|
| AI referral sessions | Segment by referrer (Perplexity/ChatGPT/etc.), landing page, and query cluster | +15–30% over 6–8 weeks on treatment pages |
| Citation rate | % of query set where your domain is cited in the answer | +10 points vs. control pages |
| Citation accuracy score | Manual review: 0–2 scale (0 wrong, 1 partially right, 2 accurate) | ≥1.6 average on treatment pages |
| Assisted conversion rate | Conversions where first touch is AI referral; compare to organic and direct | Parity or better vs. organic on high-intent pages |
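The example targets for citation rate and citation accuracy in the table can be checked with a few lines of arithmetic. The sketch below is illustrative: the function names are hypothetical, the thresholds default to the table's example targets (+10 points, 1.6 average), and the counts in the usage example are made up.

```python
def citation_rate_lift_points(treatment_cited, treatment_total, control_cited, control_total):
    """Difference in citation rate between treatment and control, in percentage points."""
    treatment_rate = 100 * treatment_cited / treatment_total
    control_rate = 100 * control_cited / control_total
    return treatment_rate - control_rate


def meets_targets(lift_points, mean_accuracy, min_lift=10.0, min_accuracy=1.6):
    """Compare measured results with the example targets from the table."""
    return lift_points >= min_lift and mean_accuracy >= min_accuracy


# Made-up counts: 18 of 50 treatment queries cited, 12 of 50 control queries cited.
lift = citation_rate_lift_points(18, 50, 12, 50)
print(lift, meets_targets(lift, mean_accuracy=1.7))
```

Reporting the lift in percentage points rather than as a relative percentage keeps the comparison stable when the control citation rate is very low.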

If you’re seeing AI-driven traffic underperform due to missing machine-readable steps or product entities, the pattern mirrors other assistant commerce failures—often a structured data and clarity issue more than “UX.” For an illustrative case, see Walmart: ChatGPT Checkout Converted 3x Worse Than the Website—A Structured Data Problem, Not a UX Problem.

Key Takeaways

Comet shifts discovery from SERP scanning to assistant-led browsing, making source selection and citation placement the new “top of funnel.”

To earn citations, optimize for Citation Confidence: specific claims, clear provenance, entity disambiguation, freshness, and extractable formatting.

Structured data and entity-first architecture reduce ambiguity—helping assistants resolve “who/what” your page represents and quote it safely.

Prove impact with a repeatable query set and a simple rubric: citation rate, citation accuracy, AI referrals, and assisted conversions.

FAQ: Comet Browser + Generative Engine Optimization (People Also Ask Targets)


Further reading on adjacent assistant ecosystems and research workflows: Anthropic’s Claude models, plus an overview of reasoning and research model concepts (Reasoning model). For market dynamics context, see Sacra’s analysis of Perplexity’s business trajectory: How Perplexity hits $656M ARR (Sacra PDF).

Founder of Geol.ai

Senior builder at the intersection of AI, search, and blockchain. I design and ship agentic systems that automate complex business workflows. On the search side, I’m at the forefront of GEO/AEO (AI SEO), where retrieval, structured data, and entity authority map directly to AI answers and revenue. I’ve authored a whitepaper on this space and road-test ideas currently in production.

On the infrastructure side, I integrate LLM pipelines (RAG, vector search, tool calling), data connectors (CRM/ERP/Ads), and observability so teams can trust automation at scale. In crypto, I implement alternative payment rails (on-chain + off-ramp orchestration, stable-value flows, compliance gating) to reduce fees and settlement times versus traditional processors and legacy financial institutions. A true Bitcoin treasury advocate.

18+ years of web dev, SEO, and PPC give me the full stack, from growth strategy to code. I’m hands-on (vibe coding on Replit/Codex/Cursor) and pragmatic: ship fast, measure impact, iterate.

Focus areas: AI workflow automation • GEO/AEO strategy • AI content/retrieval architecture • Data pipelines • On-chain payments • Product-led growth for AI systems

Let’s talk if you want to automate a revenue workflow, make your site/brand “answer-ready” for AI, or stand up crypto payments without breaking compliance or UX.
